Compare commits: daemon/fix...fix/kvrock

348 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 573d60c071 | |
| | 0b5f927034 | |
| | 605b862937 | |
| | 0110413528 | |
| | 0726d70b58 | |
| | 8abf6d8b65 | |
| | b0f495c37a | |
| | 4e9b8d840d | |
| | 57579813de | |
| | 97dd238c44 | |
| | 3095530d0d | |
| | 3e8120baf6 | |
| | 0685c4326b | |
| | af9e1993d1 | |
| | ba8868d771 | |
| | 7ee1d7cae1 | |
| | cb17633f57 | |
| | 18e94af22b | |
| | b81665afe1 | |
| | acb0fae406 | |
| | e5fef95f4e | |
| | 55fe22ed4c | |
| | fee742d756 | |
| | 36b4e792f6 | |
| | 8810a7657e | |
| | 59d87c860b | |
| | 8cda14a78c | |
| | a4c0161cb1 | |
| | 505a438fa3 | |
| | 1a794c9fc4 | |
| | 03e8dd0ac7 | |
| | eea2dfb67a | |
| | 316ffe4f35 | |
| | 08a380df61 | |
| | 58e869604a | |
| | a61dff75b9 | |
| | 0b9c1a09b9 | |
| | 3178e06349 | |
| | 69c341060b | |
| | d56daad3f0 | |
| | 2b239284b3 | |
| | e2e8b84eef | |
| | 7afb59cd3a | |
| | 6474487e75 | |
| | 3fd15d418b | |
| | 243ad15e66 | |
| | 56367c964e | |
| | 8911b33d3e | |
| | f7c7939493 | |
| | 8eee97f779 | |
| | d3c1a37378 | |
| | 4a8303d050 | |
| | 61df0056ba | |
| | 75c48ef5ee | |
| | 4fed6bd618 | |
| | 581e252f30 | |
| | f1d479cf1d | |
| | d070e53480 | |
| | 89719a8d48 | |
| | 085bef64b5 | |
| | 963ca8ab48 | |
| | 59922bc5cf | |
| | 1f4b3f94ca | |
| | aa9e89c0c9 | |
| | 760aef5521 | |
| | ca1d7ebd09 | |
| | a282878cfe | |
| | 95ad815142 | |
| | 984582c520 | |
| | d10e6f0e20 | |
| | 0db6227f98 | |
| | 46aa153989 | |
| | 3cfd619d9d | |
| | 82e3d7d2d4 | |
| | 9188718cb6 | |
| | 7f27a03e84 | |
| | 202a17dd6f | |
| | fe6817ff78 | |
| | 3991bc2e08 | |
| | c84e4deded | |
| | 3a19d380f3 | |
| | 21cf7466ee | |
| | 9a0db453d3 | |
| | 3021a88e70 | |
| | 232c277412 | |
| | d5e0523c6a | |
| | 03641fb388 | |
| | 023208603c | |
| | 21d10c37b3 | |
| | 5be2c61091 | |
| | da12178933 | |
| | b6484e1a19 | |
| | 206c946408 | |
| | c57c67db24 | |
| | 1ed26c8264 | |
| | 18ece294ce | |
| | 2f44ae273f | |
| | a6457f0a2a | |
| | 3f6bc2bf36 | |
| | f7248a1c74 | |
| | 54fc939ea3 | |
| | 420bb1d805 | |
| | 39c0d2c777 | |
| | d8e3a64b61 | |
| | 78dbda300b | |
| | 16440bc3c5 | |
| | f5b8d226c9 | |
| | a80142cdd7 | |
| | e69364d329 | |
| | 6facfd93ee | |
| | 7e9b0bcdc5 | |
| | bb461e8573 | |
| | 926058cbd0 | |
| | 44d56f64e1 | |
| | 8074e7dee9 | |
| | 67af7ee3fa | |
| | e6b3624bae | |
| | c27c8a61f1 | |
| | 79e6d4b6e6 | |
| | ea15f6d04b | |
| | dffcafbfd2 | |
| | e30afb517b | |
| | 97a701c7e4 | |
| | 24c68ada0b | |
| | ec5358f9b0 | |
| | 03bb1ab2b8 | |
| | d5754b8977 | |
| | 8017975124 | |
| | 66b77ed5a1 | |
| | b990d50b01 | |
| | f1890e304b | |
| | 587ea07a61 | |
| | e185931214 | |
| | 78fe2b29d2 | |
| | 9fc92b4f32 | |
| | d33a8b7d31 | |
| | 825a05b02f | |
| | 6aa9b08b63 | |
| | dcb2505c8e | |
| | 4917a2d2ab | |
| | aba1d3336d | |
| | 7c2c68e03b | |
| | ff30a31748 | |
| | 3d8d351996 | |
| | eea8f607fa | |
| | d3f357eb13 | |
| | e19ef85071 | |
| | 1e7cc5b6ad | |
| | 6e4c27136a | |
| | afb1e5b9f7 | |
| | ed90b16fd3 | |
| | 2901fcfd24 | |
| | c918459a8e | |
| | 9d3c560648 | |
| | c901c54716 | |
| | d925999a70 | |
| | aa5aa78677 | |
| | fd37490fcd | |
| | d55fb76a71 | |
| | ba3954dc0f | |
| | faf20cdf0b | |
| | 6321909582 | |
| | 355f7c4e69 | |
| | 2c3c949bc9 | |
| | babf756bd5 | |
| | c341e22f76 | |
| | 0a0e52dd3d | |
| | 081b4064a1 | |
| | 9a224ea780 | |
| | ab3a6ba34e | |
| | 2ec8300663 | |
| | 8762f26c04 | |
| | 65e50afd27 | |
| | aff0b38c0b | |
| | fefd635f6c | |
| | a8b410a0da | |
| | 841b5229e6 | |
| | 89421058bc | |
| | 4d5f69e9dc | |
| | 8cb7ee6aad | |
| | ab62c06d07 | |
| | d85c81ff57 | |
| | 94d07adf9c | |
| | 3eeefb18c2 | |
| | 34b58757ec | |
| | 0df243184c | |
| | 99420a8a48 | |
| | b013bf6ea9 | |
| | 1bedb4d182 | |
| | f844d1221e | |
| | 7950d1be7d | |
| | ffdeb91dcd | |
| | a356b13d5a | |
| | db61f05fb6 | |
| | 26937ab505 | |
| | 3dc2132e72 | |
| | b50f2bbf6c | |
| | 16a0a5556d | |
| | 32166687ec | |
| | db3498e0a0 | |
| | 2dc70ede78 | |
| | 694f385d2b | |
| | 407c126419 | |
| | 18746c917e | |
| | 01324970b4 | |
| | b068669c3c | |
| | bc134283d9 | |
| | 9f3a0f3c32 | |
| | ca1ab3fef9 | |
| | b6394cc39c | |
| | 36915f5f03 | |
| | 1ad305f874 | |
| | 58cdd7de69 | |
| | 4cee006a1e | |
| | 7bbc53bef9 | |
| | 1432168ec0 | |
| | 534ae8dd3a | |
| | 0a25611cf5 | |
| | 17990b3558 | |
| | cb80d04265 | |
| | 0194a493ab | |
| | 06e49cb638 | |
| | 93dea60906 | |
| | 177f955a6b | |
| | 324a0b4071 | |
| | 132d6432cc | |
| | 4c51efb0b7 | |
| | 8f0f2e5844 | |
| | 0ae1524682 | |
| | b24ba06794 | |
| | ec6ce88e08 | |
| | 7839bed160 | |
| | 39d3689d01 | |
| | ef347ff8ef | |
| | 908629dd9a | |
| | 4cea6ab238 | |
| | a0e8a69848 | |
| | df2b5b4274 | |
| | f18d3af3b4 | |
| | b4a447b596 | |
| | d329630509 | |
| | 1af84b046d | |
| | 84e8543309 | |
| | 09f7ecd295 | |
| | 1a8dbf0f2c | |
| | 3f1e695581 | |
| | 8881503ca6 | |
| | 317da8a13e | |
| | 316d719d64 | |
| | 01e1b79674 | |
| | 9b7ff997b9 | |
| | 6d5c2a5e2b | |
| | d0185a484f | |
| | aadacbf729 | |
| | 86290d1ce9 | |
| | d5ddd59997 | |
| | 64883f1752 | |
| | ef0b8d3180 | |
| | 101379e6ba | |
| | 80947af962 | |
| | 9ebb80a111 | |
| | 37e99b977c | |
| | dcbc505e7a | |
| | 9f518d6c4b | |
| | 6f88df0570 | |
| | f97c9521f3 | |
| | 61aa638be9 | |
| | 6285359f31 | |
| | f72987d55f | |
| | 33292988bb | |
| | 261cd45535 | |
| | f9994e7e88 | |
| | b0ecfefa09 | |
| | e1e4528db6 | |
| | 6eecd514e4 | |
| | 5b4464533b | |
| | 62233642ad | |
| | 26910b80b9 | |
| | 306c7a2480 | |
| | d26f4f1ac2 | |
| | 1509ab6435 | |
| | df0fcb1801 | |
| | 359a269e88 | |
| | f621aeef54 | |
| | 10ce9b44fc | |
| | 6d5e66b73b | |
| | 2f701510e0 | |
| | ec38cbd285 | |
| | 640d8c1bf4 | |
| | c570cf8fc2 | |
| | 9e18f11822 | |
| | 121482528b | |
| | ac482bceae | |
| | 3692f5ed7d | |
| | ce32e32433 | |
| | fdeea2f4a1 | |
| | 837aa2037f | |
| | 45065b03e3 | |
| | 195f8c6ec7 | |
| | 20202d1cdb | |
| | e4d31241da | |
| | 83dc24df94 | |
| | 890eb8ea46 | |
| | d57f01f88b | |
| | 3297f3088e | |
| | f34ab4d5ce | |
| | 2f775e098e | |
| | 56600420f1 | |
| | 4e579bc934 | |
| | 8571da9761 | |
| | 0a591f7a3c | |
| | 84dec294da | |
| | e3cb3e5a54 | |
| | 9fb31d52b7 | |
| | 5a7c8f539a | |
| | 9305b09717 | |
| | 25b2ff91af | |
| | 7f6091afb1 | |
| | fe3acf669e | |
| | 18950cc43b | |
| | d25bde12c3 | |
| | f0542c3ea5 | |
| | 70185da4a7 | |
| | 1dc859f225 | |
| | 7a84a51940 | |
| | d5122fac17 | |
| | 36167790df | |
| | ad5e1328c5 | |
| | e2b8cf1cf2 | |
| | 6f8d9f15b2 | |
| | 64215b478f | |
| | f8faecdc36 | |
| | 656894e46a | |
| | 3caaa6b63b | |
| | ad5acdbf1d | |
| | 24ef743d24 | |
| | 0e3e61afe3 | |
| | de254bee66 | |
| | 96f2aa5b30 | |
| | f86c4e5e52 | |
| | 05c2fe8c35 | |
| | dcd8413dcf | |
| | b4b13b0aa9 | |
| | d8d4b6d9f9 | |
| | 1305ffe910 | |
| | 5a434b5b50 | |
| | d8db9c458c | |
| | 861c5812b3 | |

26

.github/workflows/check.yaml

vendored

@@ -3,12 +3,28 @@ name: Lint and Test Charts

|

||||

on:

|

||||

push:

|

||||

branches: [ "main", "release-*" ]

|

||||

paths-ignore:

|

||||

- 'docs/**'

|

||||

paths:

|

||||

- '!docs/**'

|

||||

- 'apps/.olares/**'

|

||||

- 'build/**'

|

||||

- 'cli/**'

|

||||

- 'daemon/**'

|

||||

- 'framework/**/.olares/**'

|

||||

- 'infrastructure/**/.olares/**'

|

||||

- 'platform/**/.olares/**'

|

||||

- 'vendor/**'

|

||||

pull_request_target:

|

||||

branches: [ "main", "release-*" ]

|

||||

paths-ignore:

|

||||

- 'docs/**'

|

||||

paths:

|

||||

- '!docs/**'

|

||||

- 'apps/.olares/**'

|

||||

- 'build/**'

|

||||

- 'cli/**'

|

||||

- 'daemon/**'

|

||||

- 'framework/**/.olares/**'

|

||||

- 'infrastructure/**/.olares/**'

|

||||

- 'platform/**/.olares/**'

|

||||

- 'vendor/**'

|

||||

|

||||

workflow_dispatch:

|

||||

|

||||
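The hunk above swaps `paths-ignore` for an ordered `paths` list. One rough way to reason about which changed files would trigger the workflow is sketched below in Bash. It assumes the documented last-match-wins rule for `!` patterns and uses Bash's own pattern matching as a stand-in for the Actions glob engine, so edge cases may differ. `path_triggers` is a hypothetical helper for illustration and is not part of the repository.

```bash
#!/usr/bin/env bash
# Sketch only: approximates how an ordered `paths` filter decides whether a
# changed file triggers the workflow. Bash patterns stand in for Actions globs.

# Patterns copied from the `paths` list in check.yaml, in the same order.
patterns=(
  '!docs/**'
  'apps/.olares/**'
  'build/**'
  'cli/**'
  'daemon/**'
  'framework/**/.olares/**'
  'infrastructure/**/.olares/**'
  'platform/**/.olares/**'
  'vendor/**'
)

# path_triggers FILE -> exit 0 if FILE would trigger the workflow, 1 otherwise.
# The last matching pattern wins; a leading '!' turns a match into an exclusion.
path_triggers() {
  local file=$1 result=1 pat
  for pat in "${patterns[@]}"; do
    if [[ $pat == '!'* ]]; then
      # Negative pattern: strip the '!' and exclude on match.
      if [[ $file == ${pat:1} ]]; then result=1; fi
    else
      # Positive pattern: include on match.
      if [[ $file == $pat ]]; then result=0; fi
    fi
  done
  return "$result"
}
```

Under these assumptions, a file such as `daemon/cmd/main.go` triggers the workflow, while `framework/app-service/main.go` does not because only the `.olares` subtrees under `framework/` are listed. No positive pattern covers `docs/`, so the leading `!docs/**` entry appears to document intent rather than change the outcome.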

@@ -59,7 +75,7 @@ jobs:

|

||||

steps:

|

||||

- id: generate

|

||||

run: |

|

||||

v=1.12.2-$(echo $RANDOM$RANDOM)

|

||||

v=1.12.3-$(echo $RANDOM$RANDOM)

|

||||

echo "version=$v" >> "$GITHUB_OUTPUT"

|

||||

|

||||

upload-cli:

|

||||

|

||||

32

.github/workflows/module_appservice_build_main.yaml

vendored

Normal file

@@ -0,0 +1,32 @@

|

||||

name: App-Service Build test

|

||||

on:

|

||||

push:

|

||||

branches:

|

||||

- "module-appservice"

|

||||

paths:

|

||||

- 'framework/app-service/**'

|

||||

- '!framework/app-service/.olares/**'

|

||||

- '!framework/app-service/README.md'

|

||||

- '!framework/app-service/PROJECT'

|

||||

pull_request:

|

||||

branches:

|

||||

- "module-appservice"

|

||||

paths:

|

||||

- 'framework/app-service/**'

|

||||

- '!framework/app-service/.olares/**'

|

||||

- '!framework/app-service/README.md'

|

||||

- '!framework/app-service/PROJECT'

|

||||

jobs:

|

||||

build0-main:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

sudo apt-get update

|

||||

sudo apt-get install -y btrfs-progs libbtrfs-dev

|

||||

- uses: actions/checkout@v3

|

||||

- uses: actions/setup-go@v3

|

||||

with:

|

||||

go-version: '1.24.6'

|

||||

- run: make build

|

||||

working-directory: framework/app-service

|

||||

62

.github/workflows/module_appservice_publish_docker.yaml

vendored

Normal file

@@ -0,0 +1,62 @@

|

||||

name: Publish app-service to Dockerhub

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

tags:

|

||||

description: 'Release Tags'

|

||||

|

||||

jobs:

|

||||

publish_dockerhub_amd64:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Check out the repo

|

||||

uses: actions/checkout@v3

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

- name: Build and push amd64 Docker image

|

||||

uses: docker/build-push-action@v3

|

||||

with:

|

||||

push: true

|

||||

tags: beclab/app-service:${{ github.event.inputs.tags }}-amd64

|

||||

context: framework/app-service

|

||||

file: framework/app-service/Dockerfile

|

||||

platforms: linux/amd64

|

||||

publish_dockerhub_arm64:

|

||||

runs-on: self-hosted

|

||||

steps:

|

||||

- name: Check out the repo

|

||||

uses: actions/checkout@v3

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

- name: Build and push arm64 Docker image

|

||||

uses: docker/build-push-action@v3

|

||||

with:

|

||||

push: true

|

||||

tags: beclab/app-service:${{ github.event.inputs.tags }}-arm64

|

||||

context: framework/app-service

|

||||

file: framework/app-service/Dockerfile

|

||||

platforms: linux/arm64

|

||||

|

||||

publish_manifest:

|

||||

needs:

|

||||

- publish_dockerhub_amd64

|

||||

- publish_dockerhub_arm64

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

|

||||

- name: Push manifest

|

||||

run: |

|

||||

docker manifest create beclab/app-service:${{ github.event.inputs.tags }} --amend beclab/app-service:${{ github.event.inputs.tags }}-amd64 --amend beclab/app-service:${{ github.event.inputs.tags }}-arm64

|

||||

docker manifest push beclab/app-service:${{ github.event.inputs.tags }}

|

||||
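The `publish_manifest` job above combines the two per-architecture tags into a single multi-arch tag. As a dry-run sketch, the helper below only prints the commands that the "Push manifest" step would run for a given image and tag. `manifest_commands` and the tag `v0.0.0-example` are hypothetical names chosen for illustration.

```bash
#!/usr/bin/env bash
# Sketch: print (do not run) the commands that stitch the -amd64 and -arm64
# tags into one multi-arch tag, mirroring the "Push manifest" step.

# manifest_commands IMAGE TAG -> prints the create and push commands.
manifest_commands() {
  local image=$1 tag=$2
  echo "docker manifest create ${image}:${tag} --amend ${image}:${tag}-amd64 --amend ${image}:${tag}-arm64"
  echo "docker manifest push ${image}:${tag}"
}

# Example with a made-up tag; the real tag comes from the workflow input.
manifest_commands beclab/app-service v0.0.0-example
```

Printing the commands first makes it easy to confirm that both per-arch tags exist under the expected names before anything is pushed.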

63

.github/workflows/module_appservice_publish_imageservice.yaml

vendored

Normal file

@@ -0,0 +1,63 @@

|

||||

name: Publish image-service to Dockerhub

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

tags:

|

||||

description: 'Release Tags'

|

||||

|

||||

jobs:

|

||||

publish_dockerhub_amd64:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Check out the repo

|

||||

uses: actions/checkout@v3

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

- name: Build and push amd64 Docker image

|

||||

uses: docker/build-push-action@v3

|

||||

with:

|

||||

push: true

|

||||

tags: beclab/image-service:${{ github.event.inputs.tags }}-amd64

|

||||

context: framework/app-service

|

||||

file: framework/app-service/Dockerfile.image

|

||||

platforms: linux/amd64

|

||||

publish_dockerhub_arm64:

|

||||

runs-on: self-hosted

|

||||

steps:

|

||||

- name: Check out the repo

|

||||

uses: actions/checkout@v3

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

- name: Build and push arm64 Docker image

|

||||

uses: docker/build-push-action@v3

|

||||

with:

|

||||

push: true

|

||||

tags: beclab/image-service:${{ github.event.inputs.tags }}-arm64

|

||||

context: framework/app-service

|

||||

file: framework/app-service/Dockerfile.image

|

||||

platforms: linux/arm64

|

||||

|

||||

publish_manifest:

|

||||

needs:

|

||||

- publish_dockerhub_amd64

|

||||

- publish_dockerhub_arm64

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASS }}

|

||||

|

||||

- name: Push manifest

|

||||

run: |

|

||||

docker manifest create beclab/image-service:${{ github.event.inputs.tags }} --amend beclab/image-service:${{ github.event.inputs.tags }}-amd64 --amend beclab/image-service:${{ github.event.inputs.tags }}-arm64

|

||||

docker manifest push beclab/image-service:${{ github.event.inputs.tags }}

|

||||

|

||||

4

.github/workflows/release-daemon.yaml

vendored

@@ -44,9 +44,9 @@ jobs:

|

||||

with:

|

||||

go-version: 1.22.1

|

||||

|

||||

- name: install udev-devel

|

||||

- name: install udev-devel and pcap-devel

|

||||

run: |

|

||||

sudo apt update && sudo apt install -y libudev-dev

|

||||

sudo apt update && sudo apt install -y libudev-dev libpcap-dev

|

||||

|

||||

- name: Install x86_64 cross-compiler

|

||||

run: sudo apt-get update && sudo apt-get install -y build-essential

|

||||

|

||||

2

.github/workflows/release-daily.yaml

vendored

@@ -17,7 +17,7 @@ jobs:

|

||||

steps:

|

||||

- id: generate

|

||||

run: |

|

||||

v=1.12.2-$(date +"%Y%m%d")

|

||||

v=1.12.3-$(date +"%Y%m%d")

|

||||

echo "version=$v" >> "$GITHUB_OUTPUT"

|

||||

|

||||

release-id:

|

||||

|

||||
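Both this workflow and `check.yaml` build a version string from a fixed base plus a suffix: a date stamp here, and two concatenated `$RANDOM` values in `check.yaml`. The sketch below reproduces the two shapes so the resulting format is easy to see. The function names are hypothetical, and the base version is passed in rather than hard-coded.

```bash
#!/usr/bin/env bash
# Sketch: the two version-suffix styles used by the workflows.

# daily_version BASE -> BASE-YYYYMMDD (as in release-daily.yaml)
daily_version() {
  echo "${1}-$(date +"%Y%m%d")"
}

# random_version BASE -> BASE-<digits> (as in check.yaml)
random_version() {
  echo "${1}-${RANDOM}${RANDOM}"
}

daily_version 1.12.3
random_version 1.12.3
```

The date-based form is stable within a day, while the random form is meant only to be unlikely to collide between test runs.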

4

.gitignore

vendored

@@ -37,4 +37,6 @@ docs/.vitepress/dist/

|

||||

docs/.vitepress/cache/

|

||||

node_modules

|

||||

.idea/

|

||||

cli/olares-cli*

|

||||

cli/olares-cli*

|

||||

|

||||

framework/app-service/bin

|

||||

|

||||

@@ -10,6 +10,8 @@

|

||||

[](https://discord.gg/olares)

|

||||

[](https://github.com/beclab/olares/blob/main/LICENSE)

|

||||

|

||||

<a href="https://trendshift.io/repositories/15376" target="_blank"><img src="https://trendshift.io/api/badge/repositories/15376" alt="beclab%2FOlares | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

||||

|

||||

<p>

|

||||

<a href="./README.md"><img alt="Readme in English" src="https://img.shields.io/badge/English-FFFFFF"></a>

|

||||

<a href="./README_CN.md"><img alt="Readme in Chinese" src="https://img.shields.io/badge/简体中文-FFFFFF"></a>

|

||||

@@ -21,7 +23,7 @@

|

||||

<p align="center">

|

||||

<a href="https://olares.com">Website</a> ·

|

||||

<a href="https://docs.olares.com">Documentation</a> ·

|

||||

<a href="https://larepass.olares.com">Download LarePass</a> ·

|

||||

<a href="https://www.olares.com/larepass">Download LarePass</a> ·

|

||||

<a href="https://github.com/beclab/apps">Olares Apps</a> ·

|

||||

<a href="https://space.olares.com">Olares Space</a>

|

||||

</p>

|

||||

@@ -33,7 +35,7 @@

|

||||

|

||||

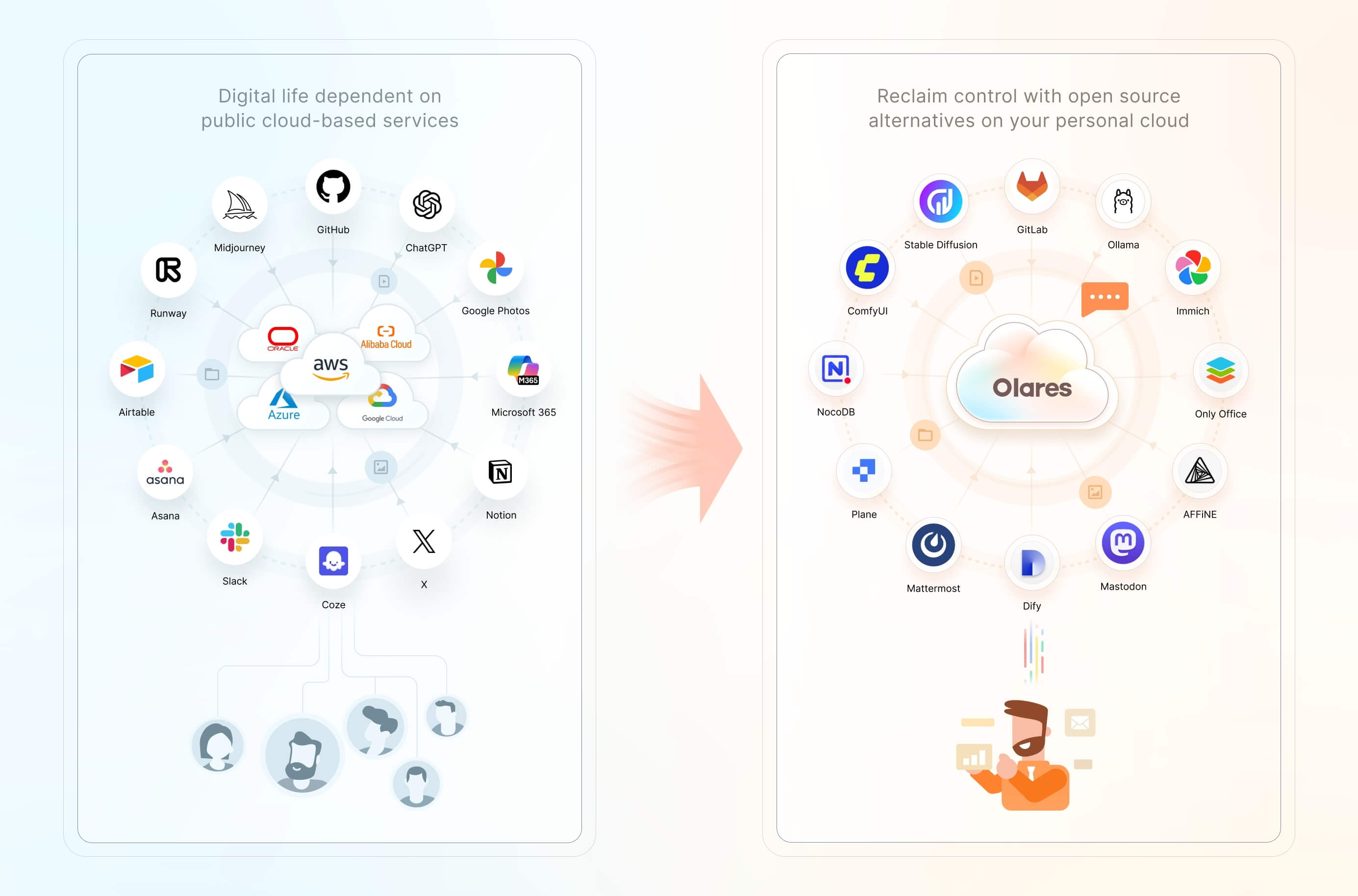

We believe you have a fundamental right to control your digital life. The most effective way to uphold this right is by hosting your data locally, on your own hardware.

|

||||

|

||||

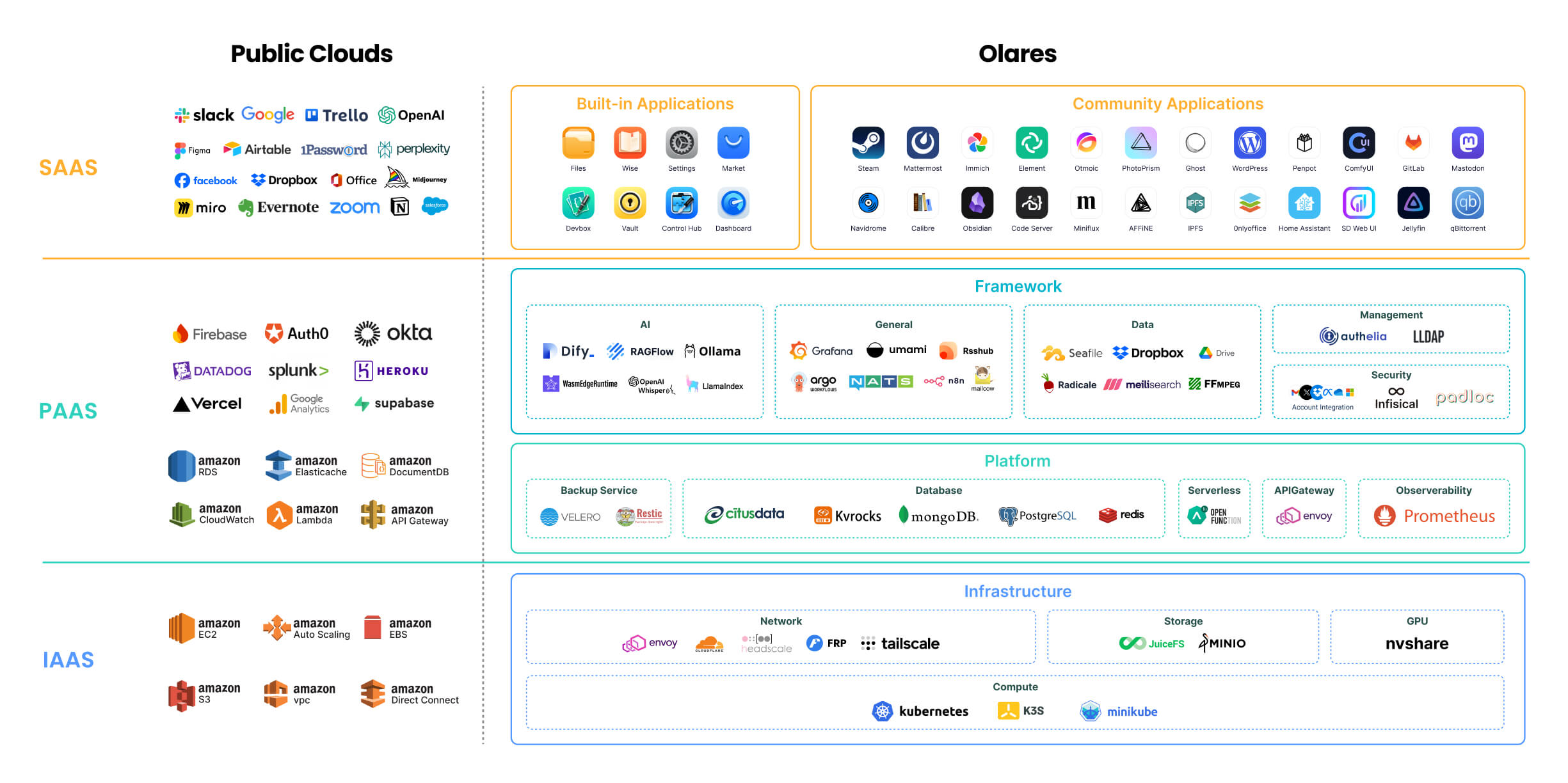

Olares is an **open-source personal cloud operating system** designed to empower you to own and manage your digital assets locally. Instead of relying on public cloud services, you can deploy powerful open-source alternatives locally on Olares, such as Ollama for hosting LLMs, SD WebUI for image generation, and Mastodon for building censor free social space. Imagine the power of the cloud, but with you in complete command.

|

||||

Olares is an **open-source personal cloud operating system** designed to empower you to own and manage your digital assets locally. Instead of relying on public cloud services, you can deploy powerful open-source alternatives locally on Olares, such as Ollama for hosting LLMs, ComfyUI for image generation, and Perplexica for private, AI-driven search and reasoning. Imagine the power of the cloud, but with you in complete command.

|

||||

|

||||

> 🌟 *Star us to receive instant notifications about new releases and updates.*

|

||||

|

||||

|

||||

@@ -10,6 +10,8 @@

|

||||

[](https://discord.gg/olares)

|

||||

[](https://github.com/beclab/olares/blob/main/LICENSE)

|

||||

|

||||

<a href="https://trendshift.io/repositories/15376" target="_blank"><img src="https://trendshift.io/api/badge/repositories/15376" alt="beclab%2FOlares | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

||||

|

||||

<p>

|

||||

<a href="./README.md"><img alt="Readme in English" src="https://img.shields.io/badge/English-FFFFFF"></a>

|

||||

<a href="./README_CN.md"><img alt="Readme in Chinese" src="https://img.shields.io/badge/简体中文-FFFFFF"></a>

|

||||

@@ -21,7 +23,7 @@

|

||||

<p align="center">

|

||||

<a href="https://olares.com">网站</a> ·

|

||||

<a href="https://docs.olares.com">文档</a> ·

|

||||

<a href="https://larepass.olares.com">下载 LarePass</a> ·

|

||||

<a href="https://www.olares.cn/larepass">下载 LarePass</a> ·

|

||||

<a href="https://github.com/beclab/apps">Olares 应用</a> ·

|

||||

<a href="https://space.olares.com">Olares Space</a>

|

||||

</p>

|

||||

@@ -34,7 +36,7 @@

|

||||

|

||||

我们坚信,**您拥有掌控自己数字生活的基本权利**。维护这一权利最有效的方式,就是将您的数据托管在本地,在您自己的硬件上。

|

||||

|

||||

Olares 是一款开源个人云操作系统,旨在让您能够轻松在本地拥有并管理自己的数字资产。您无需再依赖公有云服务,而可以在 Olares 上本地部署强大的开源平替服务或应用,例如可以使用 Ollama 托管大语言模型,使用 SD WebUI 用于图像生成,以及使用 Mastodon 构建不受审查的社交空间。Olares 让你坐拥云计算的强大威力,又能完全将其置于自己掌控之下。

|

||||

Olares 是一款开源个人云操作系统,旨在让您能够轻松在本地拥有并管理自己的数字资产。您无需再依赖公有云服务,而可以在 Olares 上本地部署强大的开源平替服务或应用,例如可以使用 Ollama 托管大语言模型,使用 ComfyUI 生成图像,以及使用 Perplexica 打造本地化、注重隐私的 AI 搜索与问答体验。Olares 让您坐拥云计算的强大威力,又能完全将其置于自己掌控之下。

|

||||

|

||||

> 为 Olares 点亮 🌟 以及时获取新版本和更新的通知。

|

||||

|

||||

|

||||

@@ -10,6 +10,8 @@

|

||||

[](https://discord.gg/olares)

|

||||

[](https://github.com/beclab/olares/blob/main/LICENSE)

|

||||

|

||||

<a href="https://trendshift.io/repositories/15376" target="_blank"><img src="https://trendshift.io/api/badge/repositories/15376" alt="beclab%2FOlares | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

||||

|

||||

<p>

|

||||

<a href="./README.md"><img alt="Readme in English" src="https://img.shields.io/badge/English-FFFFFF"></a>

|

||||

<a href="./README_CN.md"><img alt="Readme in Chinese" src="https://img.shields.io/badge/简体中文-FFFFFF"></a>

|

||||

@@ -21,7 +23,7 @@

|

||||

<p align="center">

|

||||

<a href="https://olares.com">ウェブサイト</a> ·

|

||||

<a href="https://docs.olares.com">ドキュメント</a> ·

|

||||

<a href="https://larepass.olares.com">LarePassをダウンロード</a> ·

|

||||

<a href="https://www.olares.com/larepass">LarePassをダウンロード</a> ·

|

||||

<a href="https://github.com/beclab/apps">Olaresアプリ</a> ·

|

||||

<a href="https://space.olares.com">Olares Space</a>

|

||||

</p>

|

||||

@@ -34,8 +36,7 @@

|

||||

|

||||

私たちは、あなたが自身のデジタルライフをコントロールする基本的な権利を有すると確信しています。この権利を守る最も効果的な方法は、あなたのデータをローカルの、あなた自身のハードウェア上でホストすることです。

|

||||

|

||||

Olaresは、あなたが自身のデジタル資産をローカルで容易に所有し管理できるよう設計された、オープンソースのパーソナルクラウドOSです。もはやパブリッククラウドサービスに依存する必要はありません。Olares上で、例えばOllamaを利用した大規模言語モデルのホスティング、SD WebUIによる画像生成、Mastodonを用いた検閲のないソーシャルスペースの構築など、強力なオープンソースの代替サービスやアプリケーションをローカルにデプロイできます。Olaresは、クラウドコンピューティングの絶大な力を活用しつつ、それを完全に自身のコントロール下に置くことを可能にします。

|

||||

|

||||

Olaresは、あなたが自身のデジタル資産をローカルで所有し管理できるように設計された、オープンソースのパーソナルクラウドOSです。パブリッククラウドサービスに依存する代わりに、Olares上で強力なオープンソースの代替をローカルにデプロイできます。例えば、LLMのホスティングにはOllama、画像生成にはComfyUI、そしてプライバシーを重視したAI駆動の検索と推論にはPerplexicaを利用できます。クラウドの力をそのままに、主導権は常にあなたの手に。

|

||||

> 🌟 *新しいリリースや更新についての通知を受け取るために、スターを付けてください。*

|

||||

|

||||

## アーキテクチャ

|

||||

@@ -44,7 +45,7 @@ Olaresは、あなたが自身のデジタル資産をローカルで容易に

|

||||

|

||||

|

||||

|

||||

各コンポーネントの詳細については、[Olares アーキテクチャ](https://docs.olares.com/manual/concepts/system-architecture.html)(英語版)をご参照ください。

|

||||

各コンポーネントの詳細については、[Olares アーキテクチャ](https://docs.olares.com/developer/concepts/system-architecture.html)(英語版)をご参照ください。

|

||||

|

||||

> 🔍**OlaresとNASの違いは何ですか?**

|

||||

>

|

||||

|

||||

@@ -51,6 +51,8 @@ rules:

|

||||

- "/provider/get_dataset_folder_status"

|

||||

- "/provider/update_dataset_folder_paths"

|

||||

- "/seahub/api/*"

|

||||

- "/system/configuration/encoding"

|

||||

- "/api/search/get_directory/"

|

||||

verbs: ["*"]

|

||||

|

||||

---

|

||||

|

||||

@@ -209,6 +209,21 @@ spec:

|

||||

port: 80

|

||||

targetPort: 91

|

||||

---

|

||||

apiVersion: v1

|

||||

kind: Service

|

||||

metadata:

|

||||

name: share-fe-service

|

||||

namespace: user-space-{{ .Values.bfl.username }}

|

||||

spec:

|

||||

selector:

|

||||

app: olares-app

|

||||

type: ClusterIP

|

||||

ports:

|

||||

- protocol: TCP

|

||||

name: share

|

||||

port: 80

|

||||

targetPort: 92

|

||||

---

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

@@ -220,12 +235,12 @@ metadata:

|

||||

applications.app.bytetrade.io/owner: '{{ .Values.bfl.username }}'

|

||||

applications.app.bytetrade.io/author: bytetrade.io

|

||||

annotations:

|

||||

applications.app.bytetrade.io/default-thirdlevel-domains: '[{"appName": "olares-app","entranceName":"dashboard","thirdLevelDomain":"dashboard"},{"appName":"olares-app","entranceName":"control-hub","thirdLevelDomain":"control-hub"},{"appName":"olares-app","entranceName":"files","thirdLevelDomain":"files"},{"appName": "olares-app","entranceName":"vault","thirdLevelDomain":"vault"},{"appName":"olares-app","entranceName":"headscale","thirdLevelDomain":"headscale"},{"appName":"olares-app","entranceName":"settings","thirdLevelDomain":"settings"},{"appName": "olares-app","entranceName":"market","thirdLevelDomain":"market"},{"appName":"olares-app","entranceName":"profile","thirdLevelDomain":"profile"}]'

|

||||

applications.app.bytetrade.io/default-thirdlevel-domains: '[{"appName": "olares-app","entranceName":"dashboard","thirdLevelDomain":"dashboard"},{"appName":"olares-app","entranceName":"control-hub","thirdLevelDomain":"control-hub"},{"appName":"olares-app","entranceName":"files","thirdLevelDomain":"files"},{"appName":"olares-app","entranceName":"share","thirdLevelDomain":"share"},{"appName": "olares-app","entranceName":"vault","thirdLevelDomain":"vault"},{"appName":"olares-app","entranceName":"headscale","thirdLevelDomain":"headscale"},{"appName":"olares-app","entranceName":"settings","thirdLevelDomain":"settings"},{"appName": "olares-app","entranceName":"market","thirdLevelDomain":"market"},{"appName":"olares-app","entranceName":"profile","thirdLevelDomain":"profile"}]'

|

||||

applications.app.bytetrade.io/icon: https://app.cdn.olares.com/appstore/olaresapps/icon.png

|

||||

applications.app.bytetrade.io/title: 'Olares Apps'

|

||||

applications.app.bytetrade.io/version: '0.0.1'

|

||||

applications.app.bytetrade.io/policies: '{"policies":[{"entranceName":"dashboard","uriRegex":"/js/script.js", "level":"public"},{"entranceName":"dashboard","uriRegex":"/js/api/send", "level":"public"}]}'

|

||||

applications.app.bytetrade.io/entrances: '[{"name":"files", "host":"files-fe-service", "port":80,"title":"Files","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/files/icon.png"},{"name":"vault", "host":"vault-service", "port":80,"title":"Vault","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/vault/icon.png"},{"name":"market", "host":"appstore-fe-service", "port":80,"title":"Market","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/appstore/icon.png"},{"name":"settings", "host":"settings-service", "port":80,"title":"Settings","icon":"https://app.cdn.olares.com/appstore/settings/icon.png"},{"name":"profile", "host":"profile-service", "port":80,"title":"Profile","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/profile/icon.png"},{"name":"dashboard","host":"dashboard-service","port":80,"title":"Dashboard","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/dashboard/icon.png"},{"name":"control-hub","host":"control-hub-service","port":80,"title":"Control Hub","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/control-hub/icon.png"},{"name":"headscale", "host":"headscale-svc", "port":80,"title":"Headscale","invisible": true,"icon":"https://app.cdn.olares.com/appstore/headscale/icon.png"}]'

|

||||

applications.app.bytetrade.io/entrances: '[{"name":"files", "host":"files-fe-service", "port":80,"title":"Files","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/files/icon.png"},{"name":"share","authLevel":"public", "host":"share-fe-service", "port":80,"title":"Share","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/files/icon.png","invisible":true},{"name":"vault", "host":"vault-service", "port":80,"title":"Vault","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/vault/icon.png"},{"name":"market", "host":"appstore-fe-service", "port":80,"title":"Market","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/appstore/icon.png"},{"name":"settings", "host":"settings-service", "port":80,"title":"Settings","icon":"https://app.cdn.olares.com/appstore/settings/icon.png"},{"name":"profile", "host":"profile-service", "port":80,"title":"Profile","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/profile/icon.png"},{"name":"dashboard","host":"dashboard-service","port":80,"title":"Dashboard","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/dashboard/icon.png"},{"name":"control-hub","host":"control-hub-service","port":80,"title":"Control Hub","windowPushState":true,"icon":"https://app.cdn.olares.com/appstore/control-hub/icon.png"},{"name":"headscale", "host":"headscale-svc", "port":80,"title":"Headscale","invisible": true,"icon":"https://app.cdn.olares.com/appstore/headscale/icon.png"}]'

|

||||

spec:

|

||||

replicas: 1

|

||||

selector:

|

||||

@@ -303,7 +318,7 @@ spec:

|

||||

chown -R 1000:1000 /uploadstemp && \

|

||||

chown -R 1000:1000 /appdata

|

||||

- name: olares-app-init

|

||||

image: beclab/system-frontend:v1.5.11

|

||||

image: beclab/system-frontend:v1.6.16

|

||||

imagePullPolicy: IfNotPresent

|

||||

command:

|

||||

- /bin/sh

|

||||

@@ -352,6 +367,7 @@ spec:

|

||||

- containerPort: 89

|

||||

- containerPort: 90

|

||||

- containerPort: 91

|

||||

- containerPort: 92

|

||||

- containerPort: 8090

|

||||

command:

|

||||

- /bin/sh

|

||||

@@ -424,7 +440,7 @@ spec:

|

||||

- name: NATS_SUBJECT_VAULT

|

||||

value: os.vault.{{ .Values.bfl.username}}

|

||||

- name: user-service

|

||||

image: beclab/user-service:v0.0.62

|

||||

image: beclab/user-service:v0.0.73

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- containerPort: 3000

|

||||

|

||||

@@ -0,0 +1,11 @@

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: ClusterRole

|

||||

metadata:

|

||||

name: {{ .Values.bfl.username }}:prometheus-k8s

|

||||

annotations:

|

||||

provider-registry-ref: {{ .Values.bfl.username }}/4ae9f19e

|

||||

provider-service-ref: http://prometheus-k8s.kubesphere-monitoring-system:9090

|

||||

rules:

|

||||

- nonResourceURLs:

|

||||

- "*"

|

||||

verbs: ["*"]

|

||||

@@ -9,4 +9,7 @@ metadata:

|

||||

rules:

|

||||

- nonResourceURLs:

|

||||

- "/document/search*"

|

||||

- "/task/*"

|

||||

- "/search/*"

|

||||

- "/monitorsetting/*"

|

||||

verbs: ["*"]

|

||||

@@ -57,4 +57,16 @@ metadata:

|

||||

rules:

|

||||

- nonResourceURLs:

|

||||

- "/server/intent/send"

|

||||

verbs: ["*"]

|

||||

verbs: ["*"]

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: ClusterRole

|

||||

metadata:

|

||||

name: {{ .Values.bfl.username }}:dashboard

|

||||

annotations:

|

||||

provider-registry-ref: {{ .Values.bfl.username }}/dashboard

|

||||

provider-service-ref: prometheus-k8s.kubesphere-monitoring-system:9090

|

||||

rules:

|

||||

- nonResourceURLs:

|

||||

- "*"

|

||||

verbs: ["*"]

|

||||

|

||||

@@ -29,7 +29,7 @@ spec:

|

||||

|

||||

containers:

|

||||

- name: wizard

|

||||

image: beclab/wizard:v1.5.11

|

||||

image: beclab/wizard:v1.6.5

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- containerPort: 80

|

||||

|

||||

@@ -7,10 +7,18 @@ function command_exists() {

|

||||

command -v "$@" > /dev/null 2>&1

|

||||

}

|

||||

|

||||

if [[ x"$REPO_PATH" == x"" ]]; then

|

||||

export REPO_PATH="#__REPO_PATH__"

|

||||

fi

|

||||

|

||||

if [[ "x${REPO_PATH:3}" == "xREPO_PATH__" ]]; then

|

||||

export REPO_PATH="/"

|

||||

fi

|

||||

|

||||

if [[ x"$VERSION" == x"" ]]; then

|

||||

if [[ "$LOCAL_RELEASE" == "1" ]]; then

|

||||

ts=$(date +%Y%m%d%H%M%S)

|

||||

export VERSION="1.12.2-$ts"

|

||||

export VERSION="1.12.3-$ts"

|

||||

echo "will build and use a local release of Olares with version: $VERSION"

|

||||

echo ""

|

||||

else

|

||||

@@ -20,7 +28,7 @@ fi

|

||||

|

||||

if [[ "x${VERSION}" == "x" || "x${VERSION:3}" == "xVERSION__" ]]; then

|

||||

echo "error: Olares version is unspecified, please set the VERSION env var and rerun this script."

|

||||

echo "for example: VERSION=1.12.2-20241124 bash $0"

|

||||

echo "for example: VERSION=1.12.3-20241124 bash $0"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

@@ -92,13 +100,17 @@ if [[ "$LOCAL_RELEASE" == "1" ]]; then

|

||||

fi

|

||||

INSTALL_OLARES_CLI=$(which olares-cli)

|

||||

else

|

||||

if command_exists olares-cli && [[ "$(olares-cli -v | awk '{print $3}')" == "$VERSION" ]]; then

|

||||

expected_vendor="main"

|

||||

if [[ "$(basename "$REPO_PATH")" == "olares-one" ]]; then

|

||||

expected_vendor="OlaresOne"

|

||||

fi

|

||||

if command_exists olares-cli && [[ "$(olares-cli -v | awk '{print $3}')" == "$VERSION" ]] && [[ "$(olares-cli --vendor)" == "$expected_vendor" ]]; then

|

||||

INSTALL_OLARES_CLI=$(which olares-cli)

|

||||

echo "olares-cli already installed and is the expected version"

|

||||

echo ""

|

||||

else

|

||||

if [[ ! -f ${CLI_FILE} ]]; then

|

||||

CLI_URL="${cdn_url}/${CLI_FILE}"

|

||||

CLI_URL="${cdn_url}${REPO_PATH}${CLI_FILE}"

|

||||

|

||||

echo "downloading Olares installer from ${CLI_URL} ..."

|

||||

echo ""

|

||||

|

||||

@@ -7,6 +7,15 @@ function command_exists() {

|

||||

command -v "$@" > /dev/null 2>&1

|

||||

}

|

||||

|

||||

if [[ x"$REPO_PATH" == x"" ]]; then

|

||||

export REPO_PATH="#__REPO_PATH__"

|

||||

fi

|

||||

|

||||

|

||||

if [[ "x${REPO_PATH:3}" == "xREPO_PATH__" ]]; then

|

||||

export REPO_PATH="/"

|

||||

fi

|

||||

|

||||

function read_tty() {

|

||||

echo -n $1

|

||||

read $2 < /dev/tty

|

||||

@@ -149,7 +158,7 @@ export VERSION="#__VERSION__"

|

||||

|

||||

if [[ "x${VERSION}" == "x" || "x${VERSION:3}" == "xVERSION__" ]]; then

|

||||

echo "error: Olares version is unspecified, please set the VERSION env var and rerun this script."

|

||||

echo "for example: VERSION=1.12.2-20241124 bash $0"

|

||||

echo "for example: VERSION=1.12.3-20241124 bash $0"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

@@ -172,15 +181,17 @@ else

|

||||

RELEASE_ID_SUFFIX=".$RELEASE_ID"

|

||||

fi

|

||||

CLI_FILE="olares-cli-v${VERSION}_linux_${ARCH}${RELEASE_ID_SUFFIX}.tar.gz"

|

||||

|

||||

if command_exists olares-cli && [[ "$(olares-cli -v | awk '{print $3}')" == "$VERSION" ]]; then

|

||||

expected_vendor="main"

|

||||

if [[ "$(basename "$REPO_PATH")" == "olares-one" ]]; then

|

||||

expected_vendor="OlaresOne"

|

||||

fi

|

||||

if command_exists olares-cli && [[ "$(olares-cli -v | awk '{print $3}')" == "$VERSION" ]] && [[ "$(olares-cli --vendor)" == "$expected_vendor" ]]; then

|

||||

INSTALL_OLARES_CLI=$(which olares-cli)

|

||||

echo "olares-cli already installed and is the expected version"

|

||||

echo ""

|

||||

else

|

||||

if [[ ! -f ${CLI_FILE} ]]; then

|

||||

CLI_URL="${cdn_url}/${CLI_FILE}"

|

||||

|

||||

CLI_URL="${cdn_url}${REPO_PATH}${CLI_FILE}"

|

||||

echo "downloading Olares installer from ${CLI_URL} ..."

|

||||

echo ""
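Review note: the new `CLI_URL="${cdn_url}${REPO_PATH}${CLI_FILE}"` line is plain concatenation, so it only yields a valid URL when `REPO_PATH` starts and ends with a slash (the default `/` does). The sketch below, written in Go for illustration only, shows one way to normalize the path before joining. The function name `cliURL`, the CDN host, and the file name are hypothetical and are not part of the scripts.

```go
package main

import (
	"fmt"
	"strings"
)

// cliURL mirrors "${cdn_url}${REPO_PATH}${CLI_FILE}" but first normalizes
// repoPath so the result has exactly one slash between the segments.
// Hypothetical helper for illustration; the scripts do not define it.
func cliURL(cdnURL, repoPath, cliFile string) string {
	base := strings.TrimRight(cdnURL, "/")
	trimmed := strings.Trim(repoPath, "/")
	if trimmed == "" {
		// Default REPO_PATH="/" means the file sits at the CDN root.
		return base + "/" + cliFile
	}
	return base + "/" + trimmed + "/" + cliFile
}

func main() {
	// Example host and file name are placeholders.
	fmt.Println(cliURL("https://cdn.example.com", "/", "olares-cli.tar.gz"))
	fmt.Println(cliURL("https://cdn.example.com", "olares-one", "olares-cli.tar.gz"))
}
```

With this kind of normalization, `olares-one`, `/olares-one`, and `/olares-one/` would all resolve to the same download location.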

|

||||

|

||||

|

||||

@@ -17,6 +17,7 @@ metadata:

|

||||

kubesphere.io/creator: '{{ .Values.user.name }}'

|

||||

labels:

|

||||

kubesphere.io/workspace: system-workspace

|

||||

openpolicyagent.org/webhook: ignore

|

||||

name: os-platform

|

||||

|

||||

---

|

||||

@@ -27,6 +28,7 @@ metadata:

|

||||

kubesphere.io/creator: '{{ .Values.user.name }}'

|

||||

labels:

|

||||

kubesphere.io/workspace: system-workspace

|

||||

openpolicyagent.org/webhook: ignore

|

||||

name: os-framework

|

||||

|

||||

---

|

||||

@@ -37,6 +39,7 @@ metadata:

|

||||

kubesphere.io/creator: '{{ .Values.user.name }}'

|

||||

labels:

|

||||

kubesphere.io/workspace: system-workspace

|

||||

openpolicyagent.org/webhook: ignore

|

||||

name: os-protected

|

||||

|

||||

|

||||

|

||||

@@ -66,6 +66,12 @@ if [ ! -z $RELEASE_ID ]; then

|

||||

sh -c "$SED 's/#__RELEASE_ID__/${RELEASE_ID}/' joincluster.sh"

|

||||

fi

|

||||

|

||||

# replace repo path placeholder in scripts if provided

|

||||

if [ ! -z "$REPO_PATH" ]; then

|

||||

sh -c "$SED 's|#__REPO_PATH__|${REPO_PATH}|g' install.sh"

|

||||

sh -c "$SED 's|#__REPO_PATH__|${REPO_PATH}|g' joincluster.sh"

|

||||

fi

|

||||

|

||||

$TAR --exclude=wizard/tools --exclude=.git -zcvf ${BASE_DIR}/../install-wizard-${VERSION}.tar.gz .

|

||||

|

||||

popd

|

||||

|

||||

@@ -21,6 +21,11 @@ systemEnvs:

|

||||

type: url

|

||||

editable: true

|

||||

required: true

|

||||

# docker hub mirror endpoint for docker.io registry

|

||||

- envName: OLARES_SYSTEM_DOCKERHUB_SERVICE

|

||||

type: url

|

||||

editable: true

|

||||

required: false

|

||||

# the legacy OLARES_ROOT_DIR

|

||||

- envName: OLARES_SYSTEM_ROOT_PATH

|

||||

default: /olares

|

||||

|

||||

@@ -33,6 +33,7 @@ userEnvs:

|

||||

- envName: OLARES_USER_SMTP_SECURE

|

||||

type: bool

|

||||

editable: true

|

||||

default: "true"

|

||||

- envName: OLARES_USER_SMTP_USE_TLS

|

||||

type: bool

|

||||

editable: true

|

||||

@@ -54,15 +55,18 @@ userEnvs:

|

||||

- envName: OLARES_USER_HUGGINGFACE_SERVICE

|

||||

type: url

|

||||

editable: true

|

||||

default: "https://huggingface.co/"

|

||||

- envName: OLARES_USER_HUGGINGFACE_TOKEN

|

||||

type: password

|

||||

editable: true

|

||||

- envName: OLARES_USER_PYPI_SERVICE

|

||||

type: url

|

||||

editable: true

|

||||

default: "https://pypi.org/simple/"

|

||||

- envName: OLARES_USER_GITHUB_SERVICE

|

||||

type: url

|

||||

editable: true

|

||||

default: "https://github.com/"

|

||||

- envName: OLARES_USER_GITHUB_TOKEN

|

||||

type: password

|

||||

editable: true

|

||||

|

||||

445

cli/cmd/ctl/disk/extend.go

Normal file


@@ -0,0 +1,445 @@

|

||||

package disk

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"log"

|

||||

"os"

|

||||

"strconv"

|

||||

"strings"

|

||||

"text/tabwriter"

|

||||

|

||||

"github.com/beclab/Olares/cli/pkg/utils"

|

||||

"github.com/beclab/Olares/cli/pkg/utils/lvm"

|

||||

"github.com/pkg/errors"

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

|

||||

const defaultOlaresVGName = "olares-vg"

|

||||

|

||||

func NewExtendDiskCommand() *cobra.Command {

|

||||

cmd := &cobra.Command{

|

||||

Use: "extend",

|

||||

Short: "extend disk operations",

|

||||

Run: func(cmd *cobra.Command, args []string) {

|

||||

// early return if no unmounted disks found

|

||||

unmountedDevices, err := lvm.FindUnmountedDevices()

|

||||

if err != nil {

|

||||

log.Fatalf("Error finding unmounted devices: %v\n", err)

|

||||

}

|

||||

|

||||

if len(unmountedDevices) == 0 {

|

||||

log.Println("No unmounted disks found to extend.")

|

||||

return

|

||||

}

|

||||

|

||||

// select volume group to extend

|

||||

currentVgs, err := lvm.FindCurrentLVM()

|

||||

if err != nil {

|

||||

log.Fatalf("Error finding current LVM: %v\n", err)

|

||||

}

|

||||

|

||||

if len(currentVgs) == 0 {

|

||||

log.Println("No valid volume groups found to extend.")

|

||||

return

|

||||

}

|

||||

|

||||

selectedVg, err := selectExtendingVG(currentVgs)

|

||||

if err != nil {

|

||||

log.Fatalf("Error selecting volume group: %v\n", err)

|

||||

}

|

||||

log.Printf("Selected volume group to extend: %s\n", selectedVg)

|

||||

|

||||

// select logical volume to extend

|

||||

lvInVg, err := lvm.FindLvByVgName(selectedVg)

|

||||

if err != nil {

|

||||

log.Fatalf("Error finding logical volumes in volume group %s: %v\n", selectedVg, err)

|

||||

}

|

||||

|

||||

if len(lvInVg) == 0 {

|

||||

log.Printf("No logical volumes found in volume group %s to extend.\n", selectedVg)

|

||||

return

|

||||

}

|

||||

|

||||

selectedLv, err := selectExtendingLV(selectedVg, lvInVg)

|

||||

if err != nil {

|

||||

log.Fatalf("Error selecting logical volume: %v\n", err)

|

||||

}

|

||||

log.Printf("Selected logical volume to extend: %s\n", selectedLv)

|

||||

|

||||

// select unmounted devices to create physical volume

|

||||

selectedDevice, err := selectExtendingDevices(unmountedDevices)

|

||||

if err != nil {

|

||||

log.Fatalf("Error selecting unmounted device: %v\n", err)

|

||||

}

|

||||

log.Printf("Selected unmounted device to use: %s\n", selectedDevice)

|

||||

|

||||

options := &LvmExtendOptions{

|

||||

VgName: selectedVg,

|

||||

DevicePath: selectedDevice,

|

||||

LvName: selectedLv,

|

||||

DeviceBlk: unmountedDevices[selectedDevice],

|

||||

}

|

||||

|

||||

log.Printf("Extending logical volume %s in volume group %s using device %s\n", options.LvName, options.VgName, options.DevicePath)

|

||||

cleanupNeeded, err := options.cleanupDiskParts()

|

||||

if err != nil {

|

||||

log.Fatalf("Error during disk partition cleanup check: %v\n", err)

|

||||

}

|

||||

|

||||

if cleanupNeeded {

|

||||

do, err := options.destroyWarning()

|

||||

if err != nil {

|

||||

log.Fatalf("Error during partition cleanup confirmation: %v\n", err)

|

||||

}

|

||||

if !do {

|

||||

log.Println("Operation aborted by user.")

|

||||

return

|

||||

}

|

||||

|

||||

err = options.deleteDevicePartitions()

|

||||

if err != nil {

|

||||

log.Fatalf("Error deleting device partitions: %v\n", err)

|

||||

}

|

||||

|

||||

} else {

|

||||

do, err := options.makeDecision()

|

||||

if err != nil {

|

||||

log.Fatalf("Error during extension confirmation: %v\n", err)

|

||||

}

|

||||

if !do {

|

||||

log.Println("Operation aborted by user.")

|

||||

return

|

||||

}

|

||||

}

|

||||

|

||||

err = options.extendLVM()

|

||||

if err != nil {

|

||||

log.Fatalf("Error extending LVM: %v\n", err)

|

||||

}

|

||||

|

||||

log.Println("Disk extension completed successfully.")

|

||||

|

||||

// show the result of the extension

|

||||

lvInVg, err = lvm.FindLvByVgName(selectedVg)

|

||||

if err != nil {

|

||||

log.Fatalf("Error finding logical volumes in volume group %s: %v\n", selectedVg, err)

|

||||

}

|

||||

|

||||

w := tabwriter.NewWriter(os.Stdout, 0, 8, 2, ' ', 0)

|

||||

|

||||

fmt.Fprint(w, "id\tLV\tVG\tLSize\tMountpoints\n")

|

||||

for idx, lv := range lvInVg {

|

||||

fmt.Fprintf(w, "%d\t%s\t%s\t%s\t%s\n", idx+1, lv.LvName, lv.VgName, lv.LvSize, strings.Join(lv.Mountpoints, ","))

|

||||

}

|

||||

w.Flush()

|

||||

|

||||

},

|

||||

}

|

||||

|

||||

return cmd

|

||||

}

|

||||

|

||||

type LvmExtendOptions struct {

|

||||

VgName string

|

||||

DevicePath string

|

||||

LvName string

|

||||

DeviceBlk *lvm.BlkPart

|

||||

}

|

||||

|

||||

func selectExtendingVG(vgs []*lvm.VgItem) (string, error) {

|

||||

// if only one vg, return it directly

|

||||

if len(vgs) == 1 {

|

||||

return vgs[0].VgName, nil

|

||||

}

|

||||

|

||||

reader, err := utils.GetBufIOReaderOfTerminalInput()

|

||||

if err != nil {

|

||||

return "", errors.Wrap(err, "failed to get terminal input reader")

|

||||

}

|

||||

|

||||

fmt.Println("Multiple volume groups found. Please select one to extend:")

|

||||

fmt.Println("")

|

||||

// print header

|

||||

w := tabwriter.NewWriter(os.Stdout, 0, 8, 2, ' ', 0)

|

||||

|

||||

fmt.Fprint(w, "id\tVG\tVSize\tVFree\n")

|

||||

for idx, vg := range vgs {

|

||||

fmt.Fprintf(w, "%d\t%s\t%s\t%s\n", idx+1, vg.VgName, vg.VgSize, vg.VgFree)

|

||||

}

|

||||

w.Flush()

|

||||

|

||||

LOOP:

|

||||

fmt.Printf("\nEnter the volume group id to extend: ")

|

||||

var input string

|

||||

input, err = reader.ReadString('\n')

|

||||

if err != nil && err.Error() != "EOF" {

|

||||

return "", errors.Wrap(errors.WithStack(err), "read volume group id failed")

|

||||

}

|

||||

input = strings.TrimSpace(input)

|

||||

if input == "" {

|

||||

fmt.Printf("\ninvalid volume group id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

|

||||

selectedIdx, err := strconv.Atoi(input)

|

||||

if err != nil || selectedIdx < 1 || selectedIdx > len(vgs) {

|

||||

fmt.Printf("\ninvalid volume group id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

|

||||

return vgs[selectedIdx-1].VgName, nil

|

||||

}

|

||||

|

||||

func selectExtendingLV(vgName string, lvs []*lvm.LvItem) (string, error) {

|

||||

if len(lvs) == 1 {

|

||||

return lvs[0].LvName, nil

|

||||

}

|

||||

|

||||

if vgName == defaultOlaresVGName {

|

||||

selectedLv := ""

|

||||

for _, lv := range lvs {

|

||||

if lv.LvName == "root" {

|

||||

selectedLv = lv.LvName

|

||||

continue

|

||||

}

|

||||

|

||||

if lv.LvName == "data" {

|

||||

selectedLv = lv.LvName

|

||||

break

|

||||

}

|

||||

}

|

||||

|

||||

if selectedLv != "" {

|

||||

return selectedLv, nil

|

||||

}

|

||||

}

|

||||

|

||||

reader, err := utils.GetBufIOReaderOfTerminalInput()

|

||||

if err != nil {

|

||||

return "", errors.Wrap(err, "failed to get terminal input reader")

|

||||

}

|

||||

|

||||

fmt.Println("Multiple logical volumes found. Please select one to extend:")

|

||||

fmt.Println("")

|

||||

// print header

|

||||

w := tabwriter.NewWriter(os.Stdout, 0, 8, 2, ' ', 0)

|

||||

|

||||

fmt.Fprint(w, "id\tLV\tVG\tLSize\tMountpoints\n")

|

||||

for idx, lv := range lvs {

|

||||

fmt.Fprintf(w, "%d\t%s\t%s\t%s\t%s\n", idx+1, lv.LvName, lv.VgName, lv.LvSize, strings.Join(lv.Mountpoints, ","))

|

||||

}

|

||||

w.Flush()

|

||||

|

||||

LOOP:

|

||||

fmt.Printf("\nEnter the logical volume id to extend: ")

|

||||

var input string

|

||||

input, err = reader.ReadString('\n')

|

||||

if err != nil && err.Error() != "EOF" {

|

||||

return "", errors.Wrap(errors.WithStack(err), "read logical volume id failed")

|

||||

}

|

||||

input = strings.TrimSpace(input)

|

||||

if input == "" {

|

||||

fmt.Printf("\ninvalid logical volume id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

|

||||

selectedIdx, err := strconv.Atoi(input)

|

||||

if err != nil || selectedIdx < 1 || selectedIdx > len(lvs) {

|

||||

fmt.Printf("\ninvalid logical volume id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

|

||||

return lvs[selectedIdx-1].LvName, nil

|

||||

}

|

||||

|

||||

func selectExtendingDevices(unmountedDevices map[string]*lvm.BlkPart) (string, error) {

|

||||

if len(unmountedDevices) == 0 {

|

||||

return "", errors.New("no unmounted devices available for selection")

|

||||

}

|

||||

|

||||

if len(unmountedDevices) == 1 {

|

||||

for path := range unmountedDevices {

|

||||

return path, nil

|

||||

}

|

||||

}

|

||||

|

||||

reader, err := utils.GetBufIOReaderOfTerminalInput()

|

||||

if err != nil {

|

||||

return "", errors.Wrap(err, "failed to get terminal input reader")

|

||||

}

|

||||

|

||||

fmt.Println("Multiple unmounted devices found. Please select one to use:")

|

||||

fmt.Println("")

|

||||

// print header

|

||||

w := tabwriter.NewWriter(os.Stdout, 0, 8, 2, ' ', 0)

|

||||

|

||||

fmt.Fprint(w, "id\tDevice\tSize\n")

|

||||

idx := 1

|

||||

devicePaths := make([]string, 0, len(unmountedDevices))

|

||||

for path, device := range unmountedDevices {

|

||||

fmt.Fprintf(w, "%d\t%s\t%s\n", idx, path, device.Size)

|

||||

devicePaths = append(devicePaths, path)

|

||||

idx++

|

||||

}

|

||||

w.Flush()

|

||||

|

||||

LOOP:

|

||||

fmt.Printf("\nEnter the device id to use: ")

|

||||

var input string

|

||||

input, err = reader.ReadString('\n')

|

||||

if err != nil && err.Error() != "EOF" {

|

||||

return "", errors.Wrap(errors.WithStack(err), "read device id failed")

|

||||

}

|

||||

input = strings.TrimSpace(input)

|

||||

if input == "" {

|

||||

fmt.Printf("\ninvalid device id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

selectedIdx, err := strconv.Atoi(input)

|

||||

if err != nil || selectedIdx < 1 || selectedIdx > len(devicePaths) {

|

||||

fmt.Printf("\ninvalid device id, please try again")

|

||||

goto LOOP

|

||||

}

|

||||

|

||||

return devicePaths[selectedIdx-1], nil

|

||||

}

|

||||

|

||||

func (o LvmExtendOptions) destroyWarning() (bool, error) {

|

||||

reader, err := utils.GetBufIOReaderOfTerminalInput()

|

||||

if err != nil {

|

||||

return false, errors.Wrap(err, "failed to get terminal input reader")

|

||||

}

|

||||

|

||||

fmt.Printf("WARNING: This will DESTROY all data on %s\n", o.DevicePath)

|

||||

LOOP:

|

||||

fmt.Printf("Type 'YES' to continue, CTRL+C to abort: ")

|

||||

var input string

|

||||

input, err = reader.ReadString('\n')

|

||||

if err != nil && err.Error() != "EOF" {

|

||||

return false, errors.Wrap(errors.WithStack(err), "read confirmation input failed")

|

||||

}

|

||||

input = strings.ToUpper(strings.TrimSpace(input))

|

||||

if input != "YES" {

|

||||

goto LOOP

|

||||

}

|

||||

return true, nil

|

||||

}

|

||||

|

||||

func (o LvmExtendOptions) makeDecision() (bool, error) {

|

||||

reader, err := utils.GetBufIOReaderOfTerminalInput()

|

||||

if err != nil {

|

||||

return false, errors.Wrap(err, "failed to get terminal input reader")

|

||||

}

|

||||

|

||||

fmt.Printf("NOTICE: Extending LVM will begin on device %s\n", o.DevicePath)

|

||||

LOOP:

|

||||

fmt.Printf("Type 'YES' to continue, CTRL+C to abort: ")

|

||||

var input string

|

||||

input, err = reader.ReadString('\n')

|

||||

if err != nil && err.Error() != "EOF" {

|

||||

return false, errors.Wrap(errors.WithStack(err), "read confirmation input failed")

|

||||

}

|

||||

input = strings.ToUpper(strings.TrimSpace(input))

|

||||

if input != "YES" {

|

||||

goto LOOP

|

||||

}

|

||||

return true, nil

|

||||

}

|

||||

|

||||

func (o LvmExtendOptions) cleanupDiskParts() (bool, error) {

|

||||

if o.DeviceBlk == nil {

|

||||

return false, errors.New("device block is nil")

|

||||

}

|

||||

|

||||

if len(o.DeviceBlk.Children) == 0 {

|

||||

return false, nil

|

||||

}

|

||||

|

||||

return true, nil

|

||||

}

|

||||

|

||||

// deleteDevicePartitions removes any LVM metadata found on the device's
// partitions (logical volumes, then the volume group, then the physical
// volume) before deleting the partitions themselves.
func (o LvmExtendOptions) deleteDevicePartitions() error {
	log.Printf("Selected device %s has existing partitions. Cleaning up...\n", o.DevicePath)
	if o.DeviceBlk == nil {
		return errors.New("device block is nil")
	}

	if len(o.DeviceBlk.Children) == 0 {
		return nil
	}

	log.Printf("Deleting existing partitions on device %s...\n", o.DevicePath)
	var partitions []string
	for _, part := range o.DeviceBlk.Children {
		partitions = append(partitions, "/dev/"+part.Name)
	}

	vgs, err := lvm.FindVgsOnDevice(partitions)
	if err != nil {
		return errors.Wrap(err, "failed to find volume groups on device partitions")
	}

	if len(vgs) > 0 {
		log.Println("existing volume group on device, delete it first")
		for _, vg := range vgs {
			lvs, err := lvm.FindLvByVgName(vg.VgName)
			if err != nil {
				return errors.Wrapf(err, "failed to find logical volumes in volume group %s", vg.VgName)
			}

			err = lvm.DeactivateLv(vg.VgName)
			if err != nil {
				return errors.Wrapf(err, "failed to deactivate volume group %s", vg.VgName)
			}

			for _, lv := range lvs {
				err = lvm.RemoveLv(lv.LvPath)
				if err != nil {
					return errors.Wrapf(err, "failed to remove logical volume %s", lv.LvPath)
				}
			}

			err = lvm.RemoveVg(vg.VgName)
			if err != nil {
				return errors.Wrapf(err, "failed to remove volume group %s", vg.VgName)
			}

			err = lvm.RemovePv(vg.PvName)
			if err != nil {
				return errors.Wrapf(err, "failed to remove physical volume %s", vg.PvName)
			}
		}

	}

	log.Printf("Deleting partitions on device %s...\n", o.DevicePath)
	err = lvm.DeleteDevicePartitions(o.DevicePath)
	if err != nil {
		return errors.Wrapf(err, "failed to delete partitions on device %s", o.DevicePath)
	}

	return nil
}

|

||||

|

||||

// extendLVM partitions the device, adds it to the volume group as a new
// physical volume, and then extends the selected logical volume.
func (o LvmExtendOptions) extendLVM() error {
	log.Printf("Creating partition on device %s...\n", o.DevicePath)
	err := lvm.MakePartOnDevice(o.DevicePath)
	if err != nil {
		return errors.Wrapf(err, "failed to create partition on device %s", o.DevicePath)
	}

	log.Printf("Creating physical volume on device %s...\n", o.DevicePath)
	err = lvm.AddNewPV(o.DevicePath, o.VgName)
	if err != nil {
		return errors.Wrapf(err, "failed to create physical volume on device %s", o.DevicePath)
	}

	log.Printf("Extending volume group %s with logical volume %s on device %s...\n", o.VgName, o.LvName, o.DevicePath)
	err = lvm.ExtendLv(o.VgName, o.LvName)
	if err != nil {
		return errors.Wrapf(err, "failed to extend logical volume %s in volume group %s", o.LvName, o.VgName)
	}

	return nil
}

|

||||
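Review note: `selectExtendingVG`, `selectExtendingLV`, and `selectExtendingDevices` in `extend.go` each repeat the same `goto LOOP` prompt. As written, those loops also appear to retry forever if the reader keeps returning EOF with empty input. A minimal sketch of a shared helper follows; `parseChoice` and `promptChoice` are hypothetical names, and the sketch uses only the standard library rather than `utils.GetBufIOReaderOfTerminalInput`.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"strconv"
	"strings"
)

// parseChoice converts user input into a zero-based index.
// It accepts only integers in the 1..n range shown in the printed table.
func parseChoice(input string, n int) (int, bool) {
	id, err := strconv.Atoi(strings.TrimSpace(input))
	if err != nil || id < 1 || id > n {
		return 0, false
	}
	return id - 1, true
}

// promptChoice (hypothetical helper) keeps asking until a valid id is read.
// It returns an error at EOF instead of looping on empty input.
func promptChoice(r *bufio.Reader, w io.Writer, prompt string, n int) (int, error) {
	for {
		fmt.Fprint(w, prompt)
		line, err := r.ReadString('\n')
		if err != nil && err != io.EOF {
			return 0, fmt.Errorf("read selection: %w", err)
		}
		if idx, ok := parseChoice(line, n); ok {
			return idx, nil
		}
		if err == io.EOF {
			return 0, fmt.Errorf("no valid selection before end of input")
		}
		fmt.Fprintln(w, "invalid id, please try again")
	}
}

func main() {
	// Simulated terminal input: two invalid answers, then a valid one.
	in := bufio.NewReader(strings.NewReader("abc\n7\n2\n"))
	idx, err := promptChoice(in, io.Discard, "Enter the id: ", 3)
	fmt.Println(idx, err) // prints "1 <nil>"
}
```

Each selector could then print its own table and call the helper with the matching prompt and item count.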

34

cli/cmd/ctl/disk/listumounted.go

Normal file


@@ -0,0 +1,34 @@

|

||||

package disk

import (
	"fmt"
	"log"
	"os"
	"text/tabwriter"

	"github.com/beclab/Olares/cli/pkg/utils/lvm"
	"github.com/spf13/cobra"
)

func NewListUnmountedDisksCommand() *cobra.Command {
	cmd := &cobra.Command{
		Use:   "list-unmounted",
		Short: "List unmounted disks",
		Run: func(cmd *cobra.Command, args []string) {
			unmountedDevices, err := lvm.FindUnmountedDevices()
			if err != nil {
				log.Fatalf("Error finding unmounted devices: %v\n", err)
			}

			// print header
			w := tabwriter.NewWriter(os.Stdout, 0, 8, 2, ' ', 0)

			fmt.Fprint(w, "Device\tSize\n")
			for path, device := range unmountedDevices {
				fmt.Fprintf(w, "%s\t%s\n", path, device.Size)
			}
			w.Flush()
		},
	}
	return cmd
}

|

||||
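Review note: both `list-unmounted` and `selectExtendingDevices` range directly over the `unmountedDevices` map, and Go randomizes map iteration order, so the rows (and the ids shown in `extend`) can change between runs. A minimal sketch of sorting the keys first is below; `sortedPaths` is a hypothetical helper, and plain strings stand in for `*lvm.BlkPart` with made-up sizes.

```go
package main

import (
	"fmt"
	"sort"
)

// sortedPaths returns the map keys in lexical order so that table rows
// and ids stay stable between runs.
func sortedPaths[V any](devices map[string]V) []string {
	paths := make([]string, 0, len(devices))
	for p := range devices {
		paths = append(paths, p)
	}
	sort.Strings(paths)
	return paths
}

func main() {
	// Sample data only; the real map holds *lvm.BlkPart values.
	devices := map[string]string{"/dev/sdc": "2T", "/dev/sda": "500G", "/dev/sdb": "1T"}
	for i, p := range sortedPaths(devices) {
		fmt.Printf("%d\t%s\t%s\n", i+1, p, devices[p])
	}
}
```

The generic signature requires Go 1.18 or later; on older toolchains the helper could take the concrete map type instead.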

15

cli/cmd/ctl/disk/root.go

Normal file


@@ -0,0 +1,15 @@

|

||||

package disk

|

||||

|

||||

import "github.com/spf13/cobra"

|

||||

|

||||

func NewDiskCommand() *cobra.Command {

|

||||

cmd := &cobra.Command{

|

||||

Use: "disk",

|

||||