Compare commits

183 Commits

feat/move- ... fix/files_

| Author | SHA1 | Date | |
|---|---|---|---|
| | 05d14de4fe | | |
| | 058cf31e44 | | |
| | 72a5b2c6a2 | | |
| | f78890b01b | | |
| | 13df294653 | | |
| | 2af86e161a | | |
| | ee567c270c | | |
| | 4246bcce06 | | |
| | fb73d62bd5 | | |
| | 209f0d15e3 | | |
| | 78911d44cf | | |
| | d964c33c2d | | |
| | 2b54795e10 | | |
| | efb4be4fcf | | |
| | 89575096ba | | |
| | 5edba60295 | | |
| | 1aecc3495a | | |
| | 2d5c1fc484 | | |
| | 81355f4a1c | | |
| | 2c4e9fb835 | | |
| | 4947538e68 | | |
| | 21bb10b72b | | |
| | 8064c591f2 | | |
| | 1073575a1d | | |
| | 4cf977f6df | | |
| | 0dda3811c7 | | |
| | 2632b45fc2 | | |
| | ae3f3d6a20 | | |
| | 4f3b824f48 | | |
| | 9efa6df969 | | |
| | 045dfc11bc | | |
| | 9913d29f81 | | |
| | 0ccf091aff | | |
| | 01f3b27b8c | | |
| | 475faafec4 | | |
| | 31ab286a4b | | |
| | c9b4a40a1c | | |
| | da19d00d08 | | |
| | 49d233a55b | | |
| | 300aaa0753 | | |
| | 962b220440 | | |
| | 4da25bca36 | | |
| | 42eff16695 | | |
| | 450aa19dfc | | |
| | c750f6f85b | | |
| | bf57da0fa4 | | |
| | 5df379f286 | | |
| | cfb54fb974 | | |
| | 9515c05bb6 | | |
| | bdcd924e50 | | |
| | e9eb218348 | | |
| | 9746e2c110 | | |
| | 27d9715292 | | |
| | 10d6c2a6fa | | |
| | 57d8a55d8d | | |
| | b9a227acd7 | | |
| | e6115794ce | | |
| | 22739c90db | | |
| | 6fac46130a | | |
| | e19e049e7d | | |
| | 1d0c20d6ad | | |
| | 397590d402 | | |
| | fc1a59b79b | | |
| | 3dea149790 | | |
| | 9d6834faa1 | | |
| | bef61309a3 | | |
| | cf52a59ef7 | | |
| | 80023be159 | | |
| | ae3e4e6bb9 | | |
| | 8c9e4d532b | | |
| | 3c48afb5b5 | | |
| | 3d22a01eef | | |
| | d6263bacca | | |
| | 3b070ea095 | | |
| | 82b715635b | | |
| | 1d4494c8d7 | | |
| | 56f5c07229 | | |
| | 697ac440c7 | | |
| | f0edbc08a6 | | |
| | 001607e840 | | |
| | e8f525daca | | |
| | 6d6f7705c9 | | |
| | 46b7fa0079 | | |
| | 793a62396b | | |
| | 7cb4975f5b | | |
| | bfaf647ad1 | | |
| | 23d3dc58ed | | |
| | 7bf07f36b7 | | |
| | 7e7117fc3a | | |
| | ff159c7a29 | | |
| | 92b84ab70b | | |
| | 561d4ba93c | | |
| | 2089e42c32 | | |
| | b50139af5d | | |
| | daacba2fa4 | | |
| | 018b3ef3cc | | |
| | ddaa0daf14 | | |
| | 13e924fcc7 | | |
| | 6b3032f04d | | |
| | 4f08f5f341 | | |
| | 67e91df96b | | |
| | e915b70e4b | | |
| | e1ca1a97db | | |
| | 688c4b4010 | | |
| | 52f6dc7159 | | |
| | 9f824292d1 | | |
| | 1bef38380e | | |
| | b83729f6d8 | | |
| | d484e41bbd | | |
| | f9072c9312 | | |
| | fb78685c1e | | |
| | bb7eba1f92 | | |
| | 3f778d63c1 | | |
| | 161f84bc59 | | |
| | 9168e3d358 | | |
| | 085da97ca5 | | |
| | eed5632794 | | |
| | d7cd77f941 | | |
| | bb8fbb239d | | |
| | b09ef303d1 | | |
| | e532682558 | | |
| | 1b3deedc47 | | |
| | 8c68fcf89c | | |
| | 3f8e046855 | | |
| | 4de8756cac | | |
| | 1e729ec2ee | | |
| | cffa3bb1cc | | |
| | 4781090e29 | | |
| | e0cbc9d874 | | |
| | e0ba27f7d0 | | |
| | 50f6b127ac | | |
| | df23dc64e3 | | |
| | f704cf1846 | | |
| | 66d0eccb2f | | |
| | a226fd99b8 | | |
| | 60b823d9db | | |
| | 7b9be6cce7 | | |
| | b99fc51cc2 | | |
| | cdf70c5c58 | | |
| | 1c7fa01df8 | | |
| | 2b4b590a3a | | |
| | 2bef0056d3 | | |
| | da5ad17e7b | | |
| | 3b14b95469 | | |
| | d0a5da4266 | | |
| | a2efa54140 | | |
| | f0106180d5 | | |
| | 9261253126 | | |
| | 16f554ed54 | | |
| | ac212583ea | | |
| | 186d6dd309 | | |
| | 79f96c94f7 | | |
| | 5bd1bd2ab9 | | |
| | 6be4e1ff6e | | |
| | df722bf1cd | | |
| | d428295fa5 | | |
| | 7cecd9d360 | | |
| | a48de4efd4 | | |
| | d8078cc8ce | | |
| | f4d9487d1f | | |
| | b5121bde2e | | |
| | 5f79f7fbe4 | | |
| | df6f0bf2d8 | | |
| | 21be331121 | | |
| | cff07d4c2b | | |
| | a371b3ce44 | | |
| | 2712202c48 | | |
| | 7b17f3b2a4 | | |
| | cc6b2c9239 | | |
| | 46df22854d | | |
| | eec03ee9b4 | | |
| | 0c5a80653e | | |
| | e58743fa87 | | |
| | d5673b81e0 | | |
| | 37e37a814d | | |
| | 73d484b681 | | |
| | ddf10130f0 | | |
| | 5e0534cc2c | | |
| | 58a7ce05b8 | | |
| | 448a5c1551 | | |
| | 4e7ba01bcd | | |
| | a034b37239 | | |
| | bf17a91062 | | |

17

.github/workflows/check.yaml

vendored

@@ -64,6 +64,17 @@ jobs:
    secrets: inherit
    with:
      version: ${{ needs.test-version.outputs.version }}
      ref: ${{ github.event.pull_request.head.ref }}
      repository: ${{ github.event.pull_request.head.repo.full_name }}

  upload-daemon:
    needs: test-version
    uses: ./.github/workflows/release-daemon.yaml
    secrets: inherit
    with:
      version: ${{ needs.test-version.outputs.version }}
      ref: ${{ github.event.pull_request.head.ref }}
      repository: ${{ github.event.pull_request.head.repo.full_name }}

  push-image:
    runs-on: ubuntu-latest
@@ -106,6 +117,7 @@ jobs:


  push-deps:
    needs: [test-version, upload-daemon]
    runs-on: ubuntu-latest

    steps:
@@ -121,10 +133,13 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          VERSION: ${{ needs.test-version.outputs.version }}
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          bash build/deps-manifest.sh && bash build/upload-deps.sh

  push-deps-arm64:
    needs: [test-version, upload-daemon]
    runs-on: [self-hosted, linux, ARM64]

    steps:
@@ -143,6 +158,8 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          VERSION: ${{ needs.test-version.outputs.version }}
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          bash build/deps-manifest.sh linux/arm64 && bash build/upload-deps.sh linux/arm64

34

.github/workflows/push-deps-to-s3.yml

vendored

@@ -11,27 +11,13 @@ jobs:
      - name: "Checkout source code"
        uses: actions/checkout@v3

      - name: Install coscmd
        run: pip install coscmd

      - name: Configure coscmd
        env:
          TENCENT_SECRET_ID: ${{ secrets.TENCENT_SECRET_ID }}
          TENCENT_SECRET_KEY: ${{ secrets.TENCENT_SECRET_KEY }}
          COS_BUCKET: ${{ secrets.COS_BUCKET }}
          COS_REGION: ${{ secrets.COS_REGION }}
          END_POINT: ${{ secrets.END_POINT }}
        run: |
          coscmd config -a $TENCENT_SECRET_ID \
            -s $TENCENT_SECRET_KEY \
            -b $COS_BUCKET \
            -r $COS_REGION

      # test
      - env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          bash build/deps-manifest.sh && bash build/upload-deps.sh

@@ -42,28 +28,12 @@ jobs:
      - name: "Checkout source code"
        uses: actions/checkout@v3

      - name: Install coscmd
        run: pip install coscmd

      - name: Configure coscmd
        env:
          TENCENT_SECRET_ID: ${{ secrets.TENCENT_SECRET_ID }}
          TENCENT_SECRET_KEY: ${{ secrets.TENCENT_SECRET_KEY }}
          COS_BUCKET: ${{ secrets.COS_BUCKET }}
          COS_REGION: ${{ secrets.COS_REGION }}
          END_POINT: ${{ secrets.END_POINT }}
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          coscmd config -m 10 -p 10 -a $TENCENT_SECRET_ID \
            -s $TENCENT_SECRET_KEY \
            -b $COS_BUCKET \
            -r $COS_REGION

      # test
      - env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          bash build/deps-manifest.sh linux/arm64 && bash build/upload-deps.sh linux/arm64

33

.github/workflows/push-to-s3.yaml

vendored

@@ -11,22 +11,6 @@ jobs:
      - name: "Checkout source code"
        uses: actions/checkout@v3

      - name: Install coscmd
        run: pip install coscmd

      - name: Configure coscmd
        env:
          TENCENT_SECRET_ID: ${{ secrets.TENCENT_SECRET_ID }}
          TENCENT_SECRET_KEY: ${{ secrets.TENCENT_SECRET_KEY }}
          COS_BUCKET: ${{ secrets.COS_BUCKET }}
          COS_REGION: ${{ secrets.COS_REGION }}
          END_POINT: ${{ secrets.END_POINT }}
        run: |
          coscmd config -a $TENCENT_SECRET_ID \
            -s $TENCENT_SECRET_KEY \
            -b $COS_BUCKET \
            -r $COS_REGION

      # test
      - env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
@@ -42,23 +26,6 @@ jobs:
      - name: "Checkout source code"
        uses: actions/checkout@v3

      - name: Install coscmd
        run: pip install coscmd

      - name: Configure coscmd
        env:
          TENCENT_SECRET_ID: ${{ secrets.TENCENT_SECRET_ID }}
          TENCENT_SECRET_KEY: ${{ secrets.TENCENT_SECRET_KEY }}
          COS_BUCKET: ${{ secrets.COS_BUCKET }}
          COS_REGION: ${{ secrets.COS_REGION }}
          END_POINT: ${{ secrets.END_POINT }}
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          coscmd config -m 10 -p 10 -a $TENCENT_SECRET_ID \
            -s $TENCENT_SECRET_KEY \
            -b $COS_BUCKET \
            -r $COS_REGION

      - env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}

19

.github/workflows/release-cli.yaml

vendored

@@ -6,7 +6,19 @@ on:
      version:
        type: string
        required: true
      ref:
        type: string
      repository:
        type: string
  workflow_dispatch:
    inputs:
      version:
        type: string
        required: true
      ref:
        type: string
      repository:
        type: string
jobs:
  goreleaser:
    runs-on: ubuntu-22.04
@@ -15,6 +27,8 @@ jobs:
        uses: actions/checkout@v3
        with:
          fetch-depth: 1
          ref: ${{ inputs.ref }}
          repository: ${{ inputs.repository }}

      - name: Add Local Git Tag For GoReleaser
        run: git tag ${{ inputs.version }}
@@ -23,7 +37,7 @@ jobs:
      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: 1.22.4
          go-version: 1.24.3

      - name: Install x86_64 cross-compiler
        run: sudo apt-get update && sudo apt-get install -y build-essential
@@ -48,6 +62,5 @@ jobs:
          AWS_DEFAULT_REGION: "us-east-1"
        run: |
          cd cli/output && for file in *.tar.gz; do
            aws s3 cp "$file" s3://terminus-os-install/$file --acl=public-read
            # coscmd upload $file /$file
            aws s3 cp "$file" s3://terminus-os-install${{ secrets.REPO_PATH }}${file} --acl=public-read
          done

69

.github/workflows/release-daemon.yaml

vendored

Normal file

@@ -0,0 +1,69 @@
name: Release Daemon

on:
  workflow_call:
    inputs:
      version:
        type: string
        required: true
      ref:
        type: string
      repository:
        type: string
  workflow_dispatch:
    inputs:
      version:
        type: string
        required: true
      ref:
        type: string
      repository:
        type: string

jobs:
  goreleaser:
    runs-on: ubuntu-24.04
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 1
          ref: ${{ inputs.ref }}
          repository: ${{ inputs.repository }}

      - name: Add Local Git Tag For GoReleaser
        run: git tag ${{ inputs.version }}
        continue-on-error: true

      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: 1.22.1

      - name: install udev-devel
        run: |
          sudo apt update && sudo apt install -y libudev-dev

      - name: Install x86_64 cross-compiler
        run: sudo apt-get update && sudo apt-get install -y build-essential

      - name: Install ARM cross-compiler
        run: sudo apt-get update && sudo apt-get install -y gcc-aarch64-linux-gnu

      - name: Run GoReleaser
        uses: goreleaser/goreleaser-action@v3.1.0
        with:
          distribution: goreleaser
          workdir: './daemon'
          version: v1.18.2
          args: release --clean

      - name: Upload to CDN
        env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: 'us-east-1'
        run: |
          cd daemon/output && for file in *.tar.gz; do
            aws s3 cp "$file" s3://terminus-os-install${{ secrets.REPO_PATH }}${file} --acl=public-read
          done
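Reviewer note: the `Upload to CDN` step in the `release-daemon.yaml` diff above builds each object key by concatenating the bucket name, the `REPO_PATH` secret, and the archive file name with no separator in between. The following is a minimal dry-run sketch of that composition, not the workflow's actual code. It assumes `REPO_PATH` begins and ends with `/` (the diff does not show its value), and the `s3_dest` helper is hypothetical and does not exist in the repository.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not in the repository): compose the S3 destination
# the same way the workflow's `aws s3 cp` line does, i.e.
# bucket + REPO_PATH + file name, with no separator added.
s3_dest() {
  local repo_path="$1" file="$2"
  printf 's3://terminus-os-install%s%s\n' "$repo_path" "$file"
}

# Dry run: print each destination instead of uploading anything.
# The default "/" is an assumed example value, not the real secret.
REPO_PATH="${REPO_PATH:-/}"
for file in *.tar.gz; do
  [ -e "$file" ] || continue   # skip the literal pattern when nothing matches
  echo "would upload $file -> $(s3_dest "$REPO_PATH" "$file")"
done
```

Because nothing is inserted between the parts, a `REPO_PATH` without a trailing `/` would fuse the prefix and the file name into one key, which is worth checking before relying on the new paths.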

18

.github/workflows/release-daily.yaml

vendored

@@ -27,6 +27,13 @@ jobs:
    with:
      version: ${{ needs.daily-version.outputs.version }}

  release-daemon:
    needs: daily-version
    uses: ./.github/workflows/release-daemon.yaml
    secrets: inherit
    with:
      version: ${{ needs.daily-version.outputs.version }}

  push-images:
    runs-on: ubuntu-22.04

@@ -57,6 +64,7 @@ jobs:
          bash build/image-manifest.sh && bash build/upload-images.sh .manifest/images.mf linux/arm64

  push-deps:
    needs: [daily-version, release-daemon]
    runs-on: ubuntu-latest

    steps:
@@ -68,10 +76,13 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          VERSION: ${{ needs.daily-version.outputs.version }}
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          bash build/deps-manifest.sh && bash build/upload-deps.sh

  push-deps-arm64:
    needs: [daily-version, release-daemon]
    runs-on: [self-hosted, linux, ARM64]

    steps:
@@ -83,6 +94,8 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: "us-east-1"
          VERSION: ${{ needs.daily-version.outputs.version }}
          REPO_PATH: '${{ secrets.REPO_PATH }}'
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          bash build/deps-manifest.sh linux/arm64 && bash build/upload-deps.sh linux/arm64
@@ -110,8 +123,8 @@ jobs:
          AWS_DEFAULT_REGION: 'us-east-1'
        run: |
          md5sum install-wizard-v${{ needs.daily-version.outputs.version }}.tar.gz > install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt && \
          aws s3 cp install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt s3://terminus-os-install/install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt --acl=public-read && \
          aws s3 cp install-wizard-v${{ needs.daily-version.outputs.version }}.tar.gz s3://terminus-os-install/install-wizard-v${{ needs.daily-version.outputs.version }}.tar.gz --acl=public-read && \
          aws s3 cp install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt s3://terminus-os-install${{ secrets.REPO_PATH }}install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt --acl=public-read && \
          aws s3 cp install-wizard-v${{ needs.daily-version.outputs.version }}.tar.gz s3://terminus-os-install${{ secrets.REPO_PATH }}install-wizard-v${{ needs.daily-version.outputs.version }}.tar.gz --acl=public-read && \
          echo "md5sum=$(awk '{print $1}' install-wizard-v${{ needs.daily-version.outputs.version }}.md5sum.txt)" >> "$GITHUB_OUTPUT"


@@ -139,6 +152,7 @@ jobs:
          cp .dist/install-wizard/install.sh build/base-package
          cp build/base-package/install.sh build/base-package/publicInstaller.sh
          cp .dist/install-wizard/install.ps1 build/base-package
          cp .dist/install-wizard/joincluster.sh build/base-package

      - name: Release public files
        uses: softprops/action-gh-release@v1
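Reviewer note: the checksum step in the `release-daily.yaml` diff above writes an `md5sum` file next to the archive and then publishes only the digest (the first whitespace-separated field) as a step output. Below is a minimal local sketch of that extraction, assuming GNU `md5sum` and `awk` are available. The `checksum_of` helper and the temporary file are hypothetical and exist only for illustration; they are not part of the workflow.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not in the repository): return only the hex digest
# from `md5sum` output, which has the form "<digest>  <file name>".
checksum_of() {
  md5sum "$1" | awk '{print $1}'
}

# Illustration with a throwaway file rather than the real install-wizard archive.
tmp="$(mktemp)"
printf 'hello\n' > "$tmp"
digest="$(checksum_of "$tmp")"

# In a workflow this line would be appended to "$GITHUB_OUTPUT";
# here it is simply printed.
echo "md5sum=$digest"
rm -f "$tmp"
```

Keeping only the first field matters because the second field is the file name, which would otherwise leak into the output value that later jobs compare against.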

71

.github/workflows/release-mdns-agent.yaml

vendored

Normal file

@@ -0,0 +1,71 @@
name: Publish mdns-agent to Dockerhub

on:
  workflow_dispatch:
    inputs:
      version:
        type: string
        required: true

jobs:
  update_dockerhub:
    runs-on: ubuntu-latest
    steps:
      - name: Set up QEMU
        uses: docker/setup-qemu-action@v3
      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Log in to Docker Hub
        uses: docker/login-action@v2
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_PASS }}

      - name: Build and push Docker image
        uses: docker/build-push-action@v3
        with:
          push: true
          context: ./daemon
          tags: beclab/olaresd:${{ inputs.version }}
          file: ./daemon/docker/Dockerfile.agent
          platforms: linux/amd64,linux/arm64

  upload_release_package:
    runs-on: ubuntu-24.04
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 1
      - name: Add Local Git Tag For GoReleaser
        run: git tag ${{ inputs.version }}
        continue-on-error: true
      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: 1.22.1

      - name: Install x86_64 cross-compiler
        run: sudo apt-get update && sudo apt-get install -y build-essential

      - name: Install ARM cross-compiler
        run: sudo apt-get update && sudo apt-get install -y gcc-aarch64-linux-gnu

      - name: Run GoReleaser
        uses: goreleaser/goreleaser-action@v3.1.0
        with:
          distribution: goreleaser
          version: v1.18.2
          args: release --clean --skip-validate -f .goreleaser.agent.yml
          workdir: './daemon'

      - name: Upload to CDN
        env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: 'us-east-1'
        run: |
          cd daemon/output && for file in *.tar.gz; do
            aws s3 cp "$file" s3://terminus-os-install/$file --acl=public-read
          done

19

.github/workflows/release.yaml

vendored

@@ -15,6 +15,14 @@ jobs:
    secrets: inherit
    with:
      version: ${{ github.event.inputs.tags }}
      ref: ${{ github.event.inputs.tags }}

  release-daemon:
    uses: ./.github/workflows/release-daemon.yaml
    secrets: inherit
    with:
      version: ${{ github.event.inputs.tags }}
      ref: ${{ github.event.inputs.tags }}

  push:
    runs-on: ubuntu-22.04
@@ -29,6 +37,7 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: 'us-east-1'
          VERSION: ${{ github.event.inputs.tags }}
        run: |
          bash build/image-manifest.sh && bash build/upload-images.sh .manifest/images.mf

@@ -45,12 +54,13 @@ jobs:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          AWS_DEFAULT_REGION: 'us-east-1'
          VERSION: ${{ github.event.inputs.tags }}
        run: |
          export PATH=$PATH:/usr/local/bin:/home/ubuntu/.local/bin
          bash build/image-manifest.sh && bash build/upload-images.sh .manifest/images.mf linux/arm64

  upload-package:
    needs: [push, push-arm64]
    needs: [push, push-arm64, release-daemon]
    runs-on: ubuntu-latest

    steps:
@@ -70,8 +80,8 @@ jobs:
          AWS_DEFAULT_REGION: 'us-east-1'
        run: |
          md5sum install-wizard-v${{ github.event.inputs.tags }}.tar.gz > install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt && \
          aws s3 cp install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt s3://terminus-os-install/install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt --acl=public-read && \
          aws s3 cp install-wizard-v${{ github.event.inputs.tags }}.tar.gz s3://terminus-os-install/install-wizard-v${{ github.event.inputs.tags }}.tar.gz --acl=public-read
          aws s3 cp install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt s3://terminus-os-install${{ secrets.REPO_PATH }}install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt --acl=public-read && \
          aws s3 cp install-wizard-v${{ github.event.inputs.tags }}.tar.gz s3://terminus-os-install${{ secrets.REPO_PATH }}install-wizard-v${{ github.event.inputs.tags }}.tar.gz --acl=public-read

  release:
    runs-on: ubuntu-latest
@@ -91,7 +101,7 @@ jobs:
      - name: Get checksum
        id: vars
        run: |
          echo "version_md5sum=$(curl -sSfL https://dc3p1870nn3cj.cloudfront.net/install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt|awk '{print $1}')" >> $GITHUB_OUTPUT
          echo "version_md5sum=$(curl -sSfL https://dc3p1870nn3cj.cloudfront.net${{ secrets.REPO_PATH }}install-wizard-v${{ github.event.inputs.tags }}.md5sum.txt|awk '{print $1}')" >> $GITHUB_OUTPUT

      - name: Update checksum
        uses: eball/write-tag-to-version-file@latest
@@ -111,6 +121,7 @@ jobs:
          cp build/base-package/install.sh build/base-package/publicInstaller.latest
          cp .dist/install-wizard/install.ps1 build/insbase-packagetaller
          cp build/base-package/install.ps1 build/base-package/publicInstaller.latest.ps1
          cp .dist/install-wizard/joincluster.sh build/base-package

      - name: Release public files
        uses: softprops/action-gh-release@v1

2

.gitignore

vendored

@@ -30,3 +30,5 @@ olares-cli-*.tar.gz
.vscode
.DS_Store
cli/output
daemon/output
daemon/bin

93

README.md

@@ -1,6 +1,6 @@
<div align="center">

# Olares: An Open-Source Personal Cloud OS to Reclaim Your Data<!-- omit in toc -->
# Olares: An Open-Source Personal Cloud to </br>Reclaim Your Data<!-- omit in toc -->

[](#)<br/>
[](https://github.com/beclab/olares/commits/main)
@@ -18,10 +18,6 @@

</div>

https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

*Build your local AI assistants, sync data across places, self-host your workspace, stream your own media, and more—all in your personal cloud on Olares.*

|

||||

|

||||

<p align="center">

|

||||

<a href="https://olares.com">Website</a> ·

|

||||

<a href="https://docs.olares.com">Documentation</a> ·

|

||||

@@ -34,13 +30,54 @@ https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

|

||||

>

|

||||

>*It's time for a change.*

|

||||

|

||||

|

||||

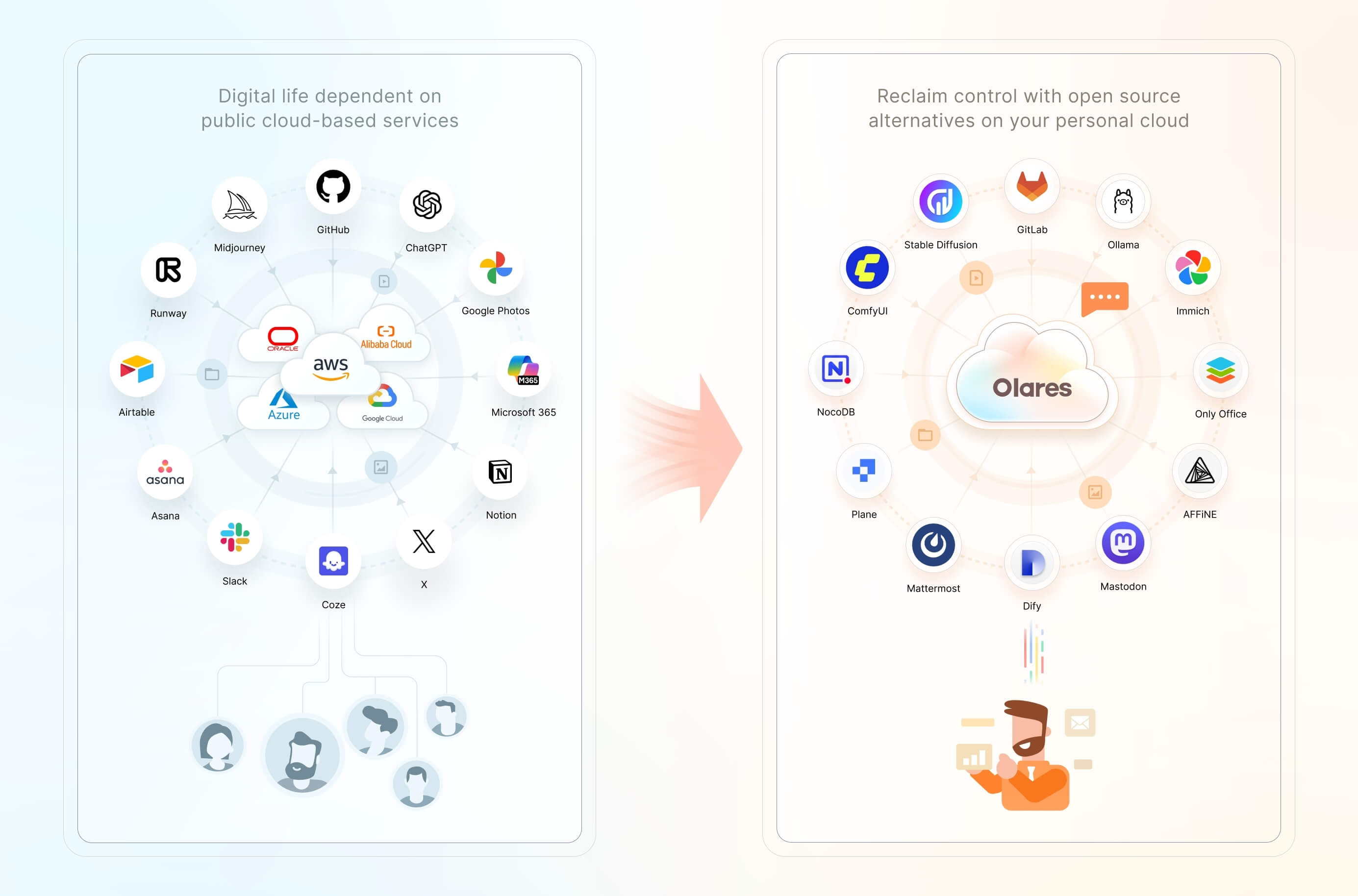

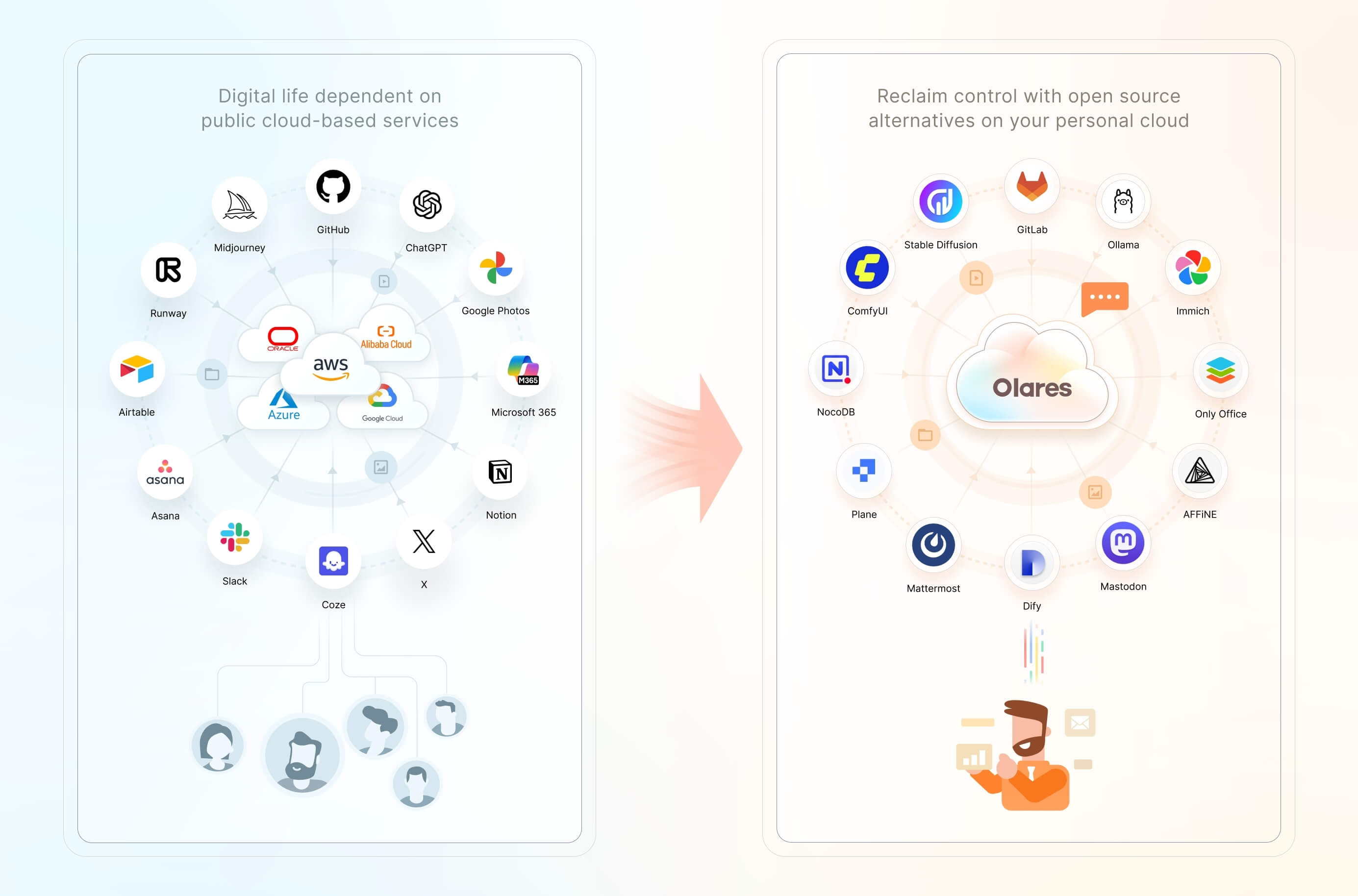

We believe you have a fundamental right to control your digital life. The most effective way to uphold this right is by hosting your data locally, on your own hardware.

|

||||

|

||||

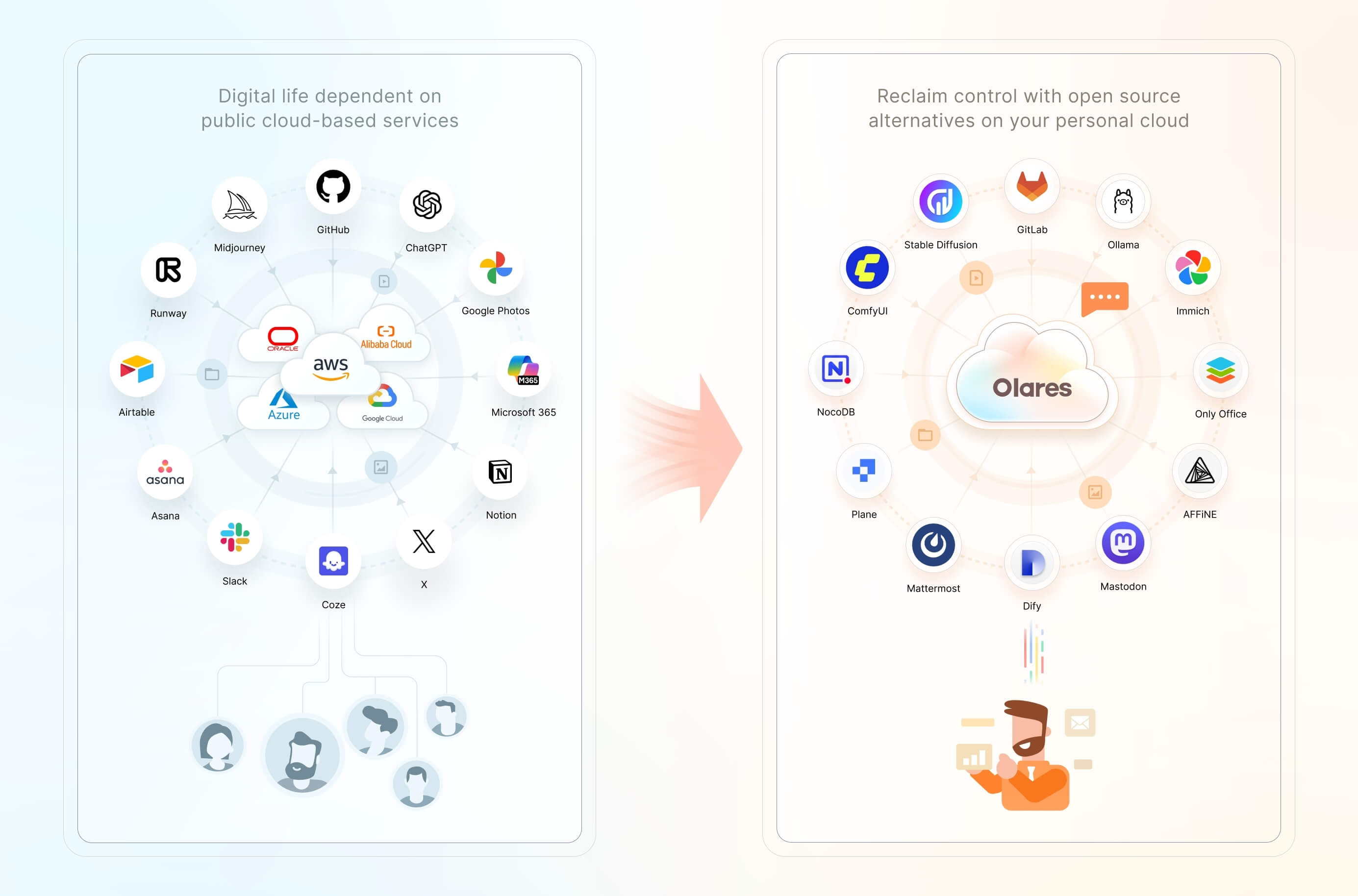

Olares is an **open-source personal cloud operating system** designed to empower you to own and manage your digital assets locally. Instead of relying on public cloud services, you can deploy powerful open-source alternatives locally on Olares, such as Ollama for hosting LLMs, SD WebUI for image generation, and Mastodon for building censor free social space. Imagine the power of the cloud, but with you in complete command.

|

||||

|

||||

> 🌟 *Star us to receive instant notifications about new releases and updates.*

|

||||

|

||||

## Key Features & Use Cases

|

||||

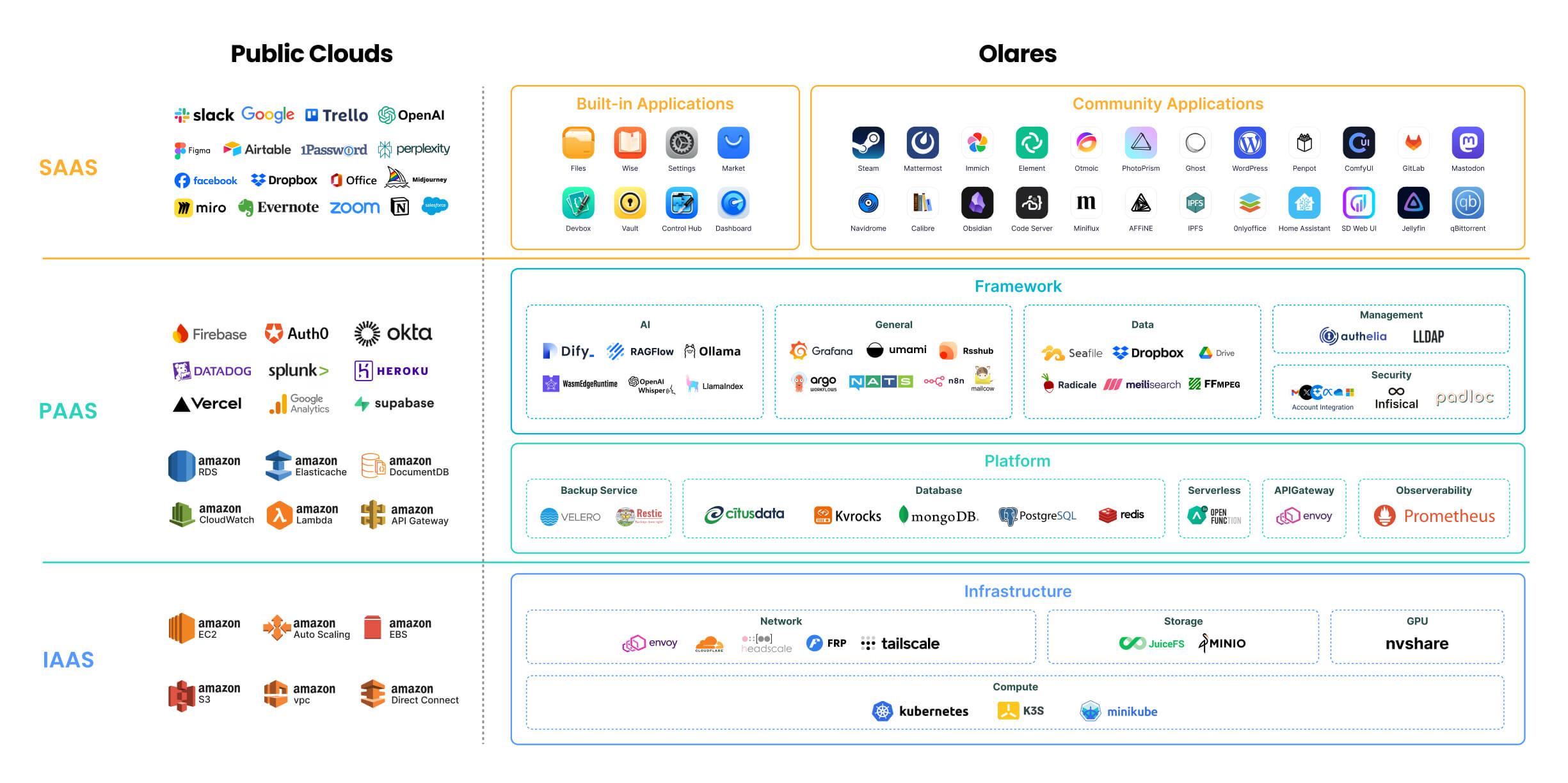

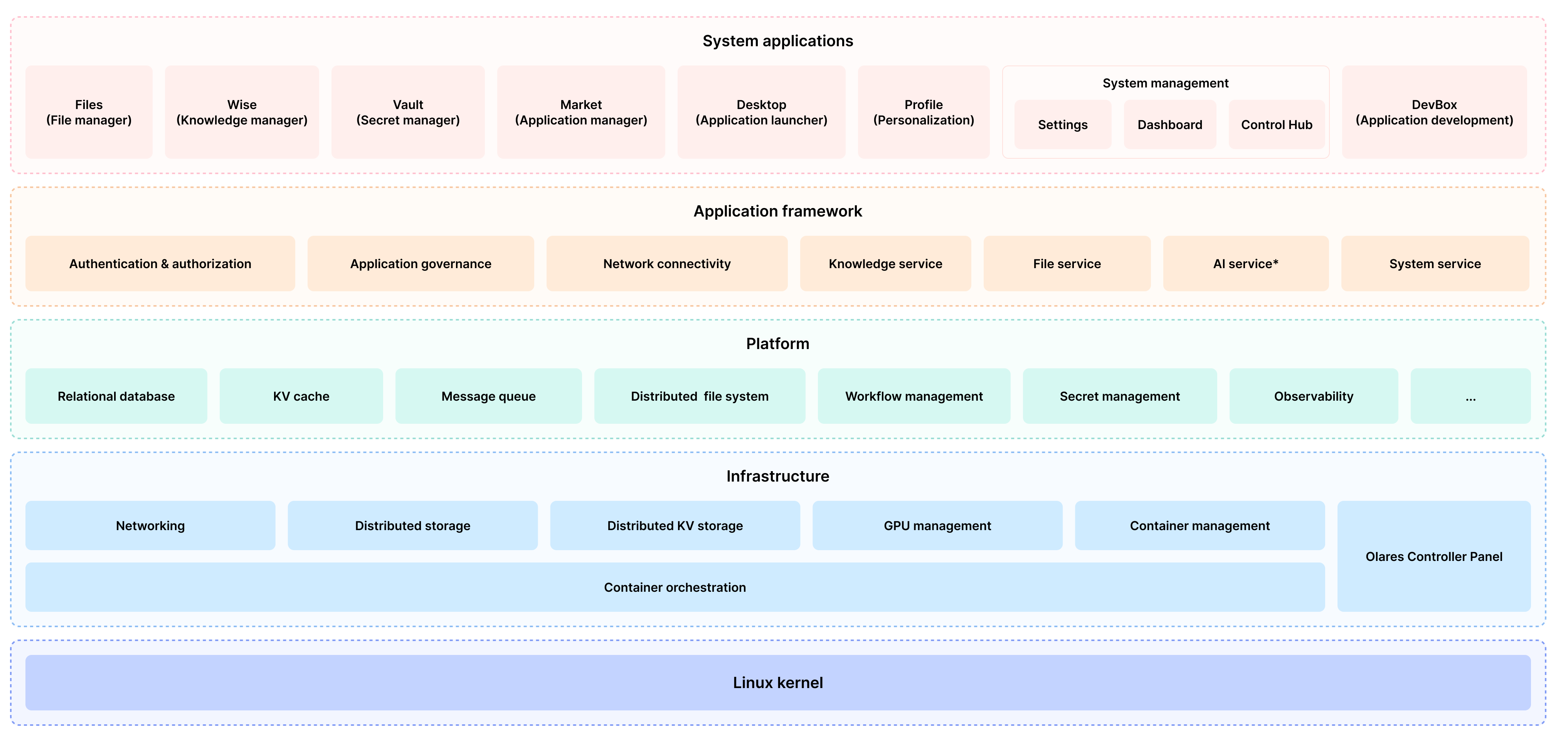

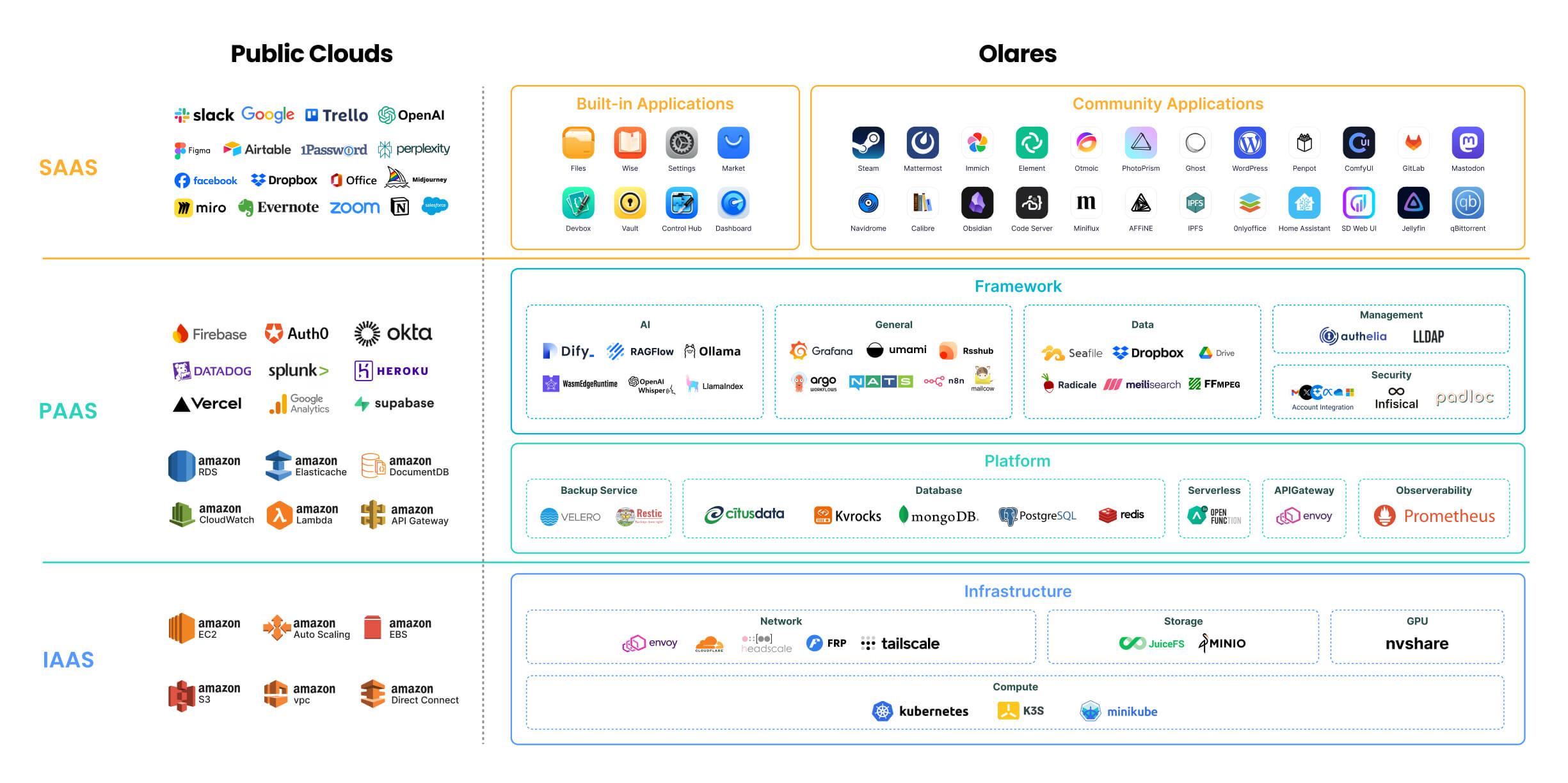

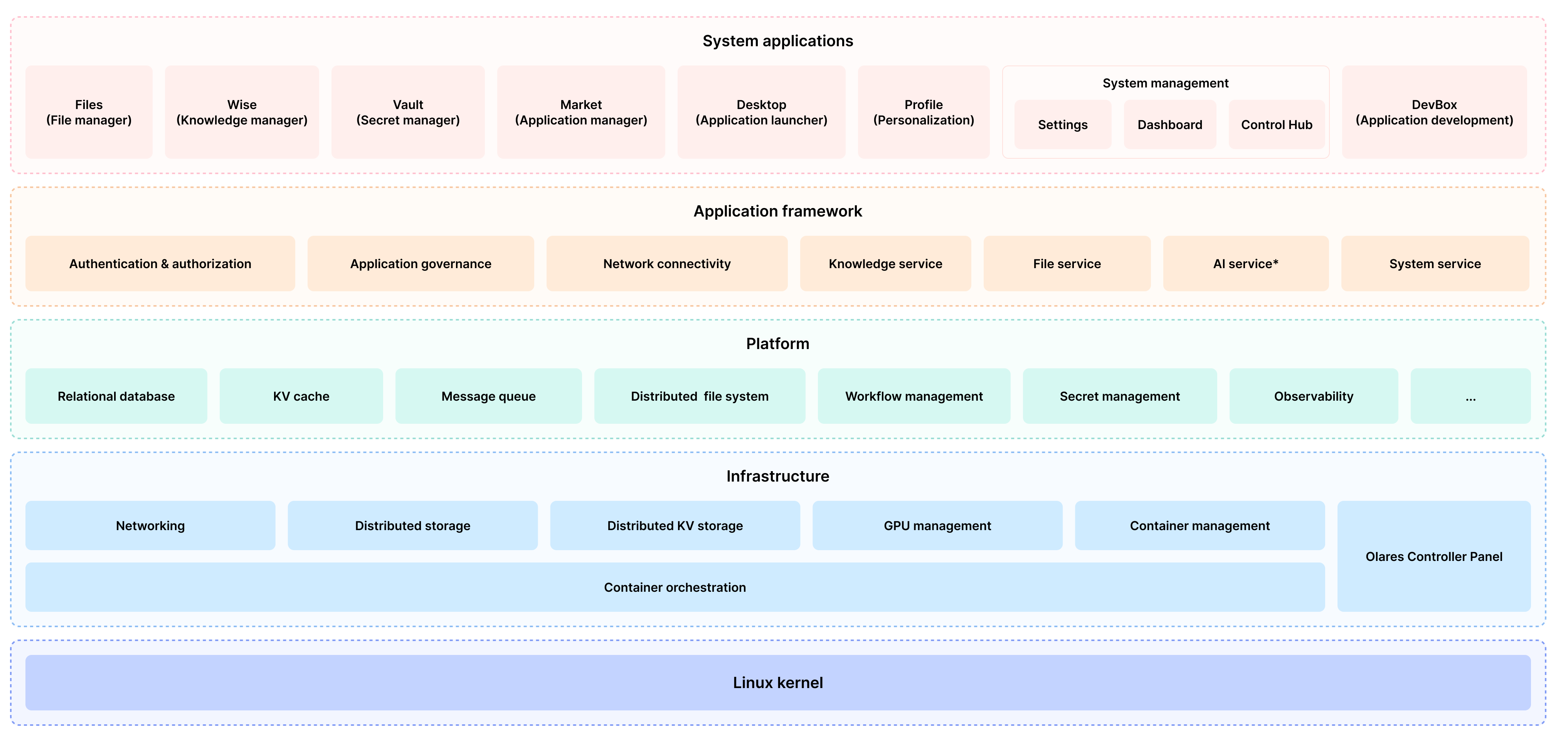

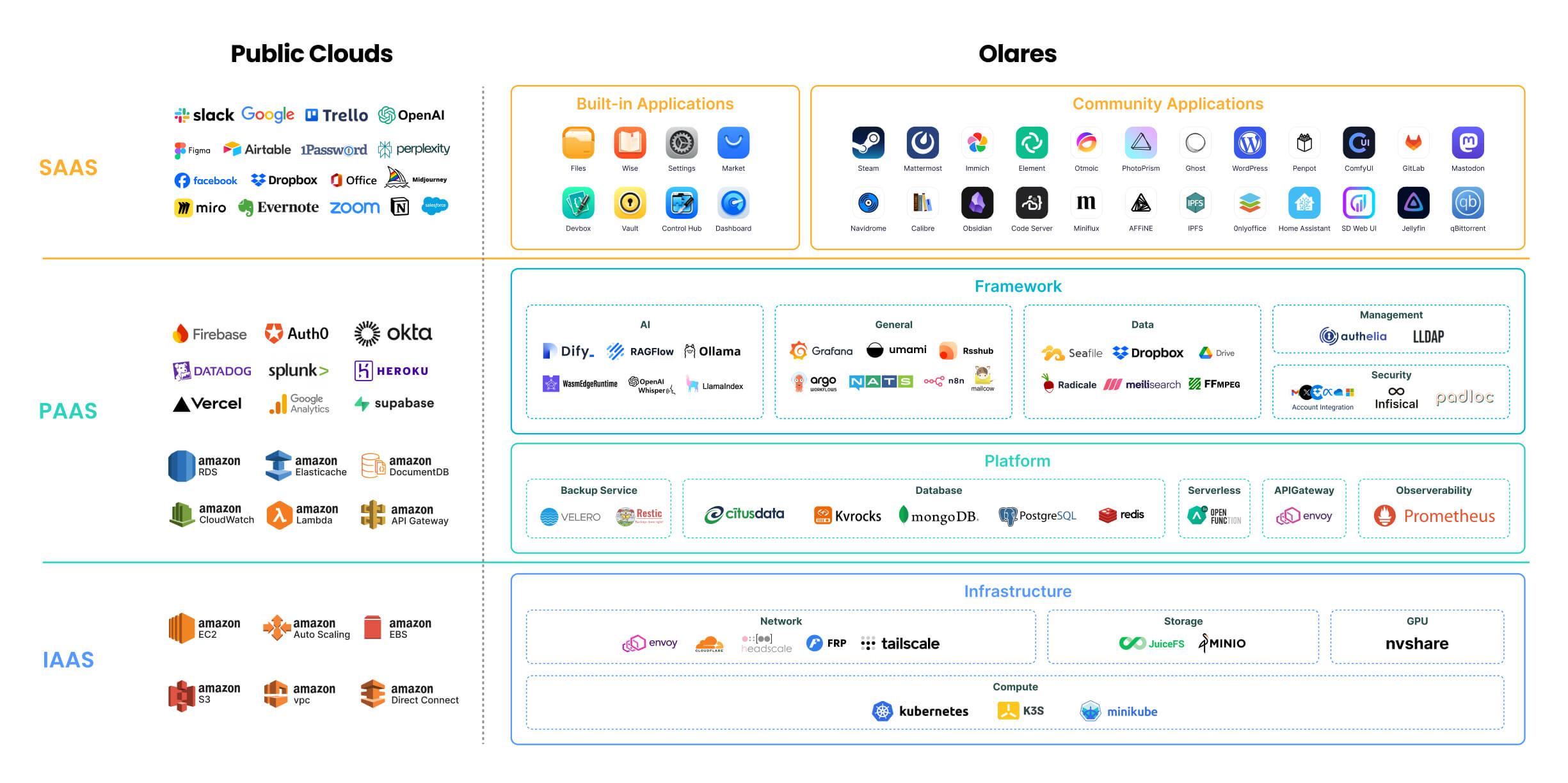

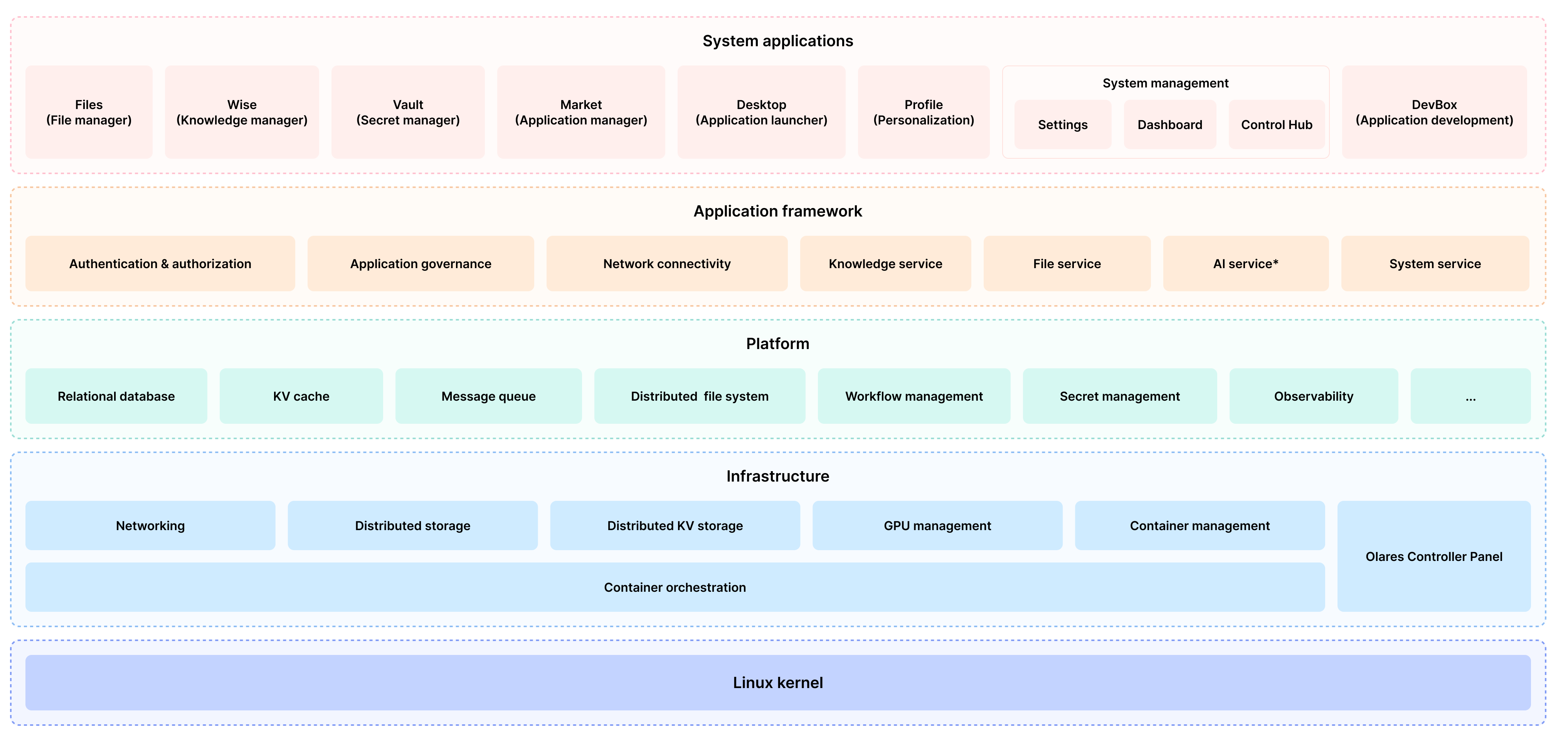

## Architecture

|

||||

|

||||

Just as Public clouds offer IaaS, PaaS, and SaaS layers, Olares provides open-source alternatives to each of these layers.

|

||||

|

||||

|

||||

|

||||

For detailed description of each component, refer to [Olares architecture](https://docs.olares.com/manual/system-architecture.html).

|

||||

|

||||

> 🔍 **How is Olares different from traditional NAS?**

|

||||

>

|

||||

> Olares focuses on building an all-in-one self-hosted personal cloud experience. Its core features and target users differ significantly from traditional Network Attached Storage (NAS) systems, which primarily focus on network storage. For more details, see [Compare Olares and NAS](https://docs.olares.com/manual/olares-vs-nas.html).

|

||||

|

||||

## Features

|

||||

|

||||

Olares offers a wide array of features designed to enhance security, ease of use, and development flexibility:

|

||||

|

||||

- **Enterprise-grade security**: Simplified network configuration using Tailscale, Headscale, Cloudflare Tunnel, and FRP.

|

||||

- **Secure and permissionless application ecosystem**: Sandboxing ensures application isolation and security.

|

||||

- **Unified file system and database**: Automated scaling, backups, and high availability.

|

||||

- **Single sign-on**: Log in once to access all applications within Olares with a shared authentication service.

|

||||

- **AI capabilities**: Comprehensive solution for GPU management, local AI model hosting, and private knowledge bases while maintaining data privacy.

|

||||

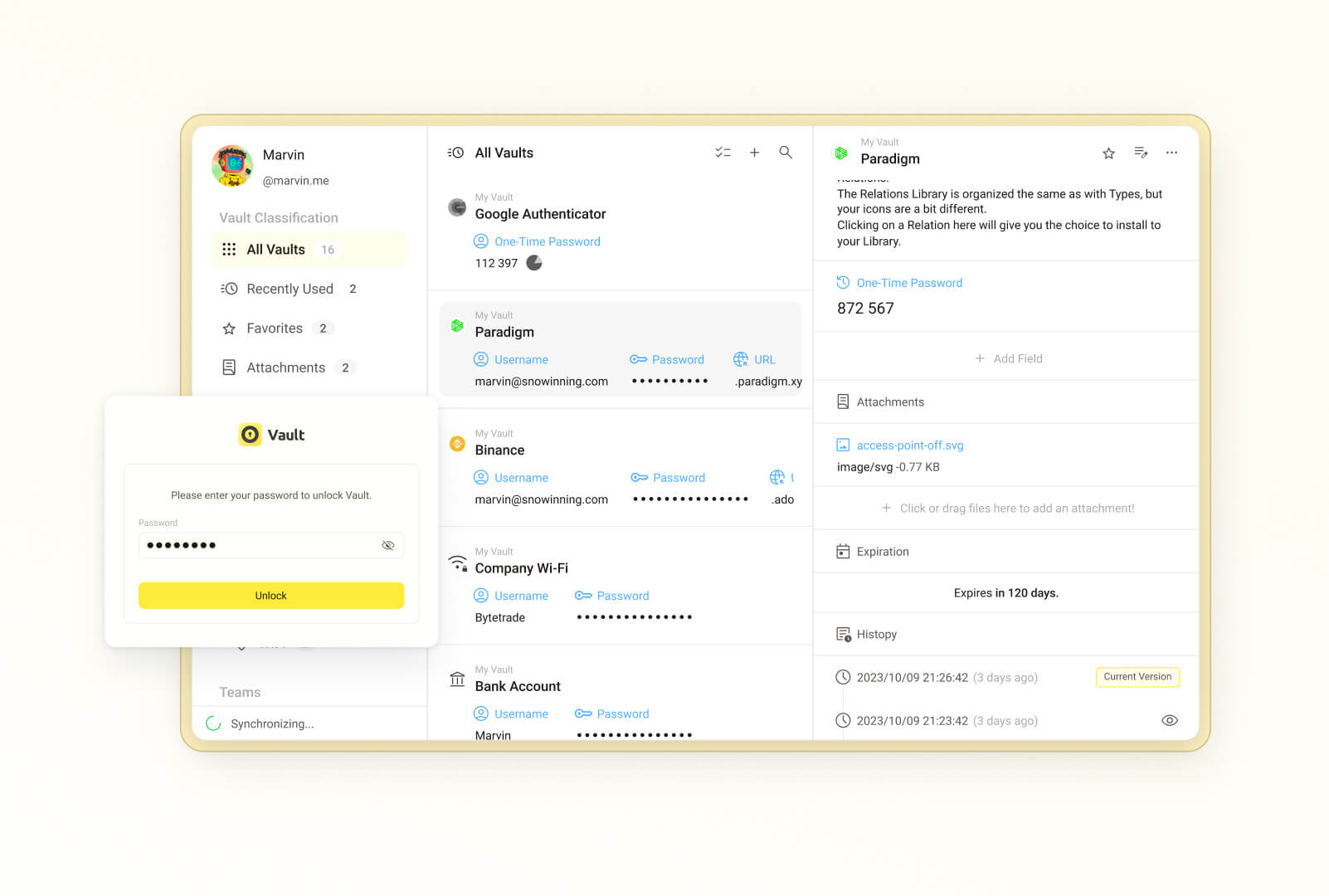

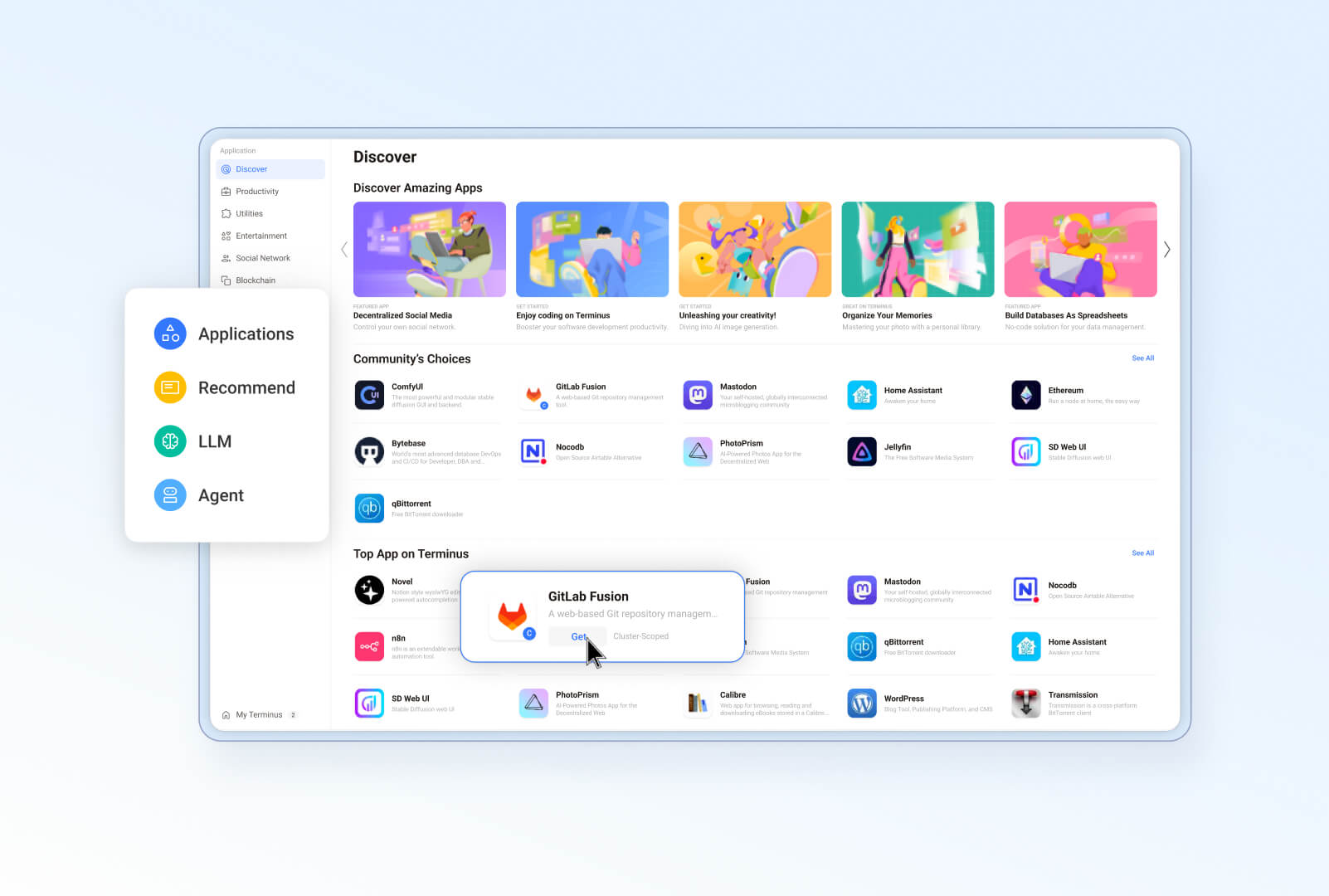

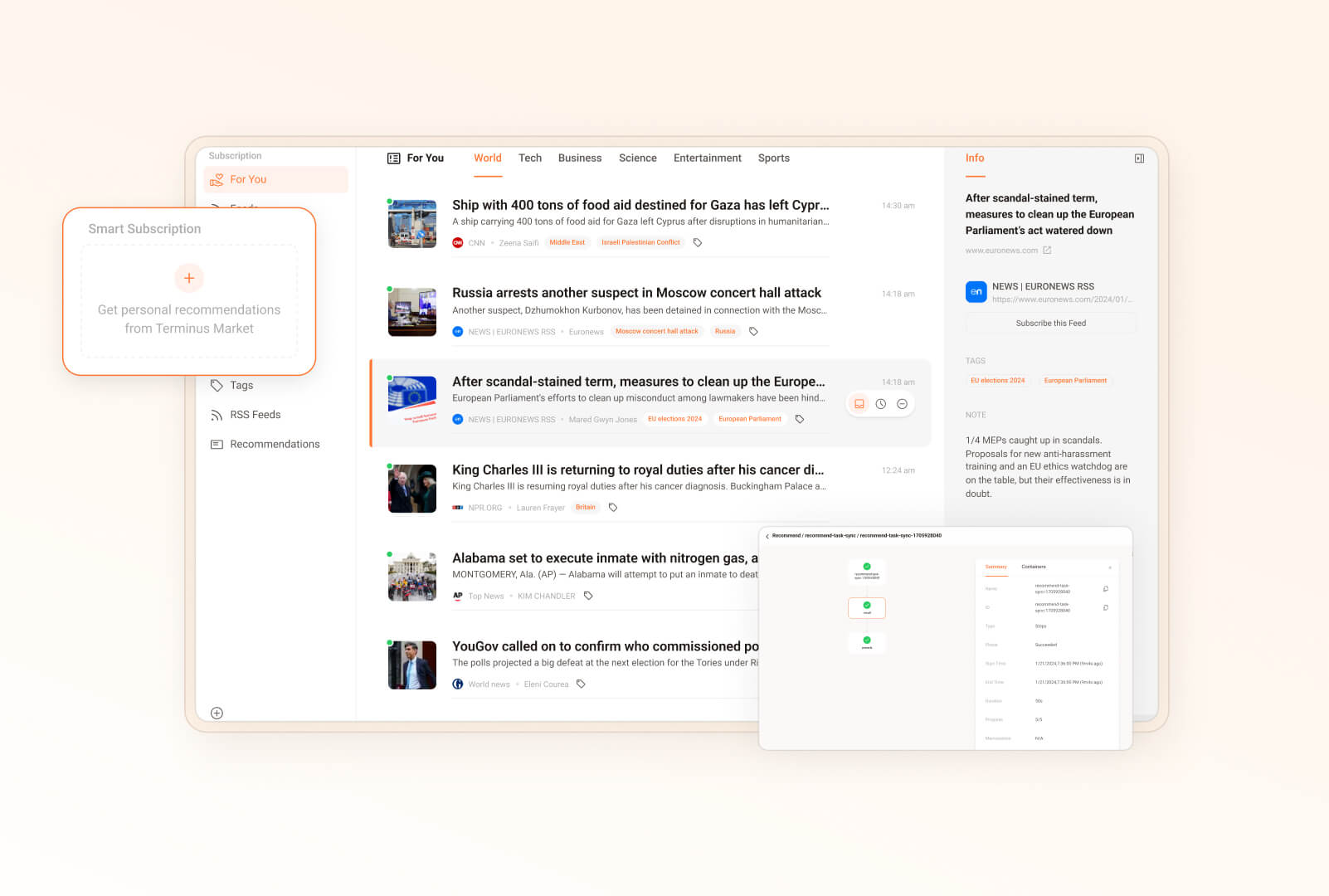

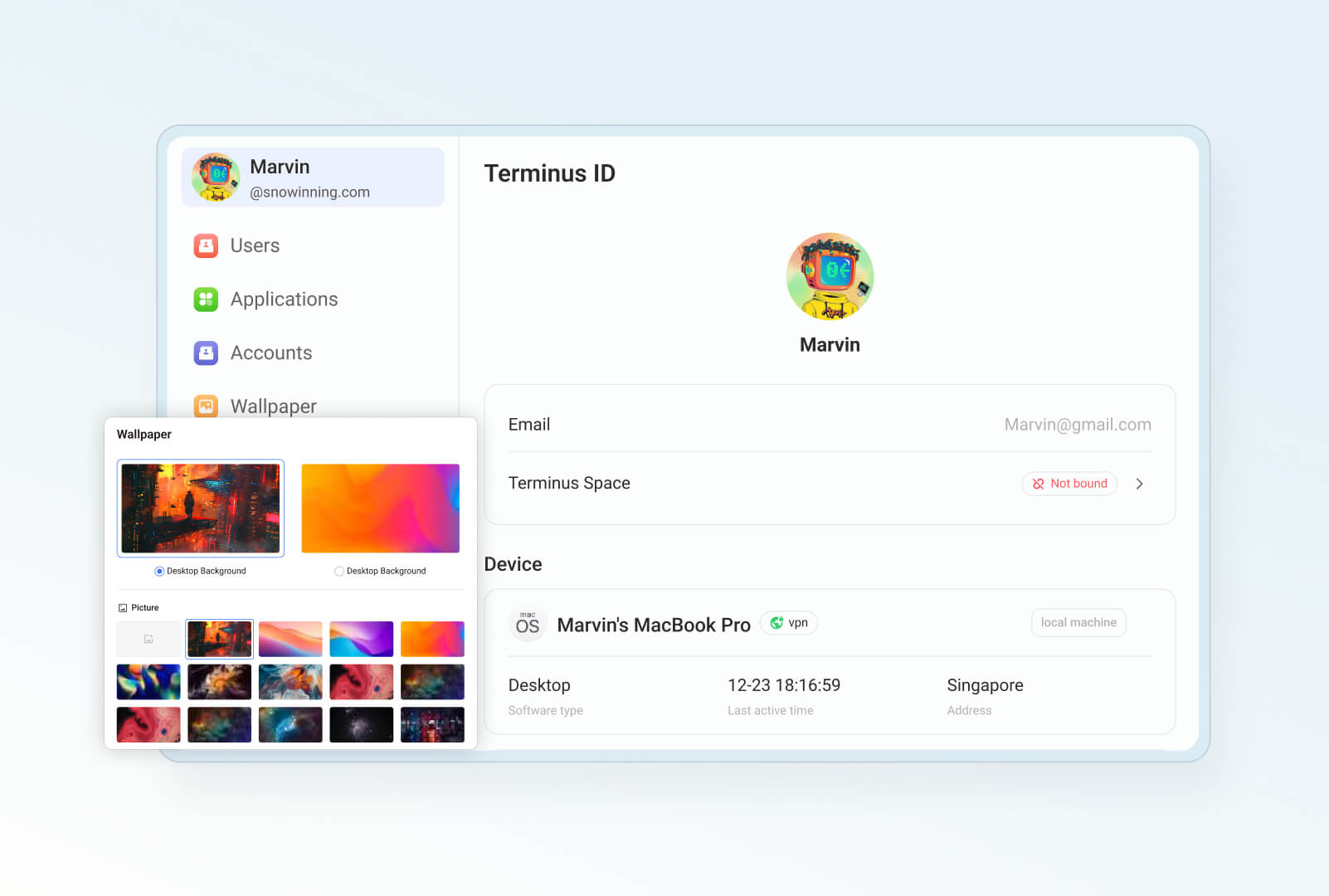

- **Built-in applications**: Includes file manager, sync drive, vault, reader, app market, settings, and dashboard.

|

||||

- **Seamless anywhere access**: Access your devices from anywhere using dedicated clients for mobile, desktop, and browsers.

|

||||

- **Development tools**: Comprehensive development tools for effortless application development and porting.

|

||||

|

||||

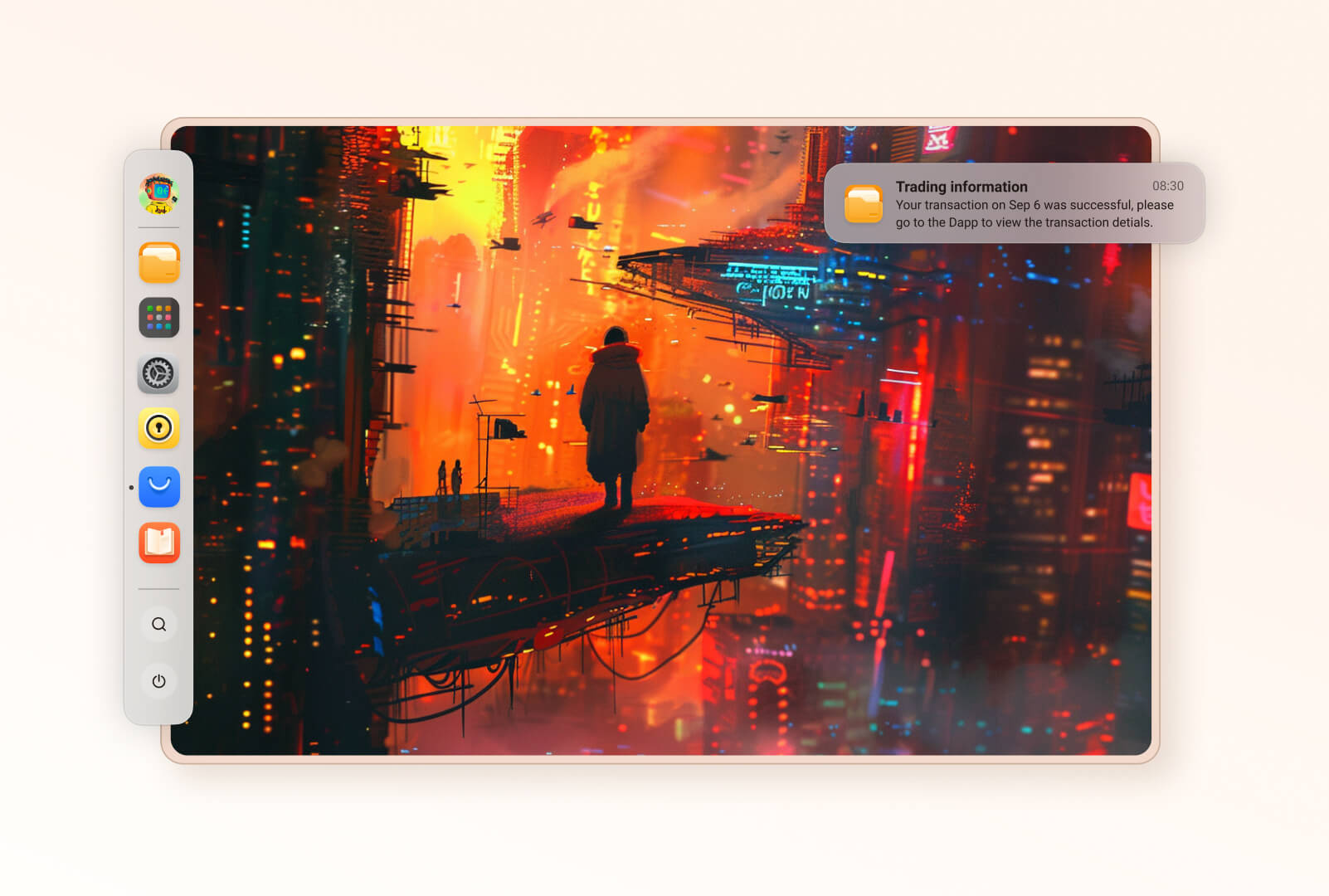

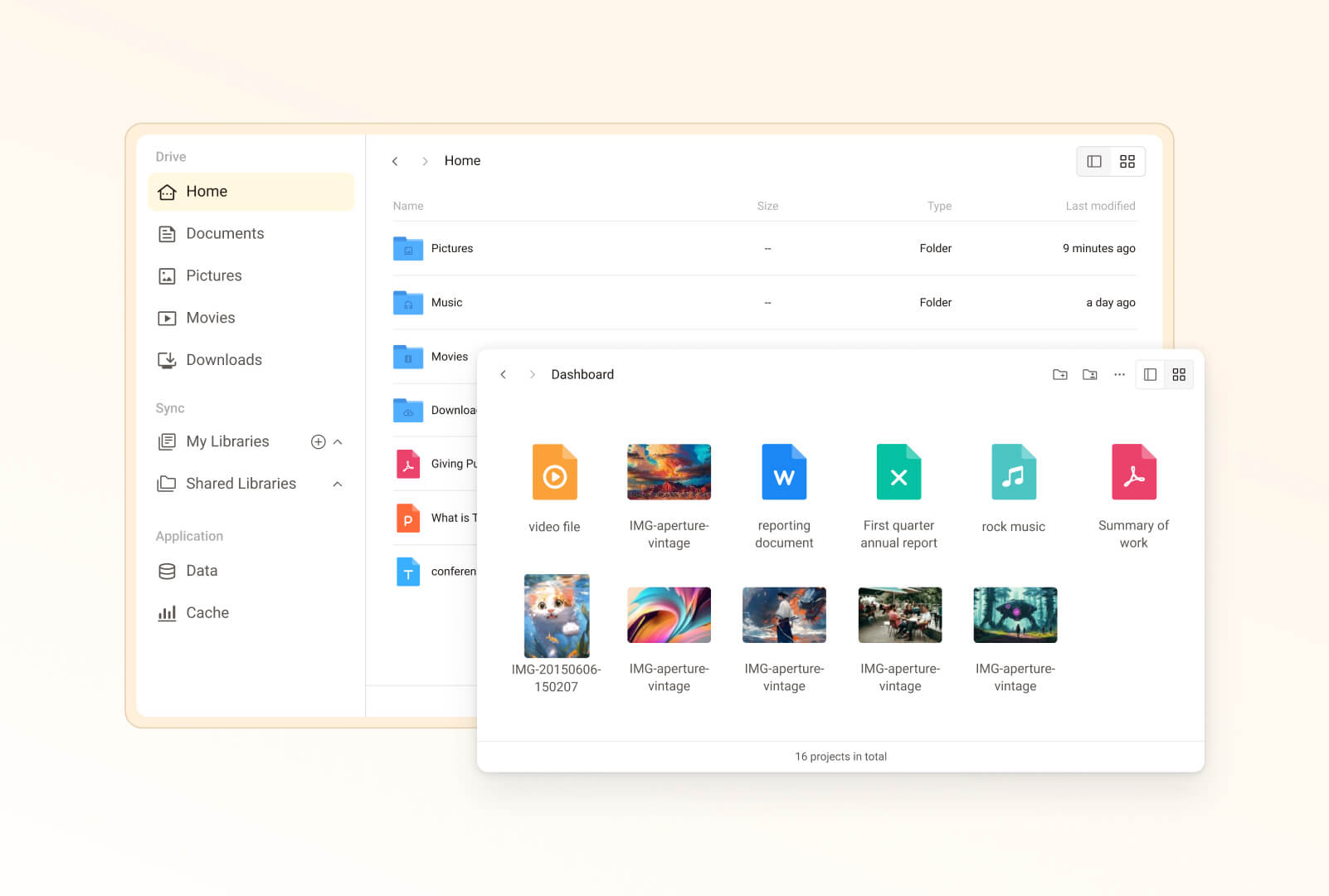

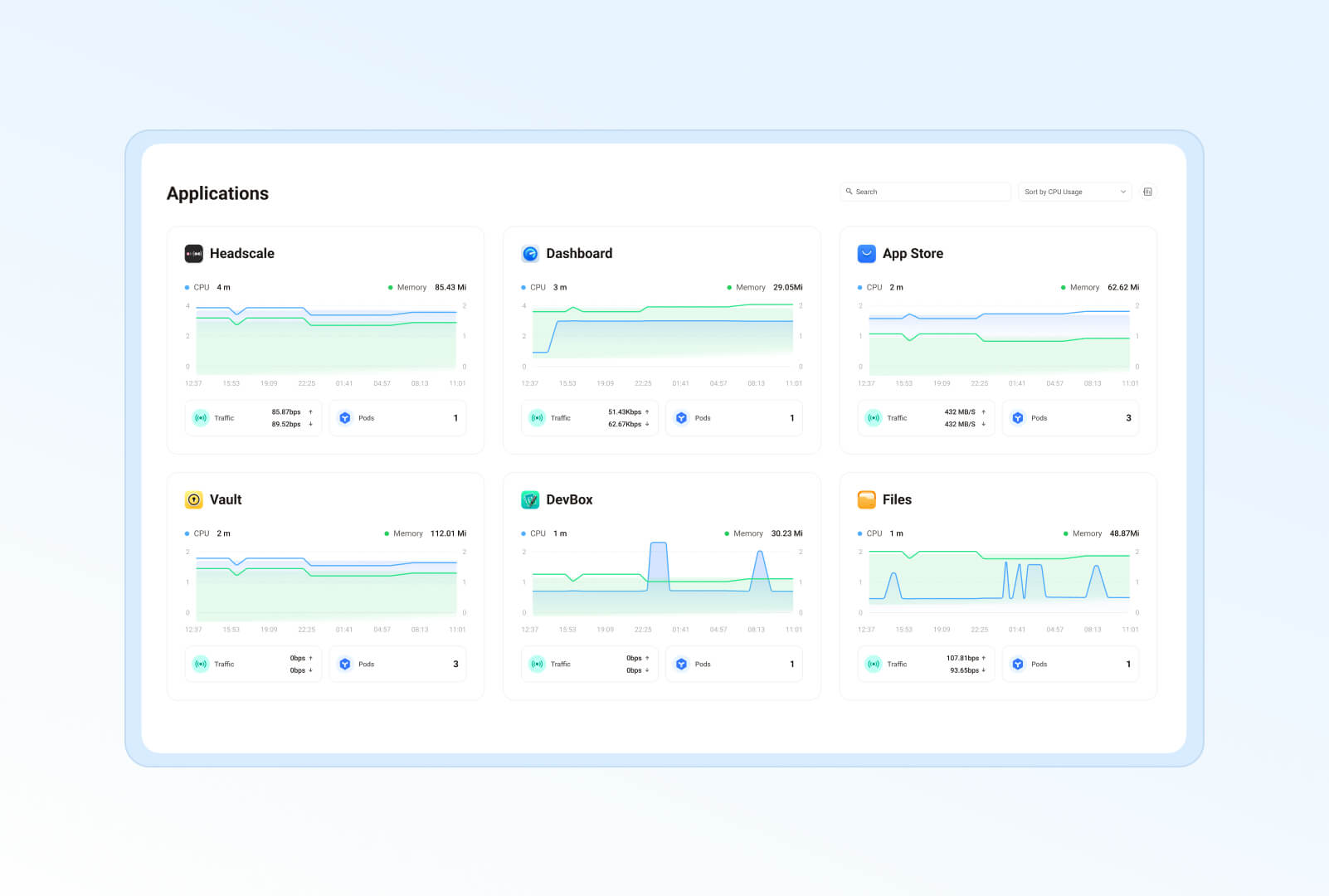

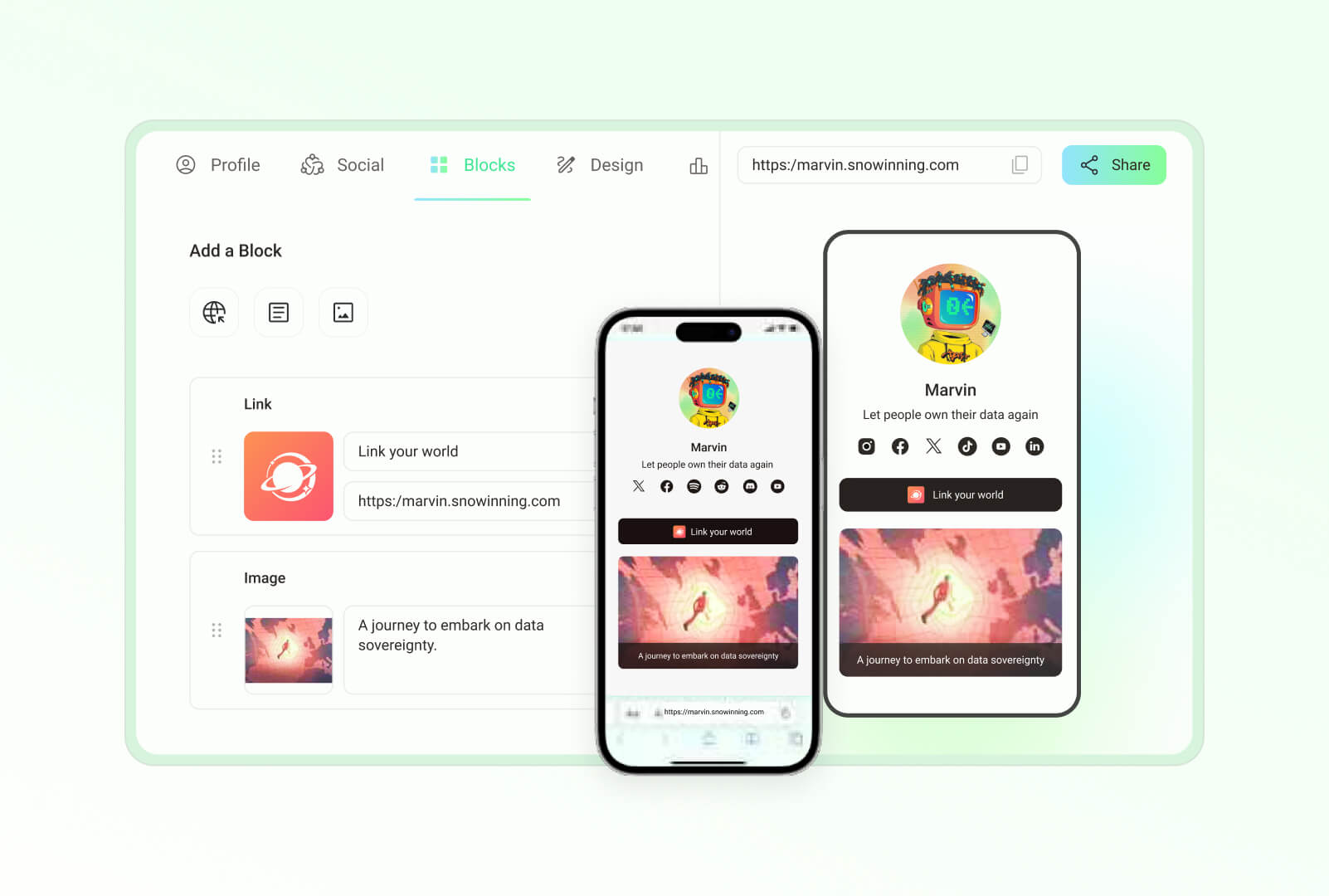

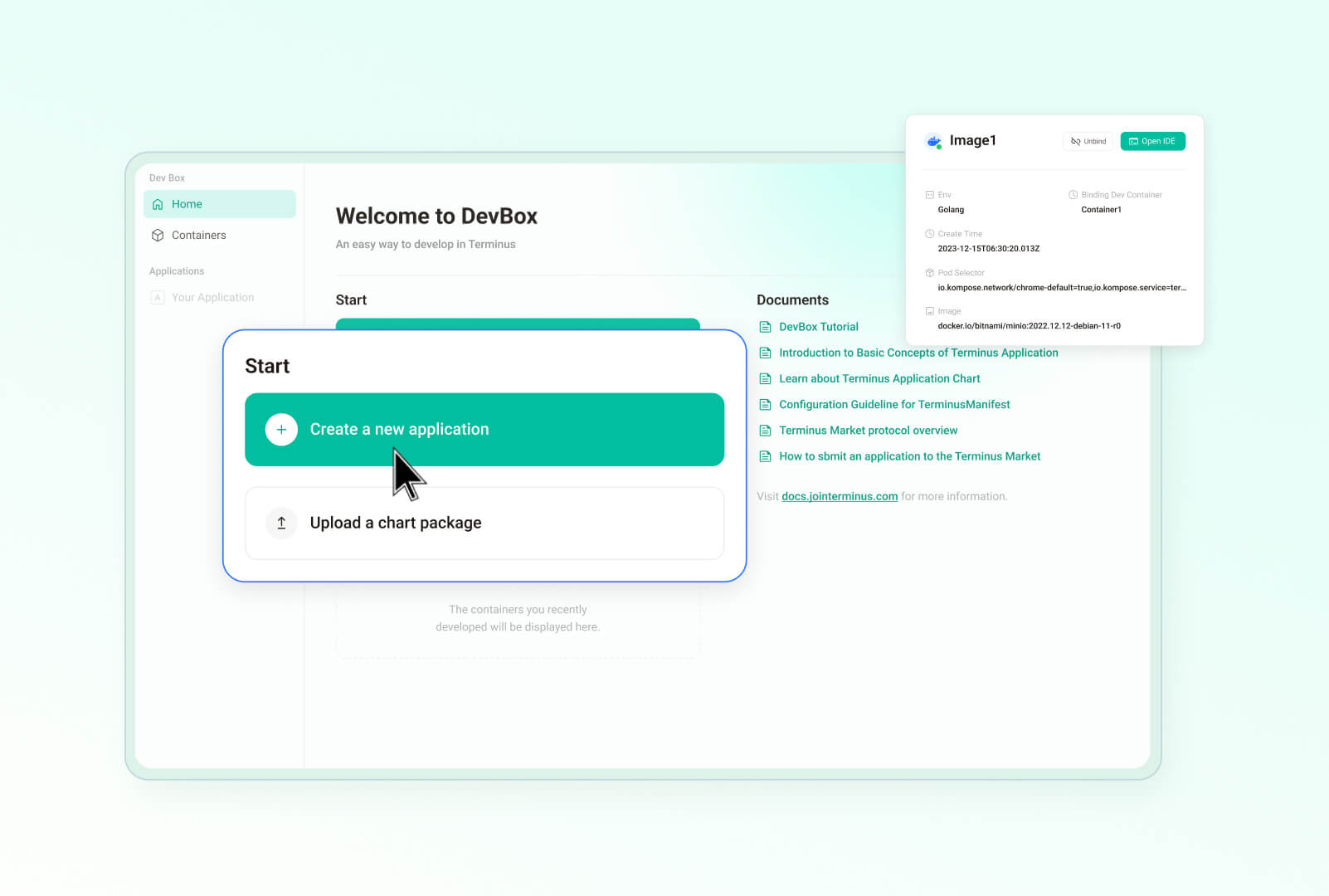

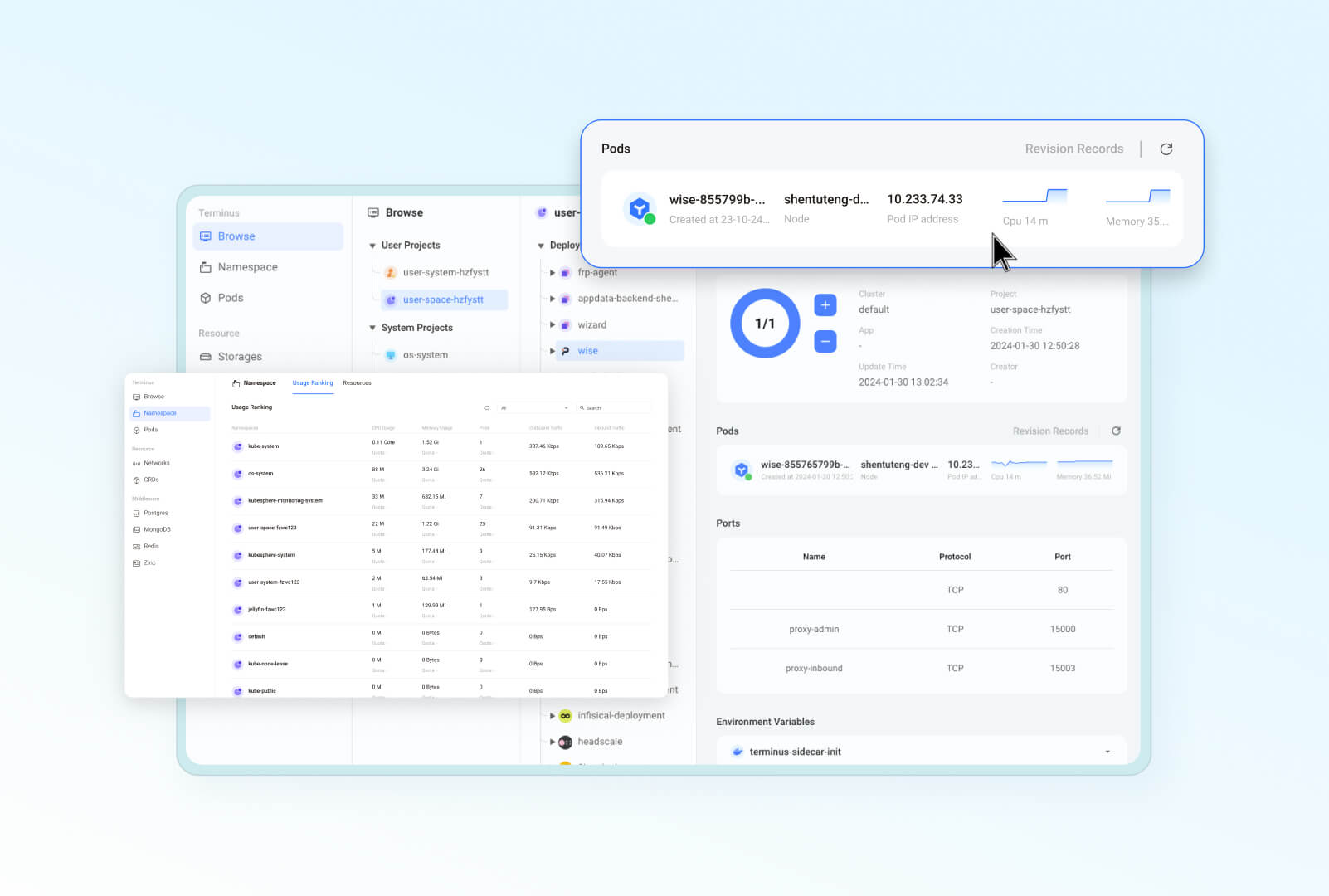

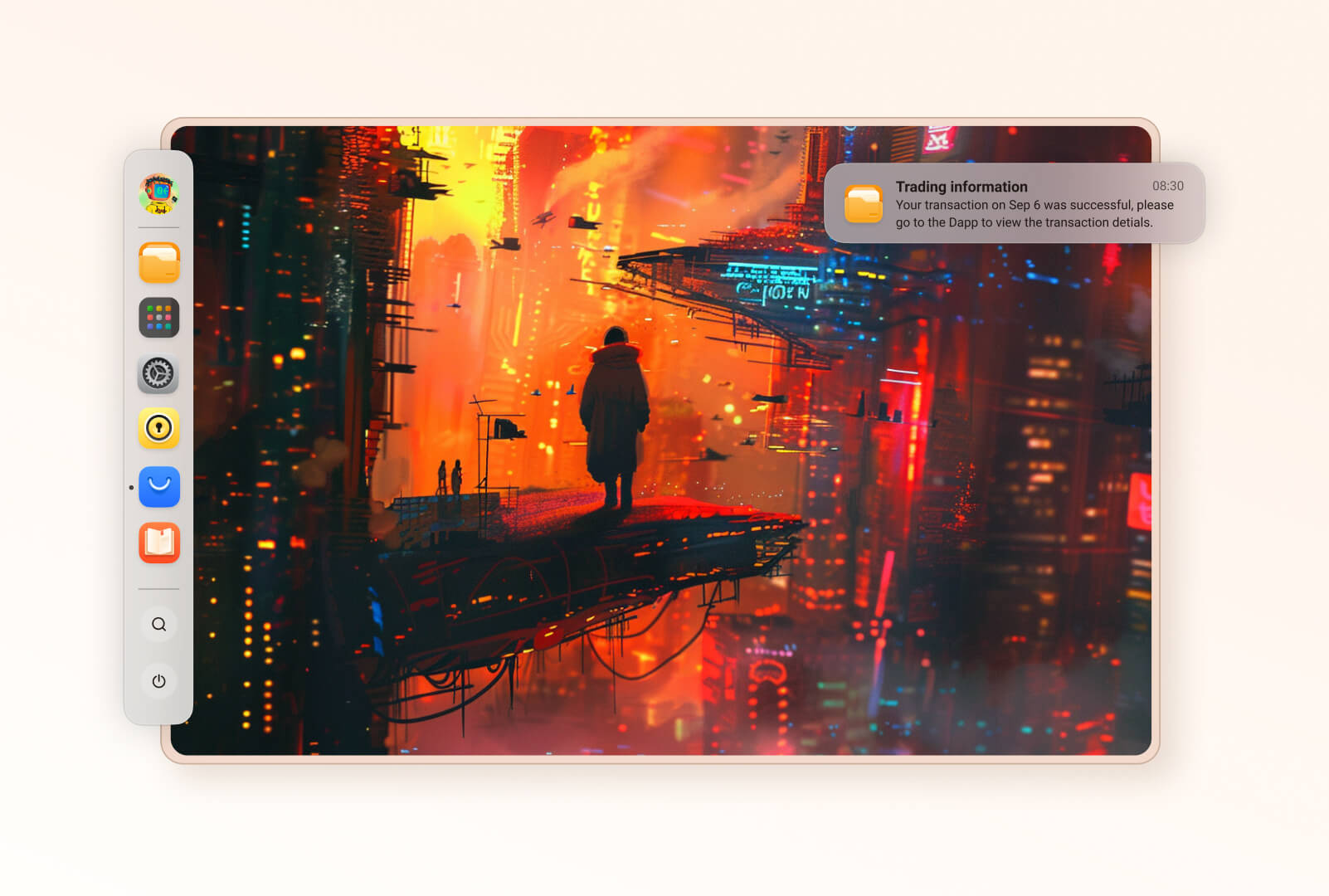

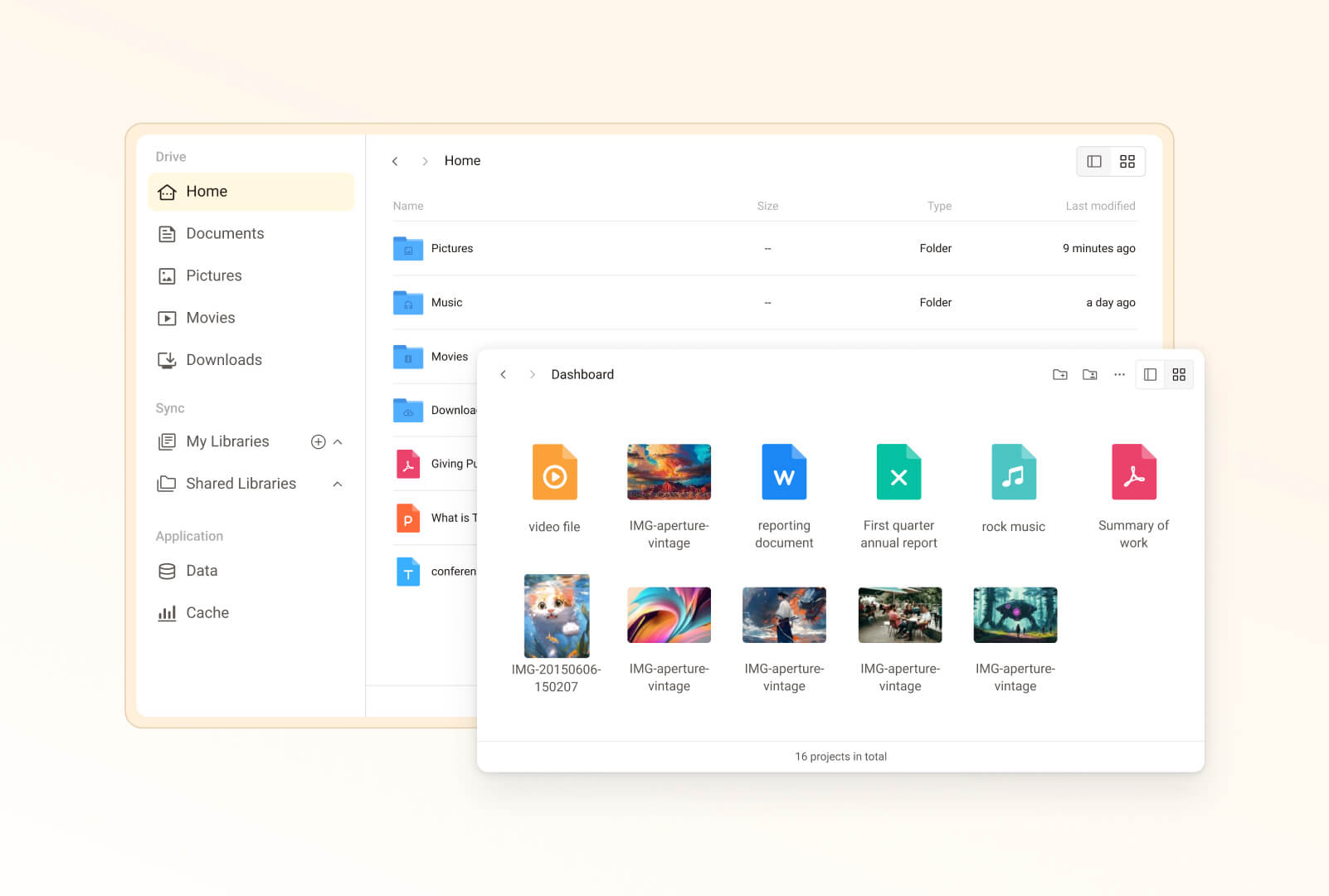

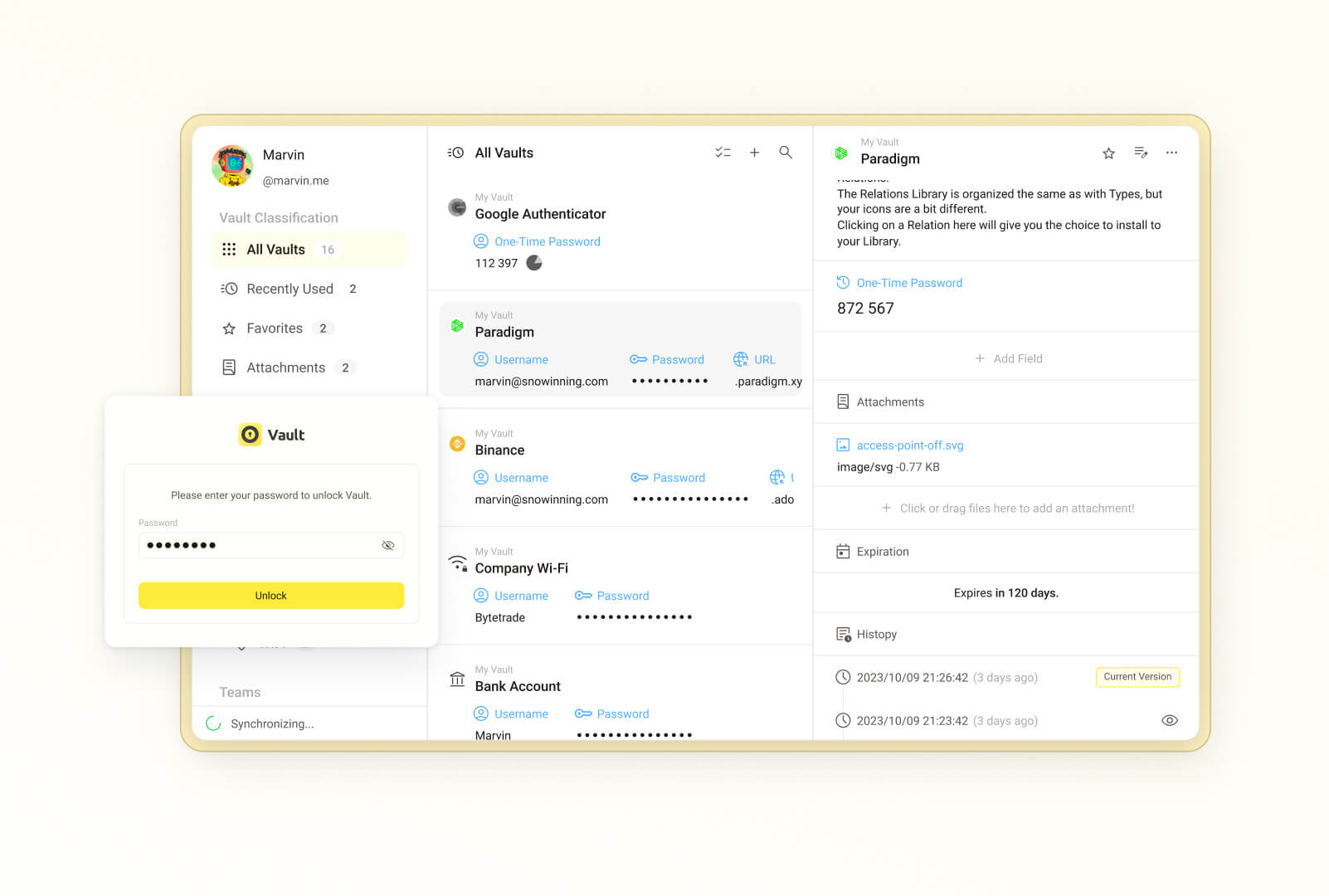

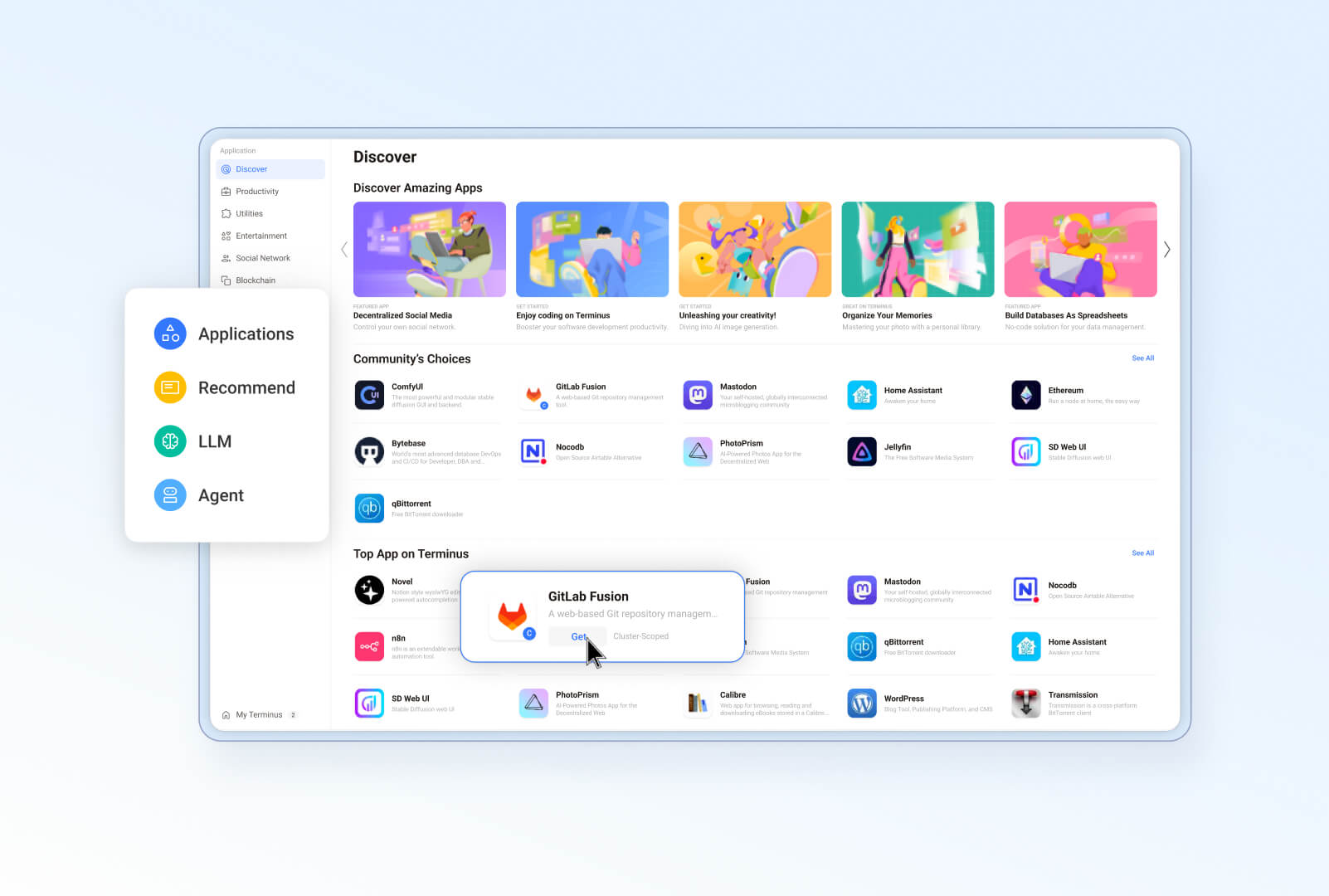

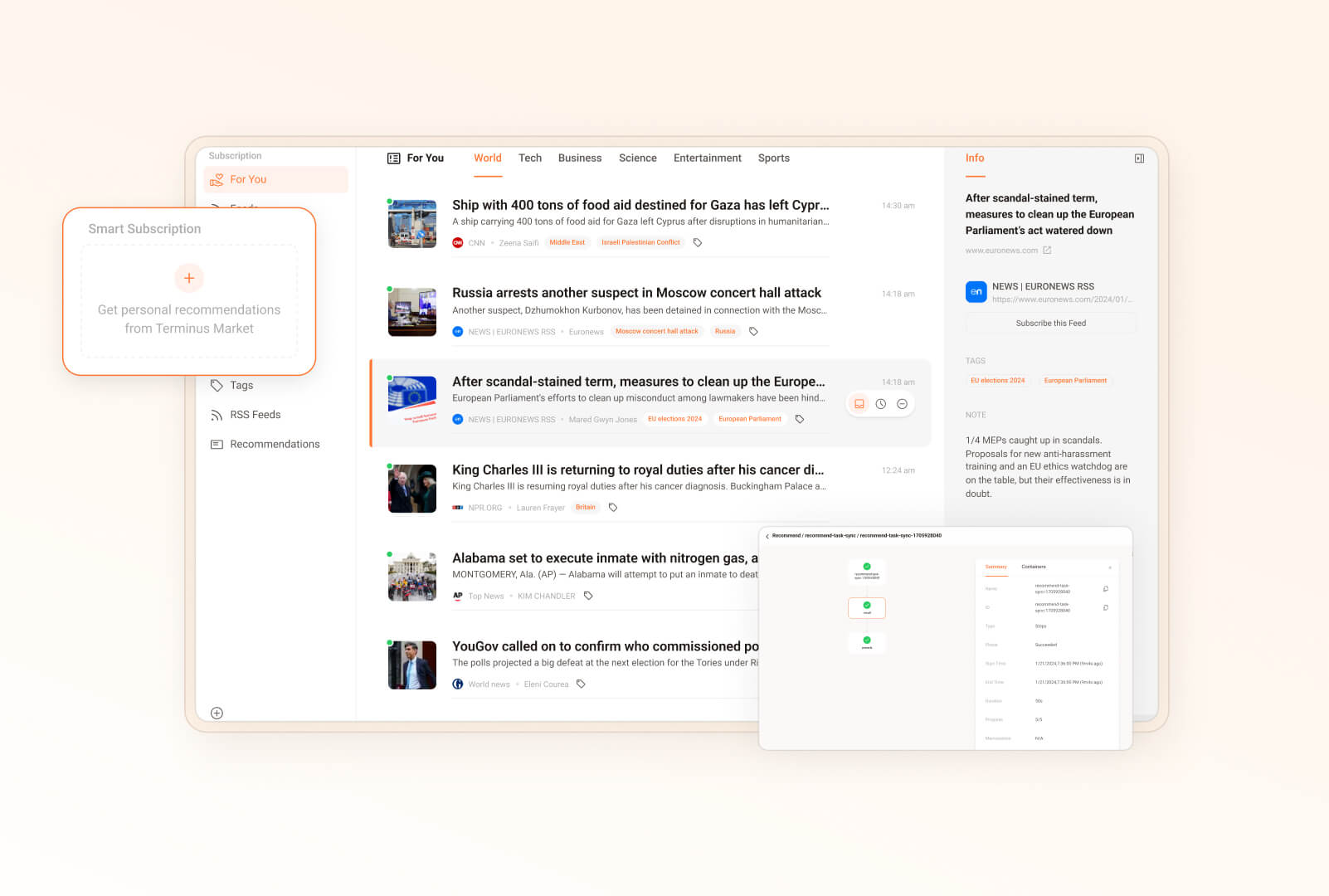

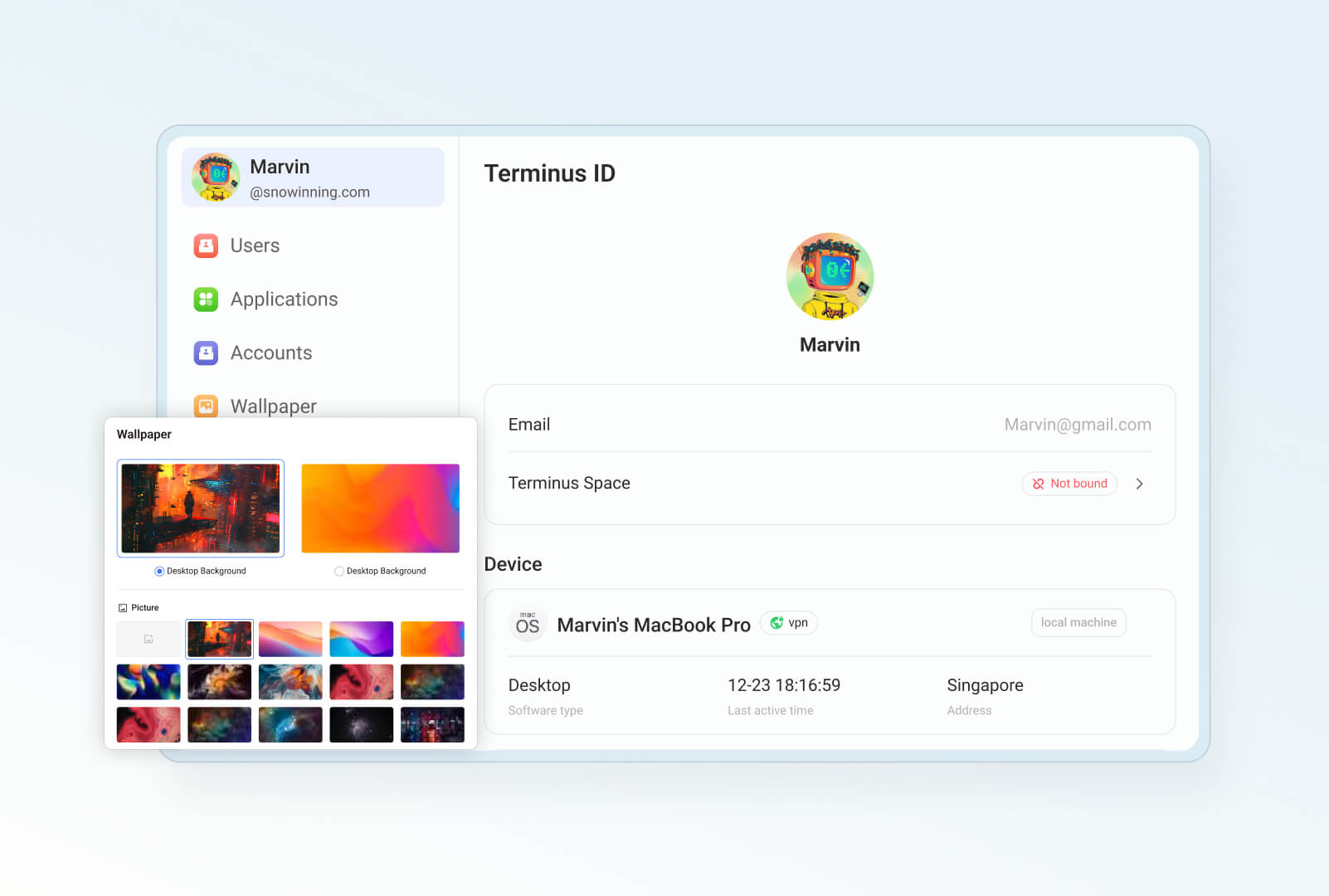

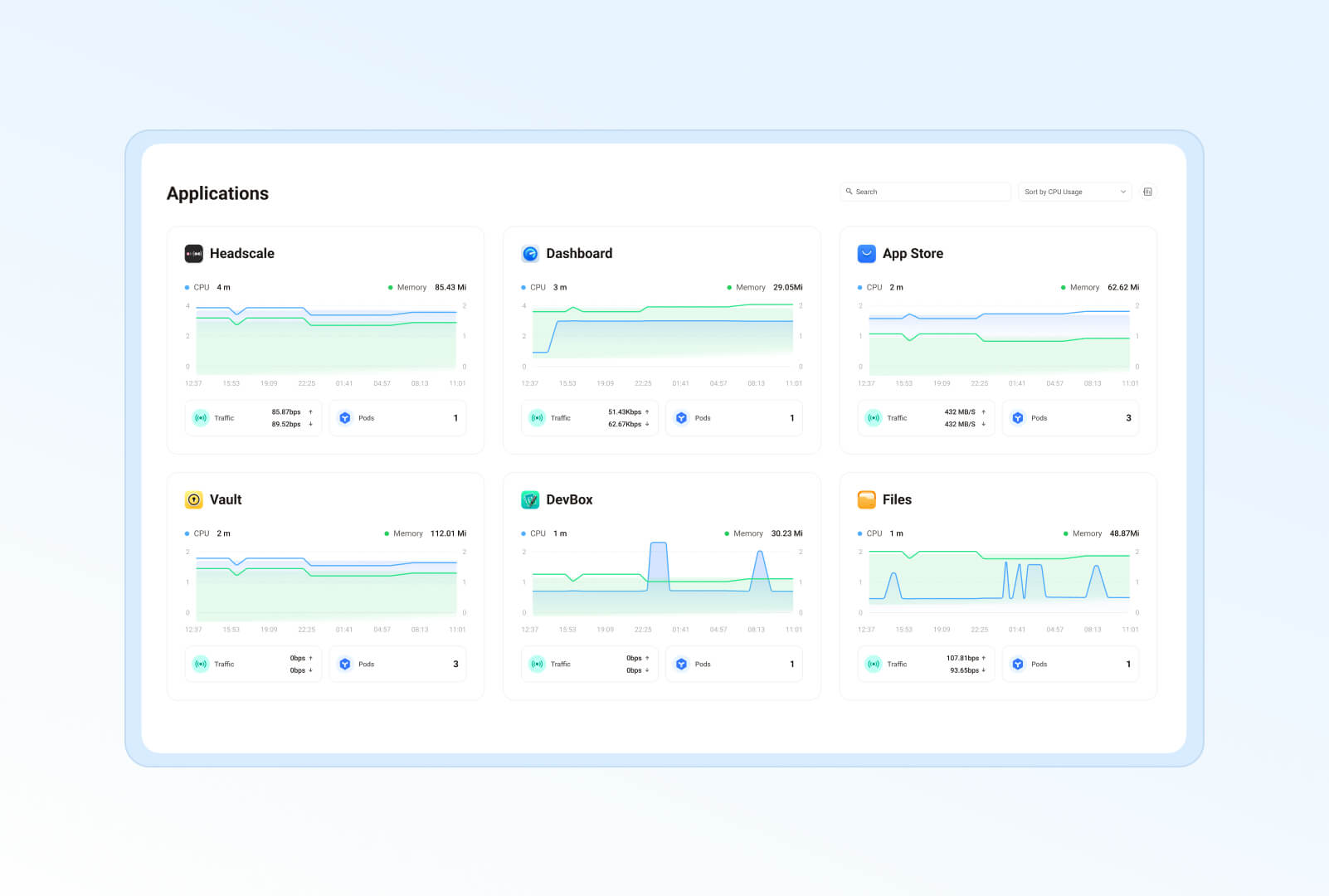

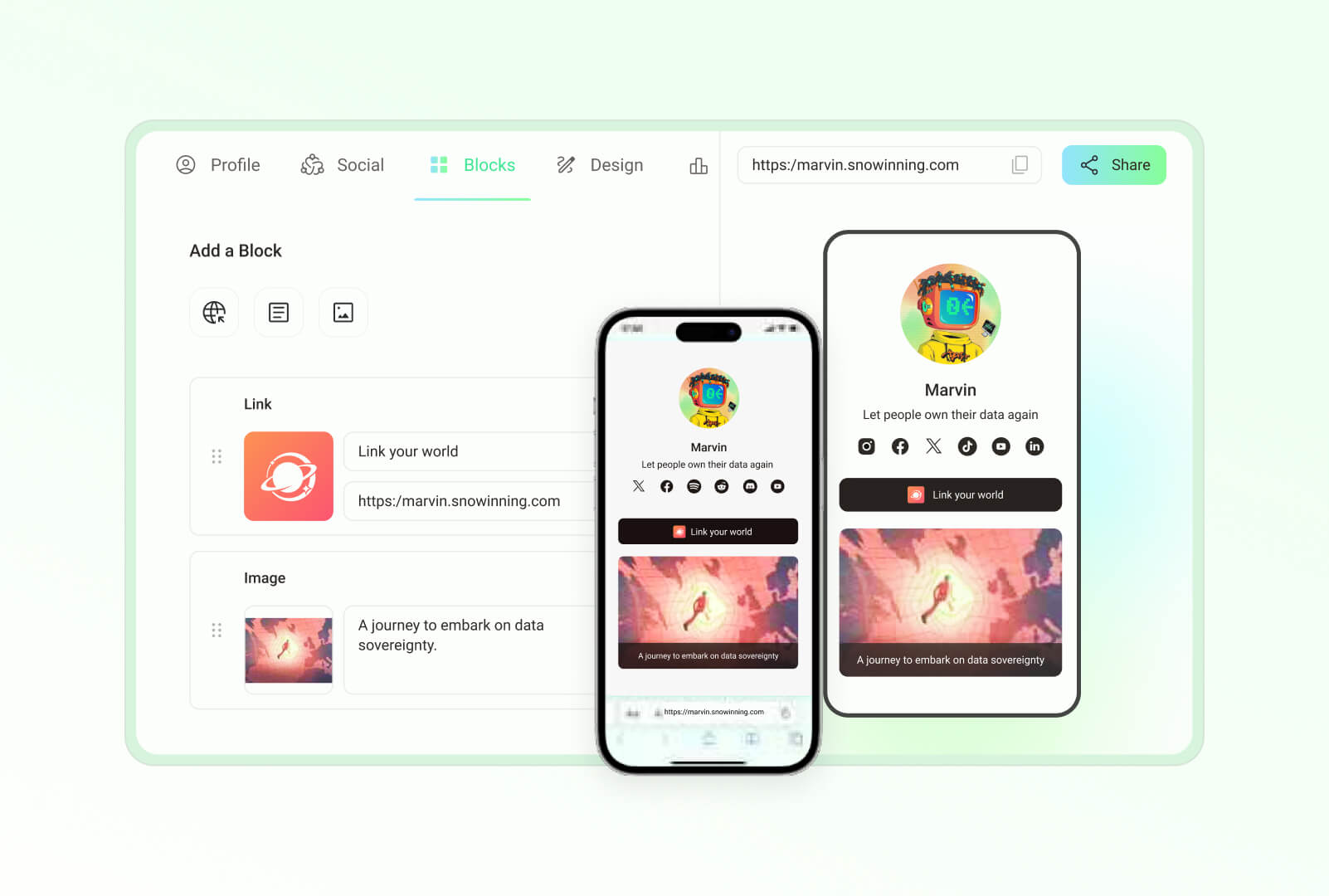

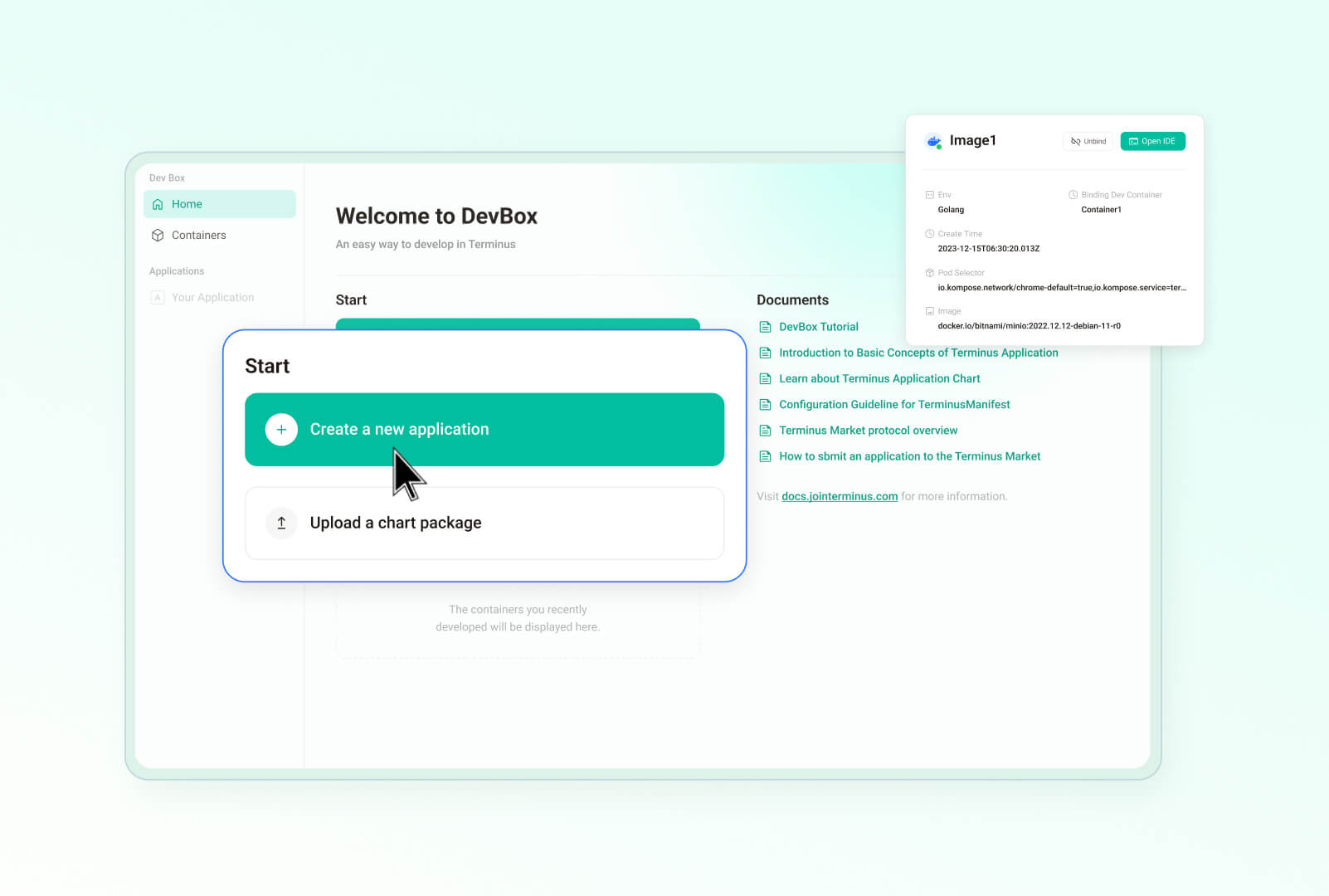

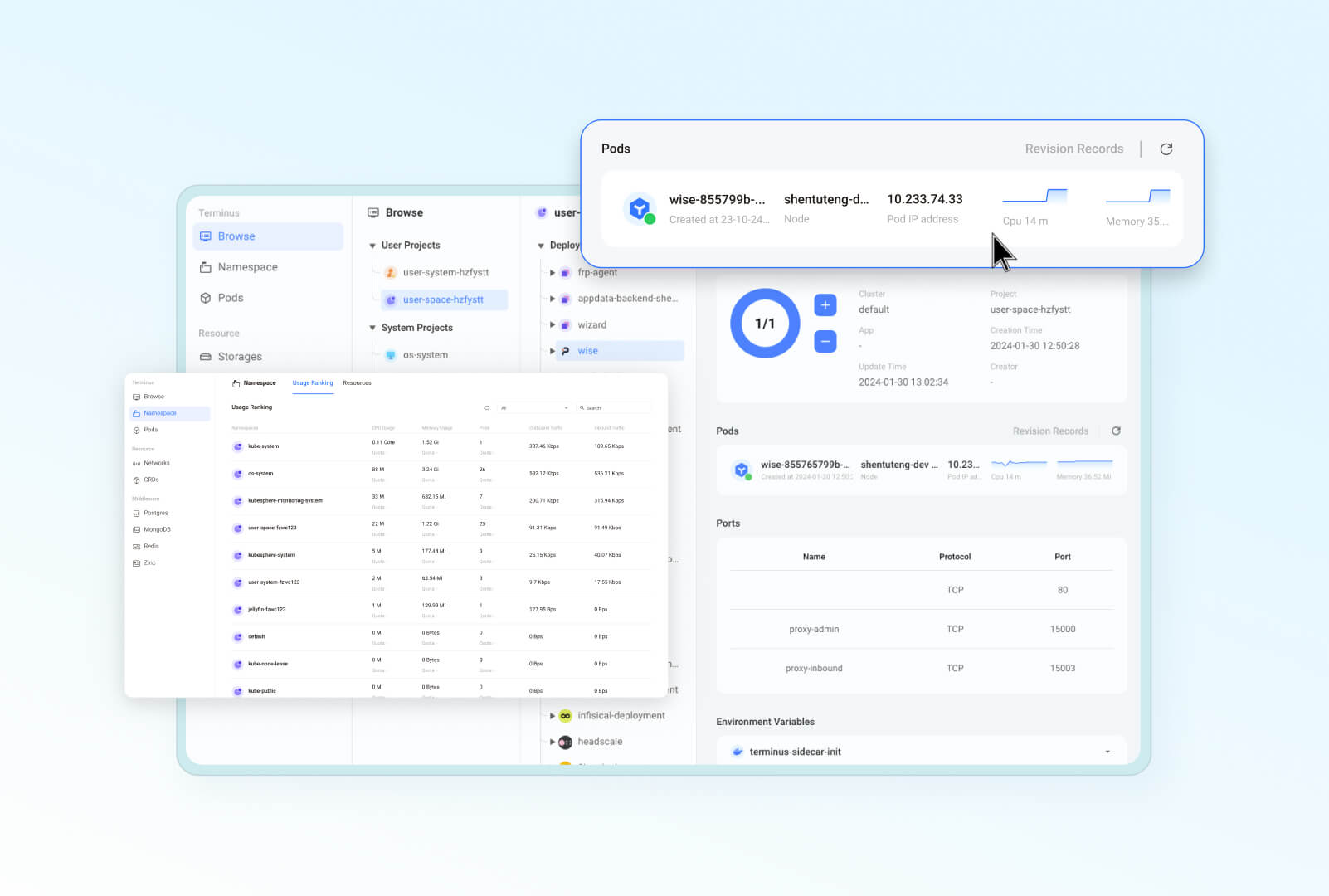

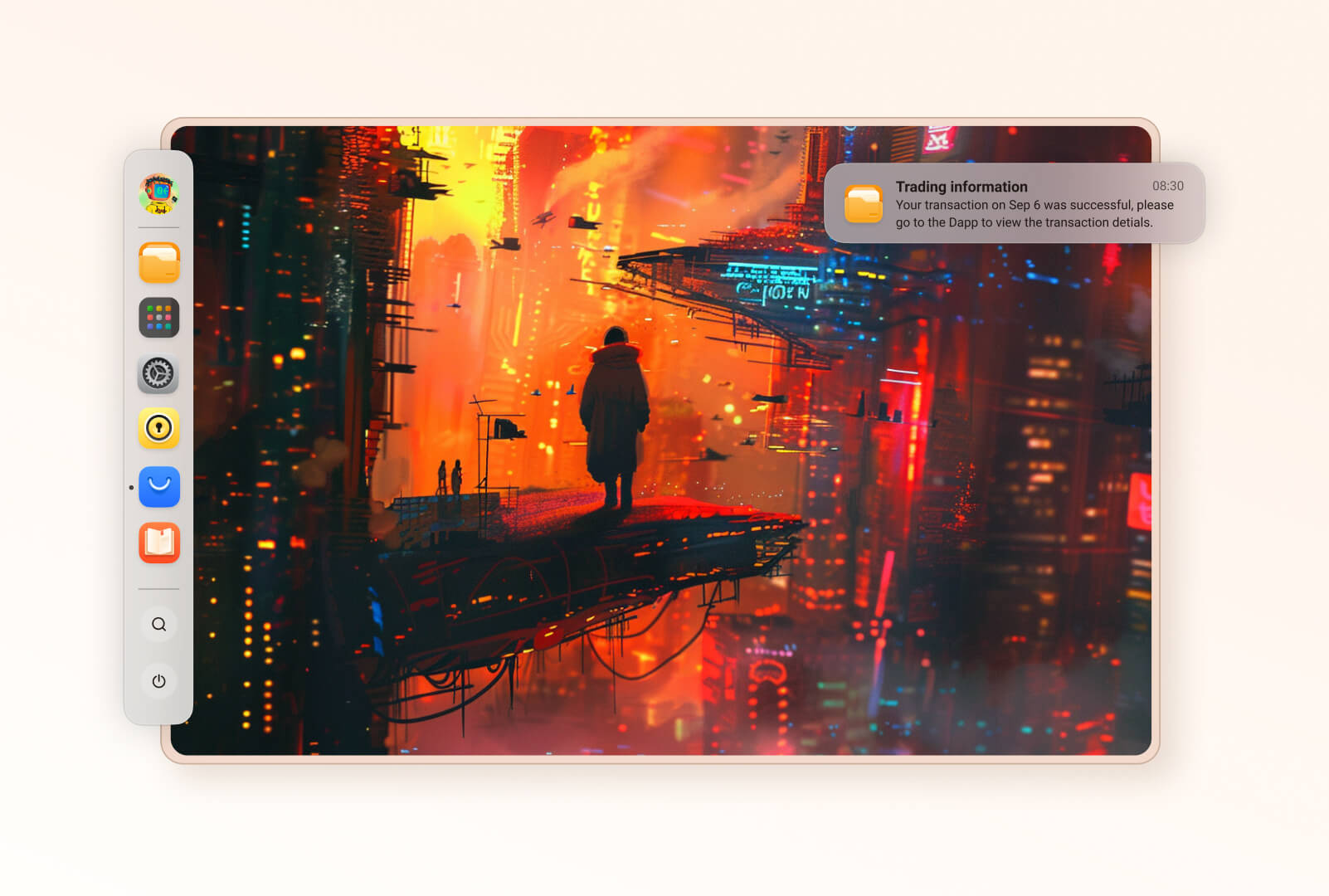

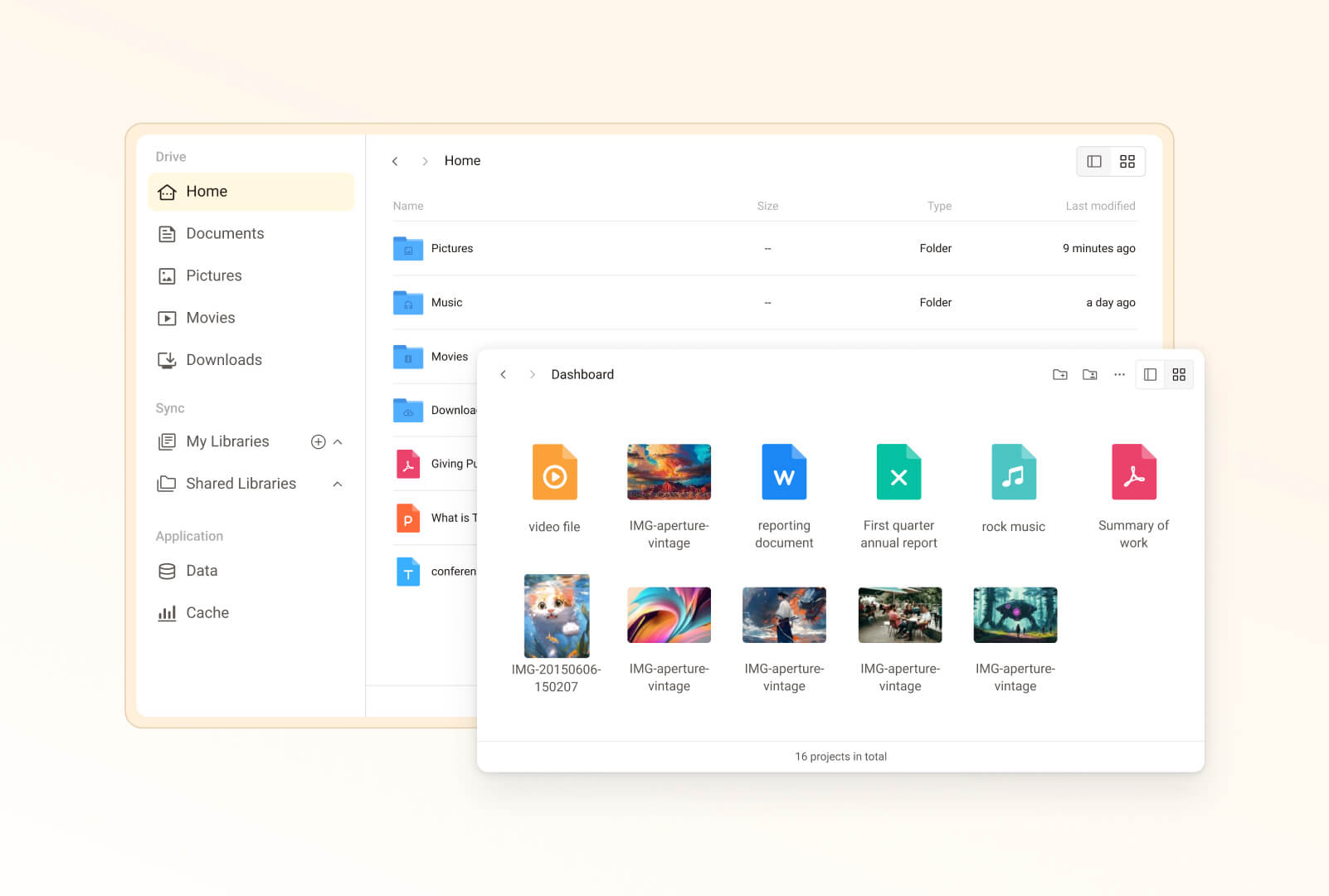

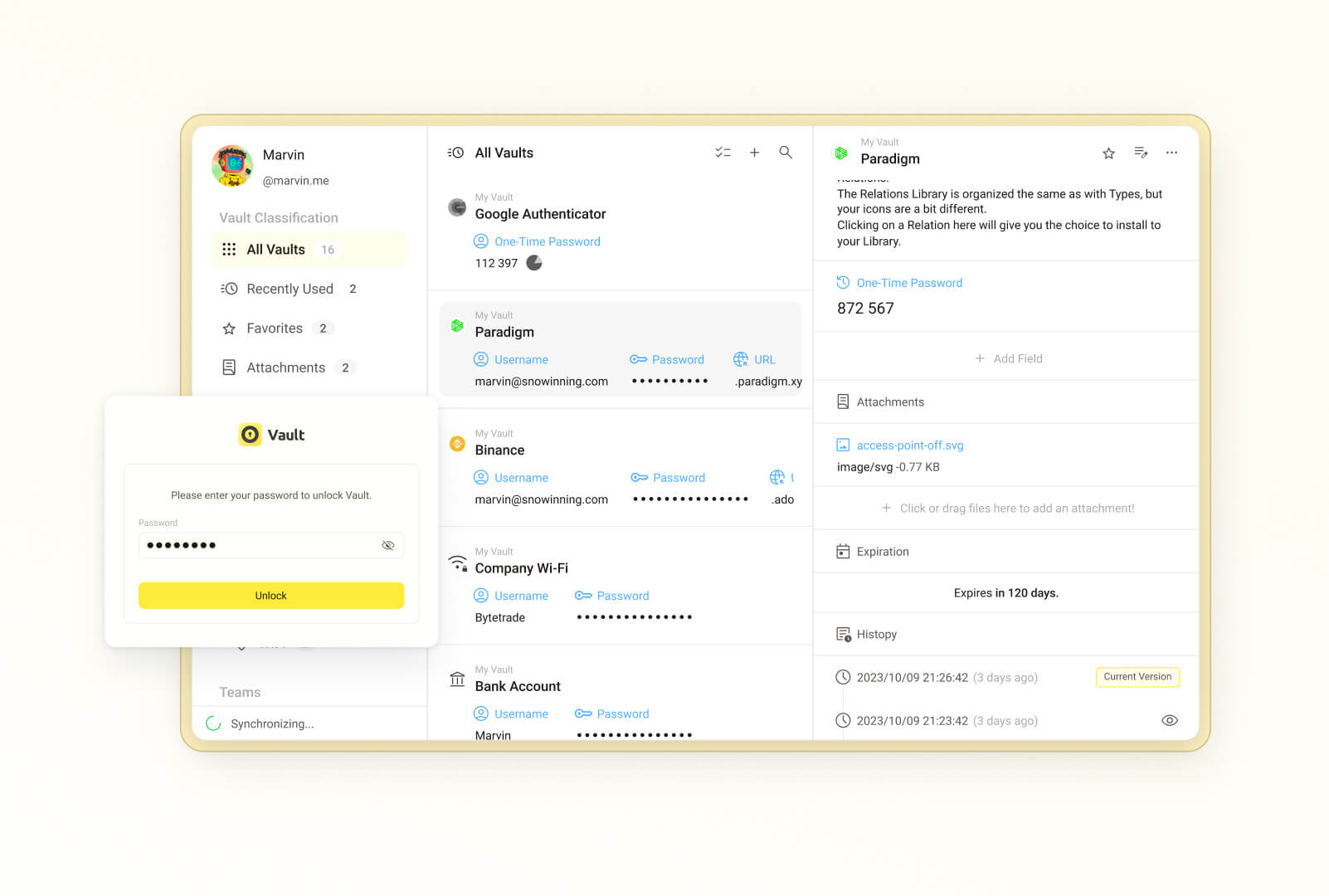

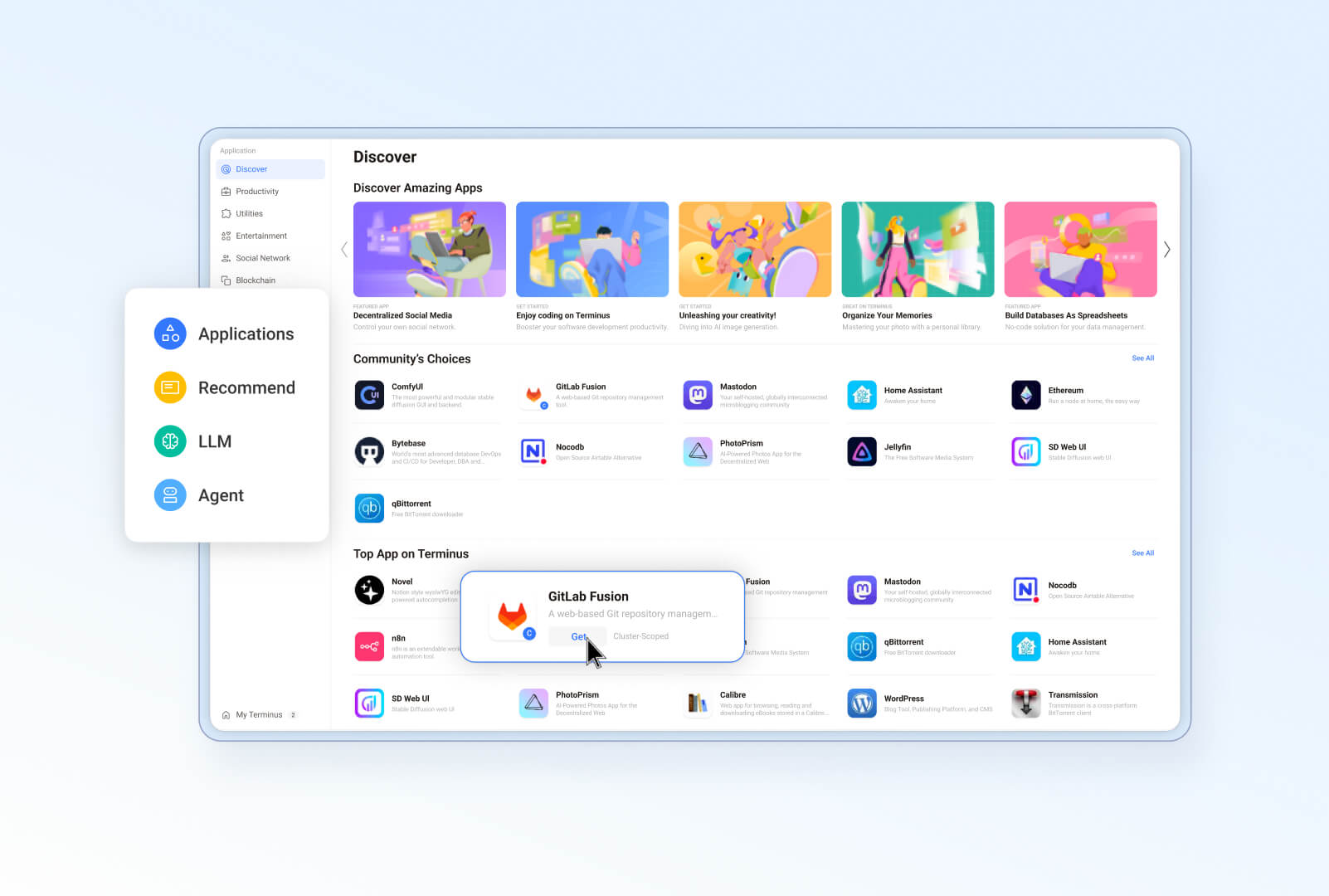

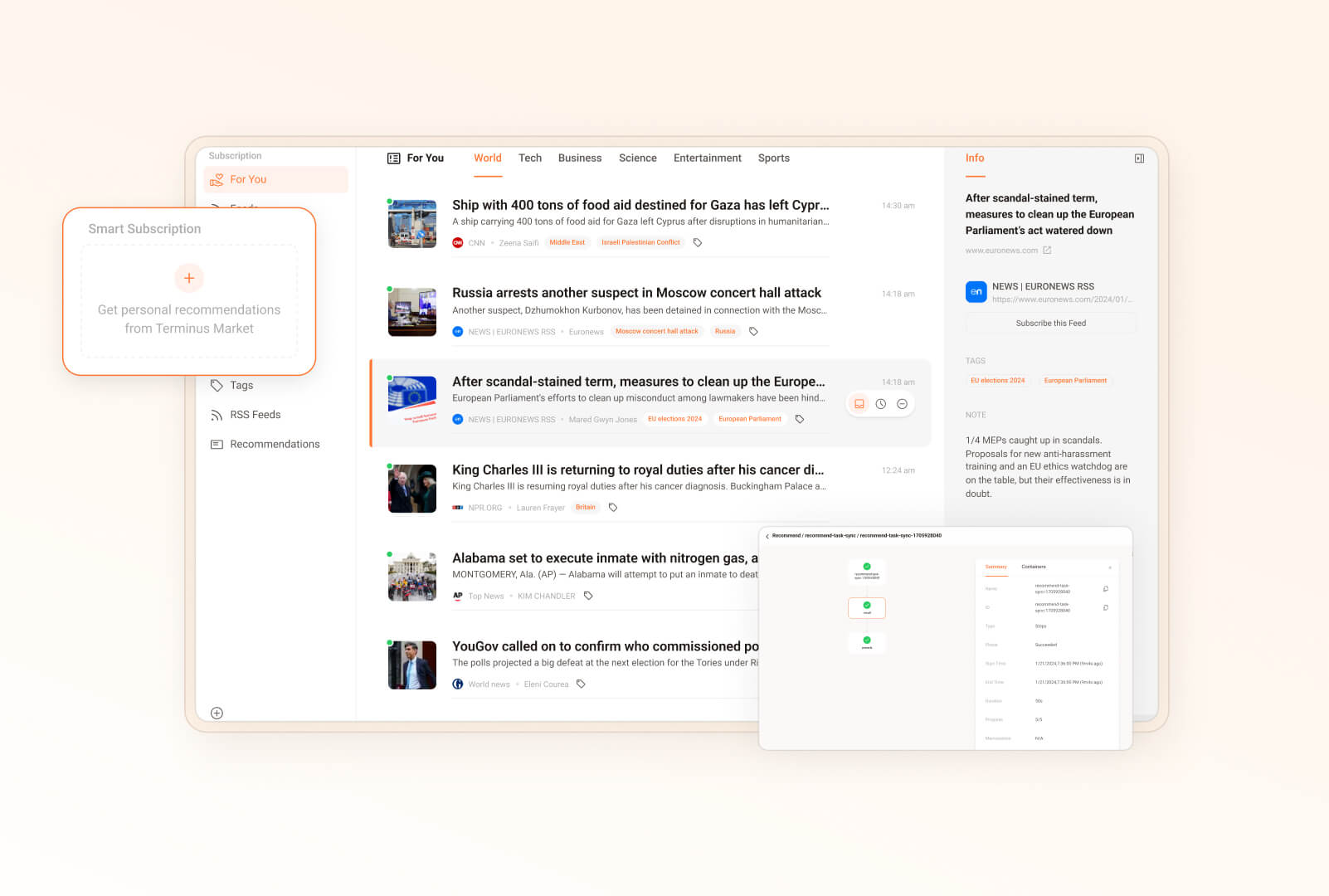

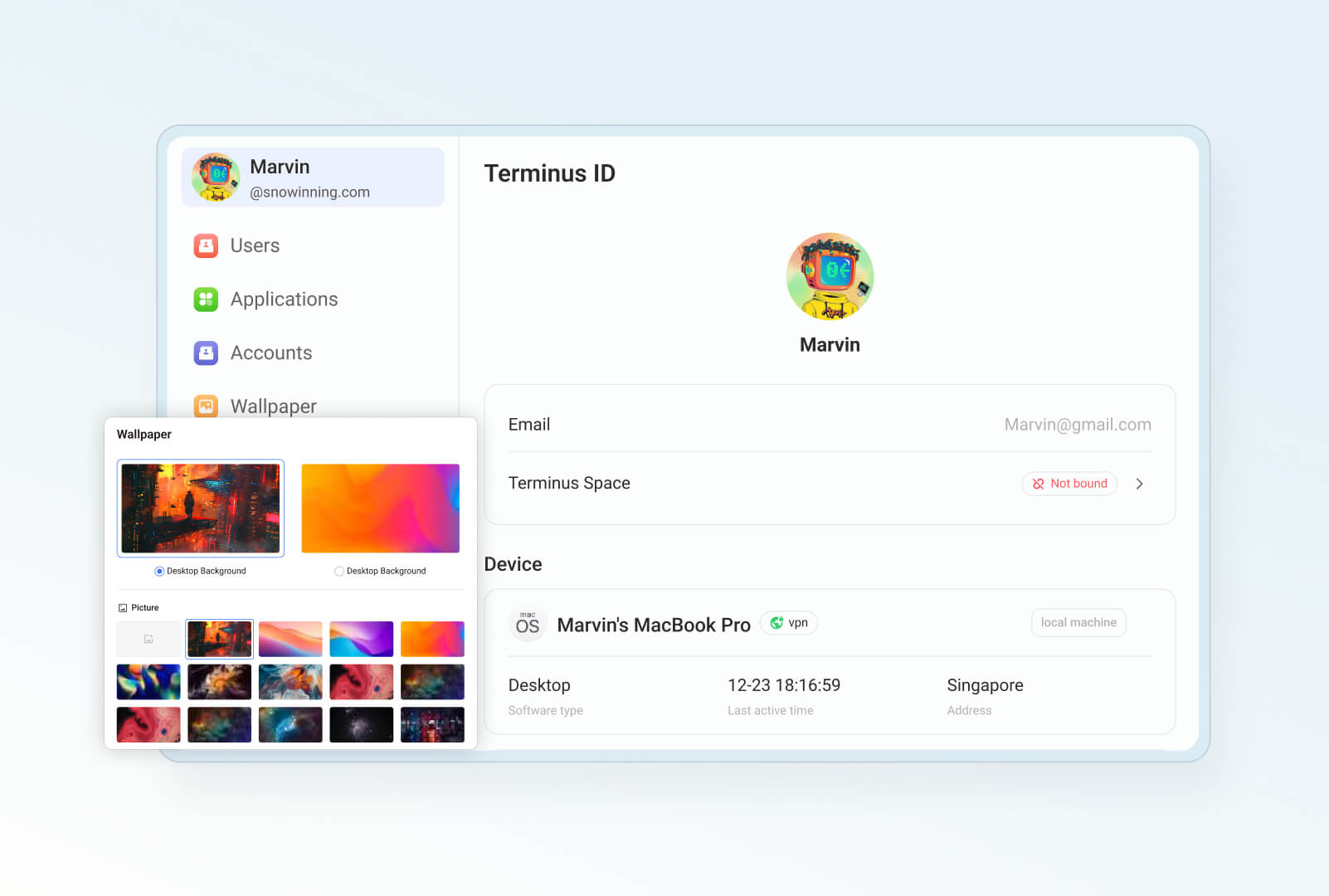

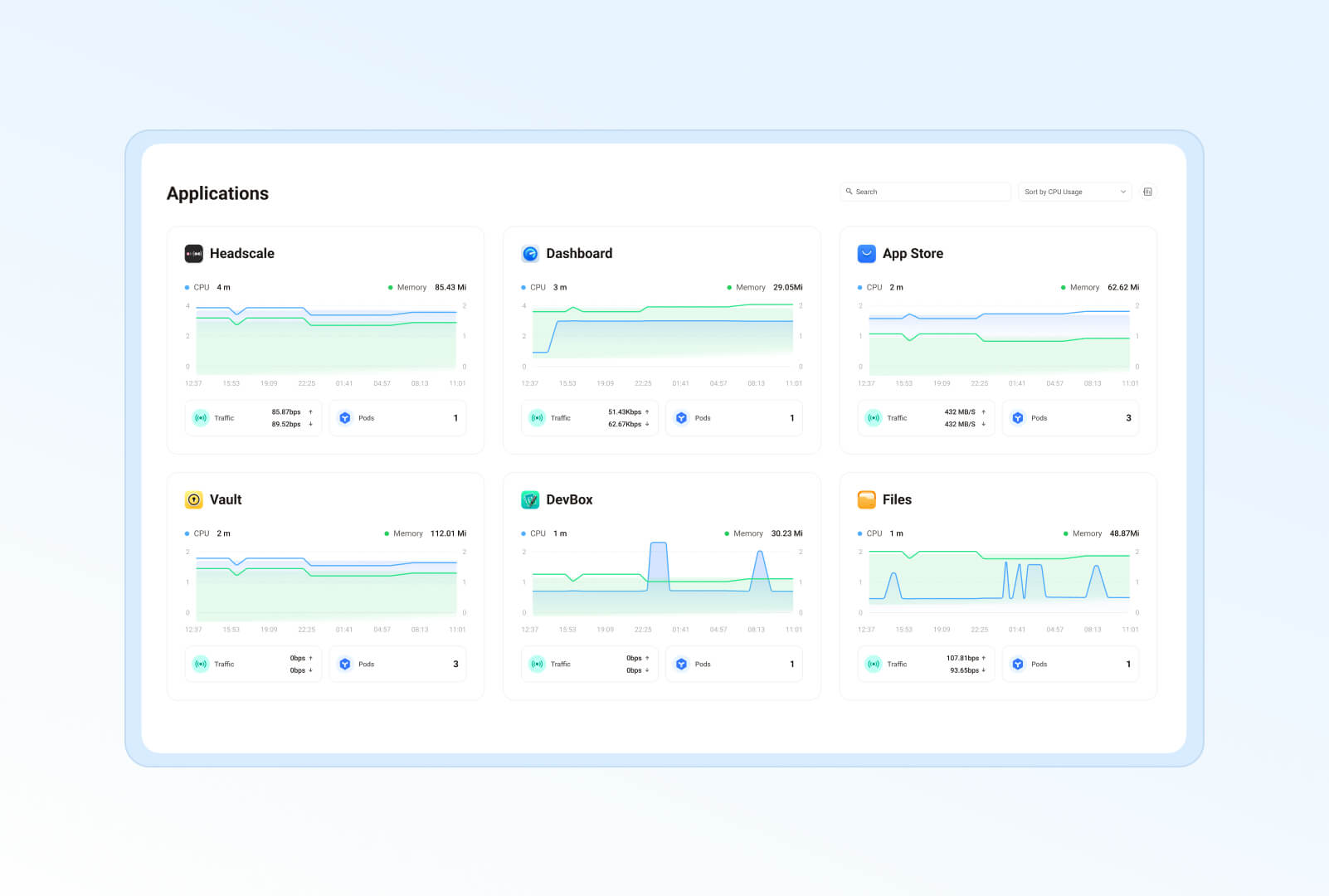

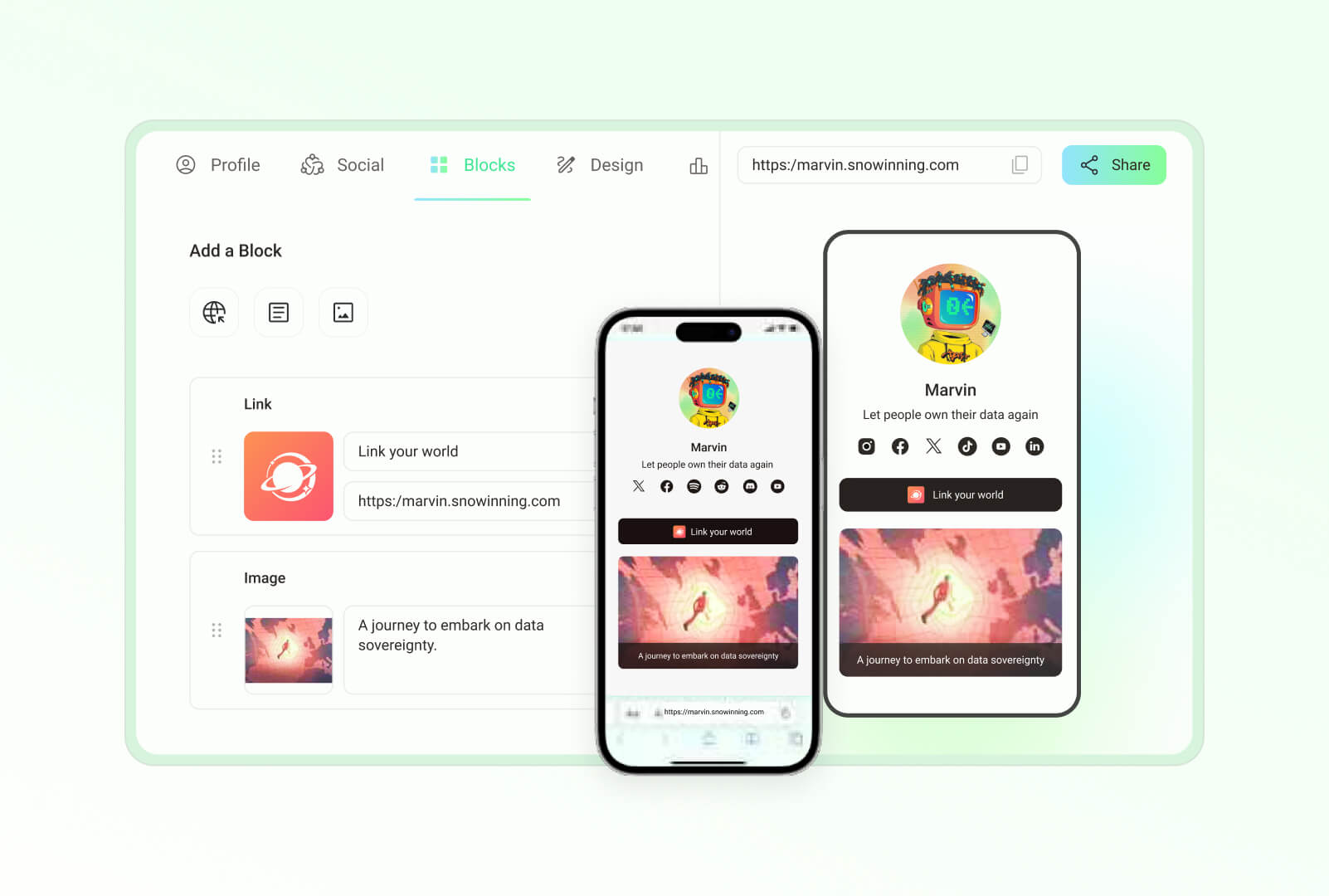

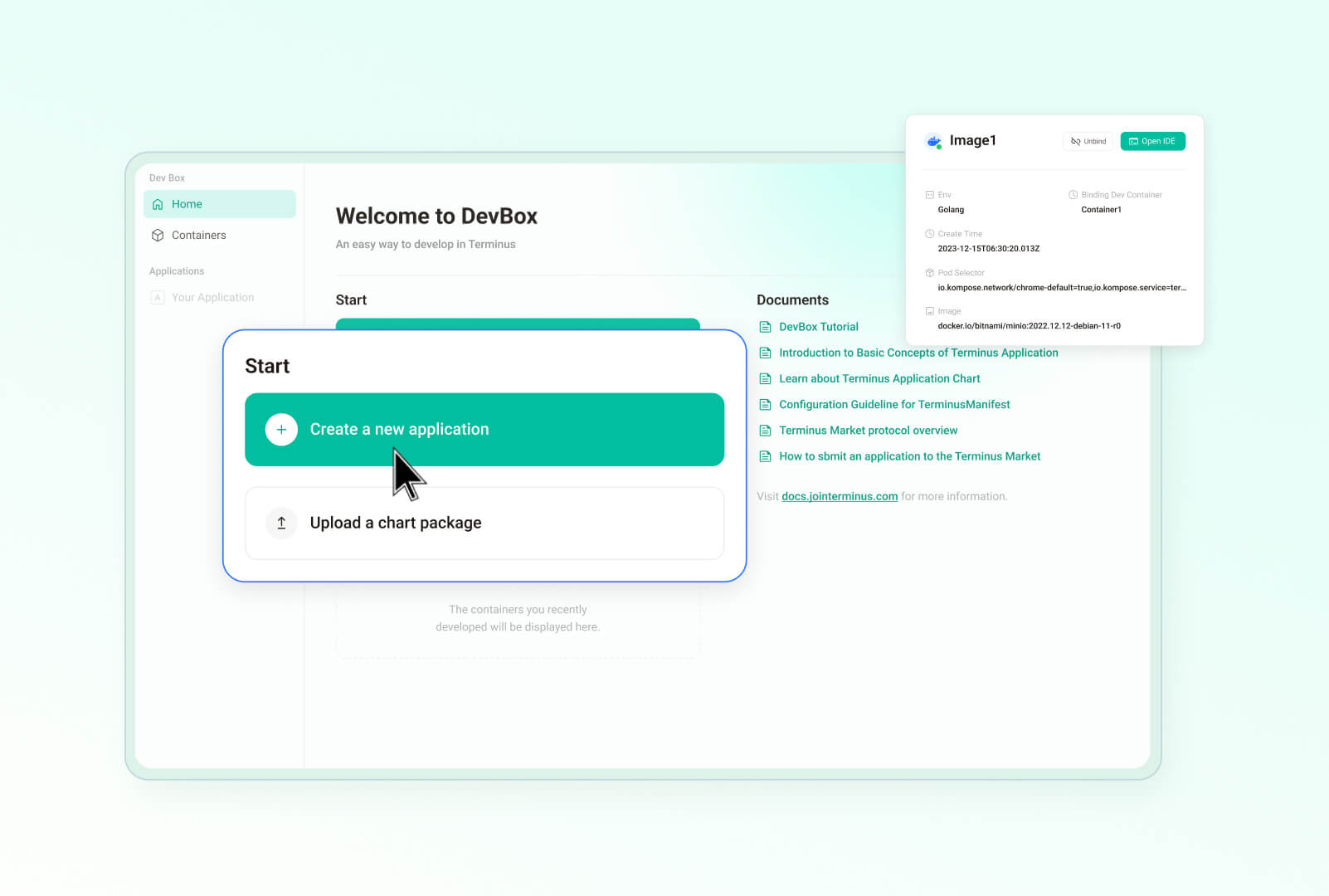

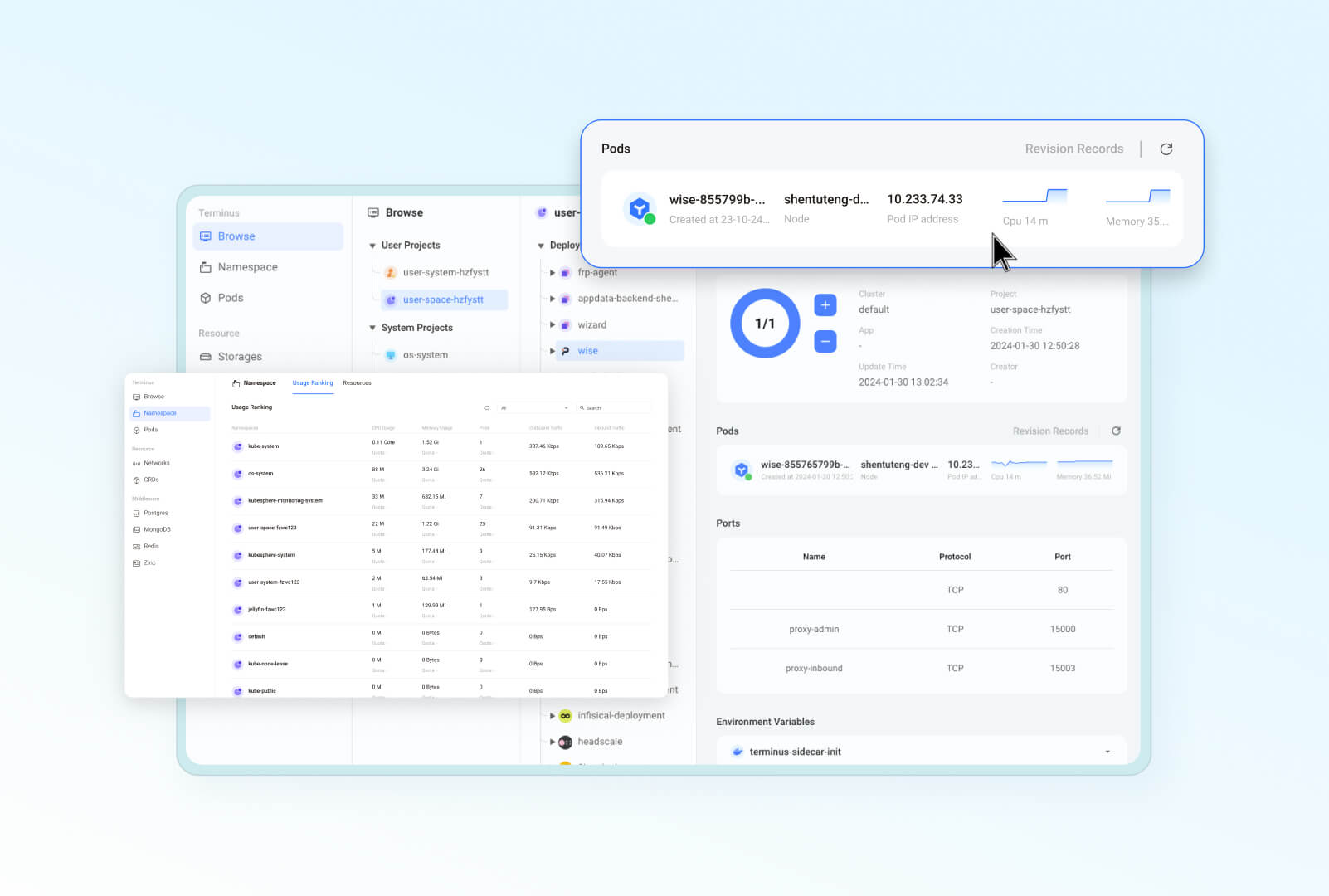

Here are some screenshots from the UI for a sneak peek:

|

||||

|

||||

| **Desktop–Streamlined and familiar portal** | **Files–A secure home to your data**

|

||||

| :--------: | :-------: |

|

||||

|  |  |

|

||||

| **Vault–1Password alternative**|**Market–App ecosystem in your control** |

|

||||

|  |  |

|

||||

|**Wise–Your digital secret garden** | **Settings–Manage Olares efficiently** |

|

||||

|  |  |

|

||||

|**Dashboard–Constant system monitoring** | **Profile–Your unique homepage** |

|

||||

|  |  |

|

||||

| **Studio–Develop, debug, and deploy**|**Control Hub–Manage Kubernetes clusters easily** |

|

||||

|  | |

|

||||

|

||||

|

||||

## Key use cases

|

||||

|

||||

Here is why and where you can count on Olares for private, powerful, and secure sovereign cloud experience:

|

||||

|

||||

@@ -58,10 +95,6 @@ Here is why and where you can count on Olares for private, powerful, and secure

|

||||

|

||||

📚 **Learning platform**: Explore self-hosting, container orchestration, and cloud technologies hands-on.

|

||||

|

||||

> 🔍 **How is Olares different from traditional NAS?**

|

||||

>

|

||||

> Olares focuses on building an all-in-one self-hosted personal cloud experience. Its core features and target users differ significantly from traditional Network Attached Storage (NAS) systems, which primarily focus on network storage. For more details, see [Compare Olares and NAS](https://docs.olares.com/manual/olares-vs-nas.html).

|

||||

|

||||

## Getting started

|

||||

|

||||

### System compatibility

|

||||

@@ -74,42 +107,16 @@ Olares has been tested and verified on the following Linux platforms:

|

||||

### Set up Olares

|

||||

To get started with Olares on your own device, follow the [Getting Started Guide](https://docs.olares.com/manual/get-started/) for step-by-step instructions.

|

||||

|

||||

## Architecture

|

||||

|

||||

Olares' architecture is based on two core principles:

|

||||

- Adopts an Android-like approach to control software permissions and interactivity, ensuring smooth and secure system operations.

|

||||

- Leverages cloud-native technologies to manage hardware and middleware services efficiently.

|

||||

|

||||

|

||||

|

||||

For detailed description of each component, refer to [Olares architecture](https://docs.olares.com/manual/system-architecture.html).

|

||||

|

||||

## Features

|

||||

|

||||

Olares offers a wide array of features designed to enhance security, ease of use, and development flexibility:

|

||||

|

||||

- **Enterprise-grade security**: Simplified network configuration using Tailscale, Headscale, Cloudflare Tunnel, and FRP.

|

||||

- **Secure and permissionless application ecosystem**: Sandboxing ensures application isolation and security.

|

||||

- **Unified file system and database**: Automated scaling, backups, and high availability.

|

||||

- **Single sign-on**: Log in once to access all applications within Olares with a shared authentication service.

|

||||

- **AI capabilities**: Comprehensive solution for GPU management, local AI model hosting, and private knowledge bases while maintaining data privacy.

|

||||

- **Built-in applications**: Includes file manager, sync drive, vault, reader, app market, settings, and dashboard.

|

||||

- **Seamless anywhere access**: Access your devices from anywhere using dedicated clients for mobile, desktop, and browsers.

|

||||

- **Development tools**: Comprehensive development tools for effortless application development and porting.

|

||||

|

||||

## Project Navigation

|

||||

> We are currently migrating the code of subprojects from other repositories within the organization to this repository. This process may take a few months. Once completed, you will be able to get a comprehensive view of the Olares system through this repository.

|

||||

|

||||

|

||||

## Project navigation

|

||||

This section lists the main directories in the Olares repository:

|

||||

|

||||

* **`apps`**: Contains the code for system applications, primarily for `larepass`.

|

||||

* **`cli`**: Contains the code for `olares-cli`, the command-line interface tool for Olares.

|

||||

* **`daemon`**: Contains the code for `olaresd`, the system daemon process.

|

||||

* **[`apps`](./apps)**: Contains the code for system applications, primarily for `larepass`.

|

||||

* **[`cli`](./cli)**: Contains the code for `olares-cli`, the command-line interface tool for Olares.

|

||||

* **[`daemon`](./daemon)**: Contains the code for `olaresd`, the system daemon process.

|

||||

* **`docs`**: Contains documentation for the project.

|

||||

* **`framework`**: Contains the Olares system services.

|

||||

* **`infrastructure`**: Contains code related to infrastructure components such as computing, storage, networking, and GPUs.

|

||||

* **`platform`**: Contains code for cloud-native components like databases and message queues.

|

||||

* **[`framework`](./framework)**: Contains the Olares system services.

|

||||

* **[`infrastructure`](./infrastructure)**: Contains code related to infrastructure components such as computing, storage, networking, and GPUs.

|

||||

* **[`platform`](./platform)**: Contains code for cloud-native components like databases and message queues.

|

||||

* **`vendor`**: Contains code from third-party hardware vendors.

|

||||

|

||||

## Contributing to Olares

|

||||

|

||||

92

README_CN.md


@@ -1,6 +1,6 @@

|

||||

<div align="center">

|

||||

|

||||

# Olares:开源个人云操作系统,助您重获数据主权

|

||||

# Olares:助您重获数据主权的开源个人云

|

||||

|

||||

[](#)<br/>

|

||||

[](https://github.com/beclab/olares/commits/main)

|

||||

@@ -18,11 +18,6 @@

|

||||

|

||||

</div>

|

||||

|

||||

|

||||

https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

|

||||

|

||||

*Olares 让你体验更多可能:构建个人 AI 助理、随时随地同步数据、自托管团队协作空间、打造私人影视厅——无缝整合你的数字生活。*

|

||||

|

||||

<p align="center">

|

||||

<a href="https://olares.com">网站</a> ·

|

||||

<a href="https://docs.olares.com">文档</a> ·

|

||||

@@ -35,12 +30,54 @@ https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

|

||||

>

|

||||

> *是时候做出改变了。*

|

||||

|

||||

|

||||

|

||||

我们坚信,**您拥有掌控自己数字生活的基本权利**。维护这一权利最有效的方式,就是将您的数据托管在本地,在您自己的硬件上。

|

||||

|

||||

Olares 是一款开源个人云操作系统,旨在让您能够轻松在本地拥有并管理自己的数字资产。您无需再依赖公有云服务,而可以在 Olares 上本地部署强大的开源平替服务或应用,例如可以使用 Ollama 托管大语言模型,使用 SD WebUI 用于图像生成,以及使用 Mastodon 构建不受审查的社交空间。Olares 让你坐拥云计算的强大威力,又能完全将其置于自己掌控之下。

|

||||

|

||||

> 为 Olares 点亮 🌟 以及时获取新版本和更新的通知。

|

||||

|

||||

## 系统架构

|

||||

|

||||

公有云具有基础设施即服务(IaaS)、平台即服务(PaaS)和软件即服务(SaaS)等层级。Olares 为这些层级提供了开源替代方案。

|

||||

|

||||

|

||||

|

||||

详细描述请参考 [Olares 架构](https://docs.olares.cn/zh/manual/system-architecture.html)文档。

|

||||

|

||||

>🔍**Olares 和 NAS 有什么不同?**

|

||||

>

|

||||

> Olares 致力于打造一站式的自托管个人云体验。其核心功能与用户定位,均与专注于网络存储的传统 NAS 有着显著的不同,详情请参考 [Olares 与 NAS 对比](https://docs.olares.com/zh/manual/olares-vs-nas.html)。

|

||||

|

||||

|

||||

## 功能特性

|

||||

|

||||

Olares 提供了一系列功能,旨在提升安全性、使用便捷性以及开发的灵活性:

|

||||

|

||||

- **企业级安全**:使用 Tailscale、Headscale、Cloudflare Tunnel 和 FRP 简化网络配置,确保安全连接。

|

||||

- **安全且无需许可的应用生态系统**:应用通过沙箱化技术实现隔离,保障应用运行的安全性。

|

||||

- **统一文件系统和数据库**:提供自动扩展、数据备份和高可用性功能,确保数据的持久安全。

|

||||

- **单点登录**:用户仅需一次登录,即可访问 Olares 中所有应用的共享认证服务。

|

||||

- **AI 功能**:包括全面的 GPU 管理、本地 AI 模型托管及私有知识库,同时严格保护数据隐私。

|

||||

- **内置应用程序**:涵盖文件管理器、同步驱动器、密钥管理器、阅读器、应用市场、设置和面板等,提供全面的应用支持。

|

||||

- **无缝访问**:通过移动端、桌面端和网页浏览器客户端,从全球任何地方访问设备。

|

||||

- **开发工具**:提供全面的工具支持,便于开发和移植应用,加速开发进程。

|

||||

|

||||

以下是用户界面的一些截图预览:

|

||||

|

||||

| **桌面:熟悉高效的访问入口** | **文件管理器:安全存储数据**

|

||||

| :--------: | :-------: |

|

||||

|  |  |

|

||||

| **Vault:密码无忧管理**|**市场:可控的应用生态系统** |

|

||||

|  |  |

|

||||

|**Wise:数字后花园** | **设置:高效管理 Olares** |

|

||||

|  |  |

|

||||

|**仪表盘:持续监控 Olares** | **Profile:独特的个人主页** |

|

||||

|  |  |

|

||||

| **Studio:一站式开发、调试和部署**|**控制面板:轻松管理 Kubernetes 集群** |

|

||||

|  | |

|

||||

|

||||

## 使用场景

|

||||

|

||||

在以下场景中,Olares 为您带来私密、强大且安全的私有云体验:

|

||||

@@ -59,10 +96,6 @@ Olares 是一款开源个人云操作系统,旨在让您能够轻松在本地

|

||||

|

||||

📚**学习探索**:深入学习自托管服务、容器技术和云计算,并上手实践。<br>

|

||||

|

||||

> 🔍**Olares 和 NAS 有什么不同?**

|

||||

>

|

||||

> Olares 致力于打造一站式的自托管个人云体验。其核心功能与用户定位,均与专注于网络存储的传统 NAS 有着显著的不同,详情请参考 [Olares 与 NAS 对比](https://docs.olares.com/zh/manual/olares-vs-nas.html)。

|

||||

|

||||

## 快速开始

|

||||

|

||||

### 系统兼容性

|

||||

@@ -74,43 +107,18 @@ Olares 已在以下 Linux 平台完成测试与验证:

|

||||

|

||||

### 安装 Olares

|

||||

|

||||

参考[快速上手指南](https://docs.joinolares.cn/zh/manual/get-started/)安装并激活 Olares。

|

||||

|

||||

## 系统架构

|

||||

Olares 的架构设计遵循两个核心原则:

|

||||

- 参考 Android 模式,控制软件权限和交互性,确保系统的流畅性和安全性。

|

||||

- 借鉴云原生技术,高效管理硬件和中间件服务。

|

||||

|

||||

|

||||

|

||||

详细描述请参考 [Olares 架构](https://docs.joinolares.cn/zh/manual/system-architecture.html)文档。

|

||||

|

||||

## 功能特性

|

||||

|

||||

Olares 提供了一系列功能,旨在提升安全性、使用便捷性以及开发的灵活性:

|

||||

|

||||

- **企业级安全**:使用 Tailscale、Headscale、Cloudflare Tunnel 和 FRP 简化网络配置,确保安全连接。

|

||||

- **安全且无需许可的应用生态系统**:应用通过沙箱化技术实现隔离,保障应用运行的安全性。

|

||||

- **统一文件系统和数据库**:提供自动扩展、数据备份和高可用性功能,确保数据的持久安全。

|

||||

- **单点登录**:用户仅需一次登录,即可访问 Olares 中所有应用的共享认证服务。

|

||||

- **AI 功能**:包括全面的 GPU 管理、本地 AI 模型托管及私有知识库,同时严格保护数据隐私。

|

||||

- **内置应用程序**:涵盖文件管理器、同步驱动器、密钥管理器、阅读器、应用市场、设置和面板等,提供全面的应用支持。

|

||||

- **无缝访问**:通过移动端、桌面端和网页浏览器客户端,从全球任何地方访问设备。

|

||||

- **开发工具**:提供全面的工具支持,便于开发和移植应用,加速开发进程。

|

||||

参考[快速上手指南](https://docs.olares.cn/zh/manual/get-started/)安装并激活 Olares。

|

||||

|

||||

## 项目目录

|

||||

|

||||

> 我们正将子项目的代码从组织中其他代码仓库移动到当前仓库,这个过程可能会持续几个月。届时您就可以通过本仓库了解 Olares 系统的全貌

|

||||

>

|

||||

Olares 代码库中的主要目录如下:

|

||||

|

||||

* **`apps`**: 用于存放系统应用,主要是 `larepass` 的代码。

|

||||

* **`cli`**: 用于存放 `olares-cli`(Olares 的命令行界面工具)的代码。

|

||||

* **`daemon`**: 用于存放 `olaresd`(系统守护进程)的代码。

|

||||

* **[`apps`](./apps)**: 用于存放系统应用,主要是 `larepass` 的代码。

|

||||

* **[`cli`](./cli)**: 用于存放 `olares-cli`(Olares 的命令行界面工具)的代码。

|

||||

* **[`daemon`](./daemon)**: 用于存放 `olaresd`(系统守护进程)的代码。

|

||||

* **`docs`**: 用于存放 Olares 项目的文档。

|

||||

* **`framework`**: 用来存放 Olares 系统服务代码。

|

||||

* **`infrastructure`**: 用于存放计算,存储,网络,GPU 等基础设施的代码。

|

||||

* **`platform`**: 用于存放数据库、消息队列等云原生组件的代码。

|

||||

* **[`framework`](./framework)**: 用来存放 Olares 系统服务代码。

|

||||

* **[`infrastructure`](./infrastructure)**: 用于存放计算,存储,网络,GPU 等基础设施的代码。

|

||||

* **[`platform`](./platform)**: 用于存放数据库、消息队列等云原生组件的代码。

|

||||

* **`vendor`**: 用于存放来自第三方硬件供应商的代码。

|

||||

|

||||

## 社区贡献

|

||||

|

||||

91

README_JP.md


@@ -18,10 +18,6 @@

|

||||

|

||||

</div>

|

||||

|

||||

https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

|

||||

|

||||

*Olaresを使って、ローカルAIアシスタントを構築し、データを場所を問わず同期し、ワークスペースをセルフホストし、独自のメディアをストリーミングし、その他多くのことを実現できます。*

|

||||

|

||||

<p align="center">

|

||||

<a href="https://olares.com">ウェブサイト</a> ·

|

||||

<a href="https://docs.olares.com">ドキュメント</a> ·

|

||||

@@ -30,18 +26,57 @@ https://github.com/user-attachments/assets/3089a524-c135-4f96-ad2b-c66bf4ee7471

|

||||

<a href="https://space.olares.com">Olares Space</a>

|

||||

</p>

|

||||

|

||||

> [!IMPORTANT]

|

||||

> 最近、TerminusからOlaresへのリブランディングを完了しました。詳細については、[リブランディングブログ](https://blog.olares.com/terminus-is-now-olares/)をご覧ください。

|

||||

> *パブリッククラウドを基盤とする現代のインターネットは、あなたの個人データのプライバシーをますます脅かしています。ChatGPT、Midjourney、Facebookといったサービスへの依存が深まるにつれ、デジタル主権に対するあなたのコントロールも弱まっています。あなたのデータは他者のサーバーに保存され、その利用規約に縛られ、追跡され、検閲されているのです。*

|

||||

>

|

||||

>*今こそ、変革の時です。*

|

||||

|

||||

Olaresを使用して、ハードウェアをAIホームサーバーに変換します。Olaresは、ローカルAIのためのオープンソース主権クラウドOSです。

|

||||

|

||||

|

||||

- **最先端のAIモデルを自分の条件で実行**: LLaMA、Stable Diffusion、Whisper、Flux.1などの強力なオープンAIモデルをハードウェア上で簡単にホストし、AI環境を完全に制御します。

|

||||

- **簡単にデプロイ**: Olares Marketから幅広いオープンソースAIアプリを数クリックで発見してインストールします。複雑な設定やセットアップは不要です。

|

||||

- **いつでもどこでもアクセス**: ブラウザを通じて、必要なときにAIアプリやモデルにアクセスします。

|

||||

- **統合されたAIでスマートなAI体験**: [Model Context Protocol](https://spec.modelcontextprotocol.io/specification/)(MCP)に似たメカニズムを使用して、OlaresはAIモデルとAIアプリ、およびプライベートデータセットをシームレスに接続します。これにより、ニーズに応じて適応する高度にパーソナライズされたコンテキスト対応のAIインタラクションが実現します。

|

||||

私たちは、あなたが自身のデジタルライフをコントロールする基本的な権利を有すると確信しています。この権利を守る最も効果的な方法は、あなたのデータをローカルの、あなた自身のハードウェア上でホストすることです。

|

||||

|

||||

Olaresは、あなたが自身のデジタル資産をローカルで容易に所有し管理できるよう設計された、オープンソースのパーソナルクラウドOSです。もはやパブリッククラウドサービスに依存する必要はありません。Olares上で、例えばOllamaを利用した大規模言語モデルのホスティング、SD WebUIによる画像生成、Mastodonを用いた検閲のないソーシャルスペースの構築など、強力なオープンソースの代替サービスやアプリケーションをローカルにデプロイできます。Olaresは、クラウドコンピューティングの絶大な力を活用しつつ、それを完全に自身のコントロール下に置くことを可能にします。

|

||||

|

||||

> 🌟 *新しいリリースや更新についての通知を受け取るために、スターを付けてください。*

|

||||

|

||||

## アーキテクチャ

|

||||

|

||||

パブリッククラウドは、IaaS (Infrastructure as a Service)、PaaS (Platform as a Service)、SaaS (Software as a Service) といったサービスレイヤーで構成されています。Olaresは、これら各レイヤーに対するオープンソースの代替ソリューションを提供しています。

|

||||

|

||||

|

||||

|

||||

各コンポーネントの詳細については、[Olares アーキテクチャ](https://docs.olares.com/manual/system-architecture.html)(英語版)をご参照ください。

|

||||

|

||||

> 🔍**OlaresとNASの違いは何ですか?**

|

||||

>

|

||||

> Olaresは、ワンストップのセルフホスティング・パーソナルクラウド体験の実現を目指しています。そのコア機能とユーザーの位置付けは、ネットワークストレージに特化した従来のNASとは大きく異なります。詳細は、[OlaresとNASの比較](https://docs.olares.com/manual/olares-vs-nas.html)(英語版)をご参照ください。

|

||||

|

||||

## 機能

|

||||

|

||||

Olaresは、セキュリティ、使いやすさ、開発の柔軟性を向上させるための幅広い機能を提供します:

|

||||

|

||||

- **エンタープライズグレードのセキュリティ**: Tailscale、Headscale、Cloudflare Tunnel、FRPを使用してネットワーク構成を簡素化します。

|

||||

- **安全で許可のないアプリケーションエコシステム**: サンドボックス化によりアプリケーションの分離とセキュリティを確保します。

|

||||

- **統一ファイルシステムとデータベース**: 自動スケーリング、バックアップ、高可用性を提供します。

|

||||

- **シングルサインオン**: 一度ログインするだけで、Olares内のすべてのアプリケーションに共有認証サービスを使用してアクセスできます。

|

||||

- **AI機能**: GPU管理、ローカルAIモデルホスティング、プライベートナレッジベースの包括的なソリューションを提供し、データプライバシーを維持します。

|

||||

- **内蔵アプリケーション**: ファイルマネージャー、同期ドライブ、ボールト、リーダー、アプリマーケット、設定、ダッシュボードを含みます。

|

||||

- **どこからでもシームレスにアクセス**: モバイル、デスクトップ、ブラウザ用の専用クライアントを使用して、どこからでもデバイスにアクセスできます。

|

||||

- **開発ツール**: アプリケーションの開発と移植を容易にする包括的な開発ツールを提供します。

|

||||

|

||||

以下はUIのスクリーンショットプレビューです。

|

||||

|

||||

| **デスクトップ:馴染みやすく効率的なアクセスポイント** | **ファイルマネージャー:データを安全に保管** |

|

||||

| :--------: | :-------: |

|

||||

|  |  |

|

||||

| **Vault:安心のパスワード管理**|**マーケット:コントロール可能なアプリエコシステム** |

|

||||

|  |  |

|

||||

| **Wise:あなただけのデジタルガーデン** | **設定:Olaresを効率的に管理** |

|

||||

|  |  |

|

||||

| **ダッシュボード:Olaresを継続的に監視** | **プロフィール:ユニークなパーソナルページ** |

|

||||

|  |  |

|

||||

| **Studio:開発、デバッグ、デプロイをワンストップで**|**コントロールパネル:Kubernetesクラスターを簡単に管理** |

|

||||

|  | |

|

||||

|

||||

## なぜOlaresなのか?

|

||||

|

||||

以下の理由とシナリオで、Olaresはプライベートで強力かつ安全な主権クラウド体験を提供します:

|

||||

@@ -72,40 +107,18 @@ Olaresは以下のLinuxプラットフォームで動作検証を完了してい

|

||||

### Olaresのセットアップ

|

||||

自分のデバイスでOlaresを始めるには、[はじめにガイド](https://docs.olares.com/manual/get-started/)に従ってステップバイステップの手順を確認してください。

|

||||

|

||||

## アーキテクチャ

|

||||

|

||||

Olaresのアーキテクチャは、次の2つの基本原則に基づいています:

|

||||

- Androidの設計思想を取り入れ、ソフトウェアの権限と対話性を制御することで、システムの安全かつ円滑な運用を実現します。

|

||||

- クラウドネイティブ技術を活用し、ハードウェアとミドルウェアサービスを効率的に管理します。

|

||||

|

||||

|

||||

|

||||

各コンポーネントの詳細については、[Olares アーキテクチャ](https://docs.olares.com/manual/system-architecture.html)(英語版)をご参照ください。

|

||||

|

||||

## 機能

|

||||

|

||||

Olaresは、セキュリティ、使いやすさ、開発の柔軟性を向上させるための幅広い機能を提供します:

|

||||

|

||||

- **エンタープライズグレードのセキュリティ**: Tailscale、Headscale、Cloudflare Tunnel、FRPを使用してネットワーク構成を簡素化します。

|

||||

- **安全で許可のないアプリケーションエコシステム**: サンドボックス化によりアプリケーションの分離とセキュリティを確保します。

|

||||

- **統一ファイルシステムとデータベース**: 自動スケーリング、バックアップ、高可用性を提供します。

|

||||

- **シングルサインオン**: 一度ログインするだけで、Olares内のすべてのアプリケーションに共有認証サービスを使用してアクセスできます。

|

||||

- **AI機能**: GPU管理、ローカルAIモデルホスティング、プライベートナレッジベースの包括的なソリューションを提供し、データプライバシーを維持します。

|

||||

- **内蔵アプリケーション**: ファイルマネージャー、同期ドライブ、ボールト、リーダー、アプリマーケット、設定、ダッシュボードを含みます。

|

||||

- **どこからでもシームレスにアクセス**: モバイル、デスクトップ、ブラウザ用の専用クライアントを使用して、どこからでもデバイスにアクセスできます。

|

||||

- **開発ツール**: アプリケーションの開発と移植を容易にする包括的な開発ツールを提供します。

|

||||

|

||||

## プロジェクトナビゲーション

|

||||

|

||||

このセクションでは、Olares リポジトリ内の主要なディレクトリをリストアップしています:

|

||||

|

||||

* **`apps`**: システムアプリケーションのコードが含まれており、主に `larepass` 用です。

|

||||

* **`cli`**: Olares のコマンドラインインターフェースツールである `olares-cli` のコードが含まれています。

|

||||

* **`daemon`**: システムデーモンプロセスである `olaresd` のコードが含まれています。

|

||||

* **[`apps`](./apps)**: システムアプリケーションのコードが含まれており、主に `larepass` 用です。

|

||||

* **[`cli`](./cli)**: Olares のコマンドラインインターフェースツールである `olares-cli` のコードが含まれています。

|

||||

* **[`daemon`](./daemon)**: システムデーモンプロセスである `olaresd` のコードが含まれています。

|

||||

* **`docs`**: プロジェクトのドキュメントが含まれています。

|

||||

* **`framework`**: Olares システムサービスが含まれています。

|

||||

* **`infrastructure`**: コンピューティング、ストレージ、ネットワーキング、GPU などのインフラストラクチャコンポーネントに関連するコードが含まれています。

|

||||

* **`platform`**: データベースやメッセージキューなどのクラウドネイティブコンポーネントのコードが含まれています。

|

||||

* **[`framework`](./framework)**: Olares システムサービスが含まれています。

|

||||

* **[`infrastructure`](./infrastructure)**: コンピューティング、ストレージ、ネットワーキング、GPU などのインフラストラクチャコンポーネントに関連するコードが含まれています。

|

||||

* **[`platform`](./platform)**: データベースやメッセージキューなどのクラウドネイティブコンポーネントのコードが含まれています。

|

||||

* **`vendor`**: サードパーティのハードウェアベンダーからのコードが含まれています。

|

||||

|

||||

## Olaresへの貢献

|

||||

|

||||

@@ -1,26 +0,0 @@

|

||||

apiVersion: v2
name: appstore
description: A Helm chart for Kubernetes
maintainers:
  - name: bytetrade

# A chart can be either an 'application' or a 'library' chart.
#
# Application charts are a collection of templates that can be packaged into versioned archives
# to be deployed.
#
# Library charts provide useful utilities or functions for the chart developer. They're included as
# a dependency of application charts to inject those utilities and functions into the rendering
# pipeline. Library charts do not define any templates and therefore cannot be deployed.
type: application

# This is the chart version. This version number should be incremented each time you make changes
# to the chart and its templates, including the app version.
# Versions are expected to follow Semantic Versioning (https://semver.org/)
version: 0.0.1

# This is the version number of the application being deployed. This version number should be
# incremented each time you make changes to the application. Versions are not expected to
# follow Semantic Versioning. They should reflect the version the application is using.
# It is recommended to use it with quotes.
appVersion: "1.16.0"

@@ -1,62 +0,0 @@

|

||||

{{/*
Expand the name of the chart.
*/}}
{{- define "appstore.name" -}}
{{- default .Chart.Name .Values.nameOverride | trunc 63 | trimSuffix "-" }}
{{- end }}

{{/*
Create a default fully qualified app name.
We truncate at 63 chars because some Kubernetes name fields are limited to this (by the DNS naming spec).
If release name contains chart name it will be used as a full name.
*/}}
{{- define "appstore.fullname" -}}
{{- if .Values.fullnameOverride }}
{{- .Values.fullnameOverride | trunc 63 | trimSuffix "-" }}
{{- else }}
{{- $name := default .Chart.Name .Values.nameOverride }}
{{- if contains $name .Release.Name }}
{{- .Release.Name | trunc 63 | trimSuffix "-" }}
{{- else }}
{{- printf "%s-%s" .Release.Name $name | trunc 63 | trimSuffix "-" }}
{{- end }}
{{- end }}
{{- end }}

{{/*
Create chart name and version as used by the chart label.
*/}}
{{- define "appstore.chart" -}}
{{- printf "%s-%s" .Chart.Name .Chart.Version | replace "+" "_" | trunc 63 | trimSuffix "-" }}
{{- end }}

{{/*
Common labels
*/}}
{{- define "appstore.labels" -}}
helm.sh/chart: {{ include "appstore.chart" . }}
{{ include "appstore.selectorLabels" . }}
{{- if .Chart.AppVersion }}
app.kubernetes.io/version: {{ .Chart.AppVersion | quote }}
{{- end }}
app.kubernetes.io/managed-by: {{ .Release.Service }}
{{- end }}

{{/*
Selector labels
*/}}
{{- define "appstore.selectorLabels" -}}
app.kubernetes.io/name: {{ include "appstore.name" . }}
app.kubernetes.io/instance: {{ .Release.Name }}
{{- end }}

{{/*
Create the name of the service account to use
*/}}
{{- define "appstore.serviceAccountName" -}}
{{- if .Values.serviceAccount.create }}
{{- default (include "appstore.fullname" .) .Values.serviceAccount.name }}
{{- else }}
{{- default "default" .Values.serviceAccount.name }}
{{- end }}
{{- end }}
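The naming rules in the `appstore.fullname` helper above can be hard to follow in template syntax. The Python sketch below mirrors its branches for illustration only; the function names `trunc_trim` and `fullname` are hypothetical and not part of the chart, and the sketch assumes ASCII names, so slicing by character matches the template's `trunc 63`.

```python
MAX_NAME_LEN = 63  # limit cited in the helper's comment (DNS naming spec)


def trunc_trim(value: str, limit: int = MAX_NAME_LEN) -> str:
    """Mimic `trunc 63 | trimSuffix "-"`: cut to `limit` chars, then drop one trailing hyphen."""
    value = value[:limit]
    return value[:-1] if value.endswith("-") else value


def fullname(release_name: str, chart_name: str,
             name_override: str = "", fullname_override: str = "") -> str:
    """Mirror the branches of the `appstore.fullname` template helper."""
    if fullname_override:
        return trunc_trim(fullname_override)
    # `default .Chart.Name .Values.nameOverride`: the override wins when non-empty.
    name = name_override or chart_name
    # `contains $name .Release.Name`: reuse the release name if it already includes the name.
    if name in release_name:
        return trunc_trim(release_name)
    return trunc_trim(f"{release_name}-{name}")
```

For example, under these assumptions a release named `prod` with chart name `appstore` yields `prod-appstore`, while a release already named `my-appstore` is used unchanged.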

@@ -1,291 +0,0 @@

|

||||

{{- $market_secret := (lookup "v1" "Secret" .Release.Namespace "market-secrets") -}}

{{- $redis_password := "" -}}
{{ if $market_secret -}}
{{ $redis_password = (index $market_secret "data" "redis-passwords") }}
{{ else -}}
{{ $redis_password = randAlphaNum 16 | b64enc }}
{{- end -}}

apiVersion: v1
kind: Secret
metadata:
  name: market-secrets
  namespace: {{ .Release.Namespace }}
type: Opaque
data:
  redis-passwords: {{ $redis_password }}
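The template header above follows a reuse-or-generate pattern: if the `market-secrets` Secret is found, its stored value is kept, otherwise a new random password is created and base64-encoded. A minimal Python sketch of that decision follows; the function name `resolve_redis_password` and the plain-dict representation of the Secret are assumptions made for illustration, not part of the chart.

```python
import base64
import secrets
import string
from typing import Optional

ALPHANUM = string.ascii_letters + string.digits


def resolve_redis_password(existing_secret: Optional[dict]) -> str:
    """Return the base64 string to store under data["redis-passwords"]."""
    if existing_secret:
        # Secret already exists: keep its value (already base64 in `data`).
        return existing_secret["data"]["redis-passwords"]
    # No Secret found: generate 16 random alphanumeric characters and encode
    # them, mirroring `randAlphaNum 16 | b64enc`.
    raw = "".join(secrets.choice(ALPHANUM) for _ in range(16))
    return base64.b64encode(raw.encode("ascii")).decode("ascii")
```

Reusing the stored value means the password stays stable across upgrades, so clients that already hold it are not locked out by a re-render.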

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: market-deployment
  namespace: {{ .Release.Namespace }}
  labels:
    app: appstore
    applications.app.bytetrade.io/author: bytetrade.io
spec:
  replicas: 1
  selector:
    matchLabels:
      app: appstore
  template:
    metadata:
      labels:
        app: appstore
        io.bytetrade.app: "true"
      annotations:
        instrumentation.opentelemetry.io/inject-go: "olares-instrumentation"
        instrumentation.opentelemetry.io/go-container-names: "appstore-backend"
        instrumentation.opentelemetry.io/otel-go-auto-target-exe: "/opt/app/market"
    spec:
      priorityClassName: "system-cluster-critical"
      initContainers:
        - args:
            - -it
            - authelia-backend.os-system:9091
          image: owncloudci/wait-for:latest
          imagePullPolicy: IfNotPresent
          name: check-auth
        - name: terminus-sidecar-init
          image: openservicemesh/init:v1.2.3
          imagePullPolicy: IfNotPresent
          securityContext:
            privileged: true
            capabilities:
              add:
                - NET_ADMIN
            runAsNonRoot: false
            runAsUser: 0
          command:
            - /bin/sh
            - -c
            - |
              iptables-restore --noflush <<EOF
              # sidecar interception rules
              *nat
              :PROXY_IN_REDIRECT - [0:0]
              :PROXY_INBOUND - [0:0]
              -A PROXY_IN_REDIRECT -p tcp -j REDIRECT --to-port 15003
              -A PROXY_INBOUND -p tcp --dport 15000 -j RETURN
              -A PROXY_INBOUND -p tcp -j PROXY_IN_REDIRECT
              -A PREROUTING -p tcp -j PROXY_INBOUND
              COMMIT
              EOF

          env:
            - name: POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP

      containers:
        - name: appstore-backend
          image: beclab/market-backend:v0.3.12
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 81
          env:
            - name: OS_SYSTEM_SERVER
              value: system-server.user-system-{{ .Values.bfl.username }}
            - name: OS_APP_SECRET
              value: '{{ .Values.os.appstore.appSecret }}'
            - name: OS_APP_KEY
              value: {{ .Values.os.appstore.appKey }}
            - name: APP_SOTRE_SERVICE_SERVICE_HOST
              value: appstore-server-prod.bttcdn.com
            - name: MARKET_PROVIDER
              value: '{{ .Values.os.appstore.marketProvider }}'
            - name: APP_SOTRE_SERVICE_SERVICE_PORT
              value: '443'
            - name: APP_SERVICE_SERVICE_HOST
              value: app-service.os-system
            - name: APP_SERVICE_SERVICE_PORT
              value: '6755'
            - name: REPO_URL_PORT
              value: "82"
            - name: REDIS_ADDRESS
              value: 'redis-cluster-proxy.user-system-{{ .Values.bfl.username }}:6379'
            - name: REDIS_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: market-secrets
                  key: redis-passwords
            - name: REDIS_DB_NUMBER
              value: '0'
            - name: REPO_URL_HOST
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP

          volumeMounts:
            - name: opt-data
              mountPath: /opt/app/data
        - name: terminus-envoy-sidecar
          image: bytetrade/envoy:v1.25.11
          imagePullPolicy: IfNotPresent
          securityContext:
            allowPrivilegeEscalation: false
            runAsUser: 1000
          ports:
            - name: proxy-admin
              containerPort: 15000
            - name: proxy-inbound
              containerPort: 15003
          volumeMounts:
            - name: terminus-sidecar-config
              readOnly: true
              mountPath: /etc/envoy/envoy.yaml
              subPath: envoy.yaml
          command:
            - /usr/local/bin/envoy
            - --log-level
            - debug
            - -c
            - /etc/envoy/envoy.yaml
          env:
            - name: POD_UID
              valueFrom:
                fieldRef:
                  fieldPath: metadata.uid
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: POD_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
        - name: terminus-ws-sidecar
          image: 'beclab/ws-gateway:v1.0.5'
          command:
            - /ws-gateway
          env:
            - name: WS_PORT
              value: '81'
            - name: WS_URL
              value: /app-store/v1/websocket/message
          resources: { }
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          imagePullPolicy: IfNotPresent

      volumes:
        - name: terminus-sidecar-config
          configMap:
            name: sidecar-ws-configs
            items:
              - key: envoy.yaml
                path: envoy.yaml
        - name: opt-data
          hostPath:
            path: '{{ .Values.userspace.appData}}/appstore/data'
            type: DirectoryOrCreate
        - name: app
          emptyDir: {}
        - name: nginx-confd
          emptyDir: {}
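The `iptables-restore` rules loaded by the `terminus-sidecar-init` container in this Deployment decide which inbound traffic reaches the Envoy sidecar. As a rough reading aid, the sketch below models that decision in Python. It is a simplified illustration, not how the kernel evaluates rules: the function name `inbound_destination_port` is hypothetical, and it ignores connection tracking and any rules defined outside this manifest.

```python
PROXY_ADMIN_PORT = 15000    # `proxy-admin` port of the Envoy sidecar
PROXY_INBOUND_PORT = 15003  # `proxy-inbound` port of the Envoy sidecar


def inbound_destination_port(protocol: str, dport: int) -> int:
    """Return the port an inbound packet is delivered to under the nat rules."""
    if protocol != "tcp":
        # Every rule matches `-p tcp`, so other protocols pass through untouched.
        return dport
    if dport == PROXY_ADMIN_PORT:
        # PROXY_INBOUND: `--dport 15000 -j RETURN` exempts the admin port.
        return dport
    # PROXY_IN_REDIRECT: all remaining TCP traffic is redirected to Envoy.
    return PROXY_INBOUND_PORT
```

Read this way, a request to the backend's port 81 first arrives at Envoy on 15003, while the Envoy admin port stays directly reachable.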

---
apiVersion: v1
kind: Service
metadata:
  name: appstore-service
  namespace: {{ .Release.Namespace }}
spec:
  selector:
    app: appstore
  type: ClusterIP
  ports:
    - protocol: TCP
      name: appstore-backend
      port: 81
      targetPort: 81

---
apiVersion: sys.bytetrade.io/v1alpha1
kind: ApplicationPermission
metadata:
  name: appstore
  namespace: user-system-{{ .Values.bfl.username }}
spec:
  app: appstore
  appid: appstore
  key: {{ .Values.os.appstore.appKey }}
  secret: {{ .Values.os.appstore.appSecret }}
  permissions:
    - dataType: event
      group: message-disptahcer.system-server
      ops:
        - Create
      version: v1
    - dataType: app
      group: service.bfl
      ops:
        - UserApps
      version: v1
status:
  state: active

---
apiVersion: sys.bytetrade.io/v1alpha1
kind: ProviderRegistry
metadata:
  name: appstore-backend-provider
  namespace: user-system-{{ .Values.bfl.username }}
spec:
  dataType: app
  deployment: market
  description: app store provider