Mirror of https://github.com/goauthentik/authentik, synced 2026-04-25 17:15:26 +02:00

Compare commits

168 Commits

developer-

...

version-20

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

49b46b5338 | ||

|

|

cfcdc70542 | ||

|

|

471f0d65e0 | ||

|

|

3c0731ab6d | ||

|

|

bce6ba2afa | ||

|

|

1b490c1b5a | ||

|

|

9a038aa0fe | ||

|

|

c80112e5f8 | ||

|

|

65ae736fe2 | ||

|

|

271e7eae1c | ||

|

|

8bf66b8987 | ||

|

|

6f9369283c | ||

|

|

859ef3a722 | ||

|

|

b47ed8894c | ||

|

|

8c1f08fecb | ||

|

|

cc676793b0 | ||

|

|

76815cd5b9 | ||

|

|

57a378bbd0 | ||

|

|

f542a0d415 | ||

|

|

f69bbbe85e | ||

|

|

0845e23337 | ||

|

|

de6d2a02b2 | ||

|

|

73be5321a9 | ||

|

|

ff8ad31a6b | ||

|

|

ad2cedac98 | ||

|

|

7a0bd0d0c1 | ||

|

|

269551cf4d | ||

|

|

23a6ab915b | ||

|

|

0c77e5c33e | ||

|

|

93aeae5405 | ||

|

|

cd3ff47221 | ||

|

|

a370a54db8 | ||

|

|

1d0e45abe8 | ||

|

|

a501b627eb | ||

|

|

78a4c08fc8 | ||

|

|

8d3a289d12 | ||

|

|

99e92bf998 | ||

|

|

1df7b22d29 | ||

|

|

af484738f6 | ||

|

|

19ba3c8453 | ||

|

|

9d1b384baa | ||

|

|

8a702b148f | ||

|

|

2db42a3a04 | ||

|

|

4403baaa28 | ||

|

|

8030d4d734 | ||

|

|

4b7a78453c | ||

|

|

b9746106a0 | ||

|

|

faa47670ef | ||

|

|

add5f4e0cb | ||

|

|

7636c038d9 | ||

|

|

557f781f5c | ||

|

|

1997011328 | ||

|

|

dfdd5ebc8c | ||

|

|

42977caa6a | ||

|

|

bce46c880d | ||

|

|

9c987c5694 | ||

|

|

f33e50c28c | ||

|

|

280d6febf7 | ||

|

|

3a32918ceb | ||

|

|

723bd4bbbc | ||

|

|

7b130e1832 | ||

|

|

3aa969d64e | ||

|

|

04688c86b3 | ||

|

|

4f8dbb5efb | ||

|

|

3b382199eb | ||

|

|

db50991e9c | ||

|

|

88036ebe14 | ||

|

|

680feaefa1 | ||

|

|

8676cd3a43 | ||

|

|

53e0f6b734 | ||

|

|

eaee475662 | ||

|

|

19b672b3bc | ||

|

|

bf0a31ce86 | ||

|

|

28ff561400 | ||

|

|

ff2472a551 | ||

|

|

dac302e8be | ||

|

|

930a6f7c6f | ||

|

|

b661b0bb39 | ||

|

|

5203713ca0 | ||

|

|

94794b106e | ||

|

|

eb8c21cf04 | ||

|

|

8a501377f2 | ||

|

|

5c67a0fecd | ||

|

|

145e6a3a4f | ||

|

|

0219ed73f5 | ||

|

|

88ccb7857b | ||

|

|

e33d6becba | ||

|

|

41417affc0 | ||

|

|

1b4a6c3f6d | ||

|

|

aa98edd661 | ||

|

|

cb70331c82 | ||

|

|

31e105b190 | ||

|

|

08d2615f71 | ||

|

|

bb9e8b1c42 | ||

|

|

6b8d7376a6 | ||

|

|

4f680d8c06 | ||

|

|

3eeb741975 | ||

|

|

39cb638132 | ||

|

|

be8f5d21cb | ||

|

|

acf18836e8 | ||

|

|

d688621de4 | ||

|

|

5173d09191 | ||

|

|

45bab4d32f | ||

|

|

f383e54c72 | ||

|

|

ab6b9b27cc | ||

|

|

db3fb0bf2e | ||

|

|

5859e6a5e5 | ||

|

|

1d3271fec7 | ||

|

|

673c8ef62c | ||

|

|

4614ae320f | ||

|

|

10b103c0bf | ||

|

|

361e64a8a1 | ||

|

|

e56081b863 | ||

|

|

01a44b281b | ||

|

|

7a6631c6e8 | ||

|

|

ada973dd44 | ||

|

|

3a7e962bde | ||

|

|

ae297e2f60 | ||

|

|

2bc2b6bd41 | ||

|

|

8199371172 | ||

|

|

dac1879de5 | ||

|

|

dd7c6b29d9 | ||

|

|

41b7e05f59 | ||

|

|

c5f5714e02 | ||

|

|

d20d8322af | ||

|

|

288f5d5015 | ||

|

|

a640eb9180 | ||

|

|

7d48baab3e | ||

|

|

b1cd6d34fc | ||

|

|

f14d033cef | ||

|

|

ec75e161e2 | ||

|

|

2e8fb8f2c6 | ||

|

|

362bf22139 | ||

|

|

890da9b287 | ||

|

|

7b8dadf945 | ||

|

|

8f70dbb963 | ||

|

|

15505f5caf | ||

|

|

b2d770c0a4 | ||

|

|

f7e25fff1a | ||

|

|

688b3a9f8b | ||

|

|

8f48e18854 | ||

|

|

306f75be59 | ||

|

|

982c3cf4dc | ||

|

|

156cda6cb6 | ||

|

|

9f5125cf6b | ||

|

|

a84411363e | ||

|

|

9f3bb0210b | ||

|

|

d1271502ef | ||

|

|

d1065b2d49 | ||

|

|

aa19227e30 | ||

|

|

d7a2861bbe | ||

|

|

5aae9c9afa | ||

|

|

93cb48c928 | ||

|

|

55f7f93a24 | ||

|

|

5a608a4235 | ||

|

|

77d023758f | ||

|

|

f9edafd374 | ||

|

|

d94219eb0e | ||

|

|

0871aa2cf3 | ||

|

|

e0fe99d0b8 | ||

|

|

c1acf53585 | ||

|

|

baf4eed0d9 | ||

|

|

60e1192a7a | ||

|

|

2608e02d6e | ||

|

|

0a5928fbcb | ||

|

|

7b99b02b4a | ||

|

|

dfeadc9ebe | ||

|

|

7ff64fbd09 |

@@ -1,10 +1,11 @@

|

||||

"""Helper script to get the actual branch name, docker safe"""

|

||||

|

||||

import os

|

||||

from importlib.metadata import version as package_version

|

||||

from json import dumps

|

||||

from time import time

|

||||

|

||||

from authentik import authentik_version

|

||||

|

||||

# Decide if we should push the image or not

|

||||

should_push = True

|

||||

if len(os.environ.get("DOCKER_USERNAME", "")) < 1:

|

||||

@@ -28,7 +29,7 @@ is_release = "dev" not in image_names[0]

|

||||

sha = os.environ["GITHUB_SHA"] if not is_pull_request else os.getenv("PR_HEAD_SHA")

|

||||

|

||||

# 2042.1.0 or 2042.1.0-rc1

|

||||

version = package_version("authentik")

|

||||

version = authentik_version()

|

||||

# 2042.1

|

||||

version_family = ".".join(version.split("-", 1)[0].split(".")[:-1])

|

||||

prerelease = "-" in version
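Review note: the two derived values at the end of this hunk can be checked in isolation. The sketch below reuses the same two expressions on sample version strings; the function name `describe_version` and the sample inputs are illustrative assumptions and are not part of the script.

```python
def describe_version(version: str) -> tuple[str, bool]:
    """Return (version_family, prerelease) for a version such as 2042.1.0-rc1."""
    # Drop any "-rc1" style suffix, then drop the last dotted component.
    version_family = ".".join(version.split("-", 1)[0].split(".")[:-1])
    # A hyphen anywhere in the version marks it as a pre-release.
    prerelease = "-" in version
    return version_family, prerelease


if __name__ == "__main__":
    for sample in ("2042.1.0", "2042.1.0-rc1"):
        print(sample, describe_version(sample))
```

Note that the pre-release check relies on the version string carrying a hyphen, so it only holds if the value returned by the version lookup keeps the `-rc1` form shown in the comment.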

|

||||

|

||||

@@ -49,7 +49,7 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ${{ inputs.image_name }}

|

||||

image-arch: ${{ inputs.image_arch }}

|

||||

@@ -58,8 +58,8 @@ jobs:

|

||||

if: ${{ inputs.registry_dockerhub }}

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_PASSWORD }}

|

||||

username: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_CORP_PASSWORD }}

|

||||

- name: Login to GitHub Container Registry

|

||||

if: ${{ inputs.registry_ghcr }}

|

||||

uses: docker/login-action@v3

|

||||

@@ -67,14 +67,20 @@ jobs:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: make empty clients

|

||||

if: ${{ inputs.release }}

|

||||

- name: Setup node

|

||||

uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

cache-dependency-path: web/package-lock.json

|

||||

- name: Setup go

|

||||

uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- name: Generate API Clients

|

||||

run: |

|

||||

mkdir -p ./gen-ts-api

|

||||

mkdir -p ./gen-go-api

|

||||

- name: generate ts client

|

||||

if: ${{ !inputs.release }}

|

||||

run: make gen-client-ts

|

||||

make gen-client-ts

|

||||

make gen-client-go

|

||||

- name: Build Docker Image

|

||||

uses: docker/build-push-action@v6

|

||||

id: push

|

||||

|

||||

@@ -54,7 +54,7 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ${{ inputs.image_name }}

|

||||

merge-server:

|

||||

@@ -74,15 +74,15 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ${{ inputs.image_name }}

|

||||

- name: Login to Docker Hub

|

||||

if: ${{ inputs.registry_dockerhub }}

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_PASSWORD }}

|

||||

username: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_CORP_PASSWORD }}

|

||||

- name: Login to GitHub Container Registry

|

||||

if: ${{ inputs.registry_ghcr }}

|

||||

uses: docker/login-action@v3

|

||||

|

||||

1

.github/workflows/api-ts-publish.yml

vendored

@@ -10,7 +10,6 @@ on:

|

||||

|

||||

jobs:

|

||||

build:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: generate_token

|

||||

|

||||

1

.github/workflows/ci-docs-source.yml

vendored

@@ -13,7 +13,6 @@ env:

|

||||

|

||||

jobs:

|

||||

publish-source-docs:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 120

|

||||

steps:

|

||||

|

||||

4

.github/workflows/ci-docs.yml

vendored

@@ -61,7 +61,6 @@ jobs:

|

||||

working-directory: website/

|

||||

run: npm run build -w integrations

|

||||

build-container:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

# Needed to upload container images to ghcr.io

|

||||

@@ -81,7 +80,7 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ghcr.io/goauthentik/dev-docs

|

||||

- name: Login to Container Registry

|

||||

@@ -121,4 +120,3 @@ jobs:

|

||||

- uses: re-actors/alls-green@release/v1

|

||||

with:

|

||||

jobs: ${{ toJSON(needs) }}

|

||||

allowed-skips: ${{ github.repository == 'goauthentik/authentik-internal' && 'build-container' || '[]' }}

|

||||

|

||||

1

.github/workflows/ci-main-daily.yml

vendored

@@ -9,7 +9,6 @@ on:

|

||||

|

||||

jobs:

|

||||

test-container:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

fail-fast: false

|

||||

|

||||

12

.github/workflows/ci-main.yml

vendored

@@ -80,7 +80,15 @@ jobs:

|

||||

cp authentik/lib/default.yml local.env.yml

|

||||

cp -R .github ..

|

||||

cp -R scripts ..

|

||||

git checkout $(git tag --sort=version:refname | grep '^version/' | grep -vE -- '-rc[0-9]+$' | tail -n1)

|

||||

# Previous stable tag

|

||||

prev_stable=$(git tag --sort=version:refname | grep '^version/' | grep -vE -- '-rc[0-9]+$' | tail -n1)

|

||||

# Current version family based on

|

||||

current_version_family=$(python -c "from authentik import VERSION; print(VERSION)" | grep -vE -- 'rc[0-9]+$')

|

||||

if [[ -n $current_version_family ]]; then

|

||||

prev_stable=$current_version_family

|

||||

fi

|

||||

echo "::notice::Checking out ${prev_stable} as stable version..."

|

||||

git checkout "${prev_stable}"

|

||||

rm -rf .github/ scripts/

|

||||

mv ../.github ../scripts .

|

||||

- name: Setup authentik env (stable)
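Review note: the selection logic in this hunk (latest non-rc `version/` tag, overridden by the current version when that is not a release candidate) can be sketched in Python as follows. This is only a model of the shell pipeline under stated assumptions: `pick_stable_ref` is a hypothetical name, the tag list is assumed to be already sorted the way `git tag --sort=version:refname` prints it, and the sample values in the usage lines are invented.

```python
import re

# Same patterns as the two grep -vE filters in the workflow step.
TAG_RC = re.compile(r"-rc[0-9]+$")
VERSION_RC = re.compile(r"rc[0-9]+$")


def pick_stable_ref(sorted_tags: list[str], current_version: str) -> str:
    """Return the ref the workflow would check out as the stable version."""
    # Keep tags under version/ that are not release candidates.
    stable_tags = [
        tag
        for tag in sorted_tags
        if tag.startswith("version/") and not TAG_RC.search(tag)
    ]
    # tail -n1: the last entry of the sorted list, or empty if none matched.
    prev_stable = stable_tags[-1] if stable_tags else ""
    # A non-empty, non-rc current version replaces the tag-based choice.
    if current_version and not VERSION_RC.search(current_version):
        prev_stable = current_version
    return prev_stable


if __name__ == "__main__":
    tags = ["version/2042.1.0", "version/2042.2.0-rc1"]
    print(pick_stable_ref(tags, "2042.2.0rc1"))
    print(pick_stable_ref(tags, "2042.2.0"))
```

One thing the model makes visible: when the override applies, the result is a bare version rather than a `version/...` tag name, so the checkout only succeeds if a ref with that name exists.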

|

||||

@@ -278,7 +286,7 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ghcr.io/goauthentik/dev-server

|

||||

- name: Comment on PR

|

||||

|

||||

3

.github/workflows/ci-outpost.yml

vendored

@@ -59,7 +59,6 @@ jobs:

|

||||

with:

|

||||

jobs: ${{ toJSON(needs) }}

|

||||

build-container:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

timeout-minutes: 120

|

||||

needs:

|

||||

- ci-outpost-mark

|

||||

@@ -90,7 +89,7 @@ jobs:

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ghcr.io/goauthentik/dev-${{ matrix.type }}

|

||||

- name: Login to Container Registry

|

||||

|

||||

@@ -13,7 +13,6 @@ env:

|

||||

|

||||

jobs:

|

||||

build:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: generate_token

|

||||

|

||||

15

.github/workflows/gh-ghcr-retention.yml

vendored

@@ -5,10 +5,13 @@ on:

|

||||

# schedule:

|

||||

# - cron: "0 0 * * *" # every day at midnight

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

dry-run:

|

||||

type: boolean

|

||||

description: Enable dry-run mode

|

||||

|

||||

jobs:

|

||||

clean-ghcr:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

name: Delete old unused container images

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

@@ -18,12 +21,12 @@ jobs:

|

||||

app_id: ${{ secrets.GH_APP_ID }}

|

||||

private_key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- name: Delete 'dev' containers older than a week

|

||||

uses: snok/container-retention-policy@v2

|

||||

uses: snok/container-retention-policy@3b0972b2276b171b212f8c4efbca59ebba26eceb # v3.0.1

|

||||

with:

|

||||

image-names: dev-server,dev-ldap,dev-proxy

|

||||

image-tags: "!gh-next,!gh-main"

|

||||

cut-off: One week ago UTC

|

||||

account-type: org

|

||||

org-name: goauthentik

|

||||

untagged-only: false

|

||||

account: goauthentik

|

||||

tag-selection: untagged

|

||||

token: ${{ steps.generate_token.outputs.token }}

|

||||

skip-tags: gh-next,gh-main

|

||||

dry-run: ${{ inputs.dry-run }}

|

||||

|

||||

1

.github/workflows/packages-npm-publish.yml

vendored

@@ -14,7 +14,6 @@ on:

|

||||

|

||||

jobs:

|

||||

publish:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

fail-fast: false

|

||||

|

||||

168

.github/workflows/release-bump-version.yml

vendored

@@ -1,168 +0,0 @@

|

||||

---

|

||||

name: Release - Bump version

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

version:

|

||||

description: Version

|

||||

required: true

|

||||

type: string

|

||||

release_reason:

|

||||

description: Release reason

|

||||

required: true

|

||||

type: choice

|

||||

options:

|

||||

- bugfix

|

||||

- feature

|

||||

- security

|

||||

- other

|

||||

- prerelease

|

||||

|

||||

env:

|

||||

POSTGRES_DB: authentik

|

||||

POSTGRES_USER: authentik

|

||||

POSTGRES_PASSWORD: "EK-5jnKfjrGRm<77"

|

||||

|

||||

jobs:

|

||||

check-inputs:

|

||||

name: Check inputs validity

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: check

|

||||

run: |

|

||||

echo "${{ inputs.version }}" | grep -E "^[0-9]{4}\.[0-9]{1,2}\.[0-9]+(-rc[0-9]+)?$"

|

||||

echo "major_version=${{ inputs.version }}" | grep -oE "^major_version=[0-9]{4}\.[0-9]{1,2}" >> "$GITHUB_OUTPUT"

|

||||

outputs:

|

||||

major_version: "${{ steps.check.outputs.major_version }}"

|

||||

bump-authentik:

|

||||

name: Bump authentik version

|

||||

needs:

|

||||

- check-inputs

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: app-token

|

||||

name: Generate app token

|

||||

uses: actions/create-github-app-token@v2

|

||||

with:

|

||||

app-id: ${{ secrets.GH_APP_ID }}

|

||||

private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- id: get-user-id

|

||||

name: Get GitHub app user ID

|

||||

run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"

|

||||

env:

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

ref: "version-${{ needs.check-inputs.outputs.major_version }}"

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

- name: Setup authentik env

|

||||

uses: ./.github/actions/setup

|

||||

- name: Run migrations

|

||||

run: make migrate

|

||||

- name: Bump version

|

||||

run: "make bump version=${{ inputs.version }}"

|

||||

- name: Commit and push

|

||||

run: |

|

||||

# ID from https://api.github.com/users/authentik-automation[bot]

|

||||

git config --global user.name '${{ steps.app-token.outputs.app-slug }}[bot]'

|

||||

git config --global user.email '${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com'

|

||||

git commit -a -m "release: ${{ inputs.version }}" --allow-empty

|

||||

git tag "version/${{ inputs.version }}" HEAD -m "version/${{ inputs.version }}"

|

||||

git push --follow-tags

|

||||

bump-helm:

|

||||

name: Bump Helm version

|

||||

if: ${{ inputs.release_reason != 'prerelease' }}

|

||||

needs:

|

||||

- bump-authentik

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: app-token

|

||||

name: Generate app token

|

||||

uses: actions/create-github-app-token@v2

|

||||

with:

|

||||

app-id: ${{ secrets.GH_APP_ID }}

|

||||

private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

repositories: helm

|

||||

- id: get-user-id

|

||||

name: Get GitHub app user ID

|

||||

run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"

|

||||

env:

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

repository: "${{ github.repository_owner }}/helm"

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

- name: Bump version

|

||||

run: |

|

||||

sed -i 's/^version: .*/version: ${{ inputs.version }}/' charts/authentik/Chart.yaml

|

||||

sed -i 's/^appVersion: .*/appVersion: ${{ inputs.version }}/' charts/authentik/Chart.yaml

|

||||

sed -i 's/upgrade to authentik .*/upgrade to authentik ${{ inputs.version }}/' charts/authentik/Chart.yaml

|

||||

sed -E -i 's/[0-9]{4}\.[0-9]{1,2}\.[0-9]+$/${{ inputs.version }}/' charts/authentik/Chart.yaml

|

||||

./scripts/helm-docs.sh

|

||||

- name: Create pull request

|

||||

uses: peter-evans/create-pull-request@v7

|

||||

with:

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

branch: bump-${{ inputs.version }}

|

||||

commit-message: "charts/authentik: bump to ${{ inputs.version }}"

|

||||

title: "charts/authentik: bump to ${{ inputs.version }}"

|

||||

body: "charts/authentik: bump to ${{ inputs.version }}"

|

||||

delete-branch: true

|

||||

signoff: true

|

||||

author: "${{ steps.app-token.outputs.app-slug }}[bot] <${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com>"

|

||||

bump-version:

|

||||

name: Bump version repository

|

||||

if: ${{ inputs.release_reason != 'prerelease' }}

|

||||

needs:

|

||||

- check-inputs

|

||||

- bump-authentik

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: app-token

|

||||

name: Generate app token

|

||||

uses: actions/create-github-app-token@v2

|

||||

with:

|

||||

app-id: ${{ secrets.GH_APP_ID }}

|

||||

private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

repositories: version

|

||||

- id: get-user-id

|

||||

name: Get GitHub app user ID

|

||||

run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"

|

||||

env:

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

repository: "${{ github.repository_owner }}/version"

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

- name: Bump feature version

|

||||

if: "${{ inputs.release_reason == 'feature' }}"

|

||||

run: |

|

||||

changelog_url="https://docs.goauthentik.io/docs/releases/${{ needs.check-inputs.outputs.major_version }}"

|

||||

jq \

|

||||

--arg version "${{ inputs.version }}" \

|

||||

--arg changelog "See ${changelog_url}" \

|

||||

--arg changelog_url "${changelog_url}" \

|

||||

'.stable.version = $version | .stable.changelog = $changelog | .stable.changelog_url = $changelog_url' version.json > version.new.json

|

||||

mv version.new.json version.json

|

||||

- name: Bump feature version

|

||||

if: "${{ inputs.release_reason != 'feature' }}"

|

||||

run: |

|

||||

changelog_url="https://docs.goauthentik.io/docs/releases/${{ needs.check-inputs.outputs.major_version }}#fixed-in-$(echo -n ${{ inputs.version}} | sed 's/\.//g')"

|

||||

jq \

|

||||

--arg version "${{ inputs.version }}" \

|

||||

--arg changelog "See ${changelog_url}" \

|

||||

--arg changelog_url "${changelog_url}" \

|

||||

'.stable.version = $version | .stable.changelog = $changelog | .stable.changelog_url = $changelog_url' version.json > version.new.json

|

||||

mv version.new.json version.json

|

||||

- name: Create pull request

|

||||

uses: peter-evans/create-pull-request@v7

|

||||

with:

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

branch: bump-${{ inputs.version }}

|

||||

commit-message: "version: bump to ${{ inputs.version }}"

|

||||

title: "version: bump to ${{ inputs.version }}"

|

||||

body: "version: bump to ${{ inputs.version }}"

|

||||

delete-branch: true

|

||||

signoff: true

|

||||

author: "${{ steps.app-token.outputs.app-slug }}[bot] <${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com>"

|

||||

1

.github/workflows/release-next-branch.yml

vendored

@@ -12,7 +12,6 @@ permissions:

|

||||

|

||||

jobs:

|

||||

update-next:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

environment: internal-production

|

||||

steps:

|

||||

|

||||

23

.github/workflows/release-publish.yml

vendored

@@ -10,6 +10,7 @@ jobs:

|

||||

uses: ./.github/workflows/_reusable-docker-build.yaml

|

||||

secrets: inherit

|

||||

permissions:

|

||||

contents: read

|

||||

# Needed to upload container images to ghcr.io

|

||||

packages: write

|

||||

# Needed for attestation

|

||||

@@ -23,6 +24,7 @@ jobs:

|

||||

build-docs:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

contents: read

|

||||

# Needed to upload container images to ghcr.io

|

||||

packages: write

|

||||

# Needed for attestation

|

||||

@@ -66,6 +68,7 @@ jobs:

|

||||

build-outpost:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

contents: read

|

||||

# Needed to upload container images to ghcr.io

|

||||

packages: write

|

||||

# Needed for attestation

|

||||

@@ -84,6 +87,11 @@ jobs:

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- uses: actions/setup-node@v5

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

cache-dependency-path: web/package-lock.json

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v3.6.0

|

||||

- name: Set up Docker Buildx

|

||||

@@ -95,10 +103,10 @@ jobs:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}

|

||||

with:

|

||||

image-name: ghcr.io/goauthentik/${{ matrix.type }},authentik/${{ matrix.type }}

|

||||

- name: make empty clients

|

||||

- name: Generate API Clients

|

||||

run: |

|

||||

mkdir -p ./gen-ts-api

|

||||

mkdir -p ./gen-go-api

|

||||

make gen-client-ts

|

||||

make gen-client-go

|

||||

- name: Docker Login Registry

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

@@ -152,10 +160,17 @@ jobs:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

cache-dependency-path: web/package-lock.json

|

||||

- name: Build web

|

||||

- name: Install web dependencies

|

||||

working-directory: web/

|

||||

run: |

|

||||

npm ci

|

||||

- name: Generate API Clients

|

||||

run: |

|

||||

make gen-client-ts

|

||||

make gen-client-go

|

||||

- name: Build web

|

||||

working-directory: web/

|

||||

run: |

|

||||

npm run build-proxy

|

||||

- name: Build outpost

|

||||

run: |

|

||||

|

||||

213

.github/workflows/release-tag.yml

vendored

@@ -1,39 +1,202 @@

|

||||

---

|

||||

name: Release - On tag

|

||||

name: Release - Tag new version

|

||||

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- "version/*"

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

version:

|

||||

description: Version

|

||||

required: true

|

||||

type: string

|

||||

release_reason:

|

||||

description: Release reason

|

||||

required: true

|

||||

type: choice

|

||||

options:

|

||||

- bugfix

|

||||

- feature

|

||||

- security

|

||||

- other

|

||||

- prerelease

|

||||

|

||||

env:

|

||||

POSTGRES_DB: authentik

|

||||

POSTGRES_USER: authentik

|

||||

POSTGRES_PASSWORD: "EK-5jnKfjrGRm<77"

|

||||

|

||||

jobs:

|

||||

build:

|

||||

name: Create Release from Tag

|

||||

check-inputs:

|

||||

name: Check inputs validity

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- name: Pre-release test

|

||||

- id: check

|

||||

run: |

|

||||

make test-docker

|

||||

- id: generate_token

|

||||

uses: tibdex/github-app-token@v2

|

||||

echo "${{ inputs.version }}" | grep -E '^[0-9]{4}\.(0?[1-9]|1[0-2])\.[0-9]+(-rc[0-9]+)?$'

|

||||

echo "major_version=${{ inputs.version }}" | grep -oE "^major_version=[0-9]{4}\.[0-9]{1,2}" >> "$GITHUB_OUTPUT"

|

||||

- id: changelog-url

|

||||

run: |

|

||||

if [ "${{ inputs.release_reason }}" = "feature" ] || [ "${{ inputs.release_reason }}" = "prerelease" ]; then

|

||||

changelog_url="https://docs.goauthentik.io/docs/releases/${{ steps.check.outputs.major_version }}"

|

||||

else

|

||||

changelog_url="https://docs.goauthentik.io/docs/releases/${{ steps.check.outputs.major_version }}#fixed-in-$(echo -n ${{ inputs.version }} | sed 's/\.//g')"

|

||||

fi

|

||||

echo "changelog_url=${changelog_url}" >> "$GITHUB_OUTPUT"

|

||||

outputs:

|

||||

major_version: "${{ steps.check.outputs.major_version }}"

|

||||

changelog_url: "${{ steps.changelog-url.outputs.changelog_url }}"
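Review note: the `check-inputs` job above validates the version, derives `major_version`, and builds `changelog_url`. A minimal Python sketch of the same rules, useful for trying inputs locally before dispatching the workflow, might look like this. The function name `check_inputs` and the returned dictionary shape are assumptions made for the example; the regular expressions and the URL prefix are copied from the job.

```python
import re

# Patterns copied from the two grep calls in the check step.
VERSION_RE = re.compile(r"[0-9]{4}\.(0?[1-9]|1[0-2])\.[0-9]+(-rc[0-9]+)?")
MAJOR_RE = re.compile(r"[0-9]{4}\.[0-9]{1,2}")
DOCS_BASE = "https://docs.goauthentik.io/docs/releases/"


def check_inputs(version: str, release_reason: str) -> dict[str, str]:
    """Validate a version and compute the two job outputs."""
    # fullmatch stands in for the ^...$ anchors used with grep -E.
    if not VERSION_RE.fullmatch(version):
        raise ValueError(f"invalid version: {version!r}")
    # Leading YYYY.M part, as extracted by grep -oE.
    major_version = MAJOR_RE.match(version).group(0)
    changelog_url = DOCS_BASE + major_version
    # Feature releases and prereleases link to the page itself; everything
    # else links to a "fixed-in" anchor with the dots removed.
    if release_reason not in ("feature", "prerelease"):
        changelog_url += "#fixed-in-" + version.replace(".", "")
    return {"major_version": major_version, "changelog_url": changelog_url}


if __name__ == "__main__":
    print(check_inputs("2042.1.3", "bugfix"))
    print(check_inputs("2042.2.0", "feature"))
```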

|

||||

test:

|

||||

name: Pre-release test

|

||||

runs-on: ubuntu-latest

|

||||

needs:

|

||||

- check-inputs

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

app_id: ${{ secrets.GH_APP_ID }}

|

||||

private_key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- name: prepare variables

|

||||

uses: ./.github/actions/docker-push-variables

|

||||

id: ev

|

||||

ref: "version-${{ needs.check-inputs.outputs.major_version }}"

|

||||

- name: Setup authentik env

|

||||

uses: ./.github/actions/setup

|

||||

- run: make test-docker

|

||||

bump-authentik:

|

||||

name: Bump authentik version

|

||||

needs:

|

||||

- check-inputs

|

||||

- test

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: app-token

|

||||

name: Generate app token

|

||||

uses: actions/create-github-app-token@v2

|

||||

with:

|

||||

app-id: ${{ secrets.GH_APP_ID }}

|

||||

private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- id: get-user-id

|

||||

name: Get GitHub app user ID

|

||||

run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"

|

||||

env:

|

||||

DOCKER_USERNAME: ${{ secrets.DOCKER_USERNAME }}

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

image-name: ghcr.io/goauthentik/server

|

||||

ref: "version-${{ needs.check-inputs.outputs.major_version }}"

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

- name: Setup authentik env

|

||||

uses: ./.github/actions/setup

|

||||

- name: Run migrations

|

||||

run: make migrate

|

||||

- name: Bump version

|

||||

run: "make bump version=${{ inputs.version }}"

|

||||

- name: Commit and push

|

||||

run: |

|

||||

# ID from https://api.github.com/users/authentik-automation[bot]

|

||||

git config --global user.name '${{ steps.app-token.outputs.app-slug }}[bot]'

|

||||

git config --global user.email '${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com'

|

||||

git pull

|

||||

git commit -a -m "release: ${{ inputs.version }}" --allow-empty

|

||||

git tag "version/${{ inputs.version }}" HEAD -m "version/${{ inputs.version }}"

|

||||

git push --follow-tags

|

||||

- name: Create Release

|

||||

id: create_release

|

||||

uses: actions/create-release@v1.1.4

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ steps.generate_token.outputs.token }}

|

||||

uses: softprops/action-gh-release@v2

|

||||

with:

|

||||

tag_name: ${{ github.ref }}

|

||||

release_name: Release ${{ steps.ev.outputs.version }}

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

tag_name: "version/${{ inputs.version }}"

|

||||

name: Release ${{ inputs.version }}

|

||||

draft: true

|

||||

prerelease: ${{ steps.ev.outputs.prerelease == 'true' }}

|

||||

prerelease: ${{ inputs.release_reason == 'prerelease' }}

|

||||

generate_release_notes: true

|

||||

body: |

|

||||

See ${{ needs.check-inputs.outputs.changelog_url }}

|

||||

bump-helm:

|

||||

name: Bump Helm version

|

||||

if: ${{ inputs.release_reason != 'prerelease' }}

|

||||

needs:

|

||||

- bump-authentik

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

      - id: app-token
        name: Generate app token
        uses: actions/create-github-app-token@v2
        with:
          app-id: ${{ secrets.GH_APP_ID }}
          private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}
          repositories: helm
      - id: get-user-id
        name: Get GitHub app user ID
        run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"
        env:
          GH_TOKEN: "${{ steps.app-token.outputs.token }}"
      - uses: actions/checkout@v5
        with:
          repository: "${{ github.repository_owner }}/helm"
          token: "${{ steps.app-token.outputs.token }}"
      - name: Bump version
        run: |
          sed -i 's/^version: .*/version: ${{ inputs.version }}/' charts/authentik/Chart.yaml
          sed -i 's/^appVersion: .*/appVersion: ${{ inputs.version }}/' charts/authentik/Chart.yaml
          sed -i 's/upgrade to authentik .*/upgrade to authentik ${{ inputs.version }}/' charts/authentik/Chart.yaml
          sed -E -i 's/[0-9]{4}\.[0-9]{1,2}\.[0-9]+$/${{ inputs.version }}/' charts/authentik/Chart.yaml
          ./scripts/helm-docs.sh
      - name: Create pull request
        uses: peter-evans/create-pull-request@v7
        with:
          token: "${{ steps.app-token.outputs.token }}"
          branch: bump-${{ inputs.version }}
          commit-message: "charts/authentik: bump to ${{ inputs.version }}"
          title: "charts/authentik: bump to ${{ inputs.version }}"
          body: "charts/authentik: bump to ${{ inputs.version }}"
          delete-branch: true
          signoff: true
          author: "${{ steps.app-token.outputs.app-slug }}[bot] <${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com>"
  bump-version:
    name: Bump version repository
    if: ${{ inputs.release_reason != 'prerelease' }}
    needs:
      - check-inputs
      - bump-authentik
    runs-on: ubuntu-latest
    steps:
      - id: app-token
        name: Generate app token
        uses: actions/create-github-app-token@v2
        with:
          app-id: ${{ secrets.GH_APP_ID }}
          private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}
          repositories: version
      - id: get-user-id
        name: Get GitHub app user ID
        run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"
        env:
          GH_TOKEN: "${{ steps.app-token.outputs.token }}"
      - uses: actions/checkout@v5
        with:
          repository: "${{ github.repository_owner }}/version"
          token: "${{ steps.app-token.outputs.token }}"
      - name: Bump version
        if: "${{ inputs.release_reason == 'feature' }}"
        run: |
          changelog_url="https://docs.goauthentik.io/docs/releases/${{ needs.check-inputs.outputs.major_version }}"
          jq \
            --arg version "${{ inputs.version }}" \
            --arg changelog "See ${changelog_url}" \
            --arg changelog_url "${changelog_url}" \
            '.stable.version = $version | .stable.changelog = $changelog | .stable.changelog_url = $changelog_url' version.json > version.new.json
          mv version.new.json version.json
      - name: Bump version
        if: "${{ inputs.release_reason != 'feature' }}"
        run: |
          changelog_url="https://docs.goauthentik.io/docs/releases/${{ needs.check-inputs.outputs.major_version }}#fixed-in-$(echo -n ${{ inputs.version}} | sed 's/\.//g')"
          jq \
            --arg version "${{ inputs.version }}" \
            --arg changelog "See ${changelog_url}" \
            --arg changelog_url "${changelog_url}" \
            '.stable.version = $version | .stable.changelog = $changelog | .stable.changelog_url = $changelog_url' version.json > version.new.json
          mv version.new.json version.json
      - name: Create pull request
        uses: peter-evans/create-pull-request@v7
        with:
          token: "${{ steps.app-token.outputs.token }}"
          branch: bump-${{ inputs.version }}
          commit-message: "version: bump to ${{ inputs.version }}"
          title: "version: bump to ${{ inputs.version }}"
          body: "version: bump to ${{ inputs.version }}"
          delete-branch: true
          signoff: true
          author: "${{ steps.app-token.outputs.app-slug }}[bot] <${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com>"

22

.github/workflows/repo-mirror-cleanup.yml

vendored

@@ -1,22 +0,0 @@

---
name: Repo - Cleanup internal mirror

on:
  workflow_dispatch:

jobs:
  to_internal:
    if: ${{ github.repository != 'goauthentik/authentik-internal' }}
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v5
        with:
          fetch-depth: 0
      - if: ${{ env.MIRROR_KEY != '' }}
        uses: BeryJu/repository-mirroring-action@5cf300935bc2e068f73ea69bcc411a8a997208eb
        with:
          target_repo_url: git@github.com:goauthentik/authentik-internal.git
          ssh_private_key: ${{ secrets.GH_MIRROR_KEY }}
          args: --tags --force --prune
        env:
          MIRROR_KEY: ${{ secrets.GH_MIRROR_KEY }}

21

.github/workflows/repo-mirror.yml

vendored

@@ -1,21 +0,0 @@

---
name: Repo - Mirror to internal

on: [push, delete]

jobs:
  to_internal:
    if: ${{ github.repository != 'goauthentik/authentik-internal' }}
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v5
        with:
          fetch-depth: 0
      - if: ${{ env.MIRROR_KEY != '' }}
        uses: BeryJu/repository-mirroring-action@5cf300935bc2e068f73ea69bcc411a8a997208eb
        with:
          target_repo_url: git@github.com:goauthentik/authentik-internal.git
          ssh_private_key: ${{ secrets.GH_MIRROR_KEY }}
          args: --tags --force
        env:
          MIRROR_KEY: ${{ secrets.GH_MIRROR_KEY }}

1

.github/workflows/repo-stale.yml

vendored

@@ -12,7 +12,6 @@ permissions:

jobs:
  stale:
    if: ${{ github.repository != 'goauthentik/authentik-internal' }}
    runs-on: ubuntu-latest
    steps:
      - id: generate_token

@@ -17,7 +17,6 @@ env:

jobs:
  compile:
    if: ${{ github.repository != 'goauthentik/authentik-internal' }}
    runs-on: ubuntu-latest
    steps:
      - id: generate_token

@@ -1 +0,0 @@

website/docs/developer-docs/index.md

4

CONTRIBUTING.md

Normal file

@@ -0,0 +1,4 @@

# Contributing to authentik

Thanks for your interest in contributing! Please see our [contributing guide](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github) for more information.

11

Dockerfile

@@ -26,7 +26,7 @@ RUN npm run build && \

    npm run build:sfe

# Stage 2: Build go proxy
FROM --platform=${BUILDPLATFORM} docker.io/library/golang:1.24-bookworm AS go-builder
FROM --platform=${BUILDPLATFORM} docker.io/library/golang:1.25-bookworm AS go-builder

ARG TARGETOS
ARG TARGETARCH

@@ -44,6 +44,7 @@ RUN --mount=type=cache,id=apt-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/v

RUN --mount=type=bind,target=/go/src/goauthentik.io/go.mod,src=./go.mod \
    --mount=type=bind,target=/go/src/goauthentik.io/go.sum,src=./go.sum \
    --mount=type=bind,target=/go/src/goauthentik.io/gen-go-api,src=./gen-go-api \
    --mount=type=cache,target=/go/pkg/mod \
    go mod download

@@ -57,6 +58,7 @@ COPY ./go.mod /go/src/goauthentik.io/go.mod

COPY ./go.sum /go/src/goauthentik.io/go.sum

RUN --mount=type=cache,sharing=locked,target=/go/pkg/mod \
    --mount=type=bind,target=/go/src/goauthentik.io/gen-go-api,src=./gen-go-api \
    --mount=type=cache,id=go-build-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/root/.cache/go-build \
    if [ "$TARGETARCH" = "arm64" ]; then export CC=aarch64-linux-gnu-gcc && export CC_FOR_TARGET=gcc-aarch64-linux-gnu; fi && \
    CGO_ENABLED=1 GOFIPS140=latest GOARM="${TARGETVARIANT#v}" \

@@ -119,7 +121,11 @@ RUN --mount=type=cache,id=apt-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/v

    libltdl-dev && \
    curl https://sh.rustup.rs -sSf | sh -s -- -y

ENV UV_NO_BINARY_PACKAGE="cryptography lxml python-kadmin-rs xmlsec"
ENV UV_NO_BINARY_PACKAGE="cryptography lxml python-kadmin-rs xmlsec" \
    # https://github.com/rust-lang/rustup/issues/2949
    # Fixes issues where the rust version in the build cache is older than latest
    # and rustup tries to update it, which fails
    RUSTUP_PERMIT_COPY_RENAME="true"

RUN --mount=type=bind,target=pyproject.toml,src=pyproject.toml \
    --mount=type=bind,target=uv.lock,src=uv.lock \

@@ -175,6 +181,7 @@ COPY ./lifecycle/ /lifecycle

COPY ./authentik/sources/kerberos/krb5.conf /etc/krb5.conf
COPY --from=go-builder /go/authentik /bin/authentik
COPY ./packages/ /ak-root/packages
RUN ln -s /ak-root/packages /packages
COPY --from=python-deps /ak-root/.venv /ak-root/.venv
COPY --from=node-builder /work/web/dist/ /web/dist/
COPY --from=node-builder /work/web/authentik/ /web/authentik/

15

Makefile

@@ -98,11 +98,11 @@ bump: ## Bump authentik version. Usage: make bump version=20xx.xx.xx

ifndef version
	$(error Usage: make bump version=20xx.xx.xx )
endif
	$(eval current_version := $(shell cat ${PWD}/internal/constants/VERSION))
	sed -i 's/^version = ".*"/version = "$(version)"/' pyproject.toml
	sed -i 's/^VERSION = ".*"/VERSION = "$(version)"/' authentik/__init__.py
	$(MAKE) gen-build gen-compose aws-cfn
	npm version --no-git-tag-version --allow-same-version $(version)
	cd ${PWD}/web && npm version --no-git-tag-version --allow-same-version $(version)
	sed -i "s/\"${current_version}\"/\"$(version)\"/" ${PWD}/package.json ${PWD}/package-lock.json ${PWD}/web/package.json ${PWD}/web/package-lock.json
	echo -n $(version) > ${PWD}/internal/constants/VERSION

#########################

@@ -144,12 +144,7 @@ gen-clean-ts: ## Remove generated API client for TypeScript

	rm -rf ${PWD}/web/node_modules/@goauthentik/api/

gen-clean-go: ## Remove generated API client for Go
	mkdir -p ${PWD}/${GEN_API_GO}
ifneq ($(wildcard ${PWD}/${GEN_API_GO}/.*),)
	make -C ${PWD}/${GEN_API_GO} clean
else
	rm -rf ${PWD}/${GEN_API_GO}
endif

gen-clean-py: ## Remove generated API client for Python
	rm -rf ${PWD}/${GEN_API_PY}/

@@ -187,13 +182,9 @@ gen-client-py: gen-clean-py ## Build and install the authentik API for Python

gen-client-go: gen-clean-go ## Build and install the authentik API for Golang
	mkdir -p ${PWD}/${GEN_API_GO}
ifeq ($(wildcard ${PWD}/${GEN_API_GO}/.*),)
	git clone --depth 1 https://github.com/goauthentik/client-go.git ${PWD}/${GEN_API_GO}
else
	cd ${PWD}/${GEN_API_GO} && git pull
endif
	cp ${PWD}/schema.yml ${PWD}/${GEN_API_GO}
	make -C ${PWD}/${GEN_API_GO} build
	make -C ${PWD}/${GEN_API_GO} build version=${NPM_VERSION}
	go mod edit -replace goauthentik.io/api/v3=./${GEN_API_GO}

gen-dev-config: ## Generate a local development config file

27

README.md

@@ -15,15 +15,16 @@

|

||||

|

||||

## What is authentik?

|

||||

|

||||

authentik is an open-source Identity Provider that emphasizes flexibility and versatility, with support for a wide set of protocols.

|

||||

authentik is an open-source Identity Provider (IdP) for modern SSO. It supports SAML, OAuth2/OIDC, LDAP, RADIUS, and more, designed for self-hosting from small labs to large production clusters.

|

||||

|

||||

Our [enterprise offer](https://goauthentik.io/pricing) can also be used as a self-hosted replacement for large-scale deployments of Okta/Auth0, Entra ID, Ping Identity, or other legacy IdPs for employees and B2B2C use.

|

||||

Our [enterprise offering](https://goauthentik.io/pricing) is available for organizations to securely replace existing IdPs such as Okta, Auth0, Entra ID, and Ping Identity for robust, large-scale identity management.

|

||||

|

||||

## Installation

|

||||

|

||||

For small/test setups it is recommended to use Docker Compose; refer to the [documentation](https://goauthentik.io/docs/installation/docker-compose/?utm_source=github).

|

||||

|

||||

For bigger setups, there is a Helm Chart [here](https://github.com/goauthentik/helm). This is documented [here](https://goauthentik.io/docs/installation/kubernetes/?utm_source=github).

|

||||

- Docker Compose: recommended for small/test setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/docker-compose/).

|

||||

- Kubernetes (Helm Chart): recommended for larger setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/kubernetes/) and the Helm chart [repository](https://github.com/goauthentik/helm).

|

||||

- AWS CloudFormation: deploy on AWS using our official templates. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/aws/).

|

||||

- DigitalOcean Marketplace: one-click deployment via the official Marketplace app. See the [app listing](https://marketplace.digitalocean.com/apps/authentik).

|

||||

|

||||

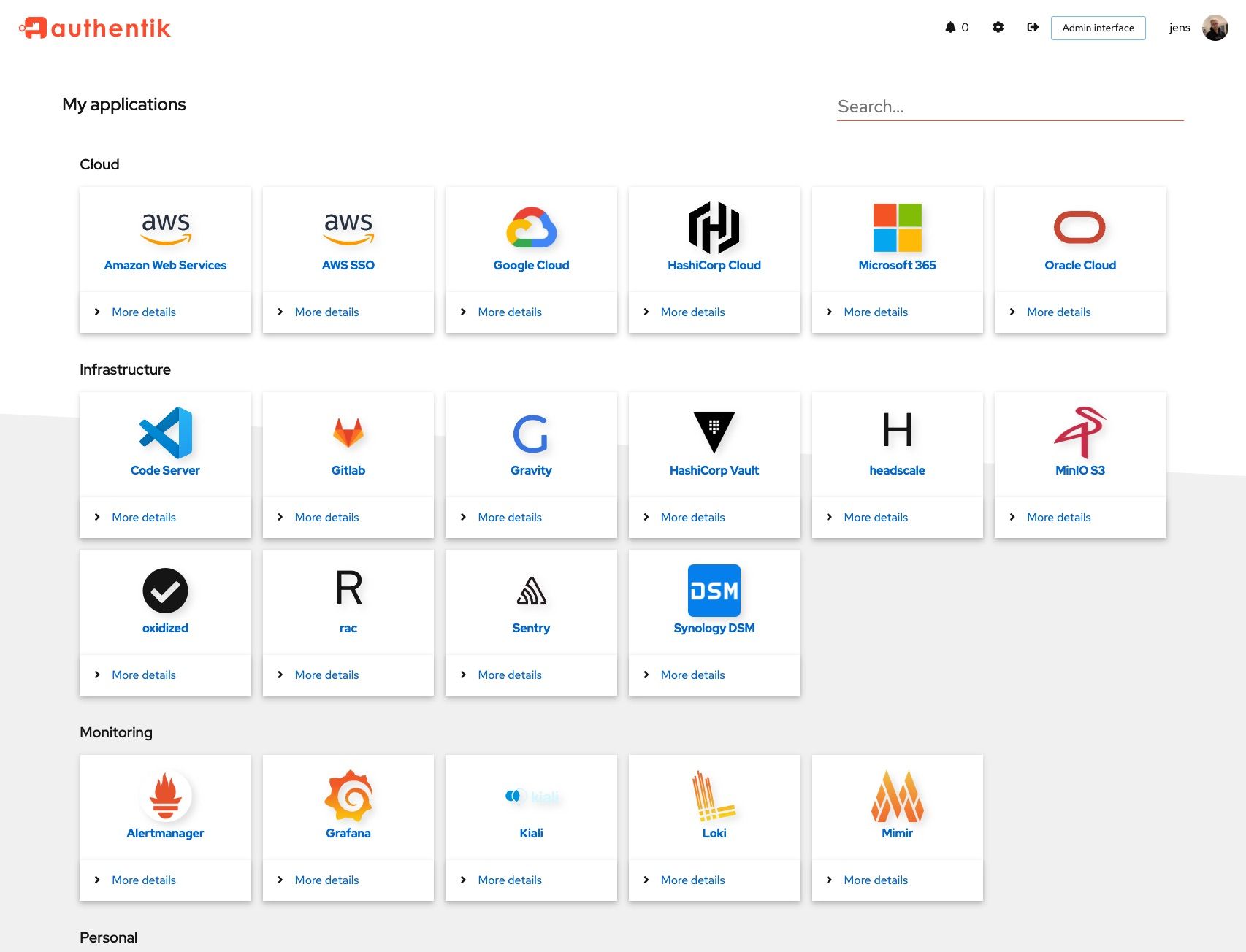

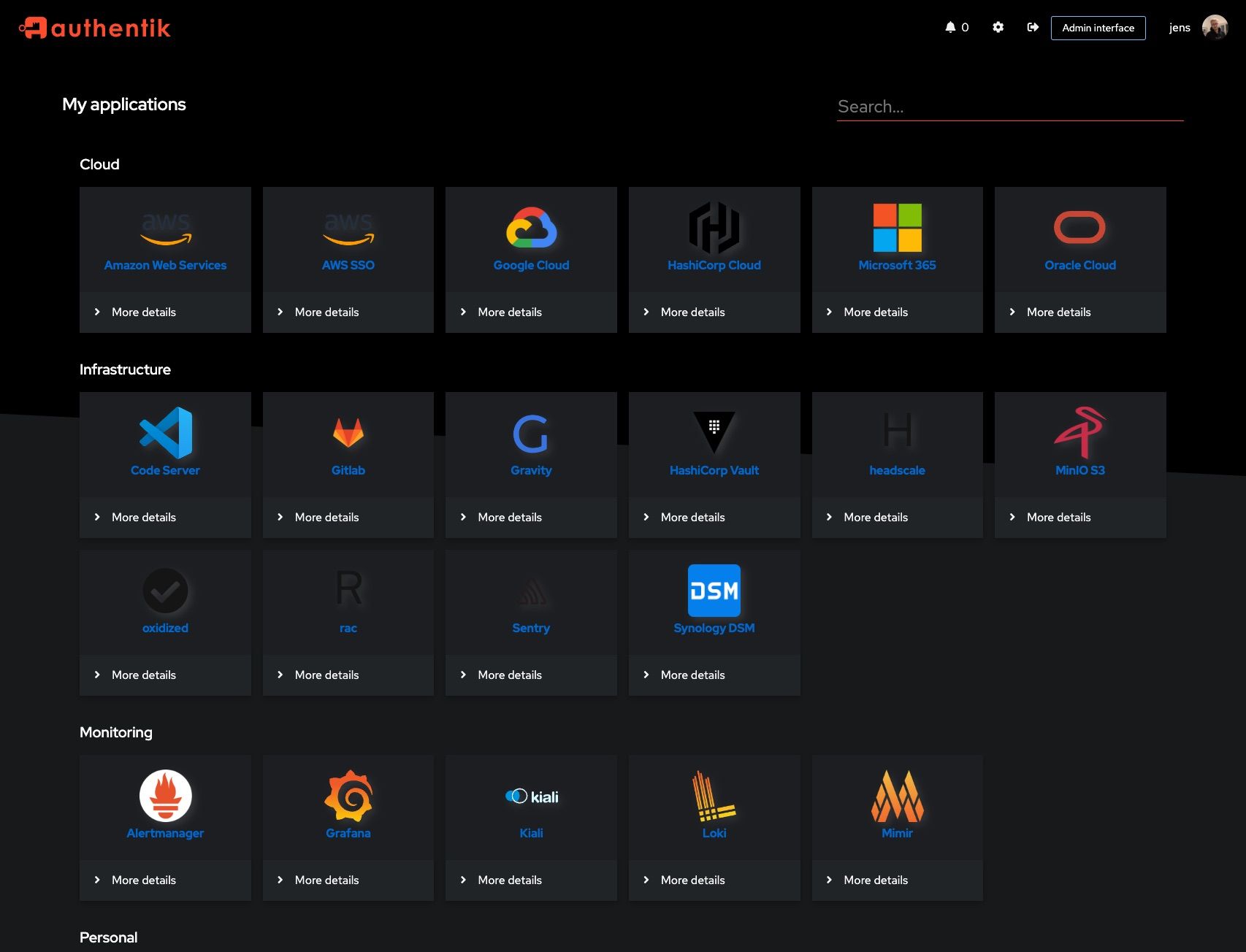

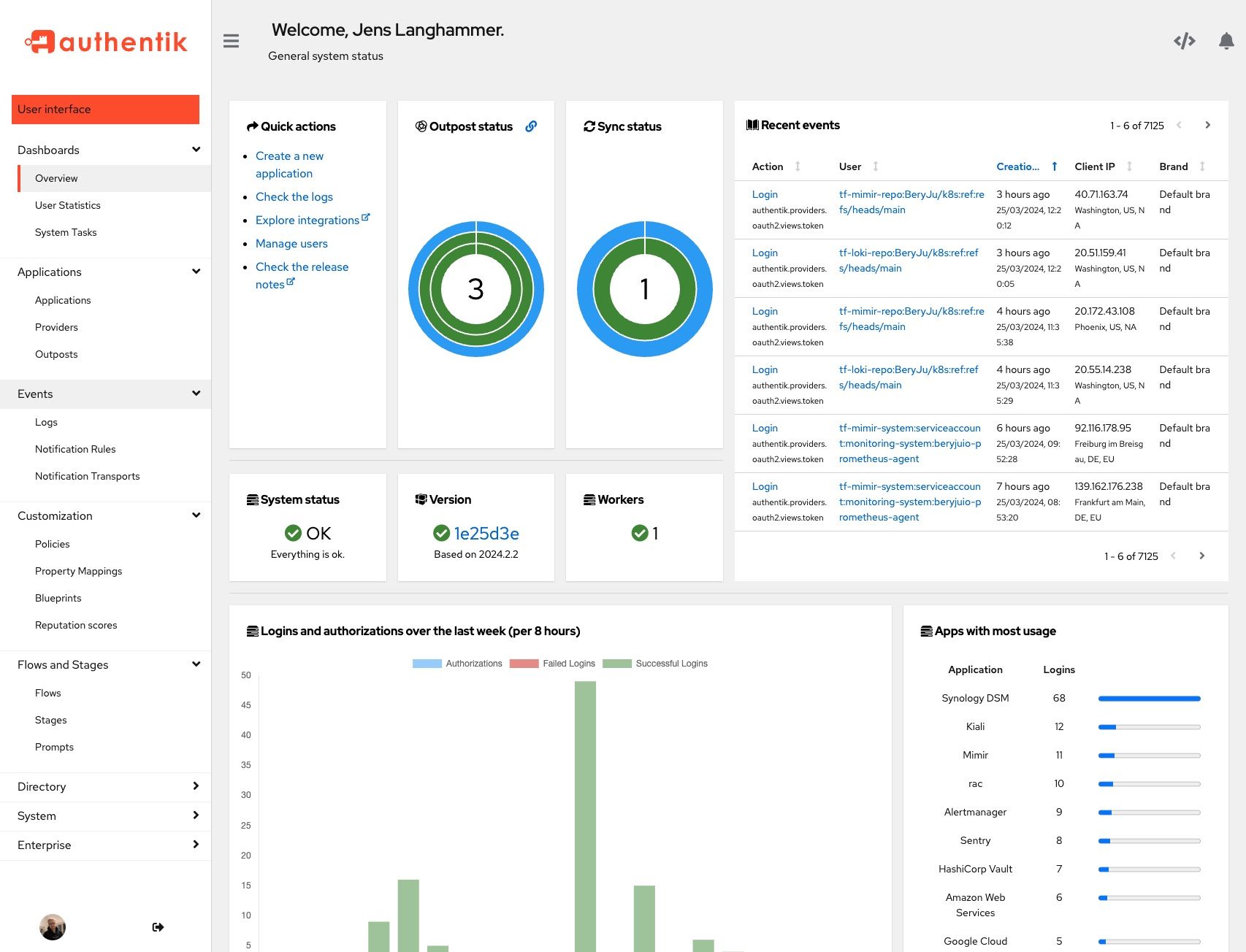

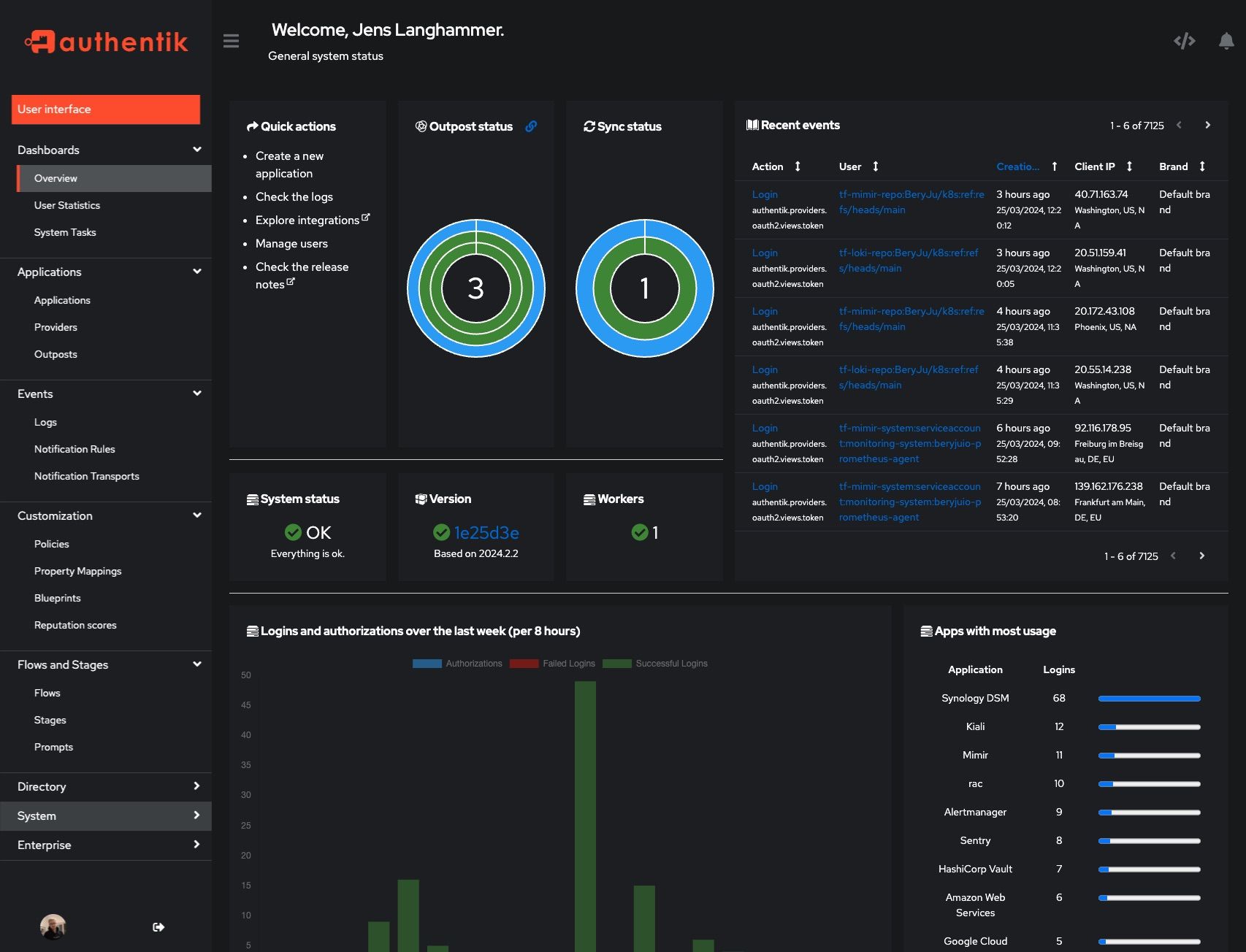

## Screenshots

|

||||

|

||||

@@ -32,14 +33,20 @@ For bigger setups, there is a Helm Chart [here](https://github.com/goauthentik/h

|  |  |
|  |  |

## Development
## Development and contributions

See [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github)
See the [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/) for information about setting up local build environments, testing your contributions, and our contribution process.

## Security

See [SECURITY.md](SECURITY.md)
Please see [SECURITY.md](SECURITY.md).

## Adoption and Contributions
## Adoption

Your organization uses authentik? We'd love to add your logo to the readme and our website! Email us @ hello@goauthentik.io or open a GitHub Issue/PR! For more information on how to contribute to authentik, please refer to our [contribution guide](https://docs.goauthentik.io/docs/developer-docs?utm_source=github).
Using authentik? We'd love to hear your story and feature your logo. Email us at [hello@goauthentik.io](mailto:hello@goauthentik.io) or open a GitHub Issue/PR!

## License

[](LICENSE)
[](website/LICENSE)
[](authentik/enterprise/LICENSE)

25

SECURITY.md

@@ -20,12 +20,33 @@ Even if the issue is not a CVE, we still greatly appreciate your help in hardeni

| Version   | Supported |
| --------- | --------- |
| 2025.4.x  | ✅        |
| 2025.6.x  | ✅        |
| 2025.8.x  | ✅        |

## Reporting a Vulnerability

To report a vulnerability, send an email to [security@goauthentik.io](mailto:security@goauthentik.io). Be sure to include relevant information like which version you've found the issue in, instructions on how to reproduce the issue, and anything else that might make it easier for us to find the issue.
If you discover a potential vulnerability, please report it responsibly through one of the following channels:

- **Email**: [security@goauthentik.io](mailto:security@goauthentik.io)
- **GitHub**: Submit a private security advisory via our [repository’s advisory portal](https://github.com/goauthentik/authentik/security/advisories/new)

When submitting a report, please include as much detail as possible, such as:

- **Affected version(s)**: The version of authentik where the issue was identified.
- **Steps to reproduce**: A clear description or proof of concept to help us verify the issue.
- **Impact assessment**: How the vulnerability could be exploited and its potential effect.
- **Additional information**: Logs, configuration details (if relevant), or any suggested mitigations.

We kindly ask that you do not disclose the vulnerability publicly until we have confirmed and addressed the issue.

Our team will:

- Acknowledge receipt of your report as quickly as possible.
- Keep you updated on the investigation and resolution progress.

## Researcher Recognition

We value contributions from the security community. For each valid report, we will publish a dedicated entry on our Security Advisory page that optionally includes the reporter’s name (or preferred alias). Please note that while we do not currently offer monetary bounties, we are committed to giving researchers appropriate credit for their efforts in keeping authentik secure.

## Severity levels

@@ -3,7 +3,7 @@

from functools import lru_cache
from os import environ

VERSION = "2025.8.0-rc1"
VERSION = "2025.8.6"
ENV_GIT_HASH_KEY = "GIT_BUILD_HASH"

@@ -38,6 +38,7 @@ from authentik.blueprints.v1.oci import OCI_PREFIX

from authentik.events.logs import capture_logs
from authentik.events.utils import sanitize_dict
from authentik.lib.config import CONFIG
from authentik.tasks.apps import PRIORITY_HIGH
from authentik.tasks.models import Task
from authentik.tasks.schedules.models import Schedule
from authentik.tenants.models import Tenant

@@ -110,7 +111,7 @@ class BlueprintEventHandler(FileSystemEventHandler):

@actor(
    description=_("Find blueprints as `blueprints_find` does, but return a safe dict."),
    throws=(DatabaseError, ProgrammingError, InternalError),
    priority=PRIORITY_HIGH,
)
def blueprints_find_dict():
    blueprints = []

@@ -148,10 +149,7 @@ def blueprints_find() -> list[BlueprintFile]:

    return blueprints


@actor(
    description=_("Find blueprints and check if they need to be created in the database."),
    throws=(DatabaseError, ProgrammingError, InternalError),
)
@actor(description=_("Find blueprints and check if they need to be created in the database."))
def blueprints_discovery(path: str | None = None):
    self: Task = CurrentTask.get_task()
    count = 0

@@ -63,28 +63,6 @@ class TestBrands(APITestCase):

            },
        )

    def test_brand_subdomain_same_suffix(self):
        """Test Current brand API"""
        Brand.objects.all().delete()
        Brand.objects.create(domain="bar.baz", branding_title="custom")
        Brand.objects.create(domain="foo.bar.baz", branding_title="custom")
        self.assertJSONEqual(
            self.client.get(
                reverse("authentik_api:brand-current"), HTTP_HOST="foo.bar.baz"
            ).content.decode(),
            {
                "branding_logo": "/static/dist/assets/icons/icon_left_brand.svg",
                "branding_favicon": "/static/dist/assets/icons/icon.png",
                "branding_title": "custom",
                "branding_custom_css": "",
                "matched_domain": "foo.bar.baz",
                "ui_footer_links": [],
                "ui_theme": Themes.AUTOMATIC,
                "default_locale": "",
                "flags": self.default_flags,
            },
        )

    def test_fallback(self):
        """Test fallback brand"""
        Brand.objects.all().delete()

@@ -4,7 +4,6 @@ from typing import Any

from django.db.models import F, Q
from django.db.models import Value as V
from django.db.models.functions import Length
from django.http.request import HttpRequest
from django.utils.html import _json_script_escapes
from django.utils.safestring import mark_safe

@@ -21,9 +20,9 @@ DEFAULT_BRAND = Brand(domain="fallback")

def get_brand_for_request(request: HttpRequest) -> Brand:
    """Get brand object for current request"""
    db_brands = (
        Brand.objects.annotate(host_domain=V(request.get_host()), match_length=Length("domain"))
        Brand.objects.annotate(host_domain=V(request.get_host()))
        .filter(Q(host_domain__iendswith=F("domain")) | _q_default)
        .order_by("-match_length", "default")
        .order_by("default")
    )
    brands = list(db_brands.all())
    if len(brands) < 1:

@@ -18,10 +18,14 @@ from authentik.core.models import Provider

class ProviderSerializer(ModelSerializer, MetaNameSerializer):
    """Provider Serializer"""

    assigned_application_slug = ReadOnlyField(source="application.slug")
    assigned_application_name = ReadOnlyField(source="application.name")
    assigned_backchannel_application_slug = ReadOnlyField(source="backchannel_application.slug")
    assigned_backchannel_application_name = ReadOnlyField(source="backchannel_application.name")
    assigned_application_slug = ReadOnlyField(source="application.slug", allow_null=True)
    assigned_application_name = ReadOnlyField(source="application.name", allow_null=True)
    assigned_backchannel_application_slug = ReadOnlyField(
        source="backchannel_application.slug", allow_null=True
    )
    assigned_backchannel_application_name = ReadOnlyField(
        source="backchannel_application.name", allow_null=True
    )

    component = SerializerMethodField()

@@ -328,6 +328,12 @@ class SessionUserSerializer(PassiveSerializer):

    original = UserSelfSerializer(required=False)


class UserPasswordSetSerializer(PassiveSerializer):
    """Payload to set a users' password directly"""

    password = CharField(required=True)


class UsersFilter(FilterSet):
    """Filter for users"""

@@ -585,12 +591,7 @@ class UserViewSet(UsedByMixin, ModelViewSet):

    @permission_required("authentik_core.reset_user_password")
    @extend_schema(
        request=inline_serializer(
            "UserPasswordSetSerializer",
            {
                "password": CharField(required=True),
            },
        ),
        request=UserPasswordSetSerializer,
        responses={
            204: OpenApiResponse(description="Successfully changed password"),
            400: OpenApiResponse(description="Bad request"),

@@ -599,9 +600,11 @@ class UserViewSet(UsedByMixin, ModelViewSet):

    @action(detail=True, methods=["POST"], permission_classes=[])
    def set_password(self, request: Request, pk: int) -> Response:
        """Set password for user"""
        data = UserPasswordSetSerializer(data=request.data)
        data.is_valid(raise_exception=True)
        user: User = self.get_object()
        try:
            user.set_password(request.data.get("password"), request=request)
            user.set_password(data.validated_data["password"], request=request)
            user.save()
        except (ValidationError, IntegrityError) as exc:
            LOGGER.debug("Failed to set password", exc=exc)

@@ -0,0 +1,18 @@

# Generated by Django 5.1.12 on 2025-09-25 13:39

from django.db import migrations, models


class Migration(migrations.Migration):

    dependencies = [
        ("authentik_core", "0050_user_last_updated_and_more"),
        ("authentik_rbac", "0006_alter_role_options"),
    ]

    operations = [
        migrations.AddIndex(
            model_name="group",
            index=models.Index(fields=["is_superuser"], name="authentik_c_is_supe_1e5a97_idx"),
        ),
    ]

@@ -29,6 +29,7 @@ from authentik.blueprints.models import ManagedModel

from authentik.core.expression.exceptions import PropertyMappingExpressionException
from authentik.core.types import UILoginButton, UserSettingSerializer
from authentik.lib.avatars import get_avatar
from authentik.lib.config import CONFIG
from authentik.lib.expression.exceptions import ControlFlowException
from authentik.lib.generators import generate_id
from authentik.lib.merge import MERGE_LIST_UNIQUE

@@ -200,7 +201,10 @@ class Group(SerializerModel, AttributesMixin):

                "parent",
            ),
        )
        indexes = [models.Index(fields=["name"])]
        indexes = (
            models.Index(fields=["name"]),
            models.Index(fields=["is_superuser"]),
        )
        verbose_name = _("Group")
        verbose_name_plural = _("Groups")
        permissions = [

@@ -563,8 +567,10 @@ class Application(SerializerModel, PolicyBindingModel):

        it is returned as-is"""
        if not self.meta_icon:
            return None
        if "://" in self.meta_icon.name or self.meta_icon.name.startswith("/static"):
        if self.meta_icon.name.startswith("http"):
            return self.meta_icon.name
        if self.meta_icon.name.startswith("/"):
            return CONFIG.get("web.path", "/")[:-1] + self.meta_icon.name
        return self.meta_icon.url