## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies
> - The plugin system is the extension boundary for optional product capabilities
> - Rich plugins need more than a worker entrypoint: they need scoped database storage, local project folders, managed agents/routines, host navigation, and reusable UI components
> - The LLM Wiki work exposed those missing host surfaces while keeping plugin code outside the core control plane
> - This pull request expands the core plugin host, SDK, server APIs, and UI bridge so plugins can declare and use those surfaces
> - The benefit is that future plugins can integrate with Paperclip through documented, validated contracts instead of bespoke server or UI imports

## What Changed

- Added plugin-managed database namespaces and migration tracking, including Drizzle schema/migration files and SQL validation for namespace isolation.
- Added server support for plugin local folders, managed agents, managed routines, scoped plugin APIs, and plugin operation visibility.
- Expanded the shared plugin manifest, types, and validators, along with the SDK host/testing/UI exports, to support richer plugin surfaces.
- Added reusable UI pieces for file trees, managed routines, resizable sidebars, route sidebars, and plugin bridge initialization.
- Updated plugin docs and example plugins to use the expanded host and SDK surface.
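The idea behind SQL validation for namespace isolation can be pictured with a minimal TypeScript sketch. It assumes that each plugin owns one Postgres schema named after its namespace and that every table reference must be schema-qualified. `validatePluginSql`, `SqlValidationResult`, and the blocked-statement list are hypothetical names and policies, not the API shipped in this PR, and a regex scan is far weaker than the parser a production validator would likely use.

```typescript
// Hypothetical sketch of a namespace-isolation check; not Paperclip's actual validator.
// Deliberately naive: it only inspects names that follow FROM/JOIN/INTO/UPDATE/TABLE
// and will reject some valid SQL (e.g. ON CONFLICT DO UPDATE SET).
interface SqlValidationResult {
  ok: boolean;
  errors: string[];
}

// Statements this sketch rejects outright (assumed policy).
const BLOCKED: RegExp[] = [/\bset\s+search_path\b/i, /\b(?:create|drop|alter)\s+schema\b/i];

// Captures an optionally schema-qualified name after a table-introducing keyword.
const TABLE_REF =
  /\b(?:from|join|into|update|table)\s+(?:if\s+(?:not\s+)?exists\s+)?("?[A-Za-z_]\w*"?)(?:\s*\.\s*("?[A-Za-z_]\w*"?))?/gi;

const unquote = (identifier: string): string => identifier.replace(/"/g, "");

function validatePluginSql(sql: string, namespace: string): SqlValidationResult {
  const errors: string[] = [];
  for (const pattern of BLOCKED) {
    if (pattern.test(sql)) errors.push(`blocked statement matching /${pattern.source}/`);
  }
  for (const match of sql.matchAll(TABLE_REF)) {
    const schemaOrTable = match[1];
    const table = match[2];
    if (table === undefined) {
      // Unqualified names could resolve outside the namespace via search_path.
      errors.push(`unqualified table reference: ${unquote(schemaOrTable)}`);
    } else if (unquote(schemaOrTable) !== namespace) {
      errors.push(`reference outside ${namespace}: ${unquote(schemaOrTable)}.${unquote(table)}`);
    }
  }
  return { ok: errors.length === 0, errors };
}
```

Under these assumptions, `create table if not exists plugin_wiki.pages (...)` passes for the `plugin_wiki` namespace, while `select * from public.issues` is rejected.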

## Verification

- `pnpm install --frozen-lockfile`
- `pnpm run preflight:workspace-links && pnpm exec vitest run packages/shared/src/validators/plugin.test.ts server/src/__tests__/plugin-database.test.ts server/src/__tests__/plugin-local-folders.test.ts server/src/__tests__/plugin-managed-agents.test.ts server/src/__tests__/plugin-managed-routines.test.ts server/src/__tests__/plugin-orchestration-apis.test.ts ui/src/api/plugins.test.ts ui/src/components/FileTree.test.tsx ui/src/components/ResizableSidebarPane.test.tsx ui/src/pages/PluginPage.test.tsx ui/src/plugins/bridge.test.ts` passed: 11 files, 67 tests.
- Confirmed this PR changes 89 files and does not include `pnpm-lock.yaml` or `.github/workflows/*`.

## Risks

- Medium: this expands plugin host contracts across db/shared/server/ui and includes a new core migration (`0076_useful_elektra.sql`).
- The plugin database namespace validator is intentionally restrictive; plugin authors may need follow-up affordances for SQL patterns that remain blocked.
- Merge this before the LLM Wiki plugin PR so the plugin can resolve the new SDK and host APIs.

> For core feature work, check [`ROADMAP.md`](ROADMAP.md) first and discuss it in `#dev` before opening the PR. Feature PRs that overlap with planned core work may need to be redirected. See `CONTRIBUTING.md`.

## Model Used

- OpenAI Codex, GPT-5 coding agent, tool-enabled shell/git/GitHub workflow. Context window size was not exposed by the runtime.

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [x] If this change affects the UI, I have included before/after screenshots
- [x] I have updated relevant documentation to reflect my changes
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

---------

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Summary

- Allow Cloud tenant issue identifiers with alphanumeric prefixes, such as `PC1897-1`, to normalize as issue references.
- Resolve those identifiers through issue detail/update routes, active-run/live-run polling, activity, costs, and `issueService.getById`.
- Keep UI issue-link parsing aligned so tenant links normalize back to `/issues/<IDENTIFIER>`.

## Root Cause

Cloud tenant issue prefixes include digits from the stack-id hash. The app-side route normalization still accepted only all-letter prefixes, so `/api/issues/PC1897-1` skipped identifier lookup and fell through as a non-UUID id.
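To illustrate the root cause, here is a minimal sketch of the before/after identifier patterns. The regexes and `normalizeIssueRef` are hypothetical; they only assume that identifiers look like `<PREFIX>-<number>` and that tenant prefixes start with a letter, which the real shared parser may define differently.

```typescript
// Hypothetical patterns for illustration; Paperclip's real parser may differ.
// Before: letters-only prefixes, so "PC1897-1" was not recognized as an identifier.
const LETTERS_ONLY_IDENTIFIER = /^[A-Z]+-\d+$/;
// After (sketch): a leading letter followed by letters or digits, e.g. "PC1897".
const ALPHANUMERIC_IDENTIFIER = /^[A-Z][A-Z0-9]*-\d+$/;

const UUID = /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/i;

type IssueRef =
  | { kind: "uuid"; id: string }
  | { kind: "identifier"; identifier: string }
  | { kind: "unknown"; raw: string };

function normalizeIssueRef(raw: string, pattern: RegExp = ALPHANUMERIC_IDENTIFIER): IssueRef {
  const value = raw.trim();
  // UUIDs are checked first so they are never mistaken for identifiers.
  if (UUID.test(value)) return { kind: "uuid", id: value.toLowerCase() };
  const upper = value.toUpperCase();
  if (pattern.test(upper)) return { kind: "identifier", identifier: upper };
  // Anything else falls through, as PC1897-1 did under the letters-only rule.
  return { kind: "unknown", raw: value };
}
```

With the letters-only pattern, `PC1897-1` lands in the `unknown` branch, which mirrors the fall-through to the non-UUID path described above.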

## Verification

- `pnpm exec vitest run packages/shared/src/issue-references.test.ts ui/src/lib/issue-reference.test.ts server/src/__tests__/issue-identifier-routes.test.ts server/src/__tests__/activity-routes.test.ts server/src/__tests__/costs-service.test.ts server/src/__tests__/agent-live-run-routes.test.ts server/src/__tests__/issues-service.test.ts`
- `pnpm --filter @paperclipai/shared typecheck && pnpm --filter @paperclipai/server typecheck`

- `git diff --check`

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Thinking Path

> - Paperclip orchestrates AI agents for autonomous companies, so developer throughput on the control plane repo directly affects how fast the product can evolve.
> - The PR workflow is part of that throughput surface because every change waits on it before review and merge.
> - This branch started from measured evidence that the PR critical path was dominated by work that was either serialized unnecessarily or placed on the wrong part of the graph.
> - The biggest concrete problems were: the canary dry run living inside `verify`, the server isolated suites running one-by-one in a single lane, and duplicate CI work that the PR path was paying for without increasing coverage proportionally.
> - This pull request restructures the PR workflow so those costs are reduced without removing the important coverage that was already protecting release and test quality.
> - Follow-up fixes on the branch hardened the new entrypoints so they work on clean GitHub runners and so the reduced PR typecheck path stays self-maintaining as workspace packages evolve.
> - The benefit is materially faster PR wall-clock time while keeping canary packaging checks, serialized-suite isolation, plugin SDK consumers, and explicit TypeScript coverage where builds do not already provide it.

## What Changed

- Moved the PR canary dry run into its own `Canary Dry Run` job so it still runs on PRs but no longer extends the `verify` critical path.
- Split the custom Vitest runner into `general`, `serialized`, and `all` modes, and added shard support for the isolated server suites.
- Added `test:run:general` and `test:run:serialized` scripts, then rewired PR CI to fan the serialized server suites out across a 4-way matrix.
- Added the required `@paperclipai/plugin-sdk` build preflight before the new reduced-scope typecheck and test entrypoints so they succeed on clean CI runners.
- Replaced the hardcoded PR build-gap list with `scripts/run-typecheck-build-gaps.mjs`, which discovers workspace packages whose `build` scripts skip TypeScript and runs only their explicit `typecheck` scripts.
- Removed the redundant `pnpm build` from the PR `e2e` job because the Playwright onboarding path boots Paperclip from source.
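The shard flags (`--shard-index`, `--shard-count`) can be understood through a deterministic split like the one sketched here. `selectShard` is a hypothetical helper: it assumes suites are sorted by path and dealt round-robin, which may not match how `scripts/run-vitest-stable.mjs` actually balances the four matrix jobs.

```typescript
// Hypothetical sketch; scripts/run-vitest-stable.mjs may partition suites differently.
function selectShard(suites: readonly string[], shardIndex: number, shardCount: number): string[] {
  if (!Number.isInteger(shardCount) || shardCount < 1) {
    throw new RangeError(`shardCount must be a positive integer, got ${shardCount}`);
  }
  if (!Number.isInteger(shardIndex) || shardIndex < 0 || shardIndex >= shardCount) {
    throw new RangeError(`shardIndex must be in [0, ${shardCount}), got ${shardIndex}`);
  }
  // Sort so every matrix job derives the same order, independent of directory listing order.
  const ordered = [...suites].sort();
  // Round-robin dealing keeps shard sizes within one suite of each other.
  return ordered.filter((_, i) => i % shardCount === shardIndex);
}
```

Because every job computes the same sorted order, the four shards are disjoint and together cover every serialized suite; each selected suite would still run in its own isolated process.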

## Verification

- `ruby -e "require 'yaml'; YAML.load_file('.github/workflows/pr.yml'); puts 'workflow ok'"`
- `node scripts/run-vitest-stable.mjs --mode general --dry-run`
- `node scripts/run-vitest-stable.mjs --mode serialized --shard-index 0 --shard-count 4 --dry-run`

- `pnpm run typecheck:build-gaps`

- `pnpm test:run:general`

- `pnpm test:run:serialized -- --shard-index 0 --shard-count 4`

- `pnpm build`

- `pnpm paperclipai onboard --yes --run`

- `curl http://127.0.0.1:3299/api/health`

## Risks

- Branch protection or required-check configuration may need to be updated for the new standalone `Canary Dry Run` job and the serialized-suite matrix job names.
- `scripts/run-typecheck-build-gaps.mjs` assumes that the packages needing explicit PR-time typechecking are the ones whose `build` scripts omit `tsc`; if build conventions change, that heuristic needs to stay aligned.
- Serialized test sharding preserves per-suite isolation, but the first few CI runs should still be watched for shard-balance or naming assumptions in downstream tooling.
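The build-gap heuristic from the second risk can be sketched as a pure function over workspace `package.json` data. `findTypecheckBuildGaps` and the `tsc` detection regex are assumptions for illustration, as is treating a package with no `build` script at all as a gap; the real `scripts/run-typecheck-build-gaps.mjs` may tokenize scripts differently.

```typescript
// Hypothetical sketch; the real script may detect tsc differently.
interface WorkspacePackage {
  name: string;
  scripts?: Record<string, string>;
}

// True when `tsc` appears as a command word (so `tsc-alias` or `tsup` do not count).
function invokesTsc(script: string): boolean {
  return /(^|[\s;&|(])tsc(\s|$)/.test(script);
}

// Packages with an explicit typecheck script whose build does not already run tsc.
// Assumption: a package with no build script at all also counts as a gap.
function findTypecheckBuildGaps(packages: readonly WorkspacePackage[]): string[] {
  return packages
    .filter((pkg) => {
      const scripts: Record<string, string> = pkg.scripts ?? {};
      const build = scripts.build;
      const typecheck = scripts.typecheck;
      return typecheck !== undefined && (build === undefined || !invokesTsc(build));
    })
    .map((pkg) => pkg.name)
    .sort();
}
```

A package built with `vite build` but declaring `typecheck: "tsc --noEmit"` would be selected, while one built with `tsc -p tsconfig.json` would not, because its build already typechecks.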

## Model Used

- OpenAI GPT-5.4 via the Codex local adapter, using high reasoning effort with shell, git, and file-edit tool use in a local worktree.

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [x] If this change affects the UI, I have included before/after screenshots
- [x] I have updated relevant documentation to reflect my changes
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

---------

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Thinking Path

> - Paperclip is a control plane for autonomous agent companies, so its release automation is part of the core operator trust boundary.
> - The affected subsystem is npm/GitHub Actions release publishing for the public monorepo packages.
> - The concrete failure was that a newly added package reached `master`, the canary workflow attempted its first publish, and npm trusted publishing was not yet bootstrapped for that package.
> - That means the problem is not just one broken run; it is a missing pre-merge guard that lets release-ineligible packages land and only fail once `publish_canary` runs.
> - This pull request makes release enrollment explicit, validates that enrollment in CI, and adds a PR-time bootstrap check against npm for changed release-enabled package manifests.
> - The result is that we keep trusted publishing, avoid teaching CI to `npm adduser`, and move this class of failure from post-merge canary time to pre-merge review time.

## What Changed

- Added `scripts/release-package-manifest.json` so release-managed public packages are explicitly enrolled instead of being inferred from every non-private workspace package.
- Hardened `scripts/release-package-map.mjs` to validate the manifest before release workflows rewrite versions or assemble publish payloads.
- Added `scripts/check-release-package-bootstrap.mjs` and wired it into `.github/workflows/pr.yml` so PRs that change a release-enabled package manifest fail if that package does not already exist on npm.
- Added release-package manifest coverage tests to `scripts/release-package-map.test.mjs` and included them in `pnpm run test:release-registry`.
- Wired manifest validation into `.github/workflows/release.yml` and documented the first-publish bootstrap policy in `doc/PUBLISHING.md` and `doc/RELEASE-AUTOMATION-SETUP.md`.
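The two checks can be sketched as small functions, assuming the manifest is a JSON object with a `packages` array of npm names. `validateReleaseManifest`, `findUnbootstrappedPackages`, and the injected `packageExists` lookup are hypothetical; in CI the lookup would query npm, which is why the bootstrap check inherits the npm-availability risk listed under Risks.

```typescript
// Hypothetical shapes; the real release-package-manifest.json schema may differ.
interface ReleaseManifest {
  packages: string[];
}

interface WorkspacePackageInfo {
  name: string;
  private?: boolean;
}

// Structural validation: each enrolled name is unique, exists in the workspace, and is public.
function validateReleaseManifest(
  manifest: ReleaseManifest,
  workspace: readonly WorkspacePackageInfo[],
): string[] {
  const errors: string[] = [];
  const byName = new Map(workspace.map((pkg) => [pkg.name, pkg] as const));
  const seen = new Set<string>();
  for (const pkgName of manifest.packages) {
    if (seen.has(pkgName)) errors.push(`duplicate entry: ${pkgName}`);
    seen.add(pkgName);
    const pkg = byName.get(pkgName);
    if (!pkg) errors.push(`not a workspace package: ${pkgName}`);
    else if (pkg.private) errors.push(`private package enrolled for release: ${pkgName}`);
  }
  return errors;
}

// PR-time bootstrap check: changed, enrolled packages must already exist on npm.
// `packageExists` is injected (e.g. a registry lookup) so the logic stays testable.
async function findUnbootstrappedPackages(
  changed: readonly string[],
  manifest: ReleaseManifest,
  packageExists: (pkgName: string) => Promise<boolean>,
): Promise<string[]> {
  const enrolled = new Set(manifest.packages);
  const missing: string[] = [];
  for (const pkgName of changed) {
    if (enrolled.has(pkgName) && !(await packageExists(pkgName))) missing.push(pkgName);
  }
  return missing;
}
```

Changed packages that are not enrolled are ignored, so only release-enabled manifests can fail the PR.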

## Verification

- `pnpm run test:release-registry`

- `./scripts/release.sh canary --skip-verify --dry-run`

- Confirmed the committed diff contains no obvious PII/secrets via a targeted pattern scan before pushing.

## Risks

- Low risk overall: this is CI/release-policy code, not product runtime logic.
- The new PR bootstrap check depends on npm metadata availability, so a transient npm outage could block a PR that changes a release-enabled package manifest.
- The manifest introduces a new source of truth that must stay aligned with public package additions, but that is intentional and now enforced.

## Model Used

- OpenAI Codex via the `codex_local` Paperclip adapter; GPT-5-based coding agent with tool use, terminal execution, git, and GitHub CLI. The exact served model ID and context window are not exposed by the local runtime.

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [x] If this change affects the UI, I have included before/after screenshots
- [x] I have updated relevant documentation to reflect my changes
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies, including agents running the Gemini CLI (`gemini-local` adapter)
> - The Gemini CLI emits a JSONL event stream during a run that the adapter parses to extract the assistant's response text, tool results, and usage
> - Recent versions of the Gemini CLI emit assistant responses as `{ "type": "message", "role": "assistant", "content": ... }` events in addition to the previously handled event shapes
> - The parser was not handling the new event type, so the assistant's actual response text was silently dropped from parsed output. Callers ended up with empty assistant messages even when Gemini had responded successfully
> - This PR teaches the parser to recognize `{type: "message", role: "assistant"}` events and extract their content text via the same `collectMessageText` helper used for other message-shaped events
> - The benefit is that Gemini runs surface the assistant's real response to downstream consumers (issue comments, run logs, agent context) instead of the response vanishing

## What Changed

- `packages/adapters/gemini-local/src/server/parse.ts`: in `parseGeminiJsonl(...)`, add a branch for `event.type === "message"` with `role === "assistant"` that calls `messages.push(...collectMessageText(event.content))`.
- `packages/adapters/gemini-local/src/server/parse.test.ts`: ~19 lines of coverage for the new branch.
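The new parser branch can be sketched in isolation. This is a hypothetical reduction of `parseGeminiJsonl`: `collectText` stands in for the repo's `collectMessageText`, and the content shapes it accepts (a string, or an array of `{ text }` parts) are assumptions rather than the documented Gemini CLI schema.

```typescript
// Hypothetical sketch, not the actual parse.ts.
interface GeminiEvent {
  type?: unknown;
  role?: unknown;
  content?: unknown;
}

// Stand-in for collectMessageText. Assumed content shapes: a string, or an array of { text } parts.
function collectText(content: unknown): string[] {
  if (typeof content === "string") return content ? [content] : [];
  if (!Array.isArray(content)) return [];
  const texts: string[] = [];
  for (const part of content) {
    if (part && typeof part === "object" && typeof part.text === "string") texts.push(part.text);
  }
  return texts;
}

function tryParseJson(line: string): unknown {
  try {
    return JSON.parse(line);
  } catch {
    return undefined; // Non-JSON lines are ignored.
  }
}

function parseAssistantMessages(jsonl: string): string[] {
  const messages: string[] = [];
  for (const line of jsonl.split("\n")) {
    const trimmed = line.trim();
    if (!trimmed) continue;
    const event = tryParseJson(trimmed) as GeminiEvent | null | undefined;
    if (!event || typeof event !== "object") continue;
    // The branch this PR adds: assistant "message" events carry the response text.
    if (event.type === "message" && event.role === "assistant") {
      messages.push(...collectText(event.content));
    }
    // Previously handled event shapes would be dispatched here in the real parser.
  }
  return messages;
}
```

User-role `message` events are skipped, so only assistant text reaches the parsed output.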

## Verification

- `pnpm --filter @paperclipai/adapter-gemini-local test -- parse`
- Manual QA: run a Gemini agent on an issue and confirm the assistant's response appears as the issue comment / run output. Before this fix the comment was empty even when the run completed successfully.

## Risks

- Tightly scoped: 8 lines of production code in one parser branch. No effect on existing event shapes or other adapters.
- If the Gemini CLI changes its event schema again, this branch may need to be revisited, but adding it is strictly additive over current behaviour.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [ ] If this change affects the UI, I have included before/after screenshots (N/A)
- [ ] I have updated relevant documentation to reflect my changes (N/A)
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies
> - Agents executing on remote SSH hosts receive an env map built from the host process's env plus per-run additions like `PAPERCLIP_API_KEY`, `PAPERCLIP_RUN_ID`, etc.
> - The env map currently includes inherited host vars by default, including identity-bound ones like `PATH`, `HOME`, `USER`, `NVM_DIR`, and `XDG_*`: variables whose values are meaningful only on the host they came from
> - Sending the host's `PATH` (containing host-only directories like a local nvm install path) to a remote SSH box overrides the remote's actual `PATH` and breaks command resolution. The same hazard applies to `HOME` (commands looking for config files end up in a non-existent directory), `USER` (writes go to the wrong path), etc.
> - This PR adds `sanitizeSshRemoteEnv()`, which drops inherited identity-bound vars when their value matches the host process's value. Explicitly set values that differ from the host's pass through untouched, so callers that genuinely want to override the remote `PATH` etc. still can, but accidental leakage from `process.env` is filtered.
> - The benefit is that SSH remote execution stops corrupting the remote shell's environment with host-shaped paths, so commands resolve correctly against the remote `PATH` and config files land in the remote `HOME`

## What Changed

- New `sanitizeSshRemoteEnv(env, inheritedEnv = process.env)` in `packages/adapter-utils/src/server-utils.ts`. The identity-bound key set is:
  - `PATH`, `HOME`, `PWD`, `SHELL`, `USER`, `LOGNAME`
  - `NVM_DIR`, `TMPDIR`, `TMP`, `TEMP`
  - `XDG_CONFIG_HOME`, `XDG_CACHE_HOME`, `XDG_DATA_HOME`, `XDG_STATE_HOME`, `XDG_RUNTIME_DIR`

  For any key in this set, the entry is dropped if and only if the env value equals the inherited (host process) value. Other keys pass through unchanged.
- `readEnvValueCaseInsensitive(...)` helper handles Windows-style case-insensitive env var lookups.
- Wired into `resolveSpawnTarget(...)` for the SSH transport. Sandbox and local paths are unaffected.
- Tests added in `server-utils.test.ts` (~50 lines) covering: matching keys filtered, mismatched keys preserved, non-identity keys passed through, case insensitivity.
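A minimal sketch of the filtering rule described above. `sanitizeRemoteEnv` and `readEnvCaseInsensitive` are hypothetical stand-ins for `sanitizeSshRemoteEnv` and `readEnvValueCaseInsensitive`; unlike the shipped signature, this sketch takes `inheritedEnv` explicitly instead of defaulting to `process.env`, which keeps it testable.

```typescript
// Hypothetical sketch of the rule described above; not the code in server-utils.ts.
type Env = Record<string, string | undefined>;

const IDENTITY_BOUND_KEYS = new Set([
  "PATH", "HOME", "PWD", "SHELL", "USER", "LOGNAME",
  "NVM_DIR", "TMPDIR", "TMP", "TEMP",
  "XDG_CONFIG_HOME", "XDG_CACHE_HOME", "XDG_DATA_HOME", "XDG_STATE_HOME", "XDG_RUNTIME_DIR",
]);

// Windows env names are case-insensitive, so "Path" on the host must match "PATH".
function readEnvCaseInsensitive(env: Env, key: string): string | undefined {
  if (env[key] !== undefined) return env[key];
  const upper = key.toUpperCase();
  const match = Object.keys(env).find((k) => k.toUpperCase() === upper);
  return match === undefined ? undefined : env[match];
}

function sanitizeRemoteEnv(env: Env, inheritedEnv: Env): Record<string, string> {
  const result: Record<string, string> = {};
  for (const [key, value] of Object.entries(env)) {
    if (value === undefined) continue;
    const identityBound = IDENTITY_BOUND_KEYS.has(key.toUpperCase());
    // An identical value signals accidental inheritance from the host, so drop it.
    if (identityBound && readEnvCaseInsensitive(inheritedEnv, key) === value) continue;
    result[key] = value;
  }
  return result;
}
```

The comparison is by value, which is also why the edge case under Risks (an explicit `PATH` identical to the host's) gets dropped.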

## Verification

- `pnpm --filter @paperclipai/adapter-utils test -- server-utils`
- Manual QA: run any adapter against an SSH-backed environment and confirm that remote command resolution works (e.g. `node`, `npm`, the adapter's CLI) and that config files land in the remote user's `HOME`. Compare with the prior behaviour by temporarily reintroducing the inherited `PATH` and watching commands fail with `command not found`.

## Risks

- Behavioural shift: SSH remote execution previously passed inherited host env vars verbatim. Code that relied on that (e.g. a remote command somehow expecting the host's `PATH`) will see different behaviour. None of the adapter code in this repo has such a dependency.
- Edge case: if a caller explicitly sets `PATH` to the same value as the host's `PATH` (literally the same exact string), the sanitizer drops it as a leak. In practice no caller constructs the env this way.
- Windows host: case-insensitive lookup handles `Path` vs `PATH` correctly. Tested.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [ ] If this change affects the UI, I have included before/after screenshots (N/A)
- [ ] I have updated relevant documentation to reflect my changes (N/A)
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies, running adapter commands like `claude`, `codex`, and `pi` either locally or on remote runtimes (SSH hosts, sandboxes, etc.)
> - On a fresh remote runtime, particularly an ephemeral sandbox, the adapter's CLI may not be installed yet. Today operators handle this via external configuration (e.g. a project-level `provisionCommand` shell script) that has to know about every adapter the operator might want to use
> - Each adapter maps to a well-known npm package, yet operators end up writing duplicate provision scripts that paste together `npm install -g @anthropic-ai/claude-code`, `npm install -g @openai/codex`, etc., repeating knowledge the adapter itself already has
> - This PR moves that knowledge into the adapter modules: each adapter declares how its runtime command should be detected and (if applicable) installed via `getRuntimeCommandSpec(config)`. The execution path runs the adapter's own install command on remote sandbox targets before launching, so a fresh sandbox bootstraps itself instead of requiring a hand-written provision script
> - The benefit is fewer footguns for operators provisioning remote runtimes, and a clean place for new adapters to plug in their install recipe

## What Changed

- New types in `packages/adapter-utils/src/types.ts`:
  - `AdapterRuntimeCommandSpec` describing `command`, optional `detectCommand`, and optional `installCommand`
  - Optional `getRuntimeCommandSpec(config)` on `ServerAdapterModule`
  - Optional `runtimeCommandSpec` on `AdapterExecutionContext` so adapters receive the resolved spec at execute time
- New helper `ensureAdapterExecutionTargetRuntimeCommandInstalled(...)` in `packages/adapter-utils/src/execution-target.ts` that runs the install command on remote targets when `transport === "sandbox"`. SSH and local targets are no-ops. It throws on timeout or non-zero exit so failures surface early.
- The `execute.ts` of each of `claude-local`, `codex-local`, `cursor-local`, `gemini-local`, `opencode-local`, and `pi-local` now reads `ctx.runtimeCommandSpec?.installCommand` and calls the helper before launching the adapter command.
- `server/src/adapters/registry.ts` declares `getRuntimeCommandSpec` for each adapter:
  - claude/codex/gemini/opencode/pi-local: `npm install -g <package>` recipe via a shared `buildNpmRuntimeCommandSpec` helper, with a defensive guard that only auto-installs when the configured `command` matches the well-known fallback (custom binaries are left alone).
  - cursor-local: declares `command` only; no auto-install (no public npm package), preserving the existing manual setup.
- `server/src/services/heartbeat.ts` resolves the spec via `adapter.getRuntimeCommandSpec?.(runtimeConfig)` and passes it through to `AdapterExecutionContext`.
- Tests added in `execution-target.test.ts` (~75 lines), e2b `plugin.test.ts` (~32 lines), and `environment-run-orchestrator.test.ts` (~76 lines).
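The runtime command spec and its sandbox-only gating can be sketched as follows. The interface mirrors the fields named above, but `buildNpmRuntimeCommandSpecSketch`, `installCommandToRun`, and the safe-token check are hypothetical; only the shape of the generated recipe string is taken from the Risks section of this PR.

```typescript
// Hypothetical sketch; the real types and buildNpmRuntimeCommandSpec may differ in detail.
interface AdapterRuntimeCommandSpec {
  command: string;
  detectCommand?: string;
  installCommand?: string;
}

type Transport = "local" | "ssh" | "sandbox";

// Assumed guard: only plain command and npm package names are interpolated into shell.
const SAFE_TOKEN = /^[@A-Za-z0-9._\/-]+$/;

function buildNpmRuntimeCommandSpecSketch(
  configuredCommand: string | undefined,
  fallbackCommand: string,
  npmPackage: string,
): AdapterRuntimeCommandSpec {
  const command = configuredCommand?.trim() || fallbackCommand;
  // Custom binaries are left alone: no install recipe unless the well-known name is used.
  if (command !== fallbackCommand) return { command };
  if (!SAFE_TOKEN.test(command) || !SAFE_TOKEN.test(npmPackage)) {
    throw new Error("refusing to build a shell recipe from unsafe tokens");
  }
  const detectCommand = `command -v ${command} >/dev/null 2>&1`;
  return {
    command,
    detectCommand,
    // Idempotent: installs only when the command is missing.
    installCommand: `if ! ${detectCommand}; then npm install -g ${npmPackage}; fi`,
  };
}

// Only sandbox targets run the install step; SSH and local targets are no-ops.
function installCommandToRun(spec: AdapterRuntimeCommandSpec | undefined, transport: Transport): string | null {
  return transport === "sandbox" && spec?.installCommand ? spec.installCommand : null;
}
```

For `codex` this yields `if ! command -v codex >/dev/null 2>&1; then npm install -g @openai/codex; fi`, while a custom `/opt/bin/my-codex` command gets no install recipe at all.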

## Verification

- `pnpm --filter @paperclipai/adapter-utils test`

- `pnpm --filter @paperclipai/server test -- environment-run-orchestrator`
- `pnpm --filter @paperclipai/sandbox-providers-e2b test`
- Manual QA: run an adapter (claude/codex/etc.) against a fresh sandbox-backed environment that does NOT have the adapter CLI pre-installed. Confirm the install runs once at the start of the agent run and the adapter then launches successfully. Re-run on the same sandbox; confirm the install command is idempotent and the second run starts faster.
- Confirm SSH and local execution paths are unaffected (gated by `transport === "sandbox"`).

## Risks

- Behavioural shift on sandbox runs: a new install step now runs at the start of every sandbox agent run for adapters with `installCommand` set. The install commands are idempotent (`if ! command -v X >/dev/null 2>&1; then npm install -g <pkg>; fi`), so this is fast on warm sandboxes. On a cold sandbox, the first run takes longer.
- Operators who used the legacy project-level `provisionCommand` to install adapter CLIs can drop that part of their script; the adapter handles it now. Existing scripts continue to work because installs are idempotent.
- The cursor-local adapter has no auto-install (no public npm package). Behaviour for cursor-local on sandboxes is unchanged.
- New optional surface on `ServerAdapterModule`. Plugins that don't implement `getRuntimeCommandSpec` retain previous behaviour (no auto-install).

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [ ] If this change affects the UI, I have included before/after screenshots (N/A)
- [ ] I have updated relevant documentation to reflect my changes (N/A)
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies
> - Agents on remote sandboxes call back into the Paperclip control plane via a callback bridge: the host process running the bridge proxies HTTP requests from the sandbox to the Paperclip API
> - When something goes wrong end-to-end (sandbox can't reach Paperclip, requests timing out, malformed responses), it's hard to tell whether the bridge processed the request, what URL/method it used, and what the upstream responded with
> - There was no built-in way to log bridge proxy traffic without modifying adapter code or attaching a debugger
> - This PR adds opt-in stdout logging of every bridge proxy request and response (method, path, query, status), gated behind `PAPERCLIP_BRIDGE_DEBUG` so it stays off by default
> - The benefit is that operators can flip a single env var to get full visibility into bridge traffic when debugging remote runs, without changing code

## What Changed

- `packages/adapter-utils/src/execution-target.ts`: `startAdapterExecutionTargetPaperclipBridge`'s `handleRequest` now logs each proxied request and response when `PAPERCLIP_BRIDGE_DEBUG` is truthy:
  - `[paperclip] Bridge proxy <METHOD> <path>?<query>` before the fetch
  - `[paperclip] Bridge proxy response <status> for <METHOD> <path>?<query>` after it
- Logging is a no-op when the env var is unset, `"0"`, or `"false"`.
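The flag gating and the two log lines can be sketched as follows. `isBridgeDebugEnabled` and `proxyWithDebugLog` are hypothetical helpers; treating the flag case-insensitively, treating an empty string as unset, and omitting the `?` when there is no query are assumptions, not confirmed behavior of `handleRequest`.

```typescript
// Hypothetical sketch of the gating and log format; the real handleRequest may differ.
function isBridgeDebugEnabled(value: string | undefined): boolean {
  if (value === undefined) return false;
  const normalized = value.trim().toLowerCase();
  // Assumption: an empty string is treated like an unset variable.
  return normalized !== "" && normalized !== "0" && normalized !== "false";
}

function formatTarget(method: string, path: string, query: string): string {
  const verb = method.toUpperCase();
  return query ? `${verb} ${path}?${query}` : `${verb} ${path}`;
}

// Wraps one proxied call, emitting a line before and a line after when debugging is on.
async function proxyWithDebugLog(
  debug: boolean,
  method: string,
  path: string,
  query: string,
  forward: () => Promise<{ status: number }>,
  log: (line: string) => void = console.log,
): Promise<{ status: number }> {
  const target = formatTarget(method, path, query);
  if (debug) log(`[paperclip] Bridge proxy ${target}`);
  const response = await forward();
  if (debug) log(`[paperclip] Bridge proxy response ${response.status} for ${target}`);
  return response;
}
```

Evaluating the flag once at bridge start-up and passing it in as `debug` keeps the per-request path free of environment lookups; whether the real bridge does this is not stated in the PR.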

## Verification

- Set `PAPERCLIP_BRIDGE_DEBUG=1` in the host process env, run an agent against a sandbox-backed environment, and confirm the bridge log lines appear in stdout.
- Unset the env var and confirm no extra log lines appear during normal runs.

## Risks

- Off-by-default, no observable change for shipping users.

- When enabled, the logging is verbose: every API call from the sandbox produces two stdout lines. Operators should only enable it during active debugging.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [ ] I have added or updated tests where applicable: covered by exercising the flag in dev; the underlying `handleRequest` behavior is unchanged
- [ ] If this change affects the UI, I have included before/after screenshots (N/A)
- [ ] I have updated relevant documentation to reflect my changes (N/A)
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies
> - Agents run inside execution workspaces (a per-issue cwd + env), and an issue can prefer to reuse an existing workspace or get a fresh one each time
> - The heartbeat service was reading the existing workspace's config to derive environment selection regardless of whether the issue actually wanted to reuse it. So fresh-run issues were inheriting stale config from a workspace that was about to be discarded
> - Separately, when an issue is assigned to an agent, the issue's execution workspace settings weren't picking up the agent's `defaultEnvironmentId`, even though the agent's choice is the natural default for that issue
> - This PR makes both selection paths honor the obvious source of truth: workspace config flows only when the issue actually wants `reuse_existing`, and the assignee agent's default environment is applied at assignment time if nothing else is set on the issue
> - The benefit is that re-running a flaky issue picks up the right environment instead of inheriting the previous run's config, and assigning an agent to an issue does the obvious thing without operator intervention

## What Changed

- `server/src/services/heartbeat.ts`: introduce `reusableExecutionWorkspaceConfig`, which is non-null only when `shouldReuseExisting` is true. Both `resolveExecutionWorkspaceEnvironmentId(...)` and `applyPersistedExecutionWorkspaceConfig(...)` now read from it instead of unconditionally consulting `existingExecutionWorkspace?.config`. Fresh-run issues no longer inherit stale environment config from an in-flight workspace about to be discarded.
- `server/src/services/issues.ts`: when an issue update sets a new `assigneeAgentId` and isolated workspaces are enabled, populate `executionWorkspaceSettings.environmentId` from the assignee agent's `defaultEnvironmentId` if the issue doesn't have an explicit `environmentId` set yet.
- Tests added in `heartbeat-plugin-environment.test.ts` (~216 lines) and `issues-service.test.ts` (~85 lines) covering both paths.
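Both selection rules can be expressed as small pure functions. This is a sketch under stated assumptions: `reusableConfig` and `settingsAfterAssignment` are hypothetical stand-ins for the logic around `reusableExecutionWorkspaceConfig` in `heartbeat.ts` and the assignment path in `issues.ts`, and treating an empty-string `environmentId` as unset is an assumption.

```typescript
// Hypothetical sketch; type and function names beyond those in the PR text are illustrative.
interface WorkspaceConfig {
  environmentId?: string | null;
}

// Rule 1: only a workspace the issue intends to reuse may contribute config.
function reusableConfig(
  shouldReuseExisting: boolean,
  existingConfig: WorkspaceConfig | null | undefined,
): WorkspaceConfig | null {
  return shouldReuseExisting ? (existingConfig ?? null) : null;
}

interface ExecutionWorkspaceSettings {
  environmentId?: string | null;
}

// Rule 2: on assignment, fill environmentId from the agent default unless one is already set.
function settingsAfterAssignment(
  current: ExecutionWorkspaceSettings,
  isolatedWorkspacesEnabled: boolean,
  agentDefaultEnvironmentId: string | null | undefined,
): ExecutionWorkspaceSettings {
  const hasExplicit = current.environmentId != null && current.environmentId !== "";
  if (!isolatedWorkspacesEnabled || hasExplicit || !agentDefaultEnvironmentId) return current;
  return { ...current, environmentId: agentDefaultEnvironmentId };
}
```

Keeping rule 1 as a single nullable value means every downstream reader (environment resolution and persisted-config application) gets the same answer, which is the point of routing both through one variable.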

## Verification

- `pnpm --filter @paperclipai/server test -- heartbeat-plugin-environment issues-service`
- Manual QA: assign an issue to an agent that has a non-default `defaultEnvironmentId` and confirm the issue's workspace settings now include that environment id without operator intervention. Trigger a rerun on an issue whose existing workspace points at a stale environment and confirm the rerun uses the freshly resolved environment.

## Risks

- Behavioural shift on assignment: previously, assigning an agent didn't propagate the agent's default environment to the issue. Now it does. Callers that explicitly want the issue to keep its existing/null environment must set `executionWorkspaceSettings.environmentId` themselves; the new logic only fires when no explicit value is set.
- Behavioural shift on rerun: stale workspace config is no longer applied to fresh runs. Operators who relied on this implicit inheritance may see different environment selection on the first rerun after deploy. Mitigation: the explicit issue settings and project policy are still honored as before.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change
- [x] I have specified the model used (with version and capability details)
- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work
- [x] I have run tests locally and they pass
- [x] I have added or updated tests where applicable
- [ ] If this change affects the UI, I have included before/after screenshots (N/A: no UI changes)
- [ ] I have updated relevant documentation to reflect my changes (N/A)
- [x] I have considered and documented any risks above
- [x] I will address all Greptile and reviewer comments before requesting merge

> **Stacked PR (part 7 of 7).** Depends on:
>
> - PR #5114
> - PR #5115
> - PR #5116
> - PR #5117
> - PR #5118
> - PR #5119
>
> Diff against `master` includes commits from earlier PRs in the stack; the new commit in this PR is the topmost one.

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies
> - The Pi adapter persists a session jsonl per agent so subsequent runs resume conversation context instead of starting cold
> - SSH testing reproduced a real failure: a verification issue reached terminal `done` and the agent claimed success, but the proof artifact `manual-qa/environment-matrix/ssh/pi_local.md` was missing from the realized SSH workspace on the QA target box
> - Root cause: the saved session header recorded a different cwd than the new execution cwd, but the resume eligibility check only compared the session-params cwd via local-style `path.resolve` (which doesn't round-trip remote POSIX paths). The stale session got resumed and writes landed in the wrong cwd
> - This PR tightens resume eligibility for remote targets: it adds remote-aware cwd normalisation, reads the first line of the session jsonl over SSH (`head -n 1`) to verify the saved header cwd, and only resumes when both the session-params cwd *and* the on-disk header cwd match the realised execution cwd. Stale sessions are skipped silently and the run starts cold
> - The benefit is that Pi runs across cwd-changing environments stop accidentally resuming each other's sessions, and proof artifacts land where reviewers expect them

## What Changed

- Added `normalizeExecutionCwd`, `executionCwdsMatch`, `readSessionHeaderCwd`, and `readSavedSessionCwd` helpers in `pi-local/src/server/execute.ts`

- `readSavedSessionCwd` reads the first line of the session jsonl: locally via `fs.readFile`, remotely via `runAdapterExecutionTargetShellCommand` (`head -n 1`)

- Resume eligibility now requires:

  1. Saved session id is non-empty

  2. Execution target shape matches (existing check)

  3. Session-params cwd matches the realised execution cwd

  4. Session-header cwd (from the on-disk jsonl) matches the realised execution cwd

- Stale sessions are skipped silently (run starts cold) instead of resumed

- `execute.remote.test.ts` extended with: matching header → resume; mismatched header → start fresh; missing/unreadable header → start fresh; remote `head` command failure → start fresh
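The four eligibility checks can be pictured as a pure function. This is a sketch under assumptions: it treats the first jsonl line as a JSON object with a top-level `cwd` field and assumes remote targets use absolute POSIX paths. The helper names echo the PR, but these bodies and `canResumeSession` are illustrative, not the adapter's implementation.

```typescript
// Illustrative POSIX normalisation: collapse "//", resolve "." and "..",
// drop trailing slashes. It never consults the host's working directory.
function normalizeExecutionCwd(cwd: string): string | null {
  const trimmed = cwd.trim();
  if (!trimmed.startsWith("/")) return null; // only absolute paths are comparable
  const parts: string[] = [];
  for (const segment of trimmed.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") parts.pop();
    else parts.push(segment);
  }
  return "/" + parts.join("/");
}

function executionCwdsMatch(a: string | null | undefined, b: string | null | undefined): boolean {
  if (!a || !b) return false;
  const na = normalizeExecutionCwd(a);
  return na !== null && na === normalizeExecutionCwd(b);
}

// Assumed header shape: {"cwd":"/home/...", ...}. Anything else counts as unreadable.
function readSessionHeaderCwd(firstLine: string | null): string | null {
  if (!firstLine) return null;
  try {
    const header: unknown = JSON.parse(firstLine);
    if (typeof header === "object" && header !== null) {
      const cwd = (header as { cwd?: unknown }).cwd;
      if (typeof cwd === "string") return cwd;
    }
  } catch {
    // Malformed JSON: fall through to null.
  }
  return null;
}

interface ResumeCandidate {
  sessionId: string | null;
  targetShapeMatches: boolean; // result of the existing target-shape check
  sessionParamsCwd: string | null;
  sessionHeaderCwd: string | null;
}

// Hypothetical: all four conditions must hold, otherwise the run starts cold.
function canResumeSession(c: ResumeCandidate, executionCwd: string): boolean {
  return (
    !!c.sessionId && c.sessionId.trim() !== "" &&
    c.targetShapeMatches &&
    executionCwdsMatch(c.sessionParamsCwd, executionCwd) &&
    executionCwdsMatch(c.sessionHeaderCwd, executionCwd)
  );
}
```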

## Verification

- `pnpm --filter @paperclipai/adapter-pi-local test`

- `pnpm test -- pi-local`

- Manual QA: ran a Pi agent twice in two different remote cwds, confirmed the second run did not pick up the first run's session and that subsequent runs in the original cwd still resumed correctly

## Risks

- Adds a `head -n 1` shell call per Pi run on remote targets. Latency is negligible (a single read of the session jsonl) and bounded by a 15s timeout.

- If the `head` call fails for unrelated reasons (e.g. transient remote unreachability), the run starts cold instead of resuming. This is the safe default, but worth noting: operators may see one extra cold run if a remote glitches mid-session.

- No data is deleted or migrated; stale sessions remain on disk for manual inspection if desired.
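The remote header read and its "fail to cold start" behaviour can be sketched in two small functions. This assumes the runner returns a result shaped like `{ exitCode, stdout, timedOut }` and enforces the 15s timeout itself; `buildSessionHeaderCommand` and `headerLineFromProbe` are hypothetical names, not adapter exports.

```typescript
// POSIX single-quote escaping: wrap in '...' and turn each ' into '\''.
function shellQuote(value: string): string {
  return "'" + value.replace(/'/g, "'\\''") + "'";
}

// Prints only the first line (the session header) of the jsonl.
function buildSessionHeaderCommand(sessionFile: string): string {
  return `head -n 1 ${shellQuote(sessionFile)}`;
}

interface ProbeResult {
  exitCode: number | null; // null if the process never exited normally
  stdout: string;
  timedOut: boolean;
}

// Timeout, non-zero exit, or empty output all yield null, which the caller
// treats as "header unreadable" and therefore starts the run cold.
function headerLineFromProbe(result: ProbeResult): string | null {
  if (result.timedOut || result.exitCode !== 0) return null;
  const firstLine = (result.stdout.split("\n")[0] ?? "").trim();
  return firstLine === "" ? null : firstLine;
}
```

Quoting the path keeps workspace directories with spaces or quotes from breaking the remote command.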

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots: N/A

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

> **Stacked PR (part 6 of 7).** Depends on:

- PR #5114

- PR #5115

- PR #5116

- PR #5117

- PR #5118

> Diff against `master` includes commits from earlier PRs in the stack; the new commit in this PR is the topmost one.

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies

> - The OpenCode adapter validates that its configured model exists before letting a run start, so misconfiguration fails fast with a clear error

> - SSH testing reproduced an OpenCode failure where issues stayed `backlog`, timed out, and produced no comments. The root cause was in `packages/adapters/opencode-local/src/server/execute.ts`: the local model guard `ensureOpenCodeModelConfiguredAndAvailable(...)` only ran when execution was *not* remote, so SSH OpenCode bypassed it and failed silently later

> - Subsequent testing surfaced a related remote-only failure where the probe (when wired up naively) hits `EACCES: permission denied, mkdir '/var/folders'` on the SSH box because of how OpenCode's runtime config picks a tempdir

> - This PR runs the model probe on the actual execution target (`opencode models` via `runAdapterExecutionTargetProcess`) instead of the local CLI, parses the output with the shared `parseOpenCodeModelsOutput` helper, and reports a concrete error naming the offending model and a sample of available remote models when the configured model isn't present

> - The benefit is that mismatched OpenCode models surface as a clear pre-flight error referencing the remote target instead of a silent run that never leaves `backlog`

## What Changed

- Added `ensureRemoteOpenCodeModelConfiguredAndAvailable` in `opencode-local/src/server/execute.ts`, which runs `opencode models` via `runAdapterExecutionTargetProcess` and validates that the configured model is in the parsed output

- `models.ts` now exports `parseOpenCodeModelsOutput` and `requireOpenCodeModelId` so the remote path can reuse them

- `execute.ts` calls the remote variant when `executionTargetIsRemote`, otherwise the existing local `ensureOpenCodeModelConfiguredAndAvailable`

- Errors include the offending model id and a sample of available remote models so the operator knows exactly what's missing

- `execute.remote.test.ts` extended with cases for: probe timeout, probe non-zero exit, empty model list, and missing-model error
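The remote check reduces to: reject a failed probe, parse the output, and fail with a sample if the configured id is absent. The sketch assumes `opencode models` prints one `provider/model` id per line; `parseModelLines` and `assertRemoteModelAvailable` are hypothetical stand-ins for `parseOpenCodeModelsOutput` and the new ensure function, whose real signatures aren't shown here.

```typescript
// Assumed output format: one provider/model id per non-empty line.
function parseModelLines(stdout: string): string[] {
  return stdout
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.includes("/"));
}

interface ModelProbe {
  exitCode: number | null;
  stdout: string;
  timedOut: boolean;
}

// Throws a descriptive error for each failure mode the PR's tests cover.
function assertRemoteModelAvailable(model: string, probe: ModelProbe, targetLabel: string, sampleSize = 5): void {
  if (probe.timedOut) {
    throw new Error(`Timed out listing OpenCode models on ${targetLabel}`);
  }
  if (probe.exitCode !== 0) {
    throw new Error(`"opencode models" exited with code ${probe.exitCode} on ${targetLabel}`);
  }
  const available = parseModelLines(probe.stdout);
  if (available.length === 0) {
    throw new Error(`No OpenCode models reported on ${targetLabel}`);
  }
  if (!available.includes(model)) {
    const sample = available.slice(0, sampleSize).join(", ");
    throw new Error(`Model "${model}" is not available on ${targetLabel}. Available (sample): ${sample}`);
  }
}
```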

## Verification

- `pnpm --filter @paperclipai/adapter-opencode-local test`

- `pnpm test -- opencode-local`

- Manual QA: configured an OpenCode agent with a model that exists locally but not in the remote sandbox, and confirmed the new error fires before the run starts and references the remote target

## Risks

- New behaviour: remote model validation adds an `opencode models` call (with a ~20s timeout) on every remote run start. This is fast for most environments, but a network-slow sandbox could see higher startup latency; the timeout bounds the worst case.

- If the remote CLI is missing or misconfigured, the new error replaces the old generic startup failure: the message is clearer, but the failure point shifts earlier. Monitor for any QA flows that relied on the old failure shape.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots: N/A

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

> **Stacked PR (part 5 of 7).** Depends on:

- PR #5114

- PR #5115

- PR #5116

- PR #5117

> Diff against `master` includes commits from earlier PRs in the stack; the new commit in this PR is the topmost one.

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies

> - Agents run with a Paperclip-shaped environment (`PAPERCLIP_WORKSPACE_CWD`, worktree path, `PAPERCLIP_WORKSPACES_JSON` hints) so the CLI can locate the correct project tree

> - SSH testing reproduced a real failure: a Codex SSH run wrote to `/tmp/paperclip-env-matrix-...` (the *host* path) instead of the realized remote workspace at `/home/<user>/paperclip-env-matrix-ssh-claude/...` because the adapter injected `PAPERCLIP_WORKSPACE_CWD=/tmp/...` into the remote env

> - Code review on the initial codex-only fix asked to roll the same approach into every other SSH-capable adapter (claude, acpx, cursor, opencode, gemini, pi) via a shared helper rather than duplicating it per adapter

> - This PR adds `shapePaperclipWorkspaceEnvForExecution` in adapter-utils which, when the execution target is remote, replaces the local cwd with the realized execution cwd, nulls out the worktree path (which has no remote meaning), and rewrites/strips `cwd` entries in workspace hints based on what was actually synced. Every adapter calls it before invoking the remote runner

> - The benefit is that remote runs see the realized remote workspace, host-local paths stop leaking into the remote env, and the rule is unit-tested in one place

## What Changed

- Added `shapePaperclipWorkspaceEnvForExecution` to `packages/adapter-utils/src/server-utils.ts` with full unit coverage (`server-utils.test.ts`)

- Each of acpx-local, claude-local, codex-local, cursor-local, gemini-local, opencode-local, and pi-local now calls the new shaper before issuing the remote command and feeds the shaped values into `applyPaperclipWorkspaceEnv`

- Per-adapter `execute.remote.test.ts` files extended to cover the new shaping behaviour: localhost paths replaced with the remote cwd, foreign-cwd hints stripped, worktree path nulled out for remote targets

- `acpx-local/src/server/execute.test.ts` extended with shaping coverage
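A minimal sketch of the remote shaping rule, assuming hints are objects with an optional `cwd` and that a hint whose cwd equals the local workspace cwd is the one that was synced to the remote cwd. The input/output shapes and the `shapeWorkspaceEnvSketch` name are hypothetical; the real helper in `server-utils.ts` may differ.

```typescript
interface WorkspaceHint {
  name: string;
  cwd?: string;
}

interface WorkspaceEnvInput {
  targetIsRemote: boolean;
  localCwd: string;
  remoteCwd: string; // realized execution cwd on the remote target
  worktreePath: string | null;
  hints: WorkspaceHint[];
}

interface WorkspaceEnvOutput {
  cwd: string;
  worktreePath: string | null;
  hints: WorkspaceHint[];
}

// Hypothetical stand-in for shapePaperclipWorkspaceEnvForExecution.
function shapeWorkspaceEnvSketch(input: WorkspaceEnvInput): WorkspaceEnvOutput {
  // Local runs pass through unchanged.
  if (!input.targetIsRemote) {
    return { cwd: input.localCwd, worktreePath: input.worktreePath, hints: input.hints };
  }
  const hints = input.hints.map((hint): WorkspaceHint => {
    if (hint.cwd === undefined) return hint;
    // The synced workspace maps onto the realized remote cwd.
    if (hint.cwd === input.localCwd) return { ...hint, cwd: input.remoteCwd };
    // Any other host-local cwd has no remote meaning, so drop it.
    return { name: hint.name };
  });
  // Worktree paths are host-only, so they are nulled on remote targets.
  return { cwd: input.remoteCwd, worktreePath: null, hints };
}
```

Keeping this a pure function is what allows the rule to be unit-tested once rather than per adapter.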

## Verification

- `pnpm test -- server-utils execute.remote`

- `pnpm --filter @paperclipai/adapter-acpx-local test`

- Manual QA reproducing the original failure:

  1. Provision an E2B sandbox environment for the Paperclip QA company

  2. Assign an issue to a remote-targeted claude-local agent and confirm the run starts in the correct remote cwd (no `/Users/...` path leakage in the run logs)

  3. Repeat for opencode-local and pi-local

## Risks

- Behavioural shift: hints whose `cwd` doesn't match the workspace cwd are now stripped on remote targets. If any adapter relied on a leaked local hint cwd, it will see a missing `cwd` instead. Reviewed all current callers; none do.

- Adds a small per-run cost (path resolve + string normalisation) on every remote execution. Negligible.

- Worktree path is now nulled out on remote targets (it has no meaning there). Adapters that previously read the value defensively will continue to work.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots: N/A

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

> **Stacked PR (part 4 of 7).** Depends on:

- PR #5114

- PR #5115

- PR #5116

> Diff against `master` includes commits from earlier PRs in the stack; the new commit in this PR is the topmost one.

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies

> - When creating an OpenCode-local agent, Paperclip currently validates `adapterConfig.model` against the *Paperclip host's* `opencode models` output

> - SSH testing surfaced that this blocks creating an OpenCode agent for an SSH environment: the model that exists on the SSH target isn't visible to the host, so creation fails with "OpenCode requires `adapterConfig.model` in provider/model format" even when the operator picked a real remote model

> - The initial direction was environment-aware model discovery; the final decision was to keep OpenCode on the same explicit-model pattern as other adapters (default + curated list + manual override) and stop blocking creation on host-side discovery

> - This PR does both: the adapter-models endpoint now accepts `environmentId` and probes against the target environment, and the create-time hard gate is replaced by `requireOpenCodeModelId`, which validates `provider/model` *format* without requiring host-local discovery. Test/run-time still surfaces real auth/availability problems

> - The benefit is that operators can create OpenCode agents for remote environments without out-of-band setup, and the model picker in the UI reflects the actually-targeted environment

## What Changed

- Added `requireOpenCodeModelId(input)` in `opencode-local/src/server/models.ts` and exported it from the adapter index

- `ensureOpenCodeModelConfiguredAndAvailable` now delegates the format check to `requireOpenCodeModelId`

- `agentsApi.adapterModels(companyId, adapterType, { environmentId })` now accepts an environment ID and passes it as a query parameter

- `queryKeys.agents.adapterModels` now keys on `(companyId, adapterType, environmentId)`

- `server/src/routes/agents.ts` reads and validates the new query parameter, forwarding it to the adapter's model probe

- `AgentConfigForm.tsx` and `OnboardingWizard.tsx` build the model query key from the currently selected default environment ID and disable autodetect for `opencode_local` (model selection is explicit)

- `NewAgent.tsx` simplified: it no longer special-cases OpenCode autodetect

- `company-portability.ts` no longer needs OpenCode-specific autodetect handling

- Tests added/updated: `adapter-model-refresh-routes.test.ts`, `adapter-models.test.ts`, `agent-permissions-routes.test.ts`, `opencode-local/src/server/models.test.ts`
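A format-only check in the spirit of `requireOpenCodeModelId` might look like the sketch below. It assumes a valid id is `provider/model` with non-empty parts and splits on the first `/`, so model names that themselves contain slashes are accepted; `requireModelIdSketch` and its error text are hypothetical, not the adapter's.

```typescript
interface OpenCodeModelId {
  provider: string;
  model: string;
  id: string;
}

// Validates format only; availability is left to test/run time.
function requireModelIdSketch(input: unknown): OpenCodeModelId {
  if (typeof input !== "string" || input.trim() === "") {
    throw new Error("OpenCode requires adapterConfig.model in provider/model format");
  }
  const id = input.trim();
  const slash = id.indexOf("/");
  // Split on the first slash: "router/vendor/model" -> provider "router".
  const provider = slash > 0 ? id.slice(0, slash) : "";
  const model = slash > 0 ? id.slice(slash + 1) : "";
  if (provider === "" || model === "") {
    throw new Error(`Invalid OpenCode model "${id}": expected provider/model`);
  }
  return { provider, model, id };
}
```

Because nothing here touches the host CLI, the same check works whether the agent targets the local machine or a remote environment.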

## Verification

- `pnpm --filter @paperclipai/server test -- adapter-models adapter-model-refresh agent-permissions`

- `pnpm --filter @paperclipai/adapter-opencode-local test`

- `pnpm --filter @paperclipai/ui test -- AgentConfigForm OnboardingWizard NewAgent`

- Manual QA in browser:

  1. Boot Paperclip on a Tailscale-bound port (so it's reachable from another machine), create an OpenCode-local agent, switch the default environment between two installed sandboxes, and confirm the model list refreshes per-environment

  2. Submit with a malformed `provider/model` string and verify the new `requireOpenCodeModelId` error surfaces

- Before/after screenshots attached for `AgentConfigForm` model picker

## Risks

- Behavioural shift: switching the default environment now triggers a model refetch. This should be cheap, but it introduces a new UI loading state for OpenCode users.

- Removing dynamic autodetect for OpenCode: if any user configured an agent without specifying `model` and relied on autodetect to populate it, that agent will now fail at submit time. Mitigation: the validation error is explicit and actionable.

- New query string parameter on `/api/companies/:id/adapter-models`: older clients that omit it still work (the parameter is optional and defaults to null).
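The backwards-compatible parameter and the environment-aware cache key can be sketched as below. This assumes an Express-style query value (string, array, or absent) and treats any non-blank string as a valid id, since the PR does not state the exact validation; `parseEnvironmentIdParam` and `adapterModelsQueryKey` are hypothetical helpers, and the key's leading segments are illustrative.

```typescript
// Absent or blank -> null, so older clients keep working.
// Repeated (?environmentId=a&environmentId=b) or non-string values are rejected.
function parseEnvironmentIdParam(value: unknown): string | null {
  if (value === undefined || value === null) return null;
  if (typeof value !== "string") {
    throw new Error("environmentId must be a single string");
  }
  const trimmed = value.trim();
  return trimmed === "" ? null : trimmed;
}

// Including environmentId in the key means switching the default environment
// yields a different key, which is what triggers the model refetch.
function adapterModelsQueryKey(companyId: string, adapterType: string, environmentId: string | null) {
  return ["agents", "adapterModels", companyId, adapterType, environmentId] as const;
}
```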

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [x] If this change affects the UI, I have included before/after screenshots

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

> **Stacked PR (part 3 of 7).** Depends on:

- PR #5114

- PR #5115

> Diff against `master` includes commits from earlier PRs in the stack; the new commit in this PR is the topmost one.

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies

> - Agents executing on a remote SSH-backed environment need a way to call back into the Paperclip control plane (run events, log streaming, signals)

> - When the SSH host can't reach the Paperclip host (NAT, firewalls, or simply not on the same network), the run silently fails or hangs, a recurring class of failure during SSH testing

> - In sandboxed environments we already solved this with a callback bridge that tunnels back through the existing connection; SSH was the odd one out

> - This PR migrates SSH execution to use the same callback bridge, so every adapter's remote run uses one consistent reverse channel. Per-adapter SSH glue is deleted in favour of a shared `CommandManagedRuntimeRunner` built from the SSH spec

> - The benefit is fewer SSH-specific failure modes, a smaller code surface, and one place to evolve the callback contract going forward

## What Changed

- Added `createSshCommandManagedRuntimeRunner` in `packages/adapter-utils/src/ssh.ts`, which adapts an SSH spec into a generic command-managed-runtime runner (with cwd, env, and timeout handling)

- Removed `paperclipApiUrl` from `SshRemoteExecutionSpec`; the bridge URL now flows through the shared runner

- Reworked `execution-target.ts` to use the SSH runner alongside sandbox runners via a unified `CommandManagedRuntimeRunner` interface

- Simplified `remote-managed-runtime.ts` and `sandbox-managed-runtime.ts` to consume the shared runner abstraction

- Deleted per-adapter SSH callback wiring from the claude-local, codex-local, cursor-local, gemini-local, opencode-local, and pi-local `execute.ts` files

- Removed `environment-runtime-driver-contract.test.ts` (the contract is now enforced by `environment-execution-target.test.ts`)

- Added/updated `execute.remote.test.ts` cases for each adapter to cover the SSH runner path
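One way to picture an SSH-backed command runner is as a function that turns an SSH spec plus a command request into an `ssh` argv, folding cwd and env into the remote command string. The `SshSpec`/`RunRequest` shapes, `buildSshArgv`, and the `sh -lc` wrapper are assumptions for illustration, not the shape of `createSshCommandManagedRuntimeRunner`; the timeout is left to whatever spawns the process.

```typescript
interface SshSpec {
  host: string;
  user: string;
  port?: number;
  identityFile?: string;
}

interface RunRequest {
  command: string;
  cwd: string;
  env: Record<string, string>;
}

// POSIX single-quote escaping for the remote shell.
function shellQuote(value: string): string {
  return "'" + value.replace(/'/g, "'\\''") + "'";
}

// Hypothetical: builds argv for spawning `ssh`; the spawn wrapper enforces timeouts.
function buildSshArgv(spec: SshSpec, req: RunRequest): string[] {
  const envAssignments = Object.entries(req.env)
    .filter(([key]) => /^[A-Za-z_][A-Za-z0-9_]*$/.test(key)) // skip names a shell can't assign
    .map(([key, value]) => `${key}=${shellQuote(value)}`)
    .join(" ");
  const envPrefix = envAssignments ? `env ${envAssignments} ` : "";
  const remoteCommand = `cd ${shellQuote(req.cwd)} && ${envPrefix}sh -lc ${shellQuote(req.command)}`;
  const argv = ["-o", "BatchMode=yes"]; // never prompt interactively during a run
  if (spec.port !== undefined) argv.push("-p", String(spec.port));
  if (spec.identityFile) argv.push("-i", spec.identityFile);
  argv.push(`${spec.user}@${spec.host}`, remoteCommand);
  return argv;
}
```

Folding cwd and env into one quoted remote command keeps the runner interface identical to the sandbox runners, which only need a command string.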

## Verification

- `pnpm --filter @paperclipai/adapter-utils test`

- `pnpm test -- execute.remote` (covers all six local adapters' SSH paths)

- Manual QA: ran a claude-local agent against an SSH-backed environment and confirmed the agent successfully called back to `/api/agent-callback/*` endpoints during the run

## Risks

- The refactor touches all six local adapters. If any adapter had subtle SSH-specific behaviour that wasn't captured in tests, it could regress. Mitigation: each adapter's `execute.remote.test.ts` was extended.

- Removing `paperclipApiUrl` from `SshRemoteExecutionSpec` is a breaking type change for any internal consumer. Verified that no external plugins consume this type.

- The new `CommandManagedRuntimeRunner` shape is a public surface in `@paperclipai/adapter-utils`; downstream plugins implementing custom runners may need updates, but no such plugins exist in this repo.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots: N/A

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for zero-human companies

> - Agents execute in sandboxed remote environments served by pluggable sandbox providers (E2B today, more later)

> - Today every sandbox command runs under `sh -lc` regardless of what the provider's container actually ships

> - That misses bash-only shell init on E2B (which ships bash) and prevents future providers from declaring a different default: there's no way for a provider to say "I have bash, use it"

> - This PR adds a `shellCommand` field to sandbox execution targets so providers can declare their preferred shell ("bash" for E2B), threads it through the sandbox-managed-runtime client, callback bridge, and execution-target shell helper, and validates the value at the lease-metadata boundary

> - The benefit is that sandbox commands run under the right shell on the right provider, and adding a new sandbox provider only requires declaring a shell preference

## What Changed

- Added `packages/adapter-utils/src/sandbox-shell.ts` exporting `preferredShellForSandbox(shellCommand)` (returns `"bash"` if the input is `"bash"`, else `"sh"`)

- Added `shellCommand?: "bash" | "sh" | null` to `AdapterSandboxExecutionTarget` and `CommandManagedRuntimeSpec`; threaded it through `runAdapterExecutionTargetShellCommand`, `prepareAdapterExecutionTargetRuntime`, and `startAdapterExecutionTargetPaperclipBridge`

- `createCommandManagedRuntimeClient`, `prepareCommandManagedRuntime`, and `createCommandManagedSandboxCallbackBridgeQueueClient` now take an optional `shellCommand` and use `preferredShellForSandbox` to pick the shell

- `startSandboxCallbackBridgeServer` accepts a `shellCommand` for its server startup, readiness probe, and stop hook

- E2B sandbox plugin declares `shellCommand: "bash"` in `leaseMetadata`

- `resolveEnvironmentExecutionTarget` reads `shellCommand` from lease metadata (validating against `"bash" | "sh" | null`)

- `environment-runtime.ts` adds `"shellCommand"` to `INTERNAL_PLUGIN_SANDBOX_CONFIG_KEYS` so the field round-trips through internal plugin config without leaking to external plugin metadata

- Updated tests in `command-managed-runtime.test.ts`, `execution-target-sandbox.test.ts`, `sandbox-callback-bridge.test.ts`, `environment-execution-target.test.ts`
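The shell-selection rule is small enough to show in full. `preferredShellForSandbox` below follows the behaviour described above; `readShellCommandFromLease` and `wrapSandboxCommand` are hypothetical helpers, and throwing on an unrecognised lease value is an assumption about what "validating" means at that boundary.

```typescript
type SandboxShell = "bash" | "sh";

// "bash" only when explicitly requested; everything else falls back to "sh".
function preferredShellForSandbox(shellCommand: string | null | undefined): SandboxShell {
  return shellCommand === "bash" ? "bash" : "sh";
}

// Hypothetical lease-metadata reader: missing -> null, invalid -> error.
function readShellCommandFromLease(metadata: Record<string, unknown>): SandboxShell | null {
  const value = metadata["shellCommand"];
  if (value === undefined || value === null) return null;
  if (value === "bash" || value === "sh") return value;
  throw new Error(`Invalid shellCommand in lease metadata: ${JSON.stringify(value)}`);
}

function shellQuote(value: string): string {
  return "'" + value.replace(/'/g, "'\\''") + "'";
}

// Hypothetical: wraps a command as `<shell> -lc '<command>'` for the sandbox.
function wrapSandboxCommand(command: string, shellCommand: SandboxShell | null): string {
  return `${preferredShellForSandbox(shellCommand)} -lc ${shellQuote(command)}`;
}
```

A lease without `shellCommand` resolves to `null` and therefore to `sh`, matching the backwards-compatibility note in Risks.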

## Verification

- `pnpm --filter @paperclipai/adapter-utils test`

- `pnpm --filter @paperclipai/server test -- environment-execution-target`

- `pnpm --filter @paperclipai/sandbox-providers-e2b test`

- Manual QA: boot a Paperclip instance, create an E2B-backed environment, run a claude_local agent against it, and confirm the run completes (verifies bash shell semantics flow through the callback bridge end-to-end)

## Risks

- E2B sandbox commands now run under `bash -lc` instead of `sh -lc`. Bash is a strict superset for the commands we issue (no busybox-only flags in our shell scripts), so risk is low. The `shellCommand` field is opt-in via lease metadata; providers that don't declare it stay on `sh`.

- New optional field on `CommandManagedRuntimeSpec` and `AdapterSandboxExecutionTarget`. Consumers ignoring the field retain the previous behaviour (`sh`).

- Lease metadata now carries an additional field. Existing leases without `shellCommand` resolve to `null` and fall back to `sh`, so the change is backwards compatible.

## Model Used

- OpenAI GPT-5.4 (reasoning effort: high) via Codex CLI

- Provider: OpenAI

- Used to author the code changes in this PR

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots: N/A (no UI changes)

- [ ] I have updated relevant documentation to reflect my changes: N/A

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

## Thinking Path

> - Paperclip orchestrates AI agents for autonomous companies, and heartbeat execution is the control-plane loop that keeps assigned work moving.

> - Max-turn exhaustion is a recoverable local-adapter stop condition for Claude and Gemini agents when a run needs another heartbeat to continue safely.

> - The previous behavior could leave max-turn continuation details hard to inspect, and duplicate/stale continuation wakes could keep running after issue state changed.

> - The adapter layer also needed to avoid trusting arbitrary stdout/stderr text as scheduler control metadata.

> - This pull request adds bounded max-turn continuation scheduling, visible retry state, structured stop metadata handling, and stale/duplicate continuation guards.

> - The benefit is safer automatic continuation after max-turn stops, clearer operator visibility, and fewer duplicate or stale agent runs.

## What Changed

- Replaces closed PR #4952, whose head repository was deleted.

- Rebases the recovered max-turn continuation branch onto current `paperclipai/paperclip:master`.

- Adds max-turn continuation scheduling and retry-state plumbing for heartbeat runs.

- Adds stale/duplicate continuation suppression when issue status, ownership, or execution locks change.

- Normalizes Claude/Gemini max-turn detection around structured stop metadata instead of unstructured stdout/stderr text.

- Surfaces max-turn continuation settings and retry visibility in the board UI.

- Adds focused server, adapter, and UI tests for max-turn stop metadata, retry scheduling, stale queued-run invalidation, adapter parsing/execution, run ledger display, and agent config patching.
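The bounded scheduling and stale/duplicate guards can be sketched as pure predicates. The snapshot fields, the `maxContinuations` cap, and all function names are assumptions for illustration; the PR does not spell out exactly which fields the heartbeat service compares.

```typescript
interface IssueSnapshot {
  status: string;
  assigneeAgentId: string | null;
  executionLockRunId: string | null;
}

interface QueuedContinuation {
  agentId: string;
  sourceRunId: string;
  scheduledAgainst: IssueSnapshot; // issue state captured at scheduling time
}

// Hypothetical bound: continue only while under the configured cap.
function shouldScheduleContinuation(attemptsSoFar: number, maxContinuations: number): boolean {
  return maxContinuations > 0 && attemptsSoFar < maxContinuations;
}

// Stale if ownership, status, or the execution lock drifted since scheduling.
function isContinuationStale(c: QueuedContinuation, current: IssueSnapshot): boolean {
  return (
    current.assigneeAgentId !== c.agentId ||
    current.status !== c.scheduledAgainst.status ||
    current.executionLockRunId !== c.scheduledAgainst.executionLockRunId
  );
}

// Duplicate guard: keep only the first continuation per (agent, source run).
function dedupeContinuations(queue: QueuedContinuation[]): QueuedContinuation[] {
  const seen = new Set<string>();
  return queue.filter((c) => {
    const key = `${c.agentId}:${c.sourceRunId}`;
    if (seen.has(key)) return false;
    seen.add(key);
    return true;
  });
}
```

Comparing against a snapshot taken at scheduling time, rather than re-deriving intent later, is what turns a drifted issue into a cancellation instead of a stale run.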

## Verification

- `pnpm install --no-frozen-lockfile` to refresh local dependencies after rebasing onto current `master`.

- `pnpm run preflight:workspace-links && pnpm exec vitest run server/src/__tests__/claude-local-adapter.test.ts server/src/__tests__/claude-local-execute.test.ts server/src/__tests__/gemini-local-adapter.test.ts server/src/__tests__/gemini-local-execute.test.ts server/src/__tests__/heartbeat-retry-scheduling.test.ts server/src/__tests__/heartbeat-stale-queue-invalidation.test.ts server/src/services/heartbeat-stop-metadata.test.ts ui/src/components/IssueRunLedger.test.tsx ui/src/lib/agent-config-patch.test.ts ui/src/lib/runRetryState.test.ts --testTimeout=20000`

- `pnpm --filter @paperclipai/adapter-claude-local typecheck && pnpm --filter @paperclipai/adapter-gemini-local typecheck && pnpm --filter @paperclipai/server typecheck && pnpm --filter @paperclipai/ui typecheck`

- UI screenshot note: the UI changes are limited to config/ledger state rendering rather than layout changes; the component/unit coverage above verifies the rendered behavior.

## Risks

- Medium behavior risk: heartbeat retry gating now suppresses max-turn continuations when issue state or execution locks drift, so any callers that relied on stale continuations running will now see cancellation instead.

- Low adapter risk: Claude/Gemini unstructured text no longer triggers max-turn scheduler metadata, so only structured stop signals and Gemini exit code 53 are trusted.

- No database migrations.
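The "trust only structured signals" rule could look like the sketch below. The `structuredStop.stopReason` field, its `"max_turns"` value, and the adapter-type strings are hypothetical placeholders for whatever the adapters' structured stop metadata actually contains; only the Gemini exit code 53 comes from the text above.

```typescript
interface AdapterRunOutcome {
  adapter: "claude_local" | "gemini_local";
  exitCode: number | null;
  // Hypothetical: parsed from the adapter's structured output, never from free text.
  structuredStop?: { stopReason?: string } | null;
  stdout: string;
  stderr: string;
}

const GEMINI_MAX_TURNS_EXIT_CODE = 53;

function isMaxTurnStop(outcome: AdapterRunOutcome): boolean {
  if (outcome.structuredStop?.stopReason === "max_turns") return true;
  if (outcome.adapter === "gemini_local" && outcome.exitCode === GEMINI_MAX_TURNS_EXIT_CODE) return true;
  // stdout/stderr are deliberately ignored: model or tool output that merely
  // mentions "max turns" must not become scheduler control metadata.
  return false;
}
```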


## Model Used

- OpenAI Codex coding agent, GPT-5-class model, tool-enabled local repository editing and command execution.

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [x] If this change affects the UI, I have included before/after screenshots (not applicable: state/default rendering only; covered by component/unit tests)

- [x] I have updated relevant documentation to reflect my changes (not applicable: no user-facing command or docs contract changed)

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

---------

Co-authored-by: Paperclip <noreply@paperclip.ing>

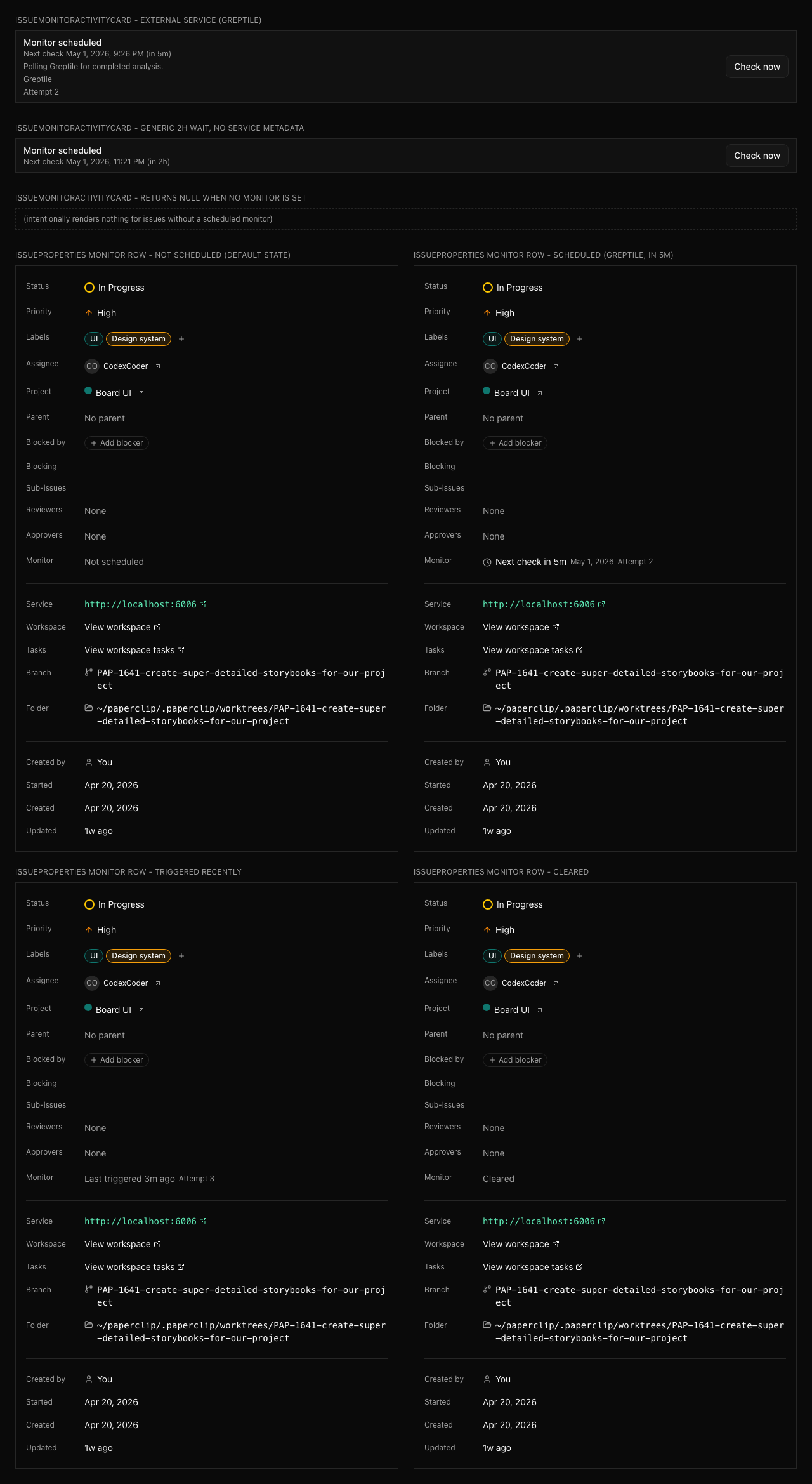

## Thinking Path

> - Paperclip is a control plane for autonomous AI companies where work must stay observable, governable, and recoverable.

> - The task/heartbeat subsystem owns agent execution continuity, issue state transitions, and visible recovery behavior.

> - Waiting on an external service is not the same as being blocked when the assignee still owns a future check.

> - The gap was that agents had no first-class one-shot monitor state for external-service waits, so recovery could look stalled or require ad hoc comments.

> - This pull request adds bounded issue monitors that can wake the owner, clear exhausted waits, and produce explicit recovery behavior.

> - It also surfaces monitor status in the board UI and documents when to use monitors versus `blocked`.

> - The benefit is clearer liveness semantics for asynchronous waits without weakening single-assignee task ownership.

## What Changed

- Added issue monitor fields, shared types, validators, constants, and an idempotent `0075` migration for scheduled monitor state.

- Added server-side monitor scheduling, dispatch, recovery bounds, activity logging, and external-ref redaction.

- Added board/agent route coverage for monitor permissions and child monitor scheduling.

- Added issue detail/property UI for monitor state, a monitor activity card, and Storybook stories for review surfaces.

- Documented monitor semantics and recovery policy behavior in `doc/execution-semantics.md`.

- Addressed Greptile review feedback by preserving monitor state in skipped-stage builders and making board monitor saves send `scheduledBy: "board"`.
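A bounded one-shot monitor boils down to a small decision at each scheduler tick. The state fields, the `maxAttempts` bound, and the action names below are assumptions for illustration; they are not the columns added by the `0075` migration.

```typescript
interface IssueMonitorState {
  nextCheckAt: Date | null; // null = no monitor scheduled
  attempts: number;
  maxAttempts: number;
}

type MonitorAction =
  | { kind: "idle" }
  | { kind: "not_due" }
  | { kind: "wake_owner"; nextAttempt: number }
  | { kind: "exhausted" }; // clear the wait and hand off to recovery

// Hypothetical per-tick decision for one issue's monitor.
function decideMonitorAction(state: IssueMonitorState, now: Date): MonitorAction {
  if (state.nextCheckAt === null) return { kind: "idle" };
  if (now.getTime() < state.nextCheckAt.getTime()) return { kind: "not_due" };
  if (state.attempts >= state.maxAttempts) return { kind: "exhausted" };
  return { kind: "wake_owner", nextAttempt: state.attempts + 1 };
}
```

Making exhaustion an explicit action, rather than silently dropping the monitor, is what gives the wait a visible recovery path instead of a stall.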

## Verification

- `pnpm install --frozen-lockfile`

- `pnpm run preflight:workspace-links && pnpm exec vitest run server/src/__tests__/issue-execution-policy-routes.test.ts server/src/__tests__/issue-execution-policy.test.ts server/src/__tests__/issue-monitor-scheduler.test.ts server/src/__tests__/recovery-classifiers.test.ts ui/src/components/IssueMonitorActivityCard.test.tsx ui/src/components/IssueProperties.test.tsx ui/src/lib/activity-format.test.ts`

- The first run passed 5 files and failed to collect 2 server suites because the worktree was missing the optional `acpx/runtime` dependency.

- After `pnpm install --frozen-lockfile`, reran the 2 failed suites successfully.

- `pnpm exec vitest run server/src/__tests__/issue-monitor-scheduler.test.ts server/src/__tests__/recovery-classifiers.test.ts`

- `pnpm --filter @paperclipai/shared typecheck && pnpm --filter @paperclipai/db typecheck && pnpm --filter @paperclipai/server typecheck && pnpm --filter @paperclipai/ui typecheck`

- `pnpm exec vitest run server/src/__tests__/issue-execution-policy.test.ts ui/src/components/IssueProperties.test.tsx`

- `pnpm --filter @paperclipai/server typecheck && pnpm --filter @paperclipai/ui typecheck`

- `pnpm exec vitest run ui/src/components/IssueMonitorActivityCard.test.tsx ui/src/components/IssueProperties.test.tsx`

- `pnpm --filter @paperclipai/ui typecheck`

- Storybook screenshot captured with Playwright from `http://127.0.0.1:6006/iframe.html?viewMode=story&id=product-issue-monitor-surfaces--monitor-surfaces`.

## Screenshots

## Risks

- Medium: this changes heartbeat recovery behavior for scheduled external-service waits, so regressions could affect wake timing or recovery issue creation.

- Migration risk is reduced by using `IF NOT EXISTS` for the new issue monitor columns and index.

- External monitor references are treated as secret-adjacent and are intentionally omitted from visible activity/wake payloads.
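The omission described above can be pictured with a minimal TypeScript sketch. The payload shape and the `externalRef` key are hypothetical; this PR does not show Paperclip's actual field names. The point it illustrates is that secret-adjacent keys are dropped from the visible copy entirely rather than masked.

```typescript
// Hypothetical monitor payload shape; field names are illustrative only.
interface MonitorPayload {
  issueId: string;
  nextCheckAt: string;
  externalRef?: string; // assumed: an external-service reference (URL, job ID, ...)
  [key: string]: unknown;
}

// Keys treated as secret-adjacent in this sketch.
const SECRET_ADJACENT_KEYS: ReadonlySet<string> = new Set(["externalRef"]);

// Build the copy that is safe to show in activity entries or wake payloads:
// secret-adjacent keys are omitted outright, not masked, so even their
// presence is not revealed.
function toVisiblePayload(payload: MonitorPayload): Record<string, unknown> {
  const visible: Record<string, unknown> = {};
  for (const [key, value] of Object.entries(payload)) {
    if (!SECRET_ADJACENT_KEYS.has(key)) {
      visible[key] = value;
    }
  }
  return visible;
}

const visible = toVisiblePayload({
  issueId: "ISSUE-1",
  nextCheckAt: "2025-01-01T00:00:00Z",
  externalRef: "https://ci.example.com/jobs/123",
});
console.log(Object.keys(visible)); // [ 'issueId', 'nextCheckAt' ]
```

Omitting rather than masking (for example, replacing the value with `"***"`) avoids signalling to readers of the activity feed that an external reference exists at all.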

> For core feature work, check [`ROADMAP.md`](ROADMAP.md) first and discuss it in `#dev` before opening the PR. Feature PRs that overlap with planned core work may need to be redirected, so check the roadmap first. See `CONTRIBUTING.md`.

## Model Used

- OpenAI Codex, GPT-5 coding agent with repository tool use and terminal execution.

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [x] If this change affects the UI, I have included before/after screenshots or Storybook review surfaces

- [x] I have updated relevant documentation to reflect my changes

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

---------

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Thinking Path

> - Paperclip is the control plane for autonomous AI companies.

> - The board UI needs a clear, persistent way to move between company workspaces.

> - The previous layout kept company switching in a separate left rail, which made the sidebar feel split between workspace selection and navigation.

> - The workspace switcher belongs in the sidebar header so navigation and workspace context stay together.

> - This pull request removes the separate company rail from the layout and turns the sidebar company menu into the primary workspace switcher.

> - The benefit is a cleaner sidebar structure that keeps workspace identity, switching, company actions, and navigation in one place.

## What Changed

- Removed the standalone `CompanyRail` from the main layout.

- Added the company/workspace switcher to the default, company settings, and instance settings sidebars.

- Expanded `SidebarCompanyMenu` to list active workspaces, indicate the current workspace, navigate out of instance settings when switching, and expose add-company onboarding.

- Updated focused component tests for the new workspace-switcher behavior.
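A minimal TypeScript sketch of the switcher rules listed above. The `Workspace` shape, the `/instance/` route prefix, and the `/<prefix>/dashboard` path are assumptions for illustration, not Paperclip's confirmed routes or component code, and switching outside instance settings is deliberately left unmodeled.

```typescript
// Hypothetical shapes and routes for illustration only.
interface Workspace {
  id: string;
  prefix: string; // assumed URL prefix, e.g. "ACME"
  status: "active" | "archived";
}

interface SwitcherItem {
  id: string;
  label: string;
  isCurrent: boolean;
}

const INSTANCE_SETTINGS_PREFIX = "/instance/"; // assumed route prefix

// List only active workspaces and flag the one currently selected.
function switcherItems(all: readonly Workspace[], currentId: string): SwitcherItem[] {
  return all
    .filter((w) => w.status === "active")
    .map((w) => ({ id: w.id, label: w.prefix, isCurrent: w.id === currentId }));
}

// Switching while in instance settings leaves them for the selected
// company's dashboard. Elsewhere this sketch returns null, meaning
// "defer to the existing switch behavior", which is not modeled here.
function navigationTargetOnSwitch(currentPath: string, next: Workspace): string | null {
  if (currentPath.startsWith(INSTANCE_SETTINGS_PREFIX)) {
    return `/${next.prefix}/dashboard`;
  }
  return null;
}
```

Keeping the navigation decision in a pure function like this makes it easy to cover in a focused component test without rendering the menu.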

## Verification

- `pnpm --filter @paperclipai/ui exec vitest run src/components/SidebarCompanyMenu.test.tsx src/components/CompanySettingsSidebar.test.tsx`

- `pnpm --filter @paperclipai/ui typecheck`

- `git diff --check`

- Visual smoke attempted against the managed dev server at `http://127.0.0.1:57385`; a fresh browser context only reached the sign-in screen, so I could not capture a screenshot of the sidebar as an authenticated user from this heartbeat.

## Risks

- Low-to-medium UI risk: this changes the primary sidebar structure and the workspace-switching entry point.

- Selecting a workspace while in instance settings now routes to the selected company's dashboard.

- No migrations, API contracts, or lockfile changes.


## Model Used

- OpenAI Codex, GPT-5 coding agent, tool-enabled, medium reasoning mode.

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [ ] If this change affects the UI, I have included before/after screenshots

- [x] I have updated relevant documentation to reflect my changes

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Thinking Path

> - Paperclip agents rely on skills for repeatable operating procedures

> - The Terminal-Bench loop skill needs to preserve enough dispatch configuration to reproduce real heartbeat behavior

> - A bare benchmark command can create unassigned work with no heartbeat-enabled agent, which is a harness setup failure rather than product evidence

> - The Paperclip heartbeat skill also needs to keep escalation biased toward agent-owned follow-through

> - This pull request documents dispatch runner config requirements and strengthens the agent follow-through rule

> - The benefit is fewer misleading benchmark loops and clearer agent operating guidance

## What Changed

- Documented `PAPERCLIP_HARBOR_RUNNER_CONFIG` / runner dispatch config as required Terminal-Bench loop input.

- Updated the Terminal-Bench loop smoke check to require that the skill mention the dispatch config.

- Added stronger Paperclip skill guidance to avoid asking humans for work an agent can perform.
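A hedged TypeScript sketch of the kind of assertion the smoke check makes. Only `PAPERCLIP_HARBOR_RUNNER_CONFIG` comes from this PR; the function names are hypothetical, and this is not the repository's actual smoke script.

```typescript
// The variable name comes from this PR; everything else is a hypothetical
// sketch of the smoke assertion.
const REQUIRED_DISPATCH_MENTIONS: readonly string[] = ["PAPERCLIP_HARBOR_RUNNER_CONFIG"];

// Return the required terms the skill text fails to mention.
function missingDispatchMentions(
  skillText: string,
  required: readonly string[] = REQUIRED_DISPATCH_MENTIONS,
): string[] {
  return required.filter((term) => !skillText.includes(term));
}

// Fail loudly so a future edit cannot silently drop the requirement.
function assertDispatchConfigDocumented(skillText: string): void {
  const missing = missingDispatchMentions(skillText);
  if (missing.length > 0) {
    throw new Error(`Terminal-Bench loop skill must mention: ${missing.join(", ")}`);
  }
}
```

In a real smoke script the text would come from something like `readFileSync(skillPath, "utf8")` from `node:fs`, where `skillPath` is whatever location the repository uses for the skill (not given here).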

## Verification

- `pnpm smoke:terminal-bench-loop-skill`

## Risks

- Low risk: documentation and smoke expectation changes only. The stricter smoke assertion is intentional so future edits do not drop the dispatch config requirement.


## Model Used

- OpenAI Codex, GPT-5 coding agent, tool use and local command execution. The exact context window was not exposed in the runtime.

## Checklist

- [x] I have included a thinking path that traces from project context to this change

- [x] I have specified the model used (with version and capability details)

- [x] I have checked ROADMAP.md and confirmed this PR does not duplicate planned core work

- [x] I have run tests locally and they pass

- [x] I have added or updated tests where applicable

- [x] If this change affects the UI, I have included before/after screenshots

- [x] I have updated relevant documentation to reflect my changes

- [x] I have considered and documented any risks above

- [x] I will address all Greptile and reviewer comments before requesting merge

---------

Co-authored-by: Paperclip <noreply@paperclip.ing>

## Thinking Path

> - Paperclip is a control plane whose database is the durable audit and work record

> - Database backup needs to include operator/plugin schemas while excluding PostgreSQL-owned internals

> - PostgreSQL reserves the `pg_` schema prefix for system schemas, including temp and toast variants

> - A single escaped `pg_` prefix predicate is less brittle than enumerating individual `pg_toast` and `pg_temp` forms

> - This pull request tightens non-system schema discovery for logical backups without changing the normal user/plugin schema path
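The escaping matters because `_` is a single-character wildcard in SQL `LIKE`: an unescaped `'pg_%'` would also exclude a user schema named, say, `pgboss`. Below is a minimal TypeScript sketch. The query text and helper names are assumptions, and the PR's exact predicate (for example, which escape character it uses) is not shown here. This sketch uses an explicit `ESCAPE '!'` so the pattern does not depend on backslash handling in string literals.

```typescript
// Hypothetical schema-discovery query. `!_` matches a literal underscore,
// so only names that really start with "pg_" are excluded. This covers
// pg_catalog, pg_toast, pg_temp_N and pg_toast_temp_N in one predicate.
const NON_SYSTEM_SCHEMAS_SQL = `
  SELECT nspname
  FROM pg_catalog.pg_namespace
  WHERE nspname NOT LIKE 'pg!_%' ESCAPE '!'
  ORDER BY nspname
`;

// In-memory mirror of the escaped predicate, handy for unit tests.
function isPgReservedSchema(name: string): boolean {
  return name.startsWith("pg_");
}

// Keep every schema that is not in the reserved pg_ namespace.
function nonSystemSchemas(names: readonly string[]): string[] {
  return names.filter((n) => !isPgReservedSchema(n)).sort();
}

console.log(
  nonSystemSchemas(["public", "pg_catalog", "pg_toast", "pg_temp_3", "pg_toast_temp_3", "pgboss", "plugin_wiki"]),
); // [ 'pgboss', 'plugin_wiki', 'public' ]
```

One prefix rule replaces a growing list of special cases, and new temp or toast variants are covered automatically.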

## What Changed

- Replaced narrow `pg_toast` and `pg_temp` schema exclusions with an