mirror of

https://github.com/goauthentik/authentik

synced 2026-05-07 15:42:48 +02:00

Compare commits

4 Commits

ci/test-po

...

developer-

| Author | SHA1 | Date | |
|---|---|---|---|
| | c11f407470 | | |
| | b7c6b961a1 | | |
| | e6adb72695 | | |
| | 9cbdcd2cad | | |

267

.github/actions/cherry-pick/action.yml

vendored

@@ -1,267 +0,0 @@

|

||||

name: "Cherry-picker"

|

||||

description: "Cherry-pick PRs based on their labels"

|

||||

|

||||

inputs:

|

||||

token:

|

||||

description: "GitHub Token"

|

||||

required: true

|

||||

git_user:

|

||||

description: "Git user for pushing the cherry-pick PR"

|

||||

required: true

|

||||

git_user_email:

|

||||

description: "Git user email for pushing the cherry-pick PR"

|

||||

required: true

|

||||

|

||||

runs:

|

||||

using: "composite"

|

||||

steps:

|

||||

- name: Check if workflow should run

|

||||

id: should_run

|

||||

shell: bash

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ inputs.token }}

|

||||

run: |

|

||||

set -e -o pipefail

|

||||

# For issues events, check if it's actually a PR

|

||||

if [ "${{ github.event_name }}" = "issues" ]; then

|

||||

# Check if this issue is actually a PR

|

||||

PR_DATA=$(gh api repos/${{ github.repository }}/pulls/${{ github.event.issue.number }} 2>/dev/null || echo "null")

|

||||

if [ "$PR_DATA" = "null" ]; then

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=not_a_pr" >> $GITHUB_OUTPUT

|

||||

echo "This is an issue, not a PR. Skipping."

|

||||

exit 0

|

||||

fi

|

||||

|

||||

# Get PR data

|

||||

PR_MERGED=$(echo "$PR_DATA" | jq -r '.merged')

|

||||

PR_NUMBER="${{ github.event.issue.number }}"

|

||||

MERGE_COMMIT_SHA=$(echo "$PR_DATA" | jq -r '.merge_commit_sha')

|

||||

|

||||

# Check if it's a backport label

|

||||

LABEL_NAME="${{ github.event.label.name }}"

|

||||

if [[ "$LABEL_NAME" =~ ^backport/(.+)$ ]]; then

|

||||

if [ "$PR_MERGED" = "true" ]; then

|

||||

echo "should_run=true" >> $GITHUB_OUTPUT

|

||||

echo "reason=label_added_to_merged_pr" >> $GITHUB_OUTPUT

|

||||

echo "pr_number=$PR_NUMBER" >> $GITHUB_OUTPUT

|

||||

echo "merge_commit_sha=$MERGE_COMMIT_SHA" >> $GITHUB_OUTPUT

|

||||

exit 0

|

||||

else

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=label_added_to_open_pr" >> $GITHUB_OUTPUT

|

||||

echo "Backport label added to open PR. Will run after PR is merged."

|

||||

exit 0

|

||||

fi

|

||||

else

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=non_backport_label" >> $GITHUB_OUTPUT

|

||||

exit 0

|

||||

fi

|

||||

fi

|

||||

|

||||

# For pull_request and pull_request_target events

|

||||

PR_NUMBER="${{ github.event.pull_request.number }}"

|

||||

MERGE_COMMIT_SHA="${{ github.event.pull_request.merge_commit_sha }}"

|

||||

|

||||

# Case 1: PR was just merged (closed + merged = true)

|

||||

if [ "${{ github.event.action }}" = "closed" ] && [ "${{ github.event.pull_request.merged }}" = "true" ]; then

|

||||

echo "should_run=true" >> $GITHUB_OUTPUT

|

||||

echo "reason=pr_merged" >> $GITHUB_OUTPUT

|

||||

echo "pr_number=$PR_NUMBER" >> $GITHUB_OUTPUT

|

||||

echo "merge_commit_sha=$MERGE_COMMIT_SHA" >> $GITHUB_OUTPUT

|

||||

exit 0

|

||||

fi

|

||||

|

||||

# Case 2: Label was added

|

||||

if [ "${{ github.event.action }}" = "labeled" ]; then

|

||||

LABEL_NAME="${{ github.event.label.name }}"

|

||||

# Check if it's a backport label

|

||||

if [[ "$LABEL_NAME" =~ ^backport/(.+)$ ]]; then

|

||||

# Check if PR is already merged

|

||||

if [ "${{ github.event.pull_request.merged }}" = "true" ]; then

|

||||

echo "should_run=true" >> $GITHUB_OUTPUT

|

||||

echo "reason=label_added_to_merged_pr" >> $GITHUB_OUTPUT

|

||||

echo "pr_number=$PR_NUMBER" >> $GITHUB_OUTPUT

|

||||

echo "merge_commit_sha=$MERGE_COMMIT_SHA" >> $GITHUB_OUTPUT

|

||||

exit 0

|

||||

else

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=label_added_to_open_pr" >> $GITHUB_OUTPUT

|

||||

echo "Backport label added to open PR. Will run after PR is merged."

|

||||

exit 0

|

||||

fi

|

||||

else

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=non_backport_label" >> $GITHUB_OUTPUT

|

||||

exit 0

|

||||

fi

|

||||

fi

|

||||

|

||||

echo "should_run=false" >> $GITHUB_OUTPUT

|

||||

echo "reason=unknown" >> $GITHUB_OUTPUT

|

||||

- name: Configure Git

|

||||

if: steps.should_run.outputs.should_run == 'true'

|

||||

shell: bash

|

||||

env:

|

||||

user: ${{ inputs.git_user }}

|

||||

email: ${{ inputs.git_user_email }}

|

||||

run: |

|

||||

git config --global user.name "${user}"

|

||||

git config --global user.email "${email}"

|

||||

- name: Get PR details and extract backport labels

|

||||

if: steps.should_run.outputs.should_run == 'true'

|

||||

id: pr_details

|

||||

shell: bash

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ inputs.token }}

|

||||

run: |

|

||||

set -e -o pipefail

|

||||

PR_NUMBER="${{ steps.should_run.outputs.pr_number }}"

|

||||

|

||||

# Get PR details

|

||||

PR_DATA=$(gh api repos/${{ github.repository }}/pulls/$PR_NUMBER)

|

||||

PR_TITLE=$(echo "$PR_DATA" | jq -r '.title')

|

||||

PR_AUTHOR=$(echo "$PR_DATA" | jq -r '.user.login')

|

||||

|

||||

echo "pr_title=$PR_TITLE" >> $GITHUB_OUTPUT

|

||||

echo "pr_author=$PR_AUTHOR" >> $GITHUB_OUTPUT

|

||||

|

||||

# Determine which labels to process

|

||||

if [ "${{ steps.should_run.outputs.reason }}" = "label_added_to_merged_pr" ]; then

|

||||

# Only process the specific label that was just added

|

||||

if [ "${{ github.event_name }}" = "issues" ]; then

|

||||

LABEL_NAME="${{ github.event.label.name }}"

|

||||

else

|

||||

LABEL_NAME="${{ github.event.label.name }}"

|

||||

fi

|

||||

|

||||

if [[ "$LABEL_NAME" =~ ^backport/(.+)$ ]]; then

|

||||

echo "labels=$LABEL_NAME" >> $GITHUB_OUTPUT

|

||||

else

|

||||

echo "Label $LABEL_NAME does not match backport pattern"

|

||||

echo "labels=" >> $GITHUB_OUTPUT

|

||||

fi

|

||||

else

|

||||

# PR was just merged, process all backport labels

|

||||

LABELS=$(gh pr view $PR_NUMBER --json labels --jq '.labels[].name' | grep '^backport/' | tr '\n' ' ' || true)

|

||||

echo "labels=$LABELS" >> $GITHUB_OUTPUT

|

||||

fi

|

||||

- name: Cherry-pick to target branches

|

||||

if: steps.should_run.outputs.should_run == 'true' && steps.pr_details.outputs.labels != ''

|

||||

shell: bash

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ inputs.token }}

|

||||

run: |

|

||||

set -e -o pipefail

|

||||

PR_NUMBER='${{ steps.should_run.outputs.pr_number }}'

|

||||

COMMIT_SHA='${{ steps.should_run.outputs.merge_commit_sha }}'

|

||||

PR_TITLE='${{ steps.pr_details.outputs.pr_title }}'

|

||||

PR_AUTHOR='${{ steps.pr_details.outputs.pr_author }}'

|

||||

LABELS='${{ steps.pr_details.outputs.labels }}'

|

||||

|

||||

echo "Processing PR #$PR_NUMBER (reason: ${{ steps.should_run.outputs.reason }})"

|

||||

echo "Found backport labels: $LABELS"

|

||||

|

||||

# Process each backport label

|

||||

for label in $LABELS; do

|

||||

if [[ "$label" =~ ^backport/(.+)$ ]]; then

|

||||

TARGET_BRANCH="${BASH_REMATCH[1]}"

|

||||

echo "Processing backport to branch: $TARGET_BRANCH"

|

||||

|

||||

# Check if target branch exists

|

||||

if ! git ls-remote --heads origin "$TARGET_BRANCH" | grep -q "$TARGET_BRANCH"; then

|

||||

echo "❌ Target branch $TARGET_BRANCH does not exist, skipping"

|

||||

|

||||

# Comment on the original PR about the missing branch

|

||||

gh pr comment $PR_NUMBER --body "⚠️ Cannot backport to \`$TARGET_BRANCH\`: branch does not exist."

|

||||

continue

|

||||

fi

|

||||

|

||||

# Create a unique branch name for the cherry-pick

|

||||

CHERRY_PICK_BRANCH="cherry-pick-${PR_NUMBER}-to-${TARGET_BRANCH}"

|

||||

|

||||

# Check if a cherry-pick PR already exists

|

||||

EXISTING_PR=$(gh pr list --head "$CHERRY_PICK_BRANCH" --json number --jq '.[0].number' 2>/dev/null || echo "")

|

||||

if [ -n "$EXISTING_PR" ]; then

|

||||

echo "⚠️ Cherry-pick PR already exists: #$EXISTING_PR"

|

||||

gh pr comment $PR_NUMBER --body "Cherry-pick to \`$TARGET_BRANCH\` already exists: #$EXISTING_PR"

|

||||

continue

|

||||

fi

|

||||

|

||||

# Fetch and checkout target branch

|

||||

git fetch origin "$TARGET_BRANCH"

|

||||

git checkout -b "$CHERRY_PICK_BRANCH" "origin/$TARGET_BRANCH"

|

||||

|

||||

# Attempt cherry-pick

|

||||

if git cherry-pick "$COMMIT_SHA"; then

|

||||

echo "✅ Cherry-pick successful for $TARGET_BRANCH"

|

||||

|

||||

# Push the cherry-pick branch

|

||||

git push origin "$CHERRY_PICK_BRANCH"

|

||||

|

||||

# Create PR for the cherry-pick

|

||||

CHERRY_PICK_TITLE="$PR_TITLE (cherry-pick #$PR_NUMBER)"

|

||||

CHERRY_PICK_BODY="Cherry-pick of #$PR_NUMBER to \`$TARGET_BRANCH\` branch.

|

||||

|

||||

**Original PR:** #$PR_NUMBER

|

||||

**Original Author:** @$PR_AUTHOR

|

||||

**Cherry-picked commit:** $COMMIT_SHA"

|

||||

|

||||

NEW_PR=$(gh pr create \

|

||||

--title "$CHERRY_PICK_TITLE" \

|

||||

--body "$CHERRY_PICK_BODY" \

|

||||

--base "$TARGET_BRANCH" \

|

||||

--head "$CHERRY_PICK_BRANCH" \

|

||||

--label "cherry-pick")

|

||||

|

||||

echo "✅ Created cherry-pick PR $NEW_PR for $TARGET_BRANCH"

|

||||

|

||||

# Comment on original PR

|

||||

gh pr comment $PR_NUMBER --body "🍒 Cherry-pick to \`$TARGET_BRANCH\` created: $NEW_PR"

|

||||

|

||||

else

|

||||

echo "⚠️ Cherry-pick failed for $TARGET_BRANCH, creating conflict resolution PR"

|

||||

|

||||

# Add conflicted files and commit

|

||||

git add .

|

||||

git commit -m "Cherry-pick #$PR_NUMBER to $TARGET_BRANCH (with conflicts)

|

||||

|

||||

This cherry-pick has conflicts that need manual resolution.

|

||||

|

||||

Original PR: #$PR_NUMBER

|

||||

Original commit: $COMMIT_SHA"

|

||||

|

||||

# Push the branch with conflicts

|

||||

git push origin "$CHERRY_PICK_BRANCH"

|

||||

|

||||

# Create PR with conflict notice

|

||||

CONFLICT_TITLE="$PR_TITLE (backport of #$PR_NUMBER)"

|

||||

CONFLICT_BODY="⚠️ **This cherry-pick has conflicts that require manual resolution.**

|

||||

|

||||

Cherry-pick of #$PR_NUMBER to \`$TARGET_BRANCH\` branch.

|

||||

|

||||

**Original PR:** #$PR_NUMBER

|

||||

**Original Author:** @$PR_AUTHOR

|

||||

**Cherry-picked commit:** $COMMIT_SHA

|

||||

|

||||

**Please resolve the conflicts in this PR before merging.**"

|

||||

|

||||

NEW_PR=$(gh pr create \

|

||||

--title "$CONFLICT_TITLE" \

|

||||

--body "$CONFLICT_BODY" \

|

||||

--base "$TARGET_BRANCH" \

|

||||

--head "$CHERRY_PICK_BRANCH" \

|

||||

--label "cherry-pick")

|

||||

|

||||

echo "⚠️ Created conflict resolution PR $NEW_PR for $TARGET_BRANCH"

|

||||

|

||||

# Comment on original PR

|

||||

gh pr comment $PR_NUMBER --body "⚠️ Cherry-pick to \`$TARGET_BRANCH\` has conflicts: $NEW_PR"

|

||||

fi

|

||||

|

||||

# Clean up - go back to main branch

|

||||

git checkout main

|

||||

git branch -D "$CHERRY_PICK_BRANCH" 2>/dev/null || true

|

||||

fi

|

||||

done

|

||||
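The label handling in the removed composite action above comes down to two steps: match labels of the form `backport/<branch>`, then derive a `cherry-pick-<PR>-to-<branch>` branch name. The Python sketch below restates only that mapping. The function names and the sample label are hypothetical and do not exist in the repository; the action itself does this in bash with `gh` and `git`.

```python
import re

# Mirrors the bash test `[[ "$label" =~ ^backport/(.+)$ ]]` from the action.
BACKPORT_LABEL = re.compile(r"backport/(.+)")


def backport_targets(labels: list[str]) -> list[str]:
    """Return the target branch for every label shaped like backport/<branch>."""
    targets = []
    for label in labels:
        match = BACKPORT_LABEL.fullmatch(label)
        if match:
            targets.append(match.group(1))
    return targets


def cherry_pick_branch(pr_number: int, target_branch: str) -> str:
    """Build the branch name the action pushes the cherry-pick to."""
    return f"cherry-pick-{pr_number}-to-{target_branch}"


if __name__ == "__main__":
    # Hypothetical PR number and labels, for illustration only.
    for target in backport_targets(["bug", "backport/version-x"]):
        print(cherry_pick_branch(1234, target))
```

Labels that do not match, including a bare `backport/`, are ignored, which corresponds to the `non_backport_label` outcome in the shell script.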

@@ -2,28 +2,16 @@

|

||||

|

||||

import os

|

||||

from json import dumps

|

||||

from sys import exit as sysexit

|

||||

from time import time

|

||||

|

||||

from authentik import authentik_version

|

||||

|

||||

|

||||

def must_or_fail(input: str | None, error: str) -> str:

|

||||

if not input:

|

||||

print(f"::error::{error}")

|

||||

sysexit(1)

|

||||

return input

|

||||

|

||||

|

||||

# Decide if we should push the image or not

|

||||

should_push = True

|

||||

if len(os.environ.get("DOCKER_USERNAME", "")) < 1:

|

||||

# Don't push if we don't have DOCKER_USERNAME, i.e. no secrets are available

|

||||

should_push = False

|

||||

if (

|

||||

must_or_fail(os.environ.get("GITHUB_REPOSITORY"), "Repo required").lower()

|

||||

== "goauthentik/authentik-internal"

|

||||

):

|

||||

if os.environ.get("GITHUB_REPOSITORY").lower() == "goauthentik/authentik-internal":

|

||||

# Don't push on the internal repo

|

||||

should_push = False

|

||||

|

||||

@@ -32,16 +20,13 @@ if os.environ.get("GITHUB_HEAD_REF", "") != "":

|

||||

branch_name = os.environ["GITHUB_HEAD_REF"]

|

||||

safe_branch_name = branch_name.replace("refs/heads/", "").replace("/", "-").replace("'", "-")

|

||||

|

||||

image_names = must_or_fail(os.getenv("IMAGE_NAME"), "Image name required").split(",")

|

||||

image_names = os.getenv("IMAGE_NAME").split(",")

|

||||

image_arch = os.getenv("IMAGE_ARCH") or None

|

||||

|

||||

is_pull_request = bool(os.getenv("PR_HEAD_SHA"))

|

||||

is_release = "dev" not in image_names[0]

|

||||

|

||||

sha = must_or_fail(

|

||||

os.environ["GITHUB_SHA"] if not is_pull_request else os.getenv("PR_HEAD_SHA"),

|

||||

"could not determine SHA",

|

||||

)

|

||||

sha = os.environ["GITHUB_SHA"] if not is_pull_request else os.getenv("PR_HEAD_SHA")

|

||||

|

||||

# 2042.1.0 or 2042.1.0-rc1

|

||||

version = authentik_version()

|

||||

@@ -73,7 +58,7 @@ else:

|

||||

image_main_tag = image_tags[0].split(":")[-1]

|

||||

|

||||

|

||||

def get_attest_image_names(image_with_tags: list[str]) -> str:

|

||||

def get_attest_image_names(image_with_tags: list[str]):

|

||||

"""Attestation only for GHCR"""

|

||||

image_tags = []

|

||||

for image_name in set(name.split(":")[0] for name in image_with_tags):

|

||||

@@ -97,6 +82,7 @@ if os.getenv("RELEASE", "false").lower() == "true":

|

||||

image_build_args = [f"VERSION={os.getenv('REF')}"]

|

||||

else:

|

||||

image_build_args = [f"GIT_BUILD_HASH={sha}"]

|

||||

image_build_args = "\n".join(image_build_args)

|

||||

|

||||

with open(os.environ["GITHUB_OUTPUT"], "a+", encoding="utf-8") as _output:

|

||||

print(f"shouldPush={str(should_push).lower()}", file=_output)

|

||||

@@ -109,4 +95,4 @@ with open(os.environ["GITHUB_OUTPUT"], "a+", encoding="utf-8") as _output:

|

||||

print(f"imageMainTag={image_main_tag}", file=_output)

|

||||

print(f"imageMainName={image_tags[0]}", file=_output)

|

||||

print(f"cacheTo={cache_to}", file=_output)

|

||||

print(f"imageBuildArgs={"\n".join(image_build_args)}", file=_output)

|

||||

print(f"imageBuildArgs={image_build_args}", file=_output)

|

||||

|

||||

21

.github/actions/setup/action.yml

vendored

@@ -8,9 +8,6 @@ inputs:

|

||||

postgresql_version:

|

||||

description: "Optional postgresql image tag"

|

||||

default: "16"

|

||||

profiles:

|

||||

description: "Extra profiles of supporting services to start"

|

||||

default: ""

|

||||

|

||||

runs:

|

||||

using: "composite"

|

||||

@@ -58,13 +55,21 @@ runs:

|

||||

shell: bash

|

||||

run: |

|

||||

export PSQL_TAG=${{ inputs.postgresql_version }}

|

||||

export COMPOSE_PROFILES=${{ inputs.profiles }}

|

||||

docker compose -f .github/actions/setup/docker-compose.yml up -d

|

||||

cd web && npm ci

|

||||

- name: Generate config

|

||||

if: ${{ contains(inputs.dependencies, 'python') }}

|

||||

shell: bash

|

||||

env:

|

||||

PROFILES: ${{ inputs.profiles }}

|

||||

shell: uv run python {0}

|

||||

run: |

|

||||

uv run python3 ${{ github.action_path }}/ci_config.py

|

||||

from authentik.lib.generators import generate_id

|

||||

from yaml import safe_dump

|

||||

|

||||

with open("local.env.yml", "w") as _config:

|

||||

safe_dump(

|

||||

{

|

||||

"log_level": "debug",

|

||||

"secret_key": generate_id(),

|

||||

},

|

||||

_config,

|

||||

default_flow_style=False,

|

||||

)

|

||||

|

||||

18

.github/actions/setup/ci_config.py

vendored

@@ -1,18 +0,0 @@

from os import getenv
from typing import Any

from yaml import safe_dump

from authentik.lib.generators import generate_id

config: dict[str, Any] = {
    "log_level": "debug",
    "secret_key": generate_id(),
}

profiles = getenv("PROFILES")
if profiles and "postgres_replica" in profiles:
    config["postgresql"] = {"read_replicas": {"0": {"host": "localhost", "port": 5433}}}

with open("local.env.yml", "w") as _config:
    safe_dump(config, _config, default_flow_style=False)

||||

41

.github/actions/setup/docker-compose.yml

vendored

@@ -1,17 +1,8 @@

|

||||

services:

|

||||

redis:

|

||||

image: docker.io/library/redis:7

|

||||

ports:

|

||||

- 6379:6379

|

||||

restart: always

|

||||

|

||||

postgres:

|

||||

postgresql:

|

||||

image: docker.io/library/postgres:${PSQL_TAG:-16}

|

||||

volumes:

|

||||

- db-data:/var/lib/postgresql/data

|

||||

- ./primary/00-replication.sql:/docker-entrypoint-initdb.d/00-replication.sql

|

||||

- ./primary/01-replication-hba.sh:/docker-entrypoint-initdb.d/01-replication-hba.sh

|

||||

command: postgres -c 'wal_level=replica' -c 'max_wal_senders=10' -c 'max_replication_slots=10' -c 'listen_addresses=*'

|

||||

environment:

|

||||

POSTGRES_USER: authentik

|

||||

POSTGRES_PASSWORD: "EK-5jnKfjrGRm<77"

|

||||

@@ -19,34 +10,12 @@ services:

|

||||

ports:

|

||||

- 5432:5432

|

||||

restart: always

|

||||

healthcheck:

|

||||

test: ["CMD-SHELL", "pg_isready -U $${POSTGRES_USER}"]

|

||||

interval: 5s

|

||||

timeout: 5s

|

||||

retries: 5

|

||||

|

||||

postgres_replica:

|

||||

profiles:

|

||||

- postgres_replica

|

||||

image: docker.io/library/postgres:${PSQL_TAG:-16}

|

||||

environment:

|

||||

POSTGRES_USER: authentik

|

||||

POSTGRES_PASSWORD: "EK-5jnKfjrGRm<77"

|

||||

POSTGRES_DB: authentik

|

||||

redis:

|

||||

image: docker.io/library/redis:7

|

||||

ports:

|

||||

- "5433:5432"

|

||||

volumes:

|

||||

- db-data-replica:/var/lib/postgresql/data

|

||||

- ./replica:/replica

|

||||

command: /replica/start.sh

|

||||

healthcheck:

|

||||

test: ["CMD-SHELL", "pg_isready -U $${POSTGRES_USER}"]

|

||||

interval: 5s

|

||||

timeout: 5s

|

||||

retries: 5

|

||||

- 6379:6379

|

||||

restart: always

|

||||

|

||||

volumes:

|

||||

db-data:

|

||||

driver: local

|

||||

db-data-replica:

|

||||

driver: local

|

||||

|

||||

@@ -1,9 +0,0 @@

-- Create replication role if it doesn't exist
DO $$ BEGIN
    IF NOT EXISTS (SELECT FROM pg_catalog.pg_roles WHERE rolname = 'replica') THEN
        CREATE ROLE replica WITH REPLICATION LOGIN PASSWORD 'EK-5jnKfjrGRm<77';
    END IF;
END $$;

-- Create replication slot if it doesn't exist
SELECT pg_create_physical_replication_slot('replica_slot', true);

@@ -1,3 +0,0 @@

#!/bin/bash
set -euxo pipefail
echo "host replication all all scram-sha-256" >> /var/lib/postgresql/data/pg_hba.conf

9

.github/actions/setup/replica/start.sh

vendored

@@ -1,9 +0,0 @@

#!/bin/bash
set -euxo pipefail
echo 'Waiting for primary to be ready...'
while ! pg_isready -h postgres -p 5432 -U replica; do sleep 1; done;
echo 'Primary is ready, starting replica...'
rm -rf /var/lib/postgresql/data/* 2>/dev/null || true
PGPASSWORD=${POSTGRES_PASSWORD} pg_basebackup -h postgres -U replica -D /var/lib/postgresql/data -Fp -Xs -R -P
echo 'Replication setup complete, starting PostgreSQL...'
docker-entrypoint.sh postgres

2

.github/cherry-pick-bot.yml

vendored

Normal file

@@ -0,0 +1,2 @@

enabled: true
preservePullRequestTitle: true

6

.github/dependabot.yml

vendored

@@ -77,12 +77,6 @@ updates:

|

||||

goauthentik:

|

||||

patterns:

|

||||

- "@goauthentik/*"

|

||||

react:

|

||||

patterns:

|

||||

- "react"

|

||||

- "react-dom"

|

||||

- "@types/react"

|

||||

- "@types/react-dom"

|

||||

- package-ecosystem: npm

|

||||

directory: "/website"

|

||||

schedule:

|

||||

|

||||

@@ -90,7 +90,7 @@ jobs:

|

||||

platforms: linux/${{ inputs.image_arch }}

|

||||

cache-from: type=registry,ref=${{ steps.ev.outputs.attestImageNames }}:buildcache-${{ inputs.image_arch }}

|

||||

cache-to: ${{ steps.ev.outputs.cacheTo }}

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

if: ${{ steps.ev.outputs.shouldPush == 'true' }}

|

||||

with:

|

||||

@@ -21,7 +21,7 @@ on:

|

||||

|

||||

jobs:

|

||||

build-server-amd64:

|

||||

uses: ./.github/workflows/_reusable-docker-build-single.yml

|

||||

uses: ./.github/workflows/_reusable-docker-build-single.yaml

|

||||

secrets: inherit

|

||||

with:

|

||||

image_name: ${{ inputs.image_name }}

|

||||

@@ -31,7 +31,7 @@ jobs:

|

||||

registry_ghcr: ${{ inputs.registry_ghcr }}

|

||||

release: ${{ inputs.release }}

|

||||

build-server-arm64:

|

||||

uses: ./.github/workflows/_reusable-docker-build-single.yml

|

||||

uses: ./.github/workflows/_reusable-docker-build-single.yaml

|

||||

secrets: inherit

|

||||

with:

|

||||

image_name: ${{ inputs.image_name }}

|

||||

@@ -97,7 +97,7 @@ jobs:

|

||||

sources: |

|

||||

${{ steps.ev.outputs.attestImageNames }}@${{ needs.build-server-amd64.outputs.image-digest }}

|

||||

${{ steps.ev.outputs.attestImageNames }}@${{ needs.build-server-arm64.outputs.image-digest }}

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

with:

|

||||

subject-name: ${{ steps.ev.outputs.attestImageNames }}

|

||||

68

.github/workflows/api-py-publish.yml

vendored

Normal file

@@ -0,0 +1,68 @@

|

||||

---

|

||||

name: API - Publish Python client

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [main]

|

||||

paths:

|

||||

- "schema.yml"

|

||||

workflow_dispatch:

|

||||

|

||||

jobs:

|

||||

build:

|

||||

if: ${{ github.repository != 'goauthentik/authentik-internal' }}

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

id-token: write

|

||||

steps:

|

||||

- id: generate_token

|

||||

uses: tibdex/github-app-token@v2

|

||||

with:

|

||||

app_id: ${{ secrets.GH_APP_ID }}

|

||||

private_key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

token: ${{ steps.generate_token.outputs.token }}

|

||||

- name: Install poetry & deps

|

||||

shell: bash

|

||||

run: |

|

||||

pipx install poetry || true

|

||||

sudo apt-get update

|

||||

sudo apt-get install --no-install-recommends -y libpq-dev openssl libxmlsec1-dev pkg-config gettext

|

||||

- name: Setup python and restore poetry

|

||||

uses: actions/setup-python@v5

|

||||

with:

|

||||

python-version-file: "pyproject.toml"

|

||||

- name: Generate API Client

|

||||

run: make gen-client-py

|

||||

- name: Publish package

|

||||

working-directory: gen-py-api/

|

||||

run: |

|

||||

poetry build

|

||||

- name: Publish package to PyPI

|

||||

uses: pypa/gh-action-pypi-publish@release/v1

|

||||

with:

|

||||

packages-dir: gen-py-api/dist/

|

||||

# We can't easily upgrade the API client being used due to poetry being poetry

|

||||

# so we'll have to rely on dependabot

|

||||

# - name: Upgrade /

|

||||

# run: |

|

||||

# export VERSION=$(cd gen-py-api && poetry version -s)

|

||||

# poetry add "authentik_client=$VERSION" --allow-prereleases --lock

|

||||

# - uses: peter-evans/create-pull-request@v6

|

||||

# id: cpr

|

||||

# with:

|

||||

# token: ${{ steps.generate_token.outputs.token }}

|

||||

# branch: update-root-api-client

|

||||

# commit-message: "root: bump API Client version"

|

||||

# title: "root: bump API Client version"

|

||||

# body: "root: bump API Client version"

|

||||

# delete-branch: true

|

||||

# signoff: true

|

||||

# # ID from https://api.github.com/users/authentik-automation[bot]

|

||||

# author: authentik-automation[bot] <135050075+authentik-automation[bot]@users.noreply.github.com>

|

||||

# - uses: peter-evans/enable-pull-request-automerge@v3

|

||||

# with:

|

||||

# token: ${{ steps.generate_token.outputs.token }}

|

||||

# pull-request-number: ${{ steps.cpr.outputs.pull-request-number }}

|

||||

# merge-method: squash

|

||||

2

.github/workflows/api-ts-publish.yml

vendored

@@ -21,7 +21,7 @@ jobs:

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

token: ${{ steps.generate_token.outputs.token }}

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

registry-url: "https://registry.npmjs.org"

|

||||

|

||||

4

.github/workflows/ci-api-docs.yml

vendored

@@ -33,7 +33,7 @@ jobs:

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: website/package.json

|

||||

cache: "npm"

|

||||

@@ -71,7 +71,7 @@ jobs:

|

||||

with:

|

||||

name: api-docs

|

||||

path: website/api/build

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: website/package.json

|

||||

cache: "npm"

|

||||

|

||||

2

.github/workflows/ci-aws-cfn.yml

vendored

@@ -24,7 +24,7 @@ jobs:

|

||||

- uses: actions/checkout@v5

|

||||

- name: Setup authentik env

|

||||

uses: ./.github/actions/setup

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: lifecycle/aws/package.json

|

||||

cache: "npm"

|

||||

|

||||

6

.github/workflows/ci-docs.yml

vendored

@@ -33,7 +33,7 @@ jobs:

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: website/package.json

|

||||

cache: "npm"

|

||||

@@ -49,7 +49,7 @@ jobs:

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: website/package.json

|

||||

cache: "npm"

|

||||

@@ -102,7 +102,7 @@ jobs:

|

||||

context: .

|

||||

cache-from: type=registry,ref=ghcr.io/goauthentik/dev-docs:buildcache

|

||||

cache-to: ${{ steps.ev.outputs.shouldPush == 'true' && 'type=registry,ref=ghcr.io/goauthentik/dev-docs:buildcache,mode=max' || '' }}

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

if: ${{ steps.ev.outputs.shouldPush == 'true' }}

|

||||

with:

|

||||

|

||||

11

.github/workflows/ci-main.yml

vendored

@@ -34,7 +34,6 @@ jobs:

|

||||

- codespell

|

||||

- pending-migrations

|

||||

- ruff

|

||||

- mypy

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

@@ -67,6 +66,7 @@ jobs:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

psql:

|

||||

- 15-alpine

|

||||

- 16-alpine

|

||||

- 17-alpine

|

||||

run_id: [1, 2, 3, 4, 5]

|

||||

@@ -113,7 +113,7 @@ jobs:

|

||||

run: |

|

||||

uv run make ci-test

|

||||

test-unittest:

|

||||

name: test-unittest - PostgreSQL ${{ matrix.psql }} (${{ matrix.profiles }}) - Run ${{ matrix.run_id }}/5

|

||||

name: test-unittest - PostgreSQL ${{ matrix.psql }} - Run ${{ matrix.run_id }}/5

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 20

|

||||

needs: test-make-seed

|

||||

@@ -121,11 +121,9 @@ jobs:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

psql:

|

||||

- 15-alpine

|

||||

- 16-alpine

|

||||

- 17-alpine

|

||||

profiles:

|

||||

- ""

|

||||

- postgres_replica

|

||||

run_id: [1, 2, 3, 4, 5]

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

@@ -133,7 +131,6 @@ jobs:

|

||||

uses: ./.github/actions/setup

|

||||

with:

|

||||

postgresql_version: ${{ matrix.psql }}

|

||||

profiles: ${{ matrix.profiles }}

|

||||

- name: run unittest

|

||||

env:

|

||||

CI_TEST_SEED: ${{ needs.test-make-seed.outputs.seed }}

|

||||

@@ -259,7 +256,7 @@ jobs:

|

||||

# Needed for checkout

|

||||

contents: read

|

||||

needs: ci-core-mark

|

||||

uses: ./.github/workflows/_reusable-docker-build.yml

|

||||

uses: ./.github/workflows/_reusable-docker-build.yaml

|

||||

secrets: inherit

|

||||

with:

|

||||

image_name: ${{ github.repository == 'goauthentik/authentik-internal' && 'ghcr.io/goauthentik/internal-server' || 'ghcr.io/goauthentik/dev-server' }}

|

||||

|

||||

10

.github/workflows/ci-outpost.yml

vendored

@@ -17,7 +17,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-go@v6

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- name: Prepare and generate API

|

||||

@@ -38,7 +38,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-go@v6

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- name: Setup authentik env

|

||||

@@ -115,7 +115,7 @@ jobs:

|

||||

context: .

|

||||

cache-from: type=registry,ref=ghcr.io/goauthentik/dev-${{ matrix.type }}:buildcache

|

||||

cache-to: ${{ steps.ev.outputs.shouldPush == 'true' && format('type=registry,ref=ghcr.io/goauthentik/dev-{0}:buildcache,mode=max', matrix.type) || '' }}

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

if: ${{ steps.ev.outputs.shouldPush == 'true' }}

|

||||

with:

|

||||

@@ -141,10 +141,10 @@ jobs:

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.sha }}

|

||||

- uses: actions/setup-go@v6

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

|

||||

6

.github/workflows/ci-web.yml

vendored

@@ -32,7 +32,7 @@ jobs:

|

||||

project: web

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: ${{ matrix.project }}/package.json

|

||||

cache: "npm"

|

||||

@@ -49,7 +49,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

@@ -77,7 +77,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

|

||||

36

.github/workflows/gh-cherry-pick.yml

vendored

@@ -1,36 +0,0 @@

|

||||

name: GH - Cherry-pick

|

||||

|

||||

on:

|

||||

pull_request_target:

|

||||

types: [closed, labeled]

|

||||

|

||||

jobs:

|

||||

cherry-pick:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- id: app-token

|

||||

name: Generate app token

|

||||

uses: actions/create-github-app-token@v2

|

||||

if: ${{ env.GH_APP_ID != '' }}

|

||||

with:

|

||||

app-id: ${{ secrets.GH_APP_ID }}

|

||||

private-key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

env:

|

||||

GH_APP_ID: ${{ secrets.GH_APP_ID }}

|

||||

- uses: actions/checkout@v5

|

||||

if: ${{ steps.app-token.outcome != 'skipped' }}

|

||||

with:

|

||||

fetch-depth: 0

|

||||

token: "${{ steps.app-token.outputs.token }}"

|

||||

- id: get-user-id

|

||||

if: ${{ steps.app-token.outcome != 'skipped' }}

|

||||

name: Get GitHub app user ID

|

||||

run: echo "user-id=$(gh api "/users/${{ steps.app-token.outputs.app-slug }}[bot]" --jq .id)" >> "$GITHUB_OUTPUT"

|

||||

env:

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

- uses: ./.github/actions/cherry-pick

|

||||

if: ${{ steps.app-token.outcome != 'skipped' }}

|

||||

with:

|

||||

token: ${{ steps.app-token.outputs.token }}

|

||||

git_user: ${{ steps.app-token.outputs.app-slug }}[bot]

|

||||

git_user_email: '${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com'

|

||||

4

.github/workflows/packages-npm-publish.yml

vendored

@@ -29,13 +29,13 @@ jobs:

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

fetch-depth: 2

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: ${{ matrix.package }}/package.json

|

||||

registry-url: "https://registry.npmjs.org"

|

||||

- name: Get changed files

|

||||

id: changed-files

|

||||

uses: tj-actions/changed-files@24d32ffd492484c1d75e0c0b894501ddb9d30d62

|

||||

uses: tj-actions/changed-files@ed68ef82c095e0d48ec87eccea555d944a631a4c

|

||||

with:

|

||||

files: |

|

||||

${{ matrix.package }}/package.json

|

||||

|

||||

3

.github/workflows/release-branch-off.yml

vendored

@@ -43,13 +43,10 @@ jobs:

|

||||

with:

|

||||

dependencies: python

|

||||

- name: Create version branch

|

||||

env:

|

||||

GH_TOKEN: "${{ steps.app-token.outputs.token }}"

|

||||

run: |

|

||||

current_major_version="$(uv version --short | grep -oE "^[0-9]{4}\.[0-9]{1,2}")"

|

||||

git checkout -b "version-${current_major_version}"

|

||||

git push origin "version-${current_major_version}"

|

||||

gh label create "backport/version-${current_major_version}" --description "Add this label to PRs to backport changes to version-${current_major_version}" --color "fbca04"

|

||||

bump-version-pr:

|

||||

name: Open version bump PR

|

||||

needs:

|

||||

|

||||

14

.github/workflows/release-publish.yml

vendored

@@ -7,7 +7,7 @@ on:

|

||||

|

||||

jobs:

|

||||

build-server:

|

||||

uses: ./.github/workflows/_reusable-docker-build.yml

|

||||

uses: ./.github/workflows/_reusable-docker-build.yaml

|

||||

secrets: inherit

|

||||

permissions:

|

||||

contents: read

|

||||

@@ -58,7 +58,7 @@ jobs:

|

||||

push: true

|

||||

platforms: linux/amd64,linux/arm64

|

||||

context: .

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

if: true

|

||||

with:

|

||||

@@ -84,7 +84,7 @@ jobs:

|

||||

- rac

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-go@v6

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- name: Set up QEMU

|

||||

@@ -124,7 +124,7 @@ jobs:

|

||||

file: ${{ matrix.type }}.Dockerfile

|

||||

platforms: linux/amd64,linux/arm64

|

||||

context: .

|

||||

- uses: actions/attest-build-provenance@v3

|

||||

- uses: actions/attest-build-provenance@v2

|

||||

id: attest

|

||||

with:

|

||||

subject-name: ${{ steps.ev.outputs.attestImageNames }}

|

||||

@@ -147,10 +147,10 @@ jobs:

|

||||

goarch: [amd64, arm64]

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-go@v6

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version-file: "go.mod"

|

||||

- uses: actions/setup-node@v5

|

||||

- uses: actions/setup-node@v4

|

||||

with:

|

||||

node-version-file: web/package.json

|

||||

cache: "npm"

|

||||

@@ -187,7 +187,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: aws-actions/configure-aws-credentials@v5

|

||||

- uses: aws-actions/configure-aws-credentials@v4

|

||||

with:

|

||||

role-to-assume: "arn:aws:iam::016170277896:role/github_goauthentik_authentik"

|

||||

aws-region: ${{ env.AWS_REGION }}

|

||||

|

||||

2

.github/workflows/repo-stale.yml

vendored

@@ -20,7 +20,7 @@ jobs:

|

||||

with:

|

||||

app_id: ${{ secrets.GH_APP_ID }}

|

||||

private_key: ${{ secrets.GH_APP_PRIVATE_KEY }}

|

||||

- uses: actions/stale@v10

|

||||

- uses: actions/stale@v9

|

||||

with:

|

||||

repo-token: ${{ steps.generate_token.outputs.token }}

|

||||

days-before-stale: 60

|

||||

|

||||

12

.vscode/settings.json

vendored

@@ -1,16 +1,4 @@

|

||||

{

|

||||

"[css]": {

|

||||

"editor.minimap.markSectionHeaderRegex": "#\\bregion\\s*(?<separator>-?)\\s*(?<label>.*)\\*/$"

|

||||

},

|

||||

"[makefile]": {

|

||||

"editor.minimap.markSectionHeaderRegex": "^#{25}\n##\\s\\s*(?<separator>-?)\\s*(?<label>[^\n]*)\n#{25}$"

|

||||

},

|

||||

"[dockerfile]": {

|

||||

"editor.minimap.markSectionHeaderRegex": "\\bStage\\s*\\d:(?<separator>-?)\\s*(?<label>.*)$"

|

||||

},

|

||||

"[jsonc]": {

|

||||

"editor.minimap.markSectionHeaderRegex": "#\\bregion\\s*(?<separator>-?)\\s*(?<label>.*)$"

|

||||

},

|

||||

"todo-tree.tree.showCountsInTree": true,

|

||||

"todo-tree.tree.showBadges": true,

|

||||

"yaml.customTags": [

|

||||

|

||||

@@ -1,4 +0,0 @@

|

||||

# Contributing to authentik

|

||||

|

||||

Thanks for your interest in contributing! Please see our [contributing guide](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github) for more information.

|

||||

|

||||

1

CONTRIBUTING.md

Symbolic link

@@ -0,0 +1 @@

|

||||

website/docs/developer-docs/index.md

|

||||

@@ -76,9 +76,9 @@ RUN --mount=type=secret,id=GEOIPUPDATE_ACCOUNT_ID \

|

||||

/bin/sh -c "GEOIPUPDATE_LICENSE_KEY_FILE=/run/secrets/GEOIPUPDATE_LICENSE_KEY /usr/bin/entry.sh || echo 'Failed to get GeoIP database, disabling'; exit 0"

|

||||

|

||||

# Stage 4: Download uv

|

||||

FROM ghcr.io/astral-sh/uv:0.8.22 AS uv

|

||||

FROM ghcr.io/astral-sh/uv:0.8.13 AS uv

|

||||

# Stage 5: Base python image

|

||||

FROM ghcr.io/goauthentik/fips-python:3.13.7-slim-trixie-fips AS python-base

|

||||

FROM ghcr.io/goauthentik/fips-python:3.13.7-slim-bookworm-fips AS python-base

|

||||

|

||||

ENV VENV_PATH="/ak-root/.venv" \

|

||||

PATH="/lifecycle:/ak-root/.venv/bin:$PATH" \

|

||||

|

||||

74

Makefile

@@ -18,24 +18,7 @@ pg_host := $(shell uv run python -m authentik.lib.config postgresql.host 2>/dev/

|

||||

pg_name := $(shell uv run python -m authentik.lib.config postgresql.name 2>/dev/null)

|

||||

redis_db := $(shell uv run python -m authentik.lib.config redis.db 2>/dev/null)

|

||||

|

||||

UNAME := $(shell uname)

|

||||

|

||||

# For macOS users, add the libxml2 installed from brew libxmlsec1 to the build path

|

||||

# to prevent SAML-related tests from failing and ensure correct pip dependency compilation

|

||||

ifeq ($(UNAME), Darwin)

|

||||

# Only add for brew users who installed libxmlsec1

|

||||

BREW_EXISTS := $(shell command -v brew 2> /dev/null)

|

||||

ifdef BREW_EXISTS

|

||||

LIBXML2_EXISTS := $(shell brew list libxml2 2> /dev/null)

|

||||

ifdef LIBXML2_EXISTS

|

||||

BREW_LDFLAGS := -L$(shell brew --prefix libxml2)/lib $(LDFLAGS)

|

||||

BREW_CPPFLAGS := -I$(shell brew --prefix libxml2)/include $(CPPFLAGS)

|

||||

BREW_PKG_CONFIG_PATH := $(shell brew --prefix libxml2)/lib/pkgconfig:$(PKG_CONFIG_PATH)

|

||||

endif

|

||||

endif

|

||||

endif

|

||||

|

||||

all: lint-fix lint gen web test ## Lint, build, and test everything

|

||||

all: lint-fix lint test gen web ## Lint, build, and test everything

|

||||

|

||||

HELP_WIDTH := $(shell grep -h '^[a-z][^ ]*:.*\#\#' $(MAKEFILE_LIST) 2>/dev/null | \

|

||||

cut -d':' -f1 | awk '{printf "%d\n", length}' | sort -rn | head -1)

|

||||

@@ -67,14 +50,7 @@ lint: ## Lint the python and golang sources

|

||||

golangci-lint run -v

|

||||

|

||||

core-install:

|

||||

ifdef LIBXML2_EXISTS

|

||||

# Clear cache to ensure fresh compilation

|

||||

uv cache clean

|

||||

# Force compilation from source for lxml and xmlsec with correct environment

|

||||

LDFLAGS="$(BREW_LDFLAGS)" CPPFLAGS="$(BREW_CPPFLAGS)" PKG_CONFIG_PATH="$(BREW_PKG_CONFIG_PATH)" uv sync --frozen --reinstall-package lxml --reinstall-package xmlsec --no-binary-package lxml --no-binary-package xmlsec

|

||||

else

|

||||

uv sync --frozen

|

||||

endif

|

||||

|

||||

migrate: ## Run the Authentik Django server's migrations

|

||||

uv run python -m lifecycle.migrate

|

||||

@@ -184,7 +160,7 @@ gen-client-ts: gen-clean-ts ## Build and install the authentik API for Typescri

|

||||

docker run \

|

||||

--rm -v ${PWD}:/local \

|

||||

--user ${UID}:${GID} \

|

||||

docker.io/openapitools/openapi-generator-cli:v7.15.0 generate \

|

||||

docker.io/openapitools/openapi-generator-cli:v7.11.0 generate \

|

||||

-i /local/schema.yml \

|

||||

-g typescript-fetch \

|

||||

-o /local/${GEN_API_TS} \

|

||||

@@ -193,7 +169,6 @@ gen-client-ts: gen-clean-ts ## Build and install the authentik API for Typescri

|

||||

--git-repo-id authentik \

|

||||

--git-user-id goauthentik

|

||||

|

||||

cd ${PWD}/${GEN_API_TS} && npm i

|

||||

cd ${PWD}/${GEN_API_TS} && npm link

|

||||

cd ${PWD}/web && npm link @goauthentik/api

|

||||

|

||||

@@ -201,7 +176,7 @@ gen-client-py: gen-clean-py ## Build and install the authentik API for Python

|

||||

docker run \

|

||||

--rm -v ${PWD}:/local \

|

||||

--user ${UID}:${GID} \

|

||||

docker.io/openapitools/openapi-generator-cli:v7.15.0 generate \

|

||||

docker.io/openapitools/openapi-generator-cli:v7.11.0 generate \

|

||||

-i /local/schema.yml \

|

||||

-g python \

|

||||

-o /local/${GEN_API_PY} \

|

||||

@@ -239,30 +214,34 @@ node-install: ## Install the necessary libraries to build Node.js packages

|

||||

#########################

|

||||

|

||||

web-build: node-install ## Build the Authentik UI

|

||||

npm run --prefix web build

|

||||

cd web && npm run build

|

||||

|

||||

web: web-lint-fix web-lint web-check-compile ## Automatically fix formatting issues in the Authentik UI source code, lint the code, and compile it

|

||||

|

||||

web-test: ## Run tests for the Authentik UI

|

||||

npm run --prefix web test

|

||||

cd web && npm run test

|

||||

|

||||

web-watch: ## Build and watch the Authentik UI for changes, updating automatically

|

||||

npm run --prefix web watch

|

||||

rm -rf web/dist/

|

||||

mkdir web/dist/

|

||||

touch web/dist/.gitkeep

|

||||

cd web && npm run watch

|

||||

|

||||

web-storybook-watch: ## Build and run the storybook documentation server

|

||||

npm run --prefix web storybook

|

||||

cd web && npm run storybook

|

||||

|

||||

web-lint-fix:

|

||||

npm run --prefix web prettier

|

||||

cd web && npm run prettier

|

||||

|

||||

web-lint:

|

||||

npm run --prefix web lint

|

||||

npm run --prefix web lit-analyse

|

||||

cd web && npm run lint

|

||||

cd web && npm run lit-analyse

|

||||

|

||||

web-check-compile:

|

||||

npm run --prefix web tsc

|

||||

cd web && npm run tsc

|

||||

|

||||

web-i18n-extract:

|

||||

npm run --prefix web extract-locales

|

||||

cd web && npm run extract-locales

|

||||

|

||||

#########################

|

||||

## Docs

|

||||

@@ -274,31 +253,31 @@ docs-install:

|

||||

npm ci --prefix website

|

||||

|

||||

docs-lint-fix: lint-codespell

|

||||

npm run --prefix website prettier

|

||||

npm run prettier --prefix website

|

||||

|

||||

docs-build:

|

||||

npm run --prefix website build

|

||||

npm run build --prefix website

|

||||

|

||||

docs-watch: ## Build and watch the topics documentation

|

||||

npm run --prefix website start

|

||||

npm run start --prefix website

|

||||

|

||||

integrations: docs-lint-fix integrations-build ## Fix formatting issues in the integrations source code, lint the code, and compile it

|

||||

|

||||

integrations-build:

|

||||

npm run --prefix website -w integrations build

|

||||

npm run build --prefix website -w integrations

|

||||

|

||||

integrations-watch: ## Build and watch the Integrations documentation

|

||||

npm run --prefix website -w integrations start

|

||||

npm run start --prefix website -w integrations

|

||||

|

||||

docs-api-build:

|

||||

npm run --prefix website -w api build

|

||||

npm run build --prefix website -w api

|

||||

|

||||

docs-api-watch: ## Build and watch the API documentation

|

||||

npm run --prefix website -w api build:api

|

||||

npm run --prefix website -w api start

|

||||

npm run build:api --prefix website -w api

|

||||

npm run start --prefix website -w api

|

||||

|

||||

docs-api-clean: ## Clean generated API documentation

|

||||

npm run --prefix website -w api build:api:clean

|

||||

npm run build:api:clean --prefix website -w api

|

||||

|

||||

#########################

|

||||

## Docker

|

||||

@@ -321,9 +300,6 @@ ci--meta-debug:

|

||||

python -V

|

||||

node --version

|

||||

|

||||

ci-mypy: ci--meta-debug

|

||||

uv run mypy --strict $(PY_SOURCES)

|

||||

|

||||

ci-black: ci--meta-debug

|

||||

uv run black --check $(PY_SOURCES)

|

||||

|

||||

|

||||

28

README.md

@@ -9,21 +9,21 @@

|

||||

[](https://github.com/goauthentik/authentik/actions/workflows/ci-outpost.yml)

|

||||

[](https://github.com/goauthentik/authentik/actions/workflows/ci-web.yml)

|

||||

[](https://codecov.io/gh/goauthentik/authentik)

|

||||

|

||||

|

||||

[](https://www.transifex.com/authentik/authentik/)

|

||||

|

||||

## What is authentik?

|

||||

|

||||

authentik is an open-source Identity Provider (IdP) for modern SSO. It supports SAML, OAuth2/OIDC, LDAP, RADIUS, and more, designed for self-hosting from small labs to large production clusters.

|

||||

authentik is an open-source Identity Provider that emphasizes flexibility and versatility, with support for a wide set of protocols.

|

||||

|

||||

Our [enterprise offering](https://goauthentik.io/pricing) is available for organizations to securely replace existing IdPs such as Okta, Auth0, Entra ID, and Ping Identity for robust, large-scale identity management.

|

||||

Our [enterprise offer](https://goauthentik.io/pricing) can also be used as a self-hosted replacement for large-scale deployments of Okta/Auth0, Entra ID, Ping Identity, or other legacy IdPs for employees and B2B2C use.

|

||||

|

||||

## Installation

|

||||

|

||||

- Docker Compose: recommended for small/test setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/docker-compose/).

|

||||

- Kubernetes (Helm Chart): recommended for larger setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/kubernetes/) and the Helm chart [repository](https://github.com/goauthentik/helm).

|

||||

- AWS CloudFormation: deploy on AWS using our official templates. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/aws/).

|

||||

- DigitalOcean Marketplace: one-click deployment via the official Marketplace app. See the [app listing](https://marketplace.digitalocean.com/apps/authentik).

|

||||

For small/test setups it is recommended to use Docker Compose; refer to the [documentation](https://goauthentik.io/docs/installation/docker-compose/?utm_source=github).

|

||||

|

||||

For bigger setups, there is a Helm Chart [here](https://github.com/goauthentik/helm). This is documented [here](https://goauthentik.io/docs/installation/kubernetes/?utm_source=github).

|

||||

|

||||

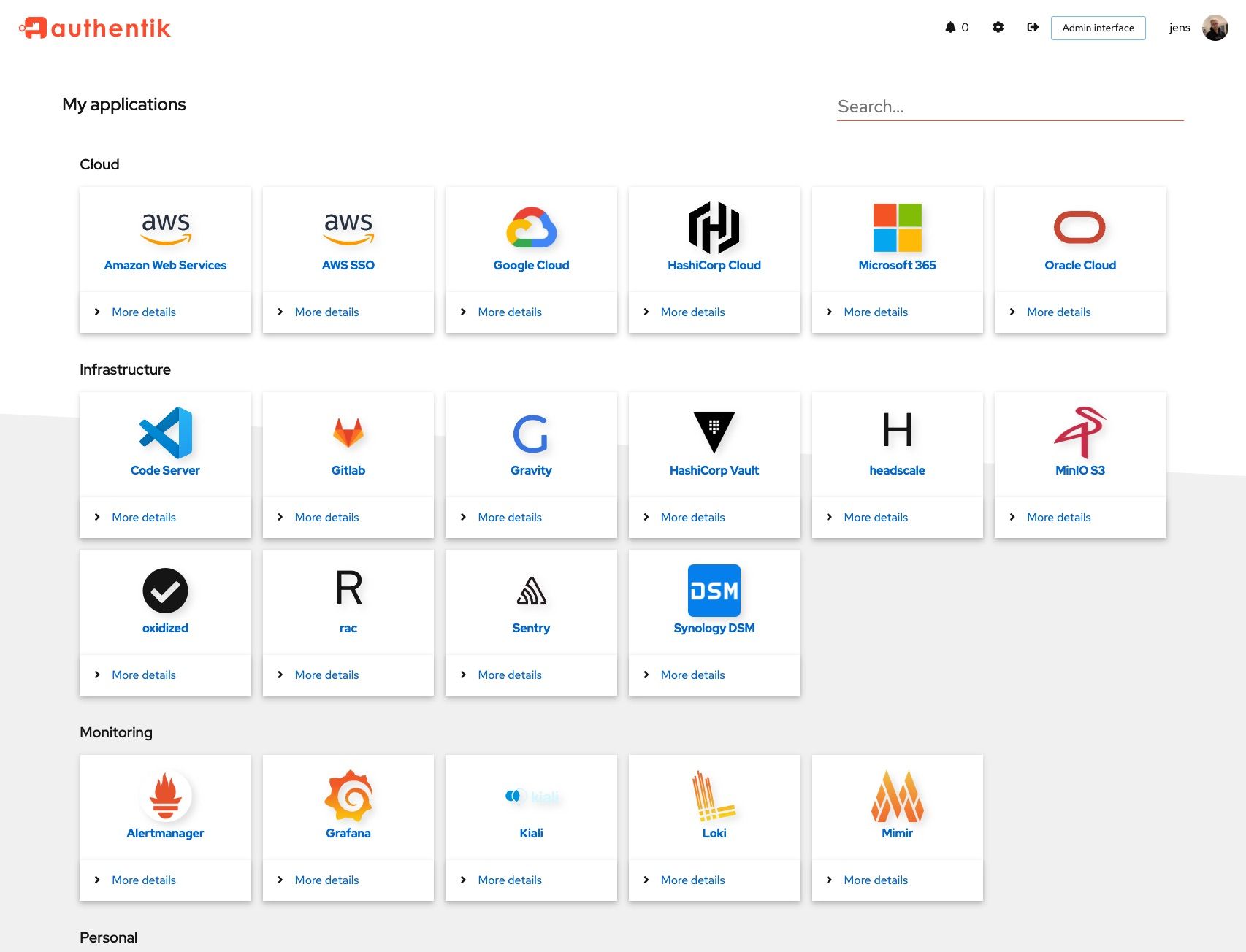

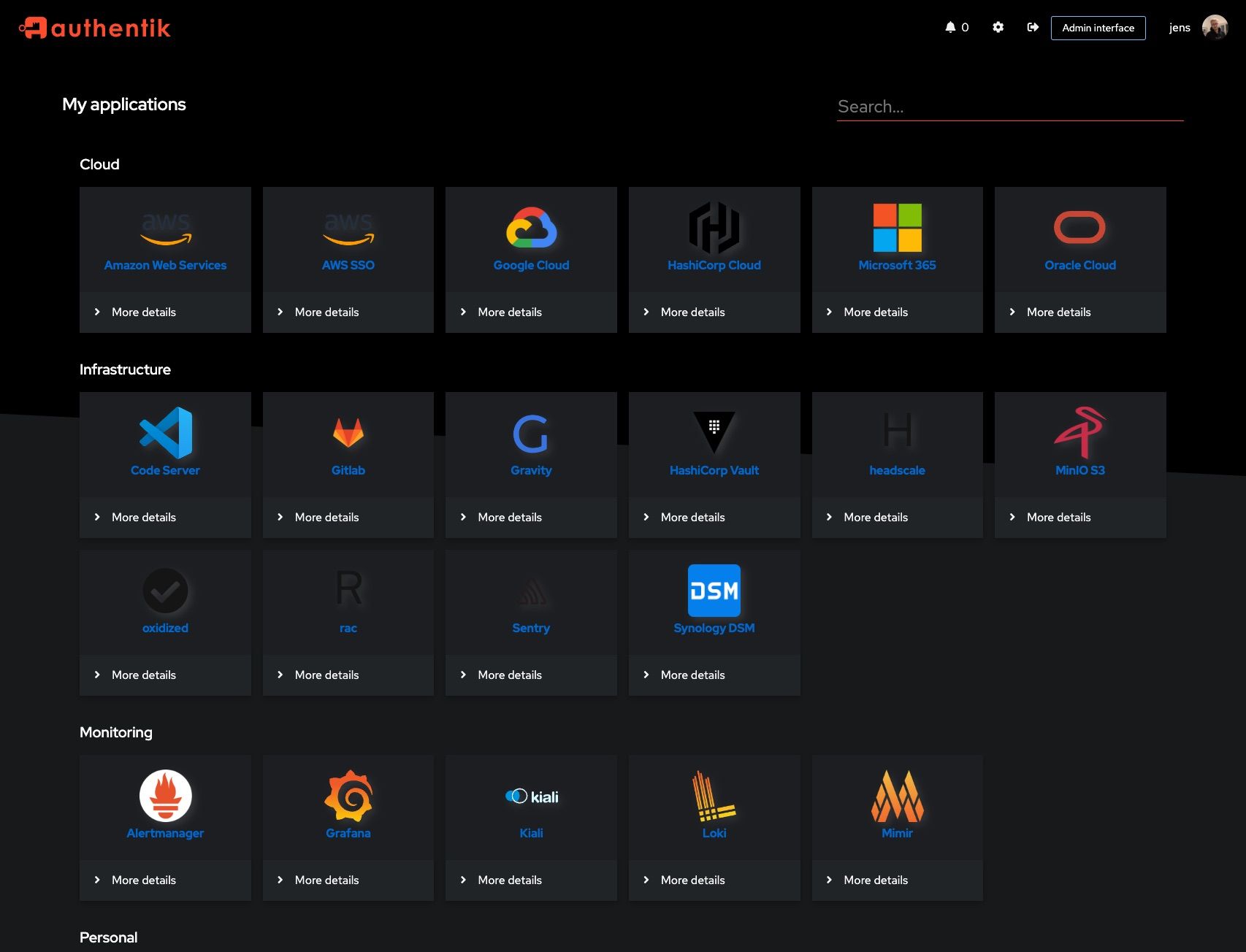

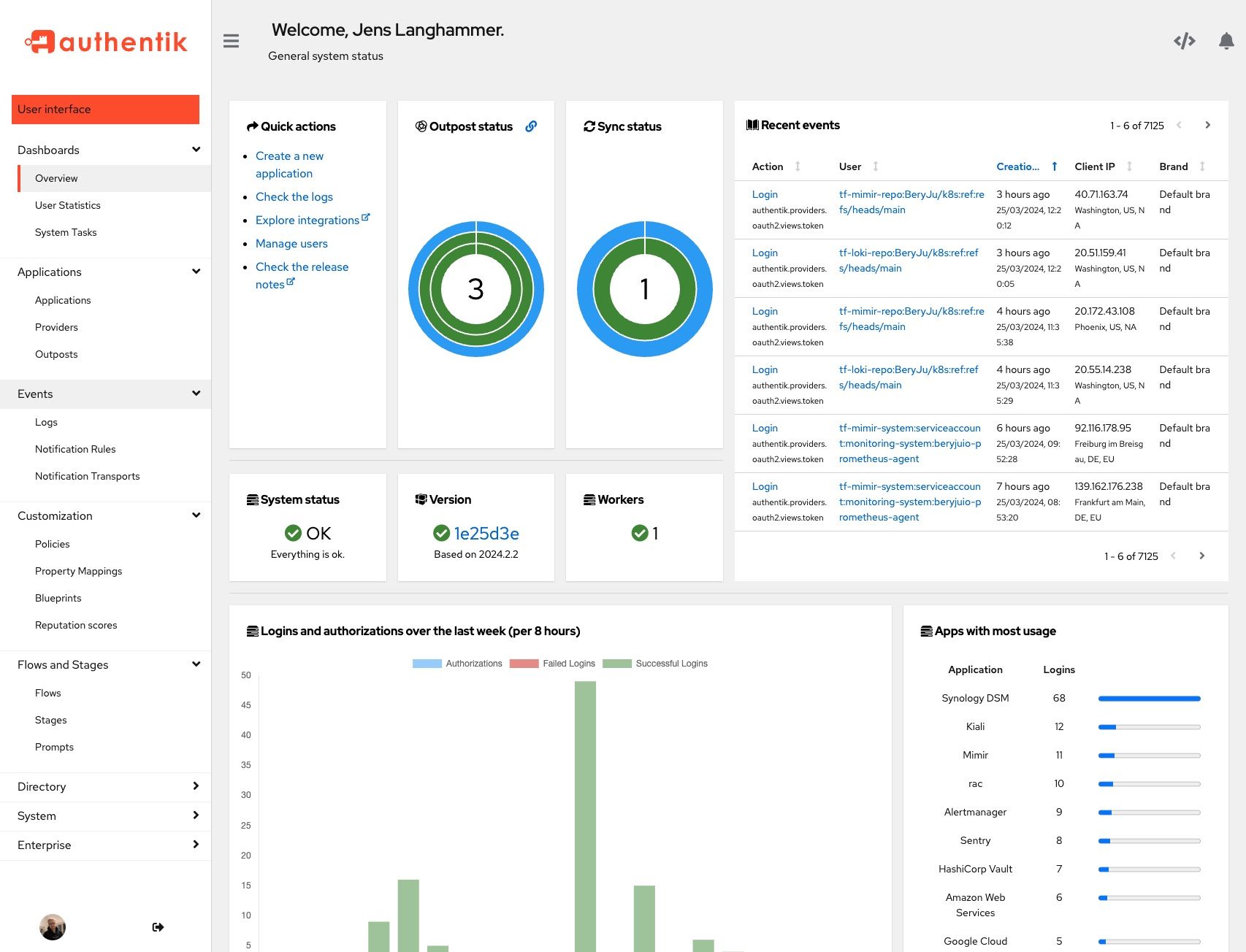

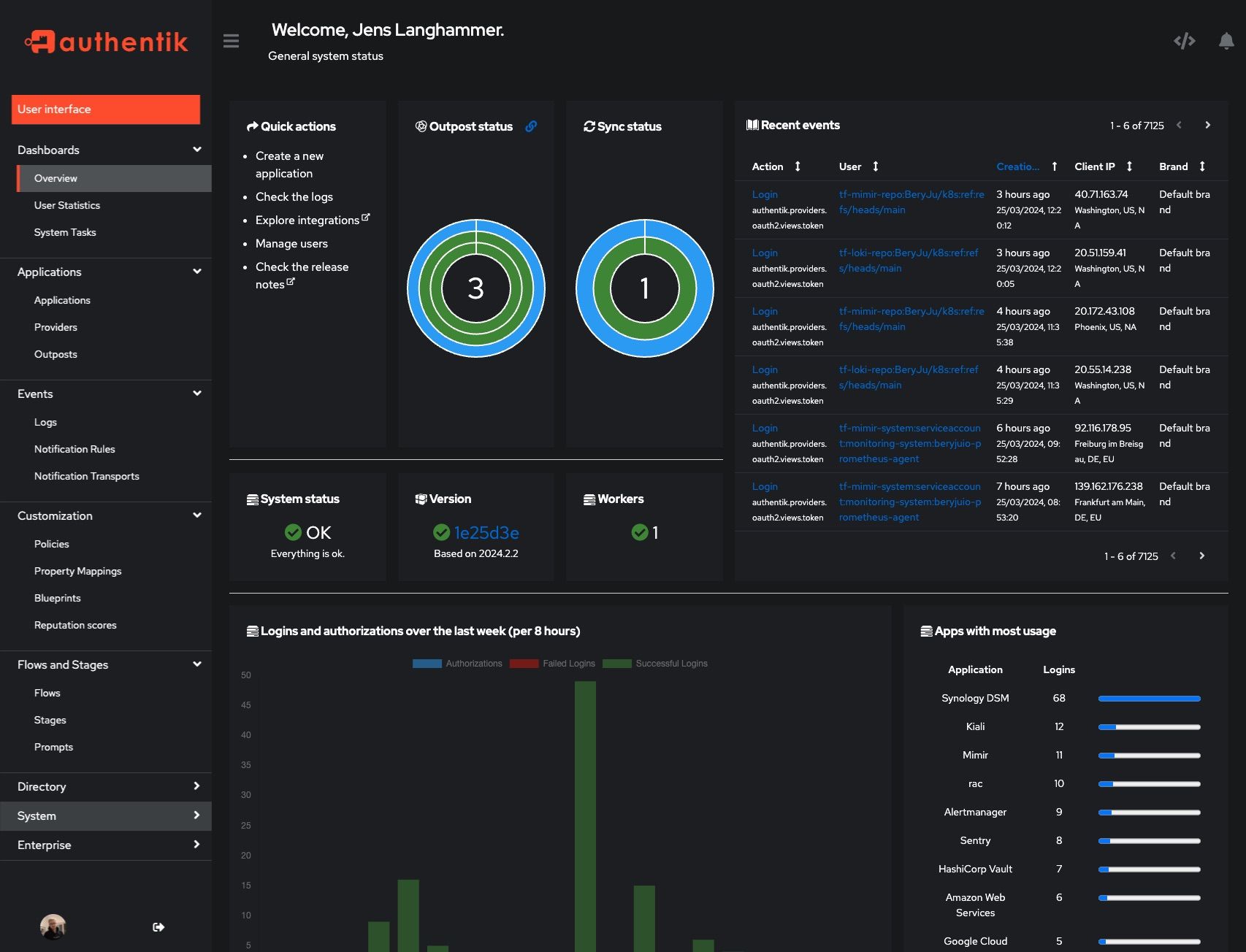

## Screenshots

|

||||

|

||||

@@ -32,20 +32,14 @@ Our [enterprise offering](https://goauthentik.io/pricing) is available for organ

|

||||

|  |  |

|

||||

|  |  |

|

||||

|

||||

## Development and contributions

|

||||

## Development

|

||||

|

||||

See the [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/) for information about setting up local build environments, testing your contributions, and our contribution process.

|

||||

See [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github)

|

||||

|

||||

## Security

|

||||

|

||||

Please see [SECURITY.md](SECURITY.md).

|

||||

See [SECURITY.md](SECURITY.md)

|

||||

|

||||

## Adoption

|

||||

## Adoption and Contributions

|

||||

|

||||

Using authentik? We'd love to hear your story and feature your logo. Email us at [hello@goauthentik.io](mailto:hello@goauthentik.io) or open a GitHub Issue/PR!

|

||||

|

||||

## License

|

||||

|

||||

[](LICENSE)

|

||||

[](website/LICENSE)

|

||||

[](authentik/enterprise/LICENSE)

|

||||

Your organization uses authentik? We'd love to add your logo to the readme and our website! Email us @ hello@goauthentik.io or open a GitHub Issue/PR! For more information on how to contribute to authentik, please refer to our [contribution guide](https://docs.goauthentik.io/docs/developer-docs?utm_source=github).

|

||||

|

||||

@@ -104,68 +104,6 @@ def postprocess_schema_responses(result, generator: SchemaGenerator, **kwargs):

|

||||

return result

|

||||

|

||||

|

||||

def postprocess_schema_pagination(result, generator: SchemaGenerator, **kwargs):

|

||||

to_replace = {

|

||||

"ordering": create_component(

|

||||

generator,

|

||||

"QueryPaginationOrdering",

|

||||

{

|

||||

"name": "ordering",

|

||||

"required": False,

|

||||

"in": "query",

|

||||

"description": "Which field to use when ordering the results.",

|

||||

"schema": {"type": "string"},

|

||||

},

|

||||

ResolvedComponent.PARAMETER,

|

||||

),

|

||||

"page": create_component(

|

||||

generator,

|

||||

"QueryPaginationPage",

|

||||

{

|

||||

"name": "page",

|

||||

"required": False,

|

||||

"in": "query",

|

||||

"description": "A page number within the paginated result set.",

|

||||

"schema": {"type": "integer"},

|

||||

},

|

||||

ResolvedComponent.PARAMETER,

|

||||

),

|

||||

"page_size": create_component(

|

||||

generator,

|

||||

"QueryPaginationPageSize",

|

||||

{

|

||||

"name": "page_size",

|

||||

"required": False,

|

||||

"in": "query",

|

||||

"description": "Number of results to return per page.",

|

||||

"schema": {"type": "integer"},

|

||||

},

|

||||

ResolvedComponent.PARAMETER,

|

||||

),

|

||||

"search": create_component(

|

||||

generator,

|

||||

"QuerySearch",

|

||||

{

|

||||

"name": "search",

|

||||

"required": False,

|

||||

"in": "query",

|

||||

"description": "A search term.",

|

||||

"schema": {"type": "string"},

|

||||

},

|

||||

ResolvedComponent.PARAMETER,

|

||||

),

|

||||

}

|

||||

for path in result["paths"].values():

|

||||

for method in path.values():

|

||||

# print(method["parameters"])

|

||||

for idx, param in enumerate(method.get("parameters", [])):

|

||||

for replace_name, replace_ref in to_replace.items():

|

||||

if param["name"] == replace_name:

|

||||

method["parameters"][idx] = replace_ref.ref

|

||||

# print(method["parameters"])

|

||||

return result

|

||||

|

||||

|

||||

def preprocess_schema_exclude_non_api(endpoints, **kwargs):

|

||||

"""Filter out all API Views which are not mounted under /api"""

|

||||

return [

|

||||

|

||||

@@ -76,7 +76,6 @@ from authentik.providers.scim.models import SCIMProviderGroup, SCIMProviderUser

|

||||

from authentik.rbac.models import Role

|

||||

from authentik.sources.scim.models import SCIMSourceGroup, SCIMSourceUser

|

||||

from authentik.stages.authenticator_webauthn.models import WebAuthnDeviceType

|

||||

from authentik.stages.consent.models import UserConsent

|

||||

from authentik.tasks.models import Task

|

||||

from authentik.tenants.models import Tenant

|

||||

|

||||

@@ -136,7 +135,6 @@ def excluded_models() -> list[type[Model]]:

|

||||

EndpointDeviceConnection,

|

||||

DeviceToken,

|

||||

StreamEvent,

|

||||

UserConsent,

|

||||

)

|

||||

|

||||

|

||||

|

||||

@@ -38,7 +38,6 @@ from authentik.blueprints.v1.oci import OCI_PREFIX

|

||||

from authentik.events.logs import capture_logs

|

||||

from authentik.events.utils import sanitize_dict

|

||||

from authentik.lib.config import CONFIG

|

||||

from authentik.tasks.apps import PRIORITY_HIGH

|

||||

from authentik.tasks.models import Task

|

||||

from authentik.tasks.schedules.models import Schedule

|

||||

from authentik.tenants.models import Tenant

|

||||

@@ -112,7 +111,6 @@ class BlueprintEventHandler(FileSystemEventHandler):

|

||||

@actor(

|

||||

description=_("Find blueprints as `blueprints_find` does, but return a safe dict."),

|

||||

throws=(DatabaseError, ProgrammingError, InternalError),

|

||||

priority=PRIORITY_HIGH,

|

||||

)

|

||||

def blueprints_find_dict():

|

||||

blueprints = []

|

||||

|

||||

@@ -113,7 +113,7 @@ class Brand(SerializerModel):

|

||||

try:

|

||||

return self.attributes.get("settings", {}).get("locale", "")

|

||||

|

||||

except Exception as exc: # noqa

|

||||

except Exception as exc:

|

||||

LOGGER.warning("Failed to get default locale", exc=exc)

|

||||

return ""

|

||||

|

||||

|

||||

@@ -295,7 +295,7 @@ class GroupViewSet(UsedByMixin, ModelViewSet):

|

||||

@extend_schema(

|

||||

request=UserAccountSerializer,

|

||||

responses={

|

||||

204: OpenApiResponse(description="User removed"),

|

||||

204: OpenApiResponse(description="User added"),

|

||||

404: OpenApiResponse(description="User not found"),

|

||||

},

|

||||

)

|

||||

@@ -307,7 +307,7 @@ class GroupViewSet(UsedByMixin, ModelViewSet):

|

||||

permission_classes=[],

|

||||

)

|

||||

def remove_user(self, request: Request, pk: str) -> Response:

|

||||

"""Remove user from group"""

|

||||

"""Add user to group"""

|

||||

group: Group = self.get_object()

|

||||

user: User = (

|

||||

get_objects_for_user(request.user, "authentik_core.view_user")

|

||||

|

||||

@@ -171,7 +171,7 @@ class PropertyMappingViewSet(

|

||||

except PropertyMappingExpressionException as exc:

|

||||

response_data["result"] = exception_to_string(exc.exc)

|

||||

response_data["successful"] = False

|

||||

except Exception as exc: # noqa

|

||||

except Exception as exc:

|

||||

response_data["result"] = exception_to_string(exc)

|

||||

response_data["successful"] = False

|

||||

response = PropertyMappingTestResultSerializer(response_data)

|

||||

|

||||

@@ -328,12 +328,6 @@ class SessionUserSerializer(PassiveSerializer):

|

||||

original = UserSelfSerializer(required=False)

|

||||

|

||||

|

||||

class UserPasswordSetSerializer(PassiveSerializer):

|

||||

"""Payload to set a users' password directly"""

|

||||

|

||||

password = CharField(required=True)

|

||||

|

||||

|

||||

class UsersFilter(FilterSet):

|

||||

"""Filter for users"""

|

||||

|

||||

@@ -591,7 +585,12 @@ class UserViewSet(UsedByMixin, ModelViewSet):

|

||||

|

||||