mirror of https://github.com/goauthentik/authentik

synced 2026-05-07 23:52:38 +02:00

Compare commits: 119 Commits, version-20 ... developer-

| Author | SHA1 | Date | |
|---|---|---|---|
| | c11f407470 | | |
| | b7c6b961a1 | | |
| | e6adb72695 | | |
| | 9cbdcd2cad | | |
| | 197f4c5585 | | |
| | 80e9865c6a | | |
| | c08df26c65 | | |
| | 332a53ceff | | |
| | 4919772d68 | | |
| | a978b4b60e | | |
| | 17bd1f1574 | | |
| | 0b4be1fdda | | |
| | e305c98eb8 | | |
| | 35bd1d9907 | | |
| | 3150885889 | | |
| | 5fd96518d3 | | |
| | 287647beea | | |
| | 2c1a0ca0fc | | |
| | da47095ebc | | |
| | 2ea95ba189 | | |
| | b277828b21 | | |
| | 8765c92fc4 | | |
| | 536688f23b | | |
| | 7861f5a40e | | |
| | e7b43b72ab | | |
| | 2bf9a9d4fe | | |
| | f6af8f3b9d | | |
| | c9a4eff3a8 | | |
| | b893305e5f | | |
| | b3a5cc8320 | | |
| | 94d7a989a1 | | |
| | 359fa5d5df | | |
| | 11c9015a49 | | |
| | f135990c6b | | |
| | 6f63a3eb15 | | |
| | 2209fcea2a | | |
| | e5efb50a37 | | |
| | bbc02dc065 | | |
| | f3f81951c6 | | |
| | 739eff66e0 | | |
| | 48de61a926 | | |
| | 032031f2cf | | |
| | 4e44209af1 | | |
| | 289555abcd | | |
| | 943c456555 | | |
| | a79b914d39 | | |
| | 7a8816abd1 | | |
| | 93e448c3fd | | |
| | 109c869f97 | | |
| | 8029fdad7b | | |
| | d2aac457ef | | |
| | 70ce5ccceb | | |
| | 173c334478 | | |
| | 6e321097a1 | | |
| | f3bf8097b8 | | |
| | b869433e4d | | |
| | 5aef86c3d1 | | |
| | 970ac44ff8 | | |
| | 9145d55e6c | | |
| | 1c36b361b2 | | |
| | d55e23cdb8 | | |
| | 52673e4223 | | |
| | 5cbcbf8d2c | | |
| | f29a4c1876 | | |
| | 38fb5cd712 | | |
| | 5b2aad586f | | |
| | 2dd1c7b1ab | | |
| | 57c24e5c1c | | |
| | 76d9b3479e | | |
| | e9f946cdf2 | | |
| | 167452f1ed | | |
| | dbfdb37e83 | | |
| | efdbf7aeed | | |
| | 8e9e4de80f | | |
| | a63c5b1846 | | |
| | 80b84fa8a8 | | |
| | 4ce9795491 | | |
| | e50cf1c150 | | |
| | 4178717386 | | |
| | 20d068f767 | | |
| | 5b7a42e6d6 | | |
| | 1398561142 | | |
| | 55657e149b | | |
| | d5d7140631 | | |
| | 17ff12f68f | | |
| | 9c9a6e3d66 | | |
| | 2cd81b2e78 | | |
| | bad426f694 | | |
| | 6404fba2e4 | | |
| | c33b9f2d3f | | |
| | bac6e965f4 | | |
| | 36cb4dc750 | | |
| | 45d9945a3a | | |
| | 23285ad664 | | |
| | 91ab9503fd | | |
| | fb7802e6af | | |
| | 0f13a63528 | | |
| | 36daf4b519 | | |
| | 5cc4793b84 | | |
| | a6063d4af4 | | |
| | 8f450e6e14 | | |
| | a1fc0605e2 | | |
| | c886e4ff6b | | |
| | f91ebc2ad5 | | |
| | dbe7bfe58b | | |
| | 05d4d207d7 | | |
| | 11efc75451 | | |
| | 4d2d020be1 | | |
| | 9c0905d76d | | |
| | 3ca94b2198 | | |
| | dbf51fb11f | | |
| | ad69eb955f | | |
| | c867ebc014 | | |
| | adea1e460c | | |
| | 846c58e617 | | |
| | 352079fc3c | | |
| | 6786391732 | | |
| | 4b3d08154d | | |
| | 130fe4cac7 | | |

@@ -67,20 +67,14 @@ jobs:
  registry: ghcr.io
  username: ${{ github.repository_owner }}
  password: ${{ secrets.GITHUB_TOKEN }}
- - name: Setup node
- uses: actions/setup-node@v4
- with:
- node-version-file: web/package.json
- cache: "npm"
- cache-dependency-path: web/package-lock.json
- - name: Setup go
- uses: actions/setup-go@v5
- with:
- go-version-file: "go.mod"
- - name: Generate API Clients
+ - name: make empty clients
+ if: ${{ inputs.release }}
  run: |
- make gen-client-ts
- make gen-client-go
+ mkdir -p ./gen-ts-api
+ mkdir -p ./gen-go-api
+ - name: generate ts client
+ if: ${{ !inputs.release }}
+ run: make gen-client-ts
  - name: Build Docker Image
  uses: docker/build-push-action@v6
  id: push

.github/workflows/api-ts-publish.yml (vendored, 1 line changed)
@@ -10,6 +10,7 @@ on:

  jobs:
  build:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  steps:
  - id: generate_token

.github/workflows/ci-docs-source.yml (vendored, 1 line changed)
@@ -13,6 +13,7 @@ env:

  jobs:
  publish-source-docs:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  timeout-minutes: 120
  steps:

.github/workflows/ci-docs.yml (vendored, 2 lines changed)
@@ -61,6 +61,7 @@ jobs:
  working-directory: website/
  run: npm run build -w integrations
  build-container:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  permissions:
  # Needed to upload container images to ghcr.io
@@ -120,3 +121,4 @@ jobs:
  - uses: re-actors/alls-green@release/v1
  with:
  jobs: ${{ toJSON(needs) }}
+ allowed-skips: ${{ github.repository == 'goauthentik/authentik-internal' && 'build-container' || '[]' }}

.github/workflows/ci-main-daily.yml (vendored, 1 line changed)
@@ -9,6 +9,7 @@ on:

  jobs:
  test-container:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  strategy:
  fail-fast: false

.github/workflows/ci-main.yml (vendored, 10 lines changed)
@@ -80,15 +80,7 @@ jobs:
  cp authentik/lib/default.yml local.env.yml
  cp -R .github ..
  cp -R scripts ..
- # Previous stable tag
- prev_stable=$(git tag --sort=version:refname | grep '^version/' | grep -vE -- '-rc[0-9]+$' | tail -n1)
- # Current version family based on
- current_version_family=$(python -c "from authentik import VERSION; print(VERSION)" | grep -vE -- 'rc[0-9]+$')
- if [[ -n $current_version_family ]]; then
- prev_stable=$current_version_family
- fi
- echo "::notice::Checking out ${prev_stable} as stable version..."
- git checkout $(prev_stable)
+ git checkout $(git tag --sort=version:refname | grep '^version/' | grep -vE -- '-rc[0-9]+$' | tail -n1)
  rm -rf .github/ scripts/
  mv ../.github ../scripts .
  - name: Setup authentik env (stable)
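The tag-selection pipeline in the `ci-main.yml` hunk (`git tag --sort=version:refname | grep '^version/' | grep -vE -- '-rc[0-9]+$' | tail -n1`) can be read as "keep `version/` tags, drop release candidates, take the highest". The sketch below restates that logic in Python for illustration only. The function name `latest_stable_tag` and the sample tags are made up, and the numeric sort key is only an approximation of Git's `version:refname` ordering. Note also that `$(prev_stable)` in the hunk is command substitution; expanding the variable would be written `${prev_stable}`.

```python
import re


def latest_stable_tag(tags):
    """Return the highest `version/...` tag that is not a release candidate."""
    # Same two filters as the shell pipeline: keep `version/` tags,
    # drop anything ending in `-rcN`.
    stable = [
        tag
        for tag in tags
        if tag.startswith("version/") and not re.search(r"-rc[0-9]+$", tag)
    ]
    if not stable:
        return None

    # Approximation of `--sort=version:refname`: compare numeric components,
    # so that 2025.10.0 sorts after 2025.8.1 (a plain string sort would not).
    def sort_key(tag):
        return tuple(int(part) for part in re.findall(r"[0-9]+", tag))

    return max(stable, key=sort_key)


# Hypothetical tag list, for illustration only.
sample_tags = ["version/2025.8.1", "version/2025.10.0", "version/2025.10.1-rc1", "v1.0"]
print(latest_stable_tag(sample_tags))
```

With the sample list, the release candidate and the non-`version/` tag are filtered out before the comparison, so only the two stable tags compete.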

.github/workflows/ci-outpost.yml (vendored, 1 line changed)
@@ -59,6 +59,7 @@ jobs:
  with:
  jobs: ${{ toJSON(needs) }}
  build-container:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  timeout-minutes: 120
  needs:
  - ci-outpost-mark

@@ -13,6 +13,7 @@ env:

  jobs:
  build:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  steps:
  - id: generate_token

.github/workflows/gh-ghcr-retention.yml (vendored, 15 lines changed)
@@ -5,13 +5,10 @@ on:
  # schedule:
  # - cron: "0 0 * * *" # every day at midnight
  workflow_dispatch:
- inputs:
- dry-run:
- type: boolean
- description: Enable dry-run mode

  jobs:
  clean-ghcr:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  name: Delete old unused container images
  runs-on: ubuntu-latest
  steps:
@@ -21,12 +18,12 @@ jobs:
  app_id: ${{ secrets.GH_APP_ID }}
  private_key: ${{ secrets.GH_APP_PRIVATE_KEY }}
  - name: Delete 'dev' containers older than a week
- uses: snok/container-retention-policy@3b0972b2276b171b212f8c4efbca59ebba26eceb # v3.0.1
+ uses: snok/container-retention-policy@v2
  with:
  image-names: dev-server,dev-ldap,dev-proxy
- image-tags: "!gh-next,!gh-main"
  cut-off: One week ago UTC
- account: goauthentik
- tag-selection: untagged
+ account-type: org
+ org-name: goauthentik
+ untagged-only: false
  token: ${{ steps.generate_token.outputs.token }}
- dry-run: ${{ inputs.dry-run }}
+ skip-tags: gh-next,gh-main

.github/workflows/packages-npm-publish.yml (vendored, 1 line changed)
@@ -14,6 +14,7 @@ on:

  jobs:
  publish:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  strategy:
  fail-fast: false

.github/workflows/release-next-branch.yml (vendored, 1 line changed)
@@ -12,6 +12,7 @@ permissions:

  jobs:
  update-next:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  environment: internal-production
  steps:

.github/workflows/release-publish.yml (vendored, 20 lines changed)
@@ -87,11 +87,6 @@ jobs:
  - uses: actions/setup-go@v5
  with:
  go-version-file: "go.mod"
- - uses: actions/setup-node@v5
- with:
- node-version-file: web/package.json
- cache: "npm"
- cache-dependency-path: web/package-lock.json
  - name: Set up QEMU
  uses: docker/setup-qemu-action@v3.6.0
  - name: Set up Docker Buildx
@@ -103,10 +98,10 @@ jobs:
  DOCKER_USERNAME: ${{ secrets.DOCKER_CORP_USERNAME }}
  with:
  image-name: ghcr.io/goauthentik/${{ matrix.type }},authentik/${{ matrix.type }}
- - name: Generate API Clients
+ - name: make empty clients
  run: |
- make gen-client-ts
- make gen-client-go
+ mkdir -p ./gen-ts-api
+ mkdir -p ./gen-go-api
  - name: Docker Login Registry
  uses: docker/login-action@v3
  with:
@@ -160,17 +155,10 @@ jobs:
  node-version-file: web/package.json
  cache: "npm"
  cache-dependency-path: web/package-lock.json
- - name: Install web dependencies
- working-directory: web/
- run: |
- npm ci
- - name: Generate API Clients
- run: |
- make gen-client-ts
- make gen-client-go
  - name: Build web
  working-directory: web/
  run: |
+ npm ci
  npm run build-proxy
  - name: Build outpost
  run: |

.github/workflows/release-tag.yml (vendored, 7 lines changed)
@@ -47,14 +47,8 @@ jobs:
  test:
  name: Pre-release test
  runs-on: ubuntu-latest
- needs:
- - check-inputs
  steps:
  - uses: actions/checkout@v5
- with:
- ref: "version-${{ needs.check-inputs.outputs.major_version }}"
- - name: Setup authentik env
- uses: ./.github/actions/setup
  - run: make test-docker
  bump-authentik:
  name: Bump authentik version
@@ -89,7 +83,6 @@ jobs:
  # ID from https://api.github.com/users/authentik-automation[bot]
  git config --global user.name '${{ steps.app-token.outputs.app-slug }}[bot]'
  git config --global user.email '${{ steps.get-user-id.outputs.user-id }}+${{ steps.app-token.outputs.app-slug }}[bot]@users.noreply.github.com'
- git pull
  git commit -a -m "release: ${{ inputs.version }}" --allow-empty
  git tag "version/${{ inputs.version }}" HEAD -m "version/${{ inputs.version }}"
  git push --follow-tags

.github/workflows/repo-mirror-cleanup.yml (vendored, normal file, 22 lines changed)
@@ -0,0 +1,22 @@
+ ---
+ name: Repo - Cleanup internal mirror
+
+ on:
+ workflow_dispatch:
+
+ jobs:
+ to_internal:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
+ runs-on: ubuntu-latest
+ steps:
+ - uses: actions/checkout@v5
+ with:
+ fetch-depth: 0
+ - if: ${{ env.MIRROR_KEY != '' }}
+ uses: BeryJu/repository-mirroring-action@5cf300935bc2e068f73ea69bcc411a8a997208eb
+ with:
+ target_repo_url: git@github.com:goauthentik/authentik-internal.git
+ ssh_private_key: ${{ secrets.GH_MIRROR_KEY }}
+ args: --tags --force --prune
+ env:
+ MIRROR_KEY: ${{ secrets.GH_MIRROR_KEY }}

.github/workflows/repo-mirror.yml (vendored, normal file, 21 lines changed)
@@ -0,0 +1,21 @@
+ ---
+ name: Repo - Mirror to internal
+
+ on: [push, delete]
+
+ jobs:
+ to_internal:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
+ runs-on: ubuntu-latest
+ steps:
+ - uses: actions/checkout@v5
+ with:
+ fetch-depth: 0
+ - if: ${{ env.MIRROR_KEY != '' }}
+ uses: BeryJu/repository-mirroring-action@5cf300935bc2e068f73ea69bcc411a8a997208eb
+ with:
+ target_repo_url: git@github.com:goauthentik/authentik-internal.git
+ ssh_private_key: ${{ secrets.GH_MIRROR_KEY }}
+ args: --tags --force
+ env:
+ MIRROR_KEY: ${{ secrets.GH_MIRROR_KEY }}

.github/workflows/repo-stale.yml (vendored, 1 line changed)
@@ -12,6 +12,7 @@ permissions:

  jobs:
  stale:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  steps:
  - id: generate_token

@@ -17,6 +17,7 @@ env:

  jobs:
  compile:
+ if: ${{ github.repository != 'goauthentik/authentik-internal' }}
  runs-on: ubuntu-latest
  steps:
  - id: generate_token

@@ -33,17 +33,12 @@ packages/prettier-config @goauthentik/frontend
  packages/tsconfig @goauthentik/frontend
  # Web
  web/ @goauthentik/frontend
- tests/wdio/ @goauthentik/frontend
  # Locale
  locale/ @goauthentik/backend @goauthentik/frontend
  web/xliff/ @goauthentik/backend @goauthentik/frontend
- # Docs & Website
- docs/ @goauthentik/docs
- # TODO Remove after moving website to docs
+ # Docs
  website/ @goauthentik/docs
  CODE_OF_CONDUCT.md @goauthentik/docs
  # Security
  SECURITY.md @goauthentik/security @goauthentik/docs
- # TODO Remove after moving website to docs
  website/security/ @goauthentik/security @goauthentik/docs
- docs/security/ @goauthentik/security @goauthentik/docs

@@ -1,4 +0,0 @@
- # Contributing to authentik
-
- Thanks for your interest in contributing! Please see our [contributing guide](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github) for more information.
-

CONTRIBUTING.md (symbolic link, 1 line changed)
@@ -0,0 +1 @@
+ website/docs/developer-docs/index.md

Dockerfile (12 lines changed)
@@ -44,7 +44,6 @@ RUN --mount=type=cache,id=apt-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/v

  RUN --mount=type=bind,target=/go/src/goauthentik.io/go.mod,src=./go.mod \
  --mount=type=bind,target=/go/src/goauthentik.io/go.sum,src=./go.sum \
- --mount=type=bind,target=/go/src/goauthentik.io/gen-go-api,src=./gen-go-api \
  --mount=type=cache,target=/go/pkg/mod \
  go mod download

@@ -58,7 +57,6 @@ COPY ./go.mod /go/src/goauthentik.io/go.mod
  COPY ./go.sum /go/src/goauthentik.io/go.sum

  RUN --mount=type=cache,sharing=locked,target=/go/pkg/mod \
- --mount=type=bind,target=/go/src/goauthentik.io/gen-go-api,src=./gen-go-api \
  --mount=type=cache,id=go-build-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/root/.cache/go-build \
  if [ "$TARGETARCH" = "arm64" ]; then export CC=aarch64-linux-gnu-gcc && export CC_FOR_TARGET=gcc-aarch64-linux-gnu; fi && \
  CGO_ENABLED=1 GOFIPS140=latest GOARM="${TARGETVARIANT#v}" \
@@ -78,9 +76,9 @@ RUN --mount=type=secret,id=GEOIPUPDATE_ACCOUNT_ID \
  /bin/sh -c "GEOIPUPDATE_LICENSE_KEY_FILE=/run/secrets/GEOIPUPDATE_LICENSE_KEY /usr/bin/entry.sh || echo 'Failed to get GeoIP database, disabling'; exit 0"

  # Stage 4: Download uv
- FROM ghcr.io/astral-sh/uv:0.8.8 AS uv
+ FROM ghcr.io/astral-sh/uv:0.8.13 AS uv
  # Stage 5: Base python image
- FROM ghcr.io/goauthentik/fips-python:3.13.6-slim-bookworm-fips AS python-base
+ FROM ghcr.io/goauthentik/fips-python:3.13.7-slim-bookworm-fips AS python-base

  ENV VENV_PATH="/ak-root/.venv" \
  PATH="/lifecycle:/ak-root/.venv/bin:$PATH" \
@@ -121,11 +119,7 @@ RUN --mount=type=cache,id=apt-$TARGETARCH$TARGETVARIANT,sharing=locked,target=/v
  libltdl-dev && \
  curl https://sh.rustup.rs -sSf | sh -s -- -y

- ENV UV_NO_BINARY_PACKAGE="cryptography lxml python-kadmin-rs xmlsec" \
- # https://github.com/rust-lang/rustup/issues/2949
- # Fixes issues where the rust version in the build cache is older than latest
- # and rustup tries to update it, which fails
- RUSTUP_PERMIT_COPY_RENAME="true"
+ ENV UV_NO_BINARY_PACKAGE="cryptography lxml python-kadmin-rs xmlsec"

  RUN --mount=type=bind,target=pyproject.toml,src=pyproject.toml \
  --mount=type=bind,target=uv.lock,src=uv.lock \

Makefile (15 lines changed)
@@ -98,11 +98,11 @@ bump: ## Bump authentik version. Usage: make bump version=20xx.xx.xx
  ifndef version
  $(error Usage: make bump version=20xx.xx.xx )
  endif
- $(eval current_version := $(shell cat ${PWD}/internal/constants/VERSION))
  sed -i 's/^version = ".*"/version = "$(version)"/' pyproject.toml
  sed -i 's/^VERSION = ".*"/VERSION = "$(version)"/' authentik/__init__.py
  $(MAKE) gen-build gen-compose aws-cfn
- sed -i "s/\"${current_version}\"/\"$(version)\"/" ${PWD}/package.json ${PWD}/package-lock.json ${PWD}/web/package.json ${PWD}/web/package-lock.json
+ npm version --no-git-tag-version --allow-same-version $(version)
+ cd ${PWD}/web && npm version --no-git-tag-version --allow-same-version $(version)
  echo -n $(version) > ${PWD}/internal/constants/VERSION

  #########################
@@ -144,7 +144,12 @@ gen-clean-ts: ## Remove generated API client for TypeScript
  rm -rf ${PWD}/web/node_modules/@goauthentik/api/

  gen-clean-go: ## Remove generated API client for Go
+ mkdir -p ${PWD}/${GEN_API_GO}
+ ifneq ($(wildcard ${PWD}/${GEN_API_GO}/.*),)
+ make -C ${PWD}/${GEN_API_GO} clean
+ else
  rm -rf ${PWD}/${GEN_API_GO}
+ endif

  gen-clean-py: ## Remove generated API client for Python
  rm -rf ${PWD}/${GEN_API_PY}/
@@ -182,9 +187,13 @@ gen-client-py: gen-clean-py ## Build and install the authentik API for Python

  gen-client-go: gen-clean-go ## Build and install the authentik API for Golang
  mkdir -p ${PWD}/${GEN_API_GO}
+ ifeq ($(wildcard ${PWD}/${GEN_API_GO}/.*),)
  git clone --depth 1 https://github.com/goauthentik/client-go.git ${PWD}/${GEN_API_GO}
+ else
+ cd ${PWD}/${GEN_API_GO} && git pull
+ endif
  cp ${PWD}/schema.yml ${PWD}/${GEN_API_GO}
- make -C ${PWD}/${GEN_API_GO} build version=${NPM_VERSION}
+ make -C ${PWD}/${GEN_API_GO} build
  go mod edit -replace goauthentik.io/api/v3=./${GEN_API_GO}

  gen-dev-config: ## Generate a local development config file
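The two `sed -i` lines in the `bump` target rewrite a single `key = "..."` line in `pyproject.toml` and `authentik/__init__.py`. As a rough illustration of what those substitutions do, here is the same line-anchored replacement in Python. The helper name `bump_version` and the sample file contents are assumptions made for this sketch, not part of the Makefile.

```python
import re


def bump_version(text, version, key="version"):
    """Rewrite a line of the form `<key> = "..."` so that it carries `version`."""
    # `^` with re.MULTILINE anchors at the start of each line, like sed does
    # when it processes the file line by line.
    pattern = re.compile(rf'^{re.escape(key)} = ".*"', flags=re.MULTILINE)
    # A function replacement sidesteps backslash handling in the new value.
    return pattern.sub(lambda _match: f'{key} = "{version}"', text)


# Hypothetical file contents, for illustration only.
pyproject = 'name = "authentik"\nversion = "2025.8.6"\n'
init_py = 'VERSION = "2025.8.6"\nENV_GIT_HASH_KEY = "GIT_BUILD_HASH"\n'

print(bump_version(pyproject, "2025.10.0"))
print(bump_version(init_py, "2025.10.0", key="VERSION"))
```

Because the pattern is anchored at the start of a line, keys that merely end in `version` are left untouched.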

27

README.md

27

README.md

@@ -15,16 +15,15 @@

|

||||

|

||||

## What is authentik?

|

||||

|

||||

authentik is an open-source Identity Provider (IdP) for modern SSO. It supports SAML, OAuth2/OIDC, LDAP, RADIUS, and more, designed for self-hosting from small labs to large production clusters.

|

||||

authentik is an open-source Identity Provider that emphasizes flexibility and versatility, with support for a wide set of protocols.

|

||||

|

||||

Our [enterprise offering](https://goauthentik.io/pricing) is available for organizations to securely replace existing IdPs such as Okta, Auth0, Entra ID, and Ping Identity for robust, large-scale identity management.

|

||||

Our [enterprise offer](https://goauthentik.io/pricing) can also be used as a self-hosted replacement for large-scale deployments of Okta/Auth0, Entra ID, Ping Identity, or other legacy IdPs for employees and B2B2C use.

|

||||

|

||||

## Installation

|

||||

|

||||

- Docker Compose: recommended for small/test setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/docker-compose/).

|

||||

- Kubernetes (Helm Chart): recommended for larger setups. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/kubernetes/) and the Helm chart [repository](https://github.com/goauthentik/helm).

|

||||

- AWS CloudFormation: deploy on AWS using our official templates. See the [documentation](https://docs.goauthentik.io/docs/install-config/install/aws/).

|

||||

- DigitalOcean Marketplace: one-click deployment via the official Marketplace app. See the [app listing](https://marketplace.digitalocean.com/apps/authentik).

|

||||

For small/test setups it is recommended to use Docker Compose; refer to the [documentation](https://goauthentik.io/docs/installation/docker-compose/?utm_source=github).

|

||||

|

||||

For bigger setups, there is a Helm Chart [here](https://github.com/goauthentik/helm). This is documented [here](https://goauthentik.io/docs/installation/kubernetes/?utm_source=github).

|

||||

|

||||

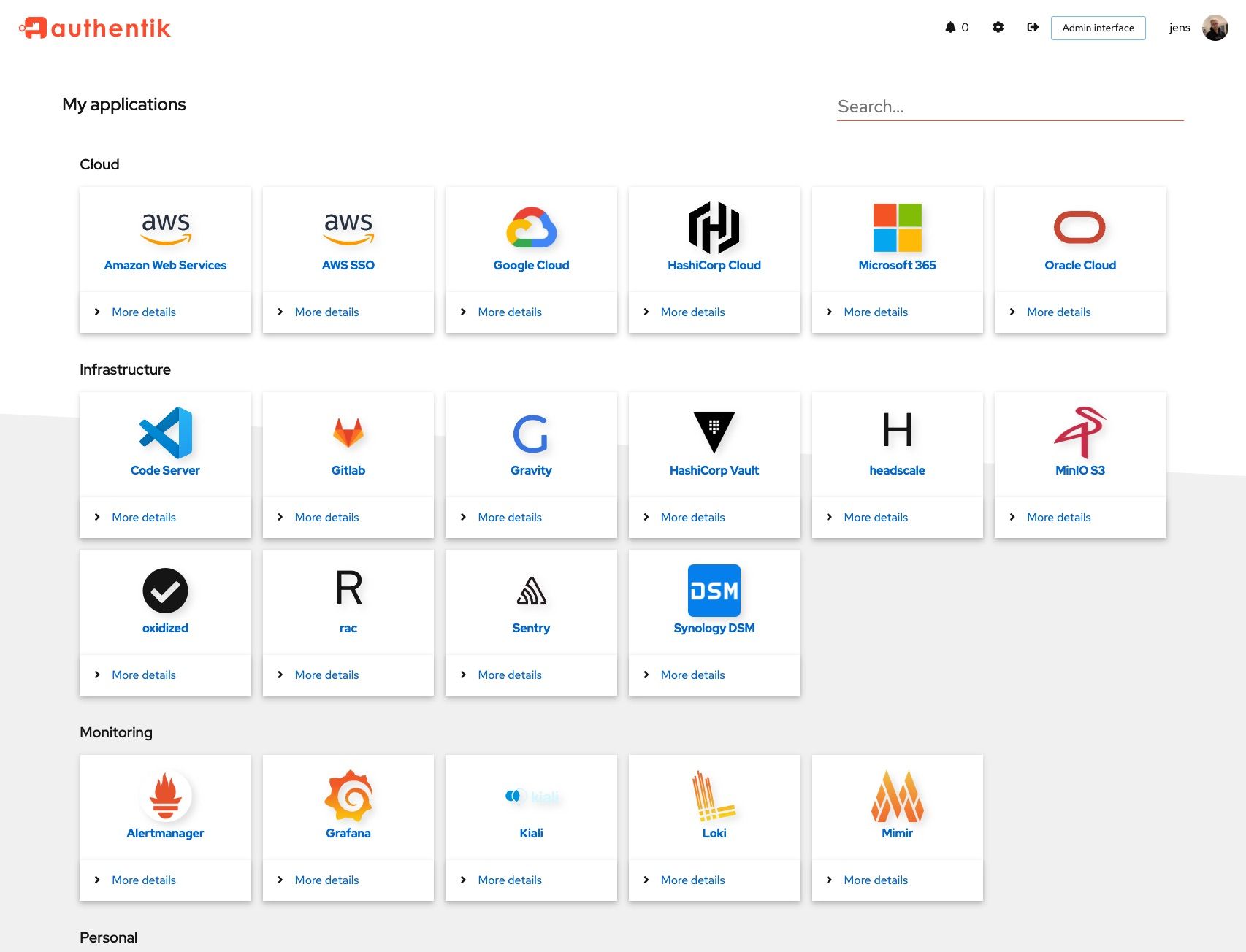

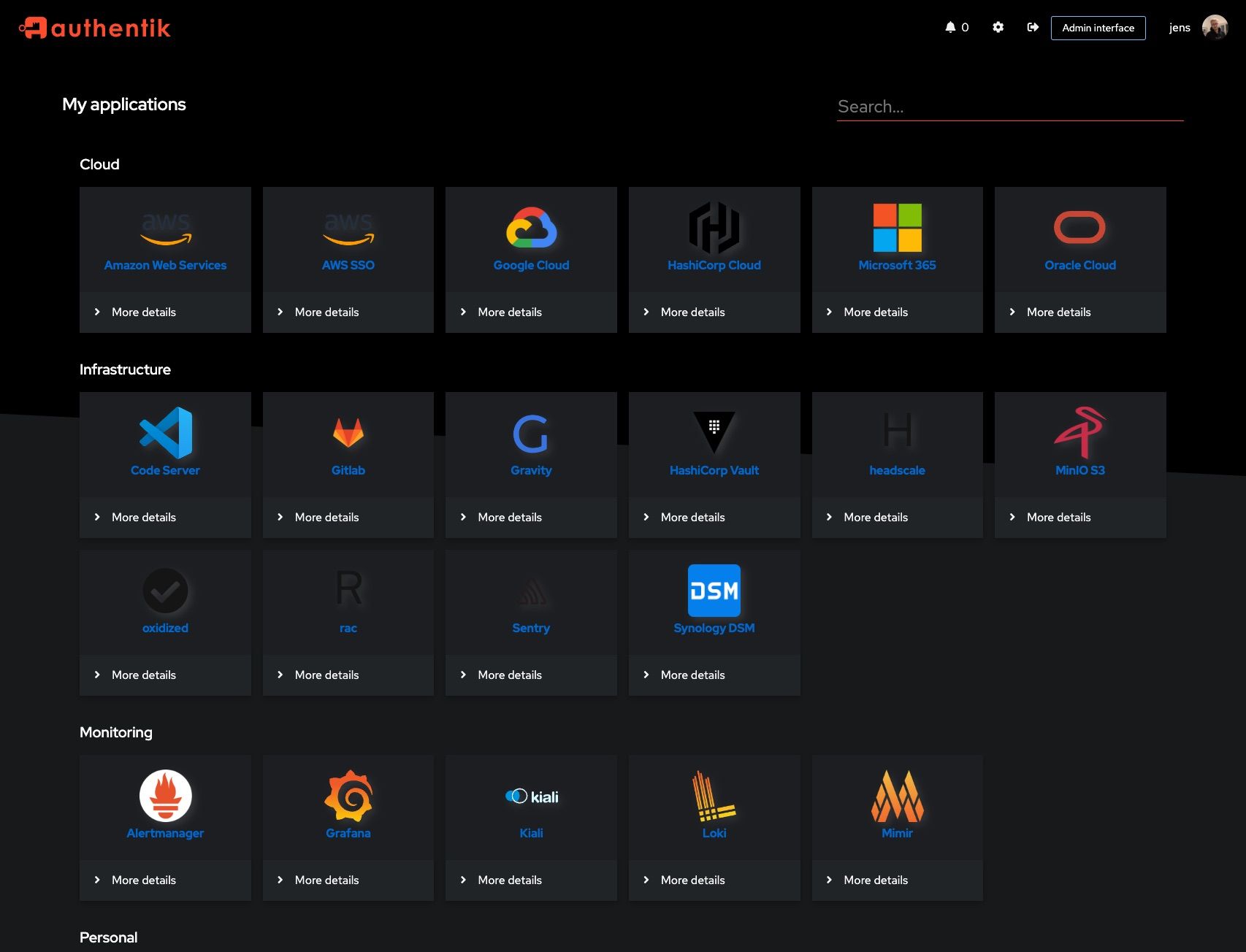

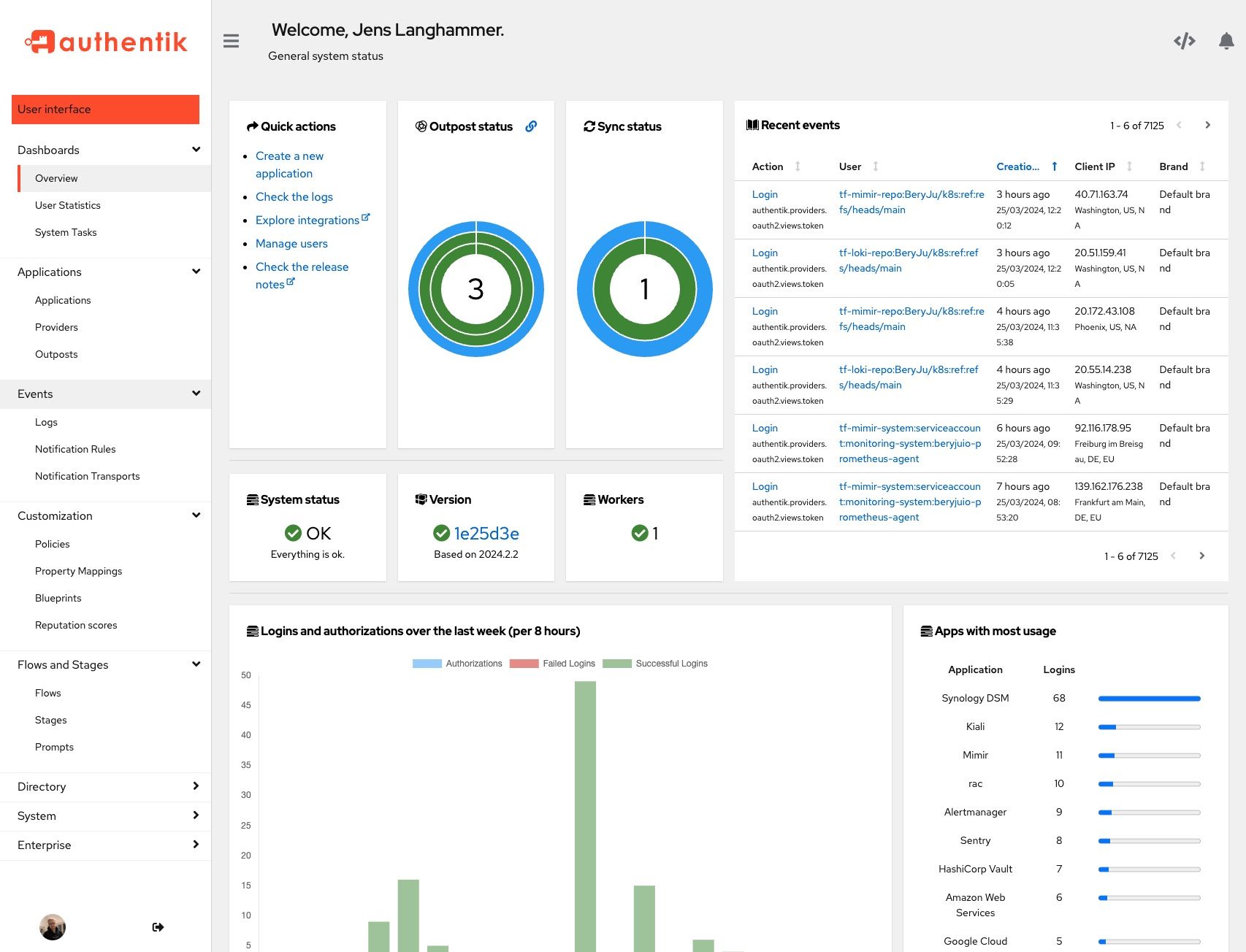

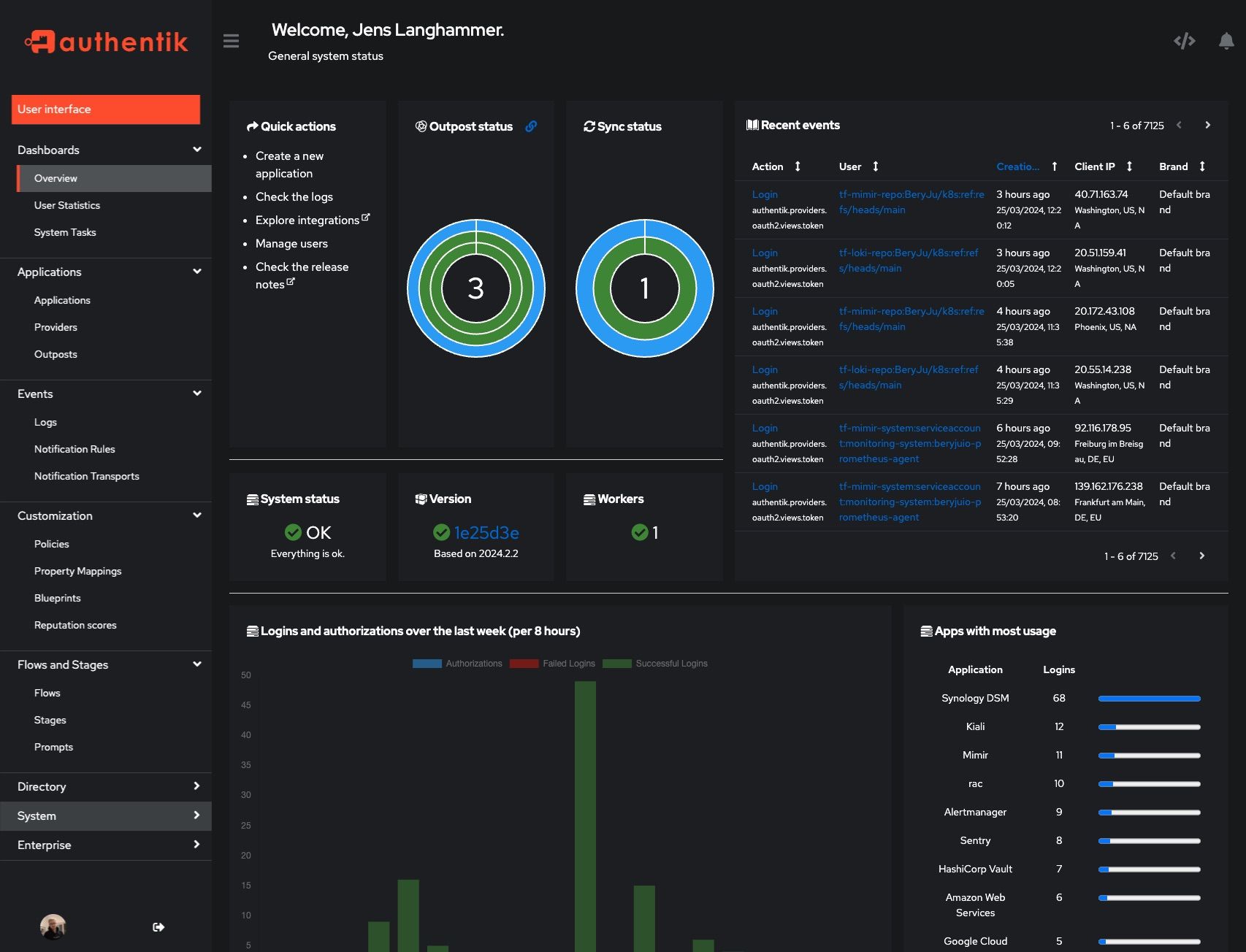

## Screenshots

|

||||

|

||||

@@ -33,20 +32,14 @@ Our [enterprise offering](https://goauthentik.io/pricing) is available for organ

|

||||

|  |  |

|

||||

|  |  |

|

||||

|

||||

## Development and contributions

|

||||

## Development

|

||||

|

||||

See the [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/) for information about setting up local build environments, testing your contributions, and our contribution process.

|

||||

See [Developer Documentation](https://docs.goauthentik.io/docs/developer-docs/?utm_source=github)

|

||||

|

||||

## Security

|

||||

|

||||

Please see [SECURITY.md](SECURITY.md).

|

||||

See [SECURITY.md](SECURITY.md)

|

||||

|

||||

## Adoption

|

||||

## Adoption and Contributions

|

||||

|

||||

Using authentik? We'd love to hear your story and feature your logo. Email us at [hello@goauthentik.io](mailto:hello@goauthentik.io) or open a GitHub Issue/PR!

|

||||

|

||||

## License

|

||||

|

||||

[](LICENSE)

|

||||

[](website/LICENSE)

|

||||

[](authentik/enterprise/LICENSE)

|

||||

Your organization uses authentik? We'd love to add your logo to the readme and our website! Email us @ hello@goauthentik.io or open a GitHub Issue/PR! For more information on how to contribute to authentik, please refer to our [contribution guide](https://docs.goauthentik.io/docs/developer-docs?utm_source=github).

|

||||

|

||||

@@ -3,7 +3,7 @@
  from functools import lru_cache
  from os import environ

- VERSION = "2025.8.6"
+ VERSION = "2025.10.0-rc1"
  ENV_GIT_HASH_KEY = "GIT_BUILD_HASH"



@@ -38,7 +38,6 @@ from authentik.blueprints.v1.oci import OCI_PREFIX
  from authentik.events.logs import capture_logs
  from authentik.events.utils import sanitize_dict
  from authentik.lib.config import CONFIG
- from authentik.tasks.apps import PRIORITY_HIGH
  from authentik.tasks.models import Task
  from authentik.tasks.schedules.models import Schedule
  from authentik.tenants.models import Tenant
@@ -111,7 +110,7 @@ class BlueprintEventHandler(FileSystemEventHandler):

  @actor(
  description=_("Find blueprints as `blueprints_find` does, but return a safe dict."),
- priority=PRIORITY_HIGH,
+ throws=(DatabaseError, ProgrammingError, InternalError),
  )
  def blueprints_find_dict():
  blueprints = []
@@ -149,7 +148,10 @@ def blueprints_find() -> list[BlueprintFile]:
  return blueprints


- @actor(description=_("Find blueprints and check if they need to be created in the database."))
+ @actor(
+ description=_("Find blueprints and check if they need to be created in the database."),
+ throws=(DatabaseError, ProgrammingError, InternalError),
+ )
  def blueprints_discovery(path: str | None = None):
  self: Task = CurrentTask.get_task()
  count = 0

@@ -18,14 +18,10 @@ from authentik.core.models import Provider
  class ProviderSerializer(ModelSerializer, MetaNameSerializer):
  """Provider Serializer"""

- assigned_application_slug = ReadOnlyField(source="application.slug", allow_null=True)
- assigned_application_name = ReadOnlyField(source="application.name", allow_null=True)
- assigned_backchannel_application_slug = ReadOnlyField(
- source="backchannel_application.slug", allow_null=True
- )
- assigned_backchannel_application_name = ReadOnlyField(
- source="backchannel_application.name", allow_null=True
- )
+ assigned_application_slug = ReadOnlyField(source="application.slug")
+ assigned_application_name = ReadOnlyField(source="application.name")
+ assigned_backchannel_application_slug = ReadOnlyField(source="backchannel_application.slug")
+ assigned_backchannel_application_name = ReadOnlyField(source="backchannel_application.name")

  component = SerializerMethodField()


@@ -328,12 +328,6 @@ class SessionUserSerializer(PassiveSerializer):
  original = UserSelfSerializer(required=False)


- class UserPasswordSetSerializer(PassiveSerializer):
- """Payload to set a users' password directly"""
-
- password = CharField(required=True)
-
-
  class UsersFilter(FilterSet):
  """Filter for users"""

@@ -591,7 +585,12 @@ class UserViewSet(UsedByMixin, ModelViewSet):

  @permission_required("authentik_core.reset_user_password")
  @extend_schema(
- request=UserPasswordSetSerializer,
+ request=inline_serializer(
+ "UserPasswordSetSerializer",
+ {
+ "password": CharField(required=True),
+ },
+ ),
  responses={
  204: OpenApiResponse(description="Successfully changed password"),
  400: OpenApiResponse(description="Bad request"),
@@ -600,11 +599,9 @@ class UserViewSet(UsedByMixin, ModelViewSet):
  @action(detail=True, methods=["POST"], permission_classes=[])
  def set_password(self, request: Request, pk: int) -> Response:
  """Set password for user"""
- data = UserPasswordSetSerializer(data=request.data)
- data.is_valid(raise_exception=True)
  user: User = self.get_object()
  try:
- user.set_password(data.validated_data["password"], request=request)
+ user.set_password(request.data.get("password"), request=request)
  user.save()
  except (ValidationError, IntegrityError) as exc:
  LOGGER.debug("Failed to set password", exc=exc)
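The `set_password` hunk contrasts two ways of reading the payload: through a serializer that is validated first (`data.is_valid(raise_exception=True)`, then `data.validated_data["password"]`), and directly via `request.data.get("password")`. The plain-Python sketch below only illustrates the behavioural difference for a missing or empty field. Both function names are hypothetical, and the validating helper is a simplified stand-in for a required `CharField`, not the REST framework implementation.

```python
def unvalidated_password(data):
    """Read the raw payload: a missing key silently yields None."""
    return data.get("password")


def validated_password(data):
    """Simplified stand-in for a required string field: reject missing or empty values."""
    password = data.get("password") if isinstance(data, dict) else None
    if not isinstance(password, str) or password == "":
        raise ValueError("password: this field is required")
    return password


# A request body without the field passes through the raw read...
print(unvalidated_password({}))

# ...but is rejected up front by the validating variant.
try:
    validated_password({})
except ValueError as exc:
    print(exc)
```

In the validated path, a bad payload fails before the user object is touched; in the raw path, whatever `get` returns is handed on to `set_password`.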

@@ -21,8 +21,6 @@ from rest_framework.serializers import (
  raise_errors_on_nested_writes,
  )

- from authentik.rbac.permissions import assign_initial_permissions
-


  def is_dict(value: Any):
  """Ensure a value is a dictionary, useful for JSONFields"""
@@ -52,15 +50,6 @@ class ModelSerializer(BaseModelSerializer):
  serializer_field_mapping = BaseModelSerializer.serializer_field_mapping.copy()
  serializer_field_mapping[models.JSONField] = JSONDictField

- def create(self, validated_data):
- instance = super().create(validated_data)
-
- request = self.context.get("request")
- if request and hasattr(request, "user") and not request.user.is_anonymous:
- assign_initial_permissions(request.user, instance)
-
- return instance
-
  def update(self, instance: Model, validated_data):
  raise_errors_on_nested_writes("update", self, validated_data)
  info = model_meta.get_field_info(instance)

@@ -1,18 +0,0 @@
- # Generated by Django 5.1.12 on 2025-09-25 13:39
-
- from django.db import migrations, models
-
-
- class Migration(migrations.Migration):
-
- dependencies = [
- ("authentik_core", "0050_user_last_updated_and_more"),
- ("authentik_rbac", "0006_alter_role_options"),
- ]
-
- operations = [
- migrations.AddIndex(
- model_name="group",
- index=models.Index(fields=["is_superuser"], name="authentik_c_is_supe_1e5a97_idx"),
- ),
- ]

@@ -29,7 +29,6 @@ from authentik.blueprints.models import ManagedModel
  from authentik.core.expression.exceptions import PropertyMappingExpressionException
  from authentik.core.types import UILoginButton, UserSettingSerializer
  from authentik.lib.avatars import get_avatar
- from authentik.lib.config import CONFIG
  from authentik.lib.expression.exceptions import ControlFlowException
  from authentik.lib.generators import generate_id
  from authentik.lib.merge import MERGE_LIST_UNIQUE
@@ -201,10 +200,7 @@ class Group(SerializerModel, AttributesMixin):
  "parent",
  ),
  )
- indexes = (
- models.Index(fields=["name"]),
- models.Index(fields=["is_superuser"]),
- )
+ indexes = [models.Index(fields=["name"])]
  verbose_name = _("Group")
  verbose_name_plural = _("Groups")
  permissions = [
@@ -567,10 +563,8 @@ class Application(SerializerModel, PolicyBindingModel):
  it is returned as-is"""
  if not self.meta_icon:
  return None
- if self.meta_icon.name.startswith("http"):
+ if "://" in self.meta_icon.name or self.meta_icon.name.startswith("/static"):
  return self.meta_icon.name
- if self.meta_icon.name.startswith("/"):
- return CONFIG.get("web.path", "/")[:-1] + self.meta_icon.name
  return self.meta_icon.url

  def get_launch_url(self, user: Optional["User"] = None) -> str | None:
@@ -772,10 +766,8 @@ class Source(ManagedModel, SerializerModel, PolicyBindingModel):
  starts with http it is returned as-is"""
  if not self.icon:
  return None
- if self.icon.name.startswith("http"):
+ if "://" in self.icon.name or self.icon.name.startswith("/static"):
  return self.icon.name
- if self.icon.name.startswith("/"):
- return CONFIG.get("web.path", "/")[:-1] + self.icon.name
  return self.icon.url

  def get_user_path(self) -> str:

@@ -82,51 +82,6 @@ class TestApplicationsAPI(APITestCase):
         self.assertEqual(self.allowed.get_meta_icon, app["meta_icon"])
         self.assertEqual(self.allowed.meta_icon.read(), b"text")

-    def test_set_icon_relative(self):
-        """Test set_icon (relative path)"""
-        self.client.force_login(self.user)
-        response = self.client.post(
-            reverse(
-                "authentik_api:application-set-icon-url",
-                kwargs={"slug": self.allowed.slug},
-            ),
-            data={"url": "relative/path"},
-        )
-        self.assertEqual(response.status_code, 200)
-
-        self.allowed.refresh_from_db()
-        self.assertEqual(self.allowed.get_meta_icon, "/media/public/relative/path")
-
-    def test_set_icon_absolute(self):
-        """Test set_icon (absolute path)"""
-        self.client.force_login(self.user)
-        response = self.client.post(
-            reverse(
-                "authentik_api:application-set-icon-url",
-                kwargs={"slug": self.allowed.slug},
-            ),
-            data={"url": "/relative/path"},
-        )
-        self.assertEqual(response.status_code, 200)
-
-        self.allowed.refresh_from_db()
-        self.assertEqual(self.allowed.get_meta_icon, "/relative/path")
-
-    def test_set_icon_url(self):
-        """Test set_icon (url)"""
-        self.client.force_login(self.user)
-        response = self.client.post(
-            reverse(
-                "authentik_api:application-set-icon-url",
-                kwargs={"slug": self.allowed.slug},
-            ),
-            data={"url": "https://authentik.company/img.png"},
-        )
-        self.assertEqual(response.status_code, 200)
-
-        self.allowed.refresh_from_db()
-        self.assertEqual(self.allowed.get_meta_icon, "https://authentik.company/img.png")
-
     def test_check_access(self):
         """Test check_access operation"""
         self.client.force_login(self.user)
@@ -179,8 +134,6 @@ class TestApplicationsAPI(APITestCase):
                         "provider_obj": {
                             "assigned_application_name": "allowed",
                             "assigned_application_slug": "allowed",
-                            "assigned_backchannel_application_name": None,
-                            "assigned_backchannel_application_slug": None,
                             "authentication_flow": None,
                             "invalidation_flow": None,
                             "authorization_flow": str(self.provider.authorization_flow.pk),
@@ -235,8 +188,6 @@ class TestApplicationsAPI(APITestCase):
                         "provider_obj": {
                             "assigned_application_name": "allowed",
                             "assigned_application_slug": "allowed",
-                            "assigned_backchannel_application_name": None,
-                            "assigned_backchannel_application_slug": None,
                             "authentication_flow": None,
                             "invalidation_flow": None,
                             "authorization_flow": str(self.provider.authorization_flow.pk),

@@ -102,16 +102,6 @@ class TestUsersAPI(APITestCase):
         self.admin.refresh_from_db()
         self.assertTrue(self.admin.check_password(new_pw))

-    def test_set_password_blank(self):
-        """Test Direct password set"""
-        self.client.force_login(self.admin)
-        response = self.client.post(
-            reverse("authentik_api:user-set-password", kwargs={"pk": self.admin.pk}),
-            data={"password": ""},
-        )
-        self.assertEqual(response.status_code, 400)
-        self.assertJSONEqual(response.content, {"password": ["This field may not be blank."]})
-
     def test_recovery(self):
         """Test user recovery link"""
         flow = create_test_flow(

@@ -25,7 +25,7 @@ class GoogleWorkspaceGroupClient(
     """Google client for groups"""

     connection_type = GoogleWorkspaceProviderGroup
-    connection_type_query = "group"
+    connection_attr = "googleworkspaceprovidergroup_set"
     can_discover = True

     def __init__(self, provider: GoogleWorkspaceProvider) -> None:

@@ -20,7 +20,7 @@ class GoogleWorkspaceUserClient(GoogleWorkspaceSyncClient[User, GoogleWorkspaceP
     """Sync authentik users into google workspace"""

     connection_type = GoogleWorkspaceProviderUser
-    connection_type_query = "user"
+    connection_attr = "googleworkspaceprovideruser_set"
     can_discover = True

     def __init__(self, provider: GoogleWorkspaceProvider) -> None:

@@ -139,7 +139,11 @@ class GoogleWorkspaceProvider(OutgoingSyncProvider, BackchannelProvider):
         if type == User:
             # Get queryset of all users with consistent ordering
             # according to the provider's settings
-            base = User.objects.all().exclude_anonymous()
+            base = (
+                User.objects.prefetch_related("googleworkspaceprovideruser_set")
+                .all()
+                .exclude_anonymous()
+            )
             if self.exclude_users_service_account:
                 base = base.exclude(type=UserTypes.SERVICE_ACCOUNT).exclude(
                     type=UserTypes.INTERNAL_SERVICE_ACCOUNT
@@ -149,7 +153,11 @@ class GoogleWorkspaceProvider(OutgoingSyncProvider, BackchannelProvider):
             return base.order_by("pk")
         if type == Group:
             # Get queryset of all groups with consistent ordering
-            return Group.objects.all().order_by("pk")
+            return (
+                Group.objects.prefetch_related("googleworkspaceprovidergroup_set")
+                .all()
+                .order_by("pk")
+            )
         raise ValueError(f"Invalid type {type}")

     def google_credentials(self):

@@ -29,7 +29,7 @@ class MicrosoftEntraGroupClient(
     """Microsoft client for groups"""

     connection_type = MicrosoftEntraProviderGroup
-    connection_type_query = "group"
+    connection_attr = "microsoftentraprovidergroup_set"
     can_discover = True

     def __init__(self, provider: MicrosoftEntraProvider) -> None:

@@ -24,7 +24,7 @@ class MicrosoftEntraUserClient(MicrosoftEntraSyncClient[User, MicrosoftEntraProv
     """Sync authentik users into microsoft entra"""

     connection_type = MicrosoftEntraProviderUser
-    connection_type_query = "user"
+    connection_attr = "microsoftentraprovideruser_set"
     can_discover = True

     def __init__(self, provider: MicrosoftEntraProvider) -> None:

@@ -128,7 +128,11 @@ class MicrosoftEntraProvider(OutgoingSyncProvider, BackchannelProvider):
         if type == User:
             # Get queryset of all users with consistent ordering
             # according to the provider's settings
-            base = User.objects.all().exclude_anonymous()
+            base = (
+                User.objects.prefetch_related("microsoftentraprovideruser_set")
+                .all()
+                .exclude_anonymous()
+            )
             if self.exclude_users_service_account:
                 base = base.exclude(type=UserTypes.SERVICE_ACCOUNT).exclude(
                     type=UserTypes.INTERNAL_SERVICE_ACCOUNT
@@ -138,7 +142,11 @@ class MicrosoftEntraProvider(OutgoingSyncProvider, BackchannelProvider):
             return base.order_by("pk")
         if type == Group:
             # Get queryset of all groups with consistent ordering
-            return Group.objects.all().order_by("pk")
+            return (
+                Group.objects.prefetch_related("microsoftentraprovidergroup_set")
+                .all()
+                .order_by("pk")
+            )
         raise ValueError(f"Invalid type {type}")

     def microsoft_credentials(self):

@@ -46,5 +46,5 @@ class FlowStageBindingViewSet(UsedByMixin, ModelViewSet):
     serializer_class = FlowStageBindingSerializer
     filterset_fields = "__all__"
     search_fields = ["stage__name"]
-    ordering = ["order", "pk"]
-    ordering_fields = ["order", "stage__name", "target__uuid", "pk"]
+    ordering = ["order"]
+    ordering_fields = ["order", "stage__name"]

@@ -190,7 +190,7 @@ class Flow(SerializerModel, PolicyBindingModel):
             )
         if self.background.name.startswith("http"):
             return self.background.name
-        if self.background.name.startswith("/"):
+        if self.background.name.startswith("/static"):
             return CONFIG.get("web.path", "/")[:-1] + self.background.name
         return self.background.url


@@ -19,7 +19,7 @@ def start_debug_server(**kwargs) -> bool:
         )
         return False

-    listen: str = CONFIG.get("listen.debug_py", "127.0.0.1:9901")
+    listen: str = CONFIG.get("listen.listen_debug_py", "127.0.0.1:9901")
     host, _, port = listen.rpartition(":")
     try:
         debugpy.listen((host, int(port)), **kwargs) # nosec

@@ -31,14 +31,14 @@ postgresql:
   # host: replica1.example.com

 listen:
-  http: 0.0.0.0:9000
-  https: 0.0.0.0:9443
-  ldap: 0.0.0.0:3389
-  ldaps: 0.0.0.0:6636
-  radius: 0.0.0.0:1812
-  metrics: 0.0.0.0:9300
-  debug: 0.0.0.0:9900
-  debug_py: 0.0.0.0:9901
+  listen_http: 0.0.0.0:9000
+  listen_https: 0.0.0.0:9443
+  listen_ldap: 0.0.0.0:3389
+  listen_ldaps: 0.0.0.0:6636
+  listen_radius: 0.0.0.0:1812
+  listen_metrics: 0.0.0.0:9300
+  listen_debug: 0.0.0.0:9900
+  listen_debug_py: 0.0.0.0:9901
   trusted_proxy_cidrs:
     - 127.0.0.0/8
     - 10.0.0.0/8
@@ -152,7 +152,7 @@ worker:
   processes: 1
   threads: 2
   consumer_listen_timeout: "seconds=30"
-  task_max_retries: 5
+  task_max_retries: 20
   task_default_time_limit: "minutes=10"
   lock_purge_interval: "minutes=1"
   task_purge_interval: "days=1"

@@ -36,7 +36,7 @@ ARG_SANITIZE = re.compile(r"[:.-]")


 def sanitize_arg(arg_name: str) -> str:
-    return re.sub(ARG_SANITIZE, "_", slugify(arg_name))
+    return re.sub(ARG_SANITIZE, "_", arg_name)


 class BaseEvaluator:
@@ -218,9 +218,7 @@ class BaseEvaluator:

     def wrap_expression(self, expression: str) -> str:
         """Wrap expression in a function, call it, and save the result as `result`"""
-        handler_signature = ",".join(
-            [x for x in [sanitize_arg(x) for x in self._context.keys()] if x]
-        )
+        handler_signature = ",".join(sanitize_arg(x) for x in self._context.keys())
         full_expression = ""
         full_expression += f"def handler({handler_signature}):\n"
         full_expression += indent(expression, " ")

@@ -43,9 +43,7 @@ def structlog_configure():
             structlog.stdlib.PositionalArgumentsFormatter(),
             structlog.processors.TimeStamper(fmt="iso", utc=False),
             structlog.processors.StackInfoRenderer(),
-            structlog.processors.ExceptionRenderer(
-                structlog.processors.ExceptionDictTransformer(show_locals=CONFIG.get_bool("debug"))
-            ),
+            structlog.processors.dict_tracebacks,
             structlog.stdlib.ProcessorFormatter.wrap_for_formatter,
         ],
         logger_factory=structlog.stdlib.LoggerFactory(),
@@ -67,14 +65,7 @@ def get_logger_config():
             "json": {
                 "()": structlog.stdlib.ProcessorFormatter,
                 "processor": structlog.processors.JSONRenderer(sort_keys=True),
-                "foreign_pre_chain": LOG_PRE_CHAIN
-                + [
-                    structlog.processors.ExceptionRenderer(
-                        structlog.processors.ExceptionDictTransformer(
-                            show_locals=CONFIG.get_bool("debug")
-                        )
-                    ),
-                ],
+                "foreign_pre_chain": LOG_PRE_CHAIN + [structlog.processors.dict_tracebacks],
             },
             "console": {
                 "()": structlog.stdlib.ProcessorFormatter,

@@ -1,5 +1,4 @@
 from dramatiq.actor import Actor
-from dramatiq.results.errors import ResultFailure
 from drf_spectacular.utils import extend_schema
 from rest_framework.decorators import action
 from rest_framework.fields import BooleanField, CharField, ChoiceField
@@ -111,13 +110,9 @@ class OutgoingSyncProviderStatusMixin:
                 "override_dry_run": params.validated_data["override_dry_run"],
                 "pk": params.validated_data["sync_object_id"],
             },
-            retries=0,
             rel_obj=provider,
         )
-        try:
-            msg.get_result(block=True)
-        except ResultFailure:
-            pass
+        msg.get_result(block=True)
         task: Task = msg.options["task"]
         task.refresh_from_db()
         return Response(SyncObjectResultSerializer(instance={"messages": task._messages}).data)

@@ -22,7 +22,6 @@ if TYPE_CHECKING:


 class Direction(StrEnum):
-
     add = "add"
     remove = "remove"

@@ -36,13 +35,16 @@ SAFE_METHODS = [


 class BaseOutgoingSyncClient[
-    TModel: "Model", TConnection: "Model", TSchema: dict, TProvider: "OutgoingSyncProvider"
+    TModel: "Model",
+    TConnection: "Model",
+    TSchema: dict,
+    TProvider: "OutgoingSyncProvider",
 ]:
     """Basic Outgoing sync client Client"""

     provider: TProvider
     connection_type: type[TConnection]
-    connection_type_query: str
+    connection_attr: str
     mapper: PropertyMappingManager

     can_discover = False
@@ -62,9 +64,7 @@ class BaseOutgoingSyncClient[
     def write(self, obj: TModel) -> tuple[TConnection, bool]:
         """Write object to destination. Uses self.create and self.update, but
         can be overwritten for further logic"""
-        connection = self.connection_type.objects.filter(
-            provider=self.provider, **{self.connection_type_query: obj}
-        ).first()
+        connection = getattr(obj, self.connection_attr).filter(provider=self.provider).first()
         try:
             if not connection:
                 connection = self.create(obj)

@@ -20,7 +20,6 @@ from authentik.lib.sync.outgoing.exceptions import (
     TransientSyncException,
 )
 from authentik.lib.sync.outgoing.models import OutgoingSyncProvider
-from authentik.lib.utils.errors import exception_to_dict
 from authentik.lib.utils.reflection import class_to_path, path_to_class
 from authentik.tasks.models import Task

@@ -165,17 +164,16 @@ class SyncTasks:
                 except BadRequestSyncException as exc:
                     self.logger.warning("failed to sync object", exc=exc, obj=obj)
                     task.warning(
-                        f"Failed to sync {str(obj)} due to error: {str(exc)}",
+                        f"Failed to sync {obj._meta.verbose_name} {str(obj)} due to error: {str(exc)}",
                         arguments=exc.args[1:],
                         obj=sanitize_item(obj),
-                        exception=exception_to_dict(exc),
                     )
                 except TransientSyncException as exc:
                     self.logger.warning("failed to sync object", exc=exc, user=obj)
                     task.warning(
-                        f"Failed to sync {str(obj)} due to " f"transient error: {str(exc)}",
+                        f"Failed to sync {obj._meta.verbose_name} {str(obj)} due to "
+                        "transient error: {str(exc)}",
                         obj=sanitize_item(obj),
-                        exception=exception_to_dict(exc),
                     )
                 except StopSync as exc:
                     self.logger.warning("Stopping sync", exc=exc)

@@ -1,7 +1,5 @@
 """Test Evaluator base functions"""

-from pathlib import Path
-
 from django.test import RequestFactory, TestCase
 from django.urls import reverse
 from jwt import decode
@@ -79,18 +77,3 @@ class TestEvaluator(TestCase):
             jwt, provider.client_secret, algorithms=["HS256"], audience=provider.client_id
         )
         self.assertEqual(decoded["preferred_username"], user.username)
-
-    def test_expr_arg_escape(self):
-        """Test escaping of arguments"""
-        eval = BaseEvaluator()
-        eval._context = {
-            'z=getattr(getattr(__import__("os"), "popen")("id > /tmp/test"), "read")()': "bar",
-            "@@": "baz",
-            "{{": "baz",
-            "aa@@": "baz",
-        }
-        res = eval.evaluate("return locals()")
-        self.assertEqual(
-            res, {"zgetattrgetattr__import__os_popenid_tmptest_read": "bar", "aa": "baz"}
-        )
-        self.assertFalse(Path("/tmp/test").exists()) # nosec

@@ -4,11 +4,9 @@ from traceback import extract_tb

 from structlog.tracebacks import ExceptionDictTransformer

-from authentik.lib.config import CONFIG
 from authentik.lib.utils.reflection import class_to_path

 TRACEBACK_HEADER = "Traceback (most recent call last):"
-_exception_transformer = ExceptionDictTransformer(show_locals=CONFIG.get_bool("debug"))


 def exception_to_string(exc: Exception) -> str:
@@ -25,4 +23,4 @@ def exception_to_string(exc: Exception) -> str:

 def exception_to_dict(exc: Exception) -> dict:
     """Format exception as a dictionary"""
-    return _exception_transformer((type(exc), exc, exc.__traceback__))
+    return ExceptionDictTransformer()((type(exc), exc, exc.__traceback__))

@@ -47,9 +47,7 @@ class OutpostSerializer(ModelSerializer):
     )
     providers_obj = ProviderSerializer(source="providers", many=True, read_only=True)
     service_connection_obj = ServiceConnectionSerializer(
-        source="service_connection",
-        read_only=True,
-        allow_null=True,
+        source="service_connection", read_only=True
     )
     refresh_interval_s = SerializerMethodField()


@@ -13,7 +13,6 @@ from urllib3.exceptions import HTTPError
 from yaml import dump_all

 from authentik.events.logs import LogEvent, capture_logs
-from authentik.lib.utils.reflection import class_to_path
 from authentik.outposts.controllers.base import BaseClient, BaseController, ControllerException
 from authentik.outposts.controllers.k8s.base import KubernetesObjectReconciler
 from authentik.outposts.controllers.k8s.deployment import DeploymentReconciler
@@ -106,7 +105,7 @@ class KubernetesController(BaseController):
                         LogEvent(
                             log_level="info",
                             event=f"{reconcile_key.title()}: Disabled",
-                            logger=class_to_path(self.__class__),
+                            logger=str(type(self)),
                         )
                     )
                     continue
@@ -145,7 +144,7 @@ class KubernetesController(BaseController):
                         LogEvent(
                             log_level="info",
                             event=f"{reconcile_key.title()}: Disabled",
-                            logger=class_to_path(self.__class__),
+                            logger=str(type(self)),
                         )
                     )
                     continue

@@ -124,5 +124,4 @@ class PolicyBindingViewSet(UsedByMixin, ModelViewSet):
     serializer_class = PolicyBindingSerializer
     search_fields = ["policy__name"]
     filterset_class = PolicyBindingFilter
-    ordering = ["order", "pk"]
-    ordering_fields = ["order", "target__uuid", "pk"]
+    ordering = ["target", "order"]

@@ -26,6 +26,7 @@ HIST_POLICIES_EXECUTION_TIME = Histogram(
         "binding_order",
         "binding_target_type",
         "binding_target_name",
+        "object_pk",
         "object_type",
         "mode",
     ],

@@ -86,6 +86,7 @@ class PolicyEngine:
             binding_order=binding.order,
             binding_target_type=binding.target_type,
             binding_target_name=binding.target_name,
+            object_pk=str(self.request.obj.pk),
             object_type=class_to_path(self.request.obj.__class__),
             mode="cache_retrieve",
         ).time():

@@ -131,6 +131,7 @@ class PolicyProcess(PROCESS_CLASS):
                 binding_order=self.binding.order,
                 binding_target_type=self.binding.target_type,
                 binding_target_name=self.binding.target_name,
+                object_pk=str(self.request.obj.pk) if self.request.obj else "",
                 object_type=class_to_path(self.request.obj.__class__) if self.request.obj else "",
                 mode="execute_process",
             ).time(),

@@ -8,12 +8,7 @@ from jwt import decode

 from authentik.blueprints.tests import apply_blueprint
 from authentik.core.models import Application, Group, Token, TokenIntents, UserTypes
-from authentik.core.tests.utils import (
-    create_test_admin_user,
-    create_test_cert,
-    create_test_flow,
-    create_test_user,
-)
+from authentik.core.tests.utils import create_test_admin_user, create_test_cert, create_test_flow
 from authentik.policies.models import PolicyBinding
 from authentik.providers.oauth2.constants import (
     GRANT_TYPE_CLIENT_CREDENTIALS,
@@ -126,30 +121,6 @@ class TestTokenClientCredentialsUserNamePassword(OAuthTestCase):
             },
         )

-    def test_deactivate(self):
-        """test deactivated user"""
-        self.user.is_active = False
-        self.user.save()
-        response = self.client.post(
-            reverse("authentik_providers_oauth2:token"),
-            {
-                "grant_type": GRANT_TYPE_CLIENT_CREDENTIALS,
-                "scope": SCOPE_OPENID,
-                "client_id": self.provider.client_id,
-                "username": "sa",
-                "password": self.token.key,
-            },
-        )
-        self.assertEqual(response.status_code, 400)
-        self.assertJSONEqual(
-            response.content.decode(),
-            {
-                "error": "invalid_grant",
-                "error_description": TokenError.errors["invalid_grant"],
-                "request_id": response.headers["X-authentik-id"],
-            },
-        )
-
     def test_permission_denied(self):
         """test permission denied"""
         group = Group.objects.create(name="foo")
@@ -211,47 +182,6 @@ class TestTokenClientCredentialsUserNamePassword(OAuthTestCase):
         self.assertEqual(jwt["given_name"], self.user.name)
         self.assertEqual(jwt["preferred_username"], self.user.username)

-    def test_successful_two_tokens(self):
-        """test successful when two app passwords with the same key exist"""
-        Token.objects.create(
-            identifier="sa-token-two",
-            user=create_test_user(),
-            intent=TokenIntents.INTENT_APP_PASSWORD,
-            expiring=False,
-            key=self.token.key,
-        )
-
-        response = self.client.post(
-            reverse("authentik_providers_oauth2:token"),
-            {
-                "grant_type": GRANT_TYPE_CLIENT_CREDENTIALS,
-                "scope": f"{SCOPE_OPENID} {SCOPE_OPENID_EMAIL} {SCOPE_OPENID_PROFILE}",
-                "client_id": self.provider.client_id,
-                "username": "sa",
-                "password": self.token.key,
-            },
-        )
-        self.assertEqual(response.status_code, 200)
-        body = loads(response.content.decode())
-        self.assertEqual(body["token_type"], TOKEN_TYPE)
-        _, alg = self.provider.jwt_key
-        jwt = decode(
-            body["access_token"],
-            key=self.provider.signing_key.public_key,
-            algorithms=[alg],
-            audience=self.provider.client_id,
-        )
-        self.assertEqual(jwt["given_name"], self.user.name)
-        self.assertEqual(jwt["preferred_username"], self.user.username)
-        jwt = decode(
-            body["id_token"],
-            key=self.provider.signing_key.public_key,
-            algorithms=[alg],
-            audience=self.provider.client_id,
-        )
-        self.assertEqual(jwt["given_name"], self.user.name)
-        self.assertEqual(jwt["preferred_username"], self.user.username)
-
     def test_successful_password(self):
         """test successful (password grant)"""
         response = self.client.post(